Terraform for AI Agents (4): Compute — ECS, ACK, or Function Compute?

The three places an agent's main loop can live on Aliyun: a long-running ECS instance with pm2, a Kubernetes pod on ACK, or a Function Compute invocation. The cost-crossover model I use to pick between them, and a real cloud-init bootstrap that goes from bare Ubuntu to running agent in 90 seconds.

The single most important architectural decision in an agent system is where the agent loop process runs. There are three good options on Aliyun, plus a fourth that almost everyone forgets. Picking the wrong one isn’t catastrophic — you can migrate later — but it costs weeks of unnecessary work and several thousand RMB a month in idle compute.

This article covers all four options with working Terraform, cost crossovers, and the operational gotchas I keep running into.

## The four patterns

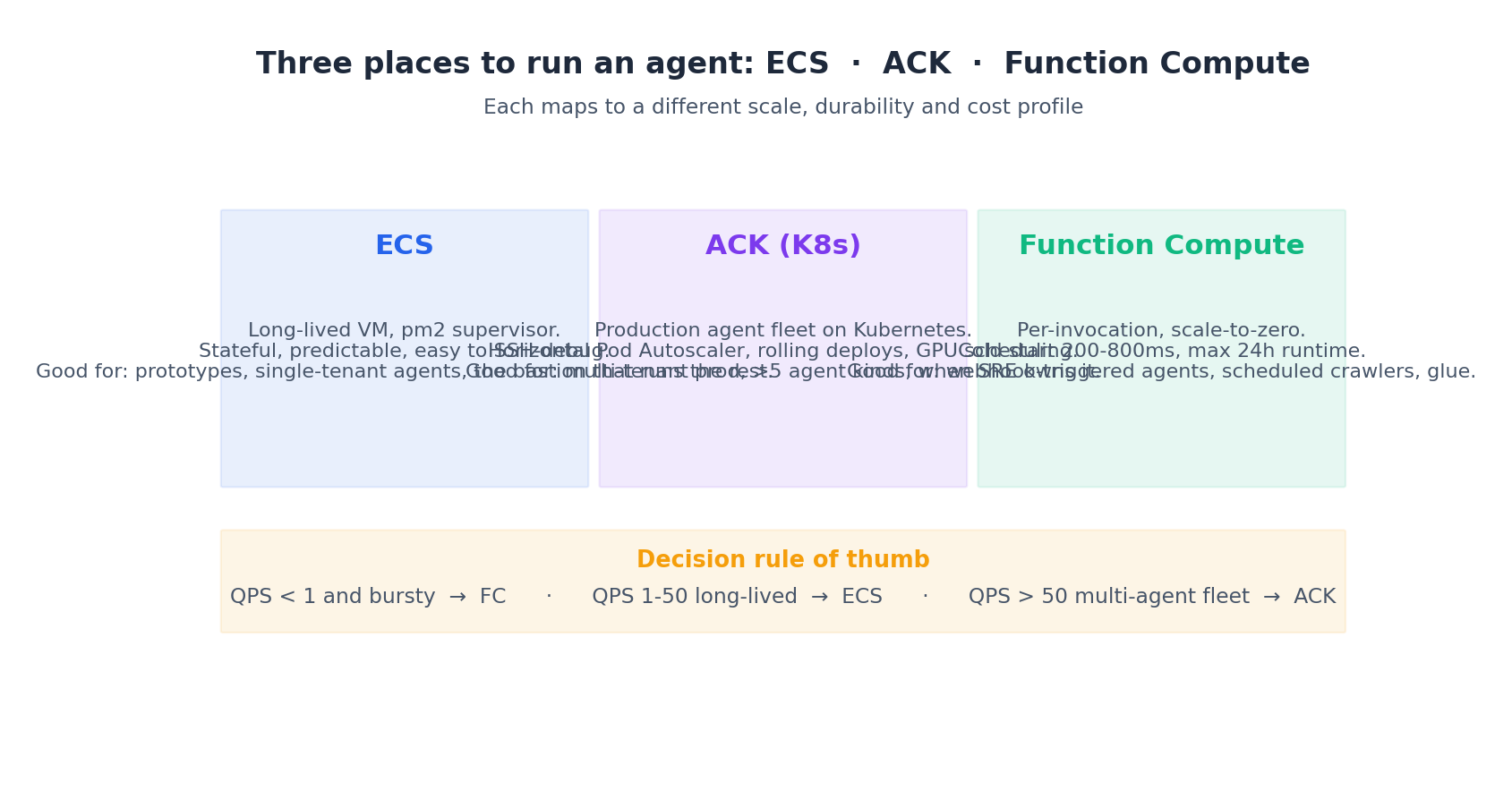

Each has a sweet spot:

- ECS is a Linux VM. Long-lived, stateful, easy to SSH into when you’re debugging. The right answer for prototypes, single-tenant agents, and anywhere you want to keep one machine “warm” with cached models or local state.

- ACK (Container Service for Kubernetes) is the prod answer at scale. Multiple agent kinds, autoscaling, rolling deploys, GPU scheduling. Worth the operational weight only when you have at least three or four agent services and an SRE who’s comfortable in K8s.

- Function Compute (FC) is per-invocation, scale-to-zero. Cold start 200-800ms, hard cap of 24 hours per invocation. Right for webhook-triggered agents, scheduled crawlers, and anything that runs in bursts and idles otherwise.

- Elastic Container Instance (ECI) is the one people forget — a container without a node underneath. Cold start ~5s, billed per second, no node pool to manage. The sweet spot is bursty batch jobs that run 2-30 minutes per shot, several times an hour.

The first three cover most cases. ECI fills the gap between “too long for FC” and “too bursty for ECS”.

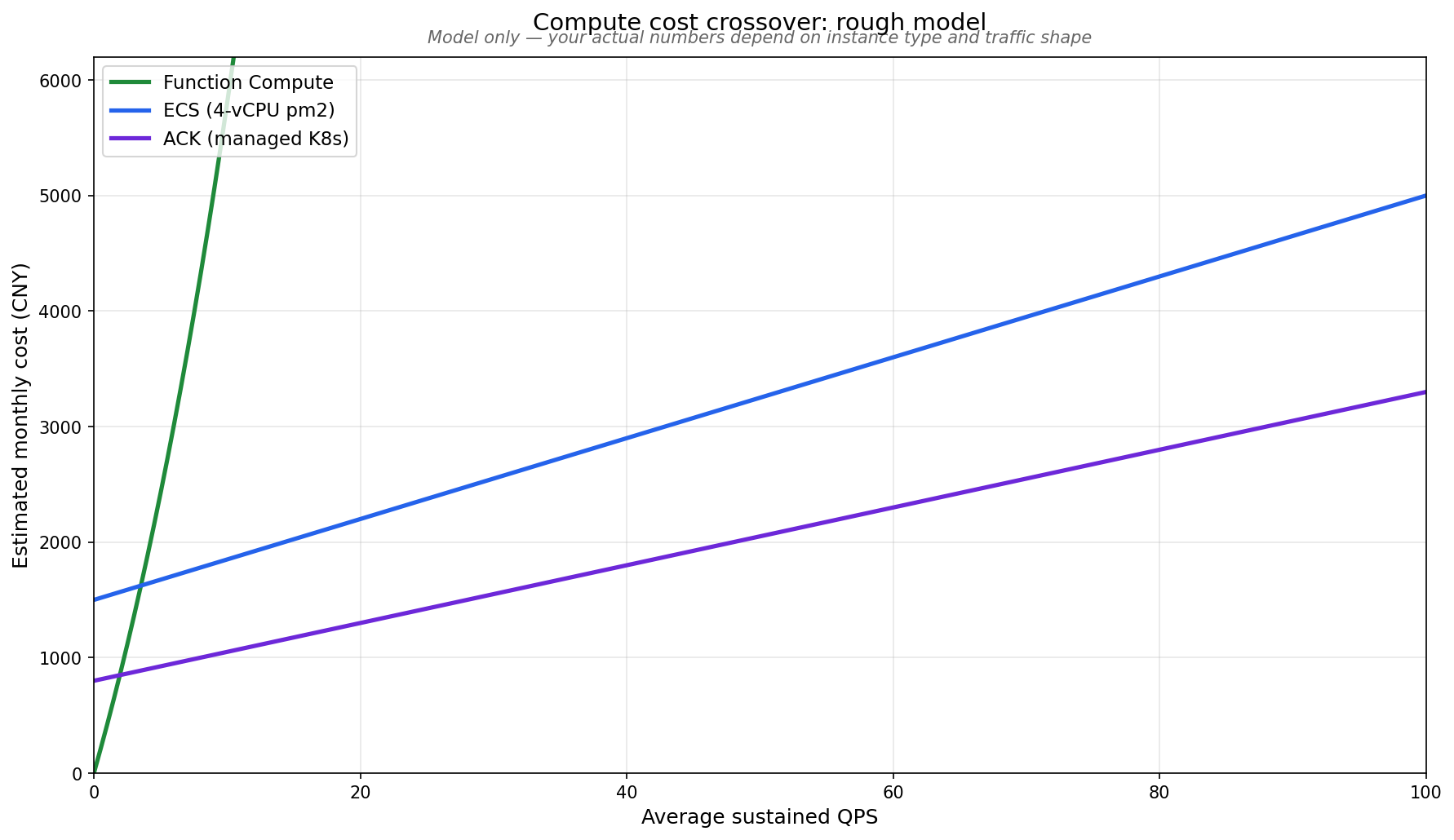

## The cost crossover

Here’s the rough monthly cost picture as a function of sustained QPS:

Under ~1 QPS sustained, FC dominates — you pay almost nothing during idle. From ~1 to ~30 QPS sustained, a single ECS box wins. Above that, ACK’s higher fixed cost gets amortised over enough load to be cheaper than packing more onto ECS. ECI sits orthogonally: cheaper than ECS below 50% utilisation, more expensive above.

The model is rough — your actual numbers depend on the instance family, network, and the agent’s chattiness — but the shape is reliable. Here’s the decision rule I use:

- Bursty + low average → Function Compute

- Steady + low-to-mid → ECS with pm2

- Multi-agent + sustained mid-to-high → ACK

- Bursty batch, 2-30 min per run → ECI
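The rule above can be written down as a small function. This is a minimal sketch, not a sizing tool: the `Workload` fields and the exact cut-offs (1 QPS, 30 QPS, three agent kinds) are assumptions lifted from the rough numbers in this section, and real workloads will sit in the grey zones between them.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    sustained_qps: float      # average requests per second over the month
    bursty: bool              # long idle gaps between short spikes of work
    agent_kinds: int          # number of distinct agent services
    run_minutes: float = 0.0  # typical length of one batch run, 0 if request-driven


def pick_compute(w: Workload) -> str:
    """Return the pattern suggested by the rule of thumb above."""
    # Bursty batch, 2-30 min per run -> ECI
    if w.bursty and 2 <= w.run_minutes <= 30:
        return "ECI"
    # Bursty + low average -> Function Compute (below ~1 QPS sustained)
    if w.bursty and w.sustained_qps < 1:
        return "Function Compute"
    # Multi-agent + sustained mid-to-high -> ACK
    if w.agent_kinds >= 3 and w.sustained_qps > 30:
        return "ACK"
    # Everything else: steady + low-to-mid -> ECS with pm2
    return "ECS with pm2"
```

For example, `pick_compute(Workload(sustained_qps=5, bursty=False, agent_kinds=1))` falls through to `"ECS with pm2"`, which matches the default recommendation for most agent projects.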

With the framing in place, let’s walk through each pattern and the Terraform that ships them.

## Pattern 1: ECS with pm2

For 80% of agent projects, this is what you want. One or two ECS instances behind an ALB, each running pm2 as the supervisor for the Python or Node agent process.

| |

Three things worth highlighting:

- `data` blocks pick the image and instance type instead of hardcoding them. `ubuntu_22_04_x64` resolves to the latest patched image; `data.alicloud_instance_types.agent` finds an `ecs.c7` with 4 vCPUs and 16 GiB. When Aliyun deprecates an image SKU, your next plan picks the new one automatically. Hardcoding `ecs.c7.xlarge` works until that exact SKU is out of stock in your zone, at which point Terraform fails; letting the data source pick gives you graceful fallback.

- `system_disk_kms_key_id` ties the disk to the `memory` CMK from article 3. Encryption-at-rest costs nothing extra and removes a whole compliance headache.

- `lifecycle { create_before_destroy = true }` means a planned replace creates the new instance, attaches it to the ALB, drains the old, then destroys it: zero-downtime rotation. The trade-off is you briefly need 2× capacity, which is fine for two-instance fleets and starts to matter at 50.

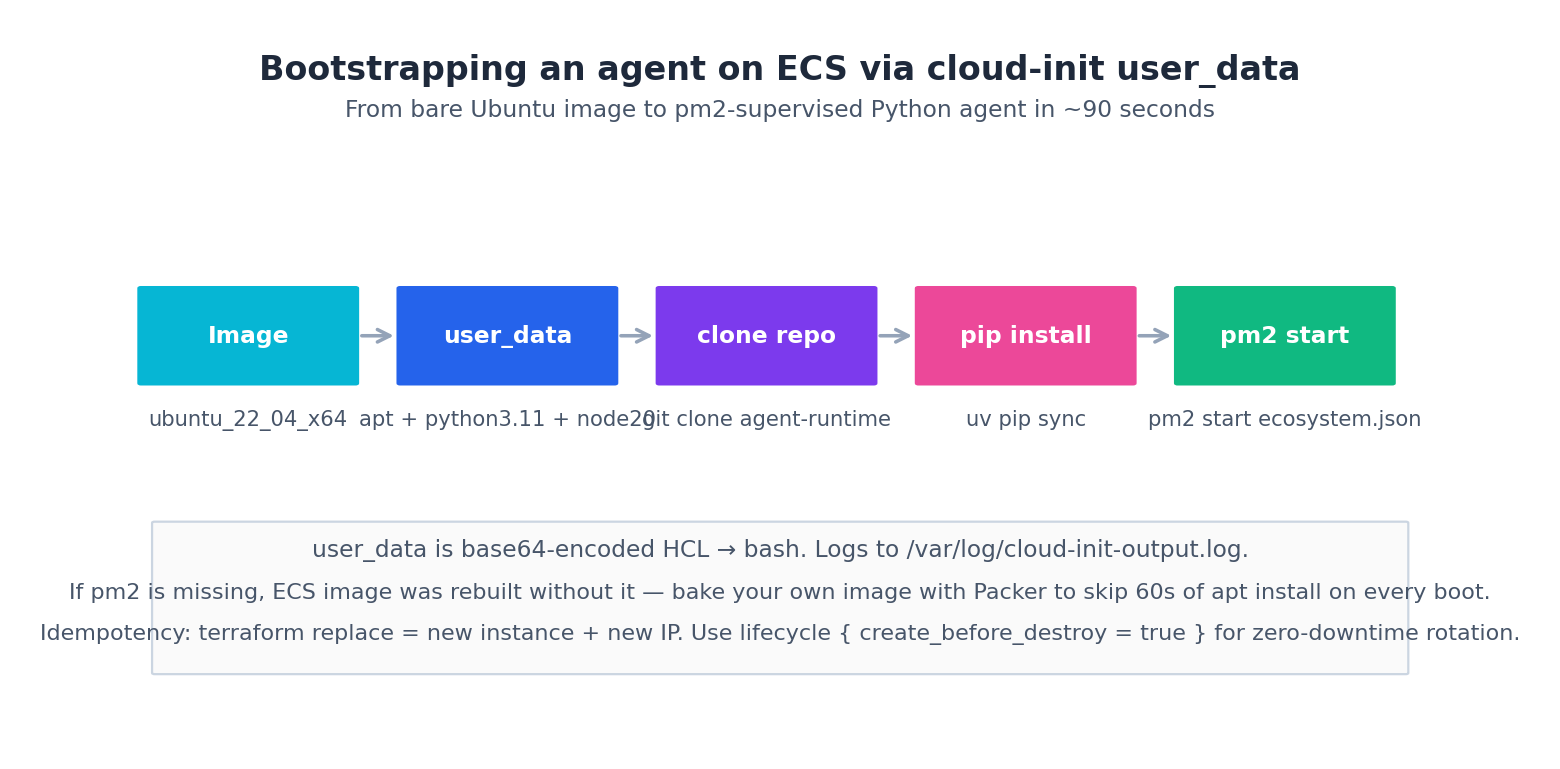

### The cloud-init that actually survives a real bootstrap

Most blog posts show a happy-path cloud-init: apt-get install, git clone, pm2 start, done. That works for a demo. Production bootstraps need to handle six things the happy path glosses over: DNS races, slow public mirrors, RAM credential timing, secret leakage via boot-disk snapshots, root-vs-service-account, and a bootstrap-done marker the ALB can gate on.

Here’s the version I actually run:

| |

The flow:

About 90 seconds from apply to pm2 status showing the agent as online. The first apt-get install is the slow step (~60s). Once you have a stable image, bake it with Packer so future ECS instances skip apt entirely and boot in 25 seconds.

Real-world tip:

`user_data` is logged to `/var/log/cloud-init-output.log` on the instance. When an agent doesn’t come up, that’s where you look first. The `set -euxo pipefail` at the top makes failures loud and traceable. Without it I lost two hours debugging a silent `pip install` failure that turned out to be a missing `gcc`.
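Of the six bootstrap concerns listed earlier, the bootstrap-done marker is the easiest to illustrate. One way to let the ALB gate on it is a tiny health endpoint that only turns healthy once the marker file exists. The sketch below assumes a marker path of `/var/lib/agent/bootstrap-done`, a `/healthz` route, and port 8080; all three are hypothetical names chosen for illustration, not taken from the bootstrap script.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer
from pathlib import Path

# Hypothetical marker path; cloud-init would touch it as its very last step.
MARKER = Path("/var/lib/agent/bootstrap-done")


def health_status(marker: Path = MARKER) -> int:
    """200 once bootstrap has finished, 503 while it is still running."""
    return 200 if marker.exists() else 503


class HealthHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        # Only /healthz is served; anything else is a 404.
        status = health_status() if self.path == "/healthz" else 404
        self.send_response(status)
        self.end_headers()


def serve(port: int = 8080) -> None:
    """Block forever serving the health endpoint (port is an arbitrary choice)."""
    HTTPServer(("0.0.0.0", port), HealthHandler).serve_forever()
```

With the ALB health check pointed at `/healthz`, an instance whose bootstrap died halfway never receives traffic, because the marker is never written.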

## Pattern 2: ACK for production fleets

Once you have three or more agent kinds running side by side, the per-VM operational cost dominates and ECS stops scaling. ACK gives you one cluster, one scheduler, one upgrade path, and a single place to wire autoscaling and observability.

The minimal Terraform to get a managed K8s cluster on Aliyun:

| |

A few notes:

- `ack.pro.small` is the managed control plane SKU. Aliyun runs the masters; you pay for the worker ECS plus about ¥350/month for the control plane. Don’t pick the unmanaged SKU unless you have a strong reason.

- `pod_vswitch_ids` is for Terway, the Aliyun-native CNI. Each pod gets a real VPC IP: no overlay network, and security groups apply directly. This is the right default; Flannel makes networking debugging miserable.

- `delete_protection = true` does what it says: `terraform destroy` won’t kill the cluster. Set this on every prod cluster.

- The `addons` block enables ARMS Prometheus (article 7) and the SLS log collector. Provisioning these via Terraform means new clusters come pre-instrumented.

The actual agent pods come from a Kubernetes Deployment manifest — usually applied by a separate kubectl step or via the kubernetes Terraform provider. I keep the cluster in this terraform project and the workloads in a separate Helm chart, because they have different release cadences. The cluster changes once a quarter; the agent image changes ten times a day.

## Pattern 3: Function Compute for event-driven agents

Some agents only run when triggered — a webhook fires, a cron tick happens, an OSS object lands. For those, FC is unbeatable: zero idle cost, automatic scale-out, and the cloud handles the runtime entirely.

| |

What this gives you: a Python 3.11 function, 1 GiB RAM, 10-minute timeout, attached to the same VPC and security group as the rest of your stack, triggered every day at 9am. Zero servers to maintain. Cost: roughly ¥0.10 per invocation at this size, plus ¥0.0001 per GB-second of execution. A daily cron that runs for 5 minutes costs about ¥4/month (30 invocations at ¥0.10 plus roughly ¥0.90 of execution time). The same agent on an idle ECS would be ~¥250/month.
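The arithmetic behind that comparison is worth making explicit. The sketch below uses only the two rates quoted above; the function name and its parameters are illustrative, and actual billing has more dimensions (free tiers, vCPU time, outbound traffic) that are ignored here.

```python
PER_INVOCATION_CNY = 0.10    # rate quoted above for this function size
PER_GB_SECOND_CNY = 0.0001   # rate quoted above


def fc_monthly_cost(runs_per_month: int, seconds_per_run: float, memory_gb: float) -> float:
    """Monthly Function Compute cost in CNY under the two rates above."""
    invocation_cost = runs_per_month * PER_INVOCATION_CNY
    compute_cost = runs_per_month * seconds_per_run * memory_gb * PER_GB_SECOND_CNY
    return invocation_cost + compute_cost


# Daily cron, 5 minutes per run, 1 GiB of memory.
daily_cron = fc_monthly_cost(runs_per_month=30, seconds_per_run=300, memory_gb=1.0)
print(f"daily 5-minute cron: {daily_cron:.2f} CNY/month")
```

Under these rates the daily cron comes to roughly ¥3.90 a month, most of it the per-invocation charge, which is still nearly two orders of magnitude below the ~¥250 idle ECS box.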

Three caveats I keep tripping on:

- Cold start. First invocation after idle takes 200-800ms more than subsequent ones. For a webhook with sub-second SLA, this matters; for a cron task, it doesn’t. Provisioned concurrency exists but defeats the point of FC — once you’re paying for warm instances 24/7, you may as well be on ECS.

- VPC attachment adds another 200-400ms to cold starts because FC has to attach an ENI to your VPC. Worth it if the function needs to reach RDS/OpenSearch over private network; skip the `vpc_config` block if it only calls public APIs.

- 24-hour max runtime. For long agent loops, FC is a bad fit. Either chunk the loop into shorter steps (with state in OSS or Redis between steps) or move to ECS/ECI.

That third caveat is exactly the gap ECI fills.
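If you do stay on FC, the chunking option from that caveat looks roughly like the sketch below: each invocation runs a bounded number of steps, checkpoints its position, and the next invocation resumes from there. The `StateStore` here is a plain mapping standing in for OSS or Redis, and `run_chunk` with its parameters is a hypothetical helper, not part of any FC SDK.

```python
import json
from typing import Callable, MutableMapping

# Stand-in for OSS or Redis: any mapping from key to a serialised string.
StateStore = MutableMapping[str, str]


def run_chunk(store: StateStore, job_id: str, total_steps: int,
              step_fn: Callable[[int], None], max_steps: int) -> bool:
    """Run up to max_steps steps of a job, then stop. Returns True when the job is done."""
    state = json.loads(store.get(job_id, '{"next_step": 0}'))
    start = state["next_step"]
    end = min(start + max_steps, total_steps)
    for step in range(start, end):
        step_fn(step)
        # Checkpoint after every step so a timeout loses at most one step.
        store[job_id] = json.dumps({"next_step": step + 1})
    return end >= total_steps
```

Each invocation calls `run_chunk` once; when it returns `False`, the function re-triggers itself (or waits for the next cron tick) and picks up at the stored `next_step`. The price is that every step has to be safe to re-run, since a crash between `step_fn` and the checkpoint repeats that step.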

## Pattern 4: ECI for bursty batch agents

ECS, ACK, and FC cover most cases, but there’s a fourth pattern that comes into its own for bursty batch agent work: Elastic Container Instance. ECI is “a container without a Kubernetes node underneath” — Aliyun runs the container directly on a fleet of bare-metal hosts they manage, you only pay for the seconds it runs.

The use case is sharp. You have an agent that runs for 2-30 minutes per invocation, several times an hour, often in parallel. Function Compute caps out at 24h but the cold start is rough for batch; ECS is wasteful (you’re paying for an idle box between bursts); ACK adds K8s overhead you don’t want. ECI hits the middle: cold start ~5s, no idle cost, no node pool to manage.

| |

Production gotchas I’ve hit:

- The image registry must use the `registry-vpc` endpoint, not the public one. Public goes through your NAT and costs egress; VPC endpoint is free and faster. I once burned ¥200 in a week on egress before I noticed.

- `restart_policy = "OnFailure"`, not `"Always"`. ECI is single-shot by design; `"Always"` makes it loop on success too, which is the opposite of what batch jobs want.

- `EmptyDirVolume` is per-container-group ephemeral, exactly like a Kubernetes emptyDir. Don’t store anything here you can’t reproduce; write outputs to OSS or RDS.

- `ram_role_name` lets the container fetch credentials from instance metadata, same pattern as ECS. No AK/SK in env vars.

The alicloud_eci_container_group resource has an annoying quirk: changes to containers[*] don’t always trigger a clean replace because the API is partly merge-based. For production batch jobs I provision the container group once via Terraform with ignore_changes on the runtime spec, then trigger new runs by calling the ECI API directly from the orchestrator, using the role and SG that Terraform provisioned. Treat the Terraform side as the template, not the executor.

Rough cost math: a 4 vCPU / 16 GB ECI is ~¥0.96/hour, billed by the second after a 1-minute minimum. An 8-minute research run costs ~¥0.13. 100 runs/day = ¥13/day = ¥390/month. A permanently-running ECS of the same size would cost ¥250-400/month whether it is busy or idle. Below ~50% utilisation, ECI wins; above, ECS or ACK wins.
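The ~50% crossover falls straight out of those numbers. A minimal sketch, using only the hourly rate and the 1-minute minimum quoted above (the function names are illustrative, and a 30-day month is assumed):

```python
ECI_CNY_PER_HOUR = 0.96      # 4 vCPU / 16 GB, rate quoted above
HOURS_PER_MONTH = 24 * 30    # assuming a 30-day month


def eci_monthly_cost(runs_per_day: int, minutes_per_run: float) -> float:
    """Monthly ECI cost in CNY; each run is billed for at least one minute."""
    billed_minutes = max(minutes_per_run, 1.0)
    return runs_per_day * 30 * billed_minutes / 60 * ECI_CNY_PER_HOUR


def break_even_utilisation(ecs_cny_per_month: float) -> float:
    """Fraction of the month ECI must be busy before an always-on ECS is cheaper."""
    return ecs_cny_per_month / (ECI_CNY_PER_HOUR * HOURS_PER_MONTH)
```

`eci_monthly_cost(100, 8)` gives ¥384 before rounding, and 100 runs of 8 minutes keep the container busy about 56% of the day. Against a ¥350/month ECS, `break_even_utilisation(350)` is about 0.51, so that workload sits just past the point where the always-on box starts to win; at the ¥250 and ¥400 ends of the range the break-even moves to roughly 36% and 58%.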

## A real example: hybrid

Most production agent stacks I’ve shipped end up hybrid:

- ECS for the always-on conversational agent that holds session state in memory

- ACK for the worker fleet that processes background jobs across three or four agent kinds

- FC for webhook receivers and daily cron tasks

- ECI for batch jobs that take 5-20 minutes and run dozens of times a day

Terraform makes this trivial — four modules in the same project, sharing the VPC and security groups from article 3. The skill is knowing which pattern fits which workload, not learning all the resource syntaxes.

## Right-sizing the instance

A common question: which ecs.* family for an agent runtime? My defaults:

| Workload | Family | Why |
|---|---|---|
| Conversational agent, no GPU | ecs.c7 | CPU-bound on tokenisation + I/O on LLM calls |
| Memory-heavy (large context) | ecs.r7 | More RAM per vCPU |
| Batch / scheduled with bursts | ecs.c7a (AMD) | ~15% cheaper, slightly slower per core |
| GPU inference of small models | ecs.gn7i | T4-class, cheapest GPU on Aliyun |
| Pretraining / large fine-tune | Use PAI-DLC, not ECS | Don’t reinvent the orchestration |

Avoid the burstable ecs.t6 family for agent runtime — CPU credits run out under sustained load and your latency goes off a cliff. They’re fine for the bastion that runs terraform apply and not much else.

## When alicloud_pai_* doesn’t exist (and the fallback)

If you’re shipping an agent that does its own training or hosts a custom LLM, the obvious first instinct is to spin up PAI-EAS via Terraform. Reality check: as of alicloud provider 1.230, PAI resource coverage is thin. You have alicloud_pai_workspace and a few related resources, but full EAS service deployment is not first-class — there’s no alicloud_pai_eas_service that lets you declare a model serving endpoint declaratively in HCL.

The practical fallbacks I use, in order of preference:

Fallback 1: provision the workspace, run EAS via API. Terraform owns the workspace and IAM; an imperative tool (eascmd or the EAS Python SDK) owns the runtime spec.

| |

A Makefile target then calls eascmd create eas-service.json where the JSON contains image, processor, instance type, and autoscaling config. This is honest about the split: declarative for the stable substrate, imperative for the moving runtime.

Fallback 2: ROS for the EAS bits. ROS (Aliyun’s native IaC) often supports resources before the Terraform provider does. You can call ROS from Terraform via alicloud_ros_stack:

| |

The eas-service.ros.json is a ROS template — Aliyun-flavoured CloudFormation — and exposes EAS properties the alicloud Terraform provider doesn’t yet. Terraform owns the stack, ROS owns the deep-cloud bits, and you get a unified terraform plan across both.

Fallback 3: write your own provider. Don’t. I tried it once — two weeks of Go work, then the official provider shipped the resource I needed a month later and I had a fork to maintain forever. The Aliyun team ships new alicloud provider resources monthly; you’d rather their work catch up than carry a fork.

The general principle for any “Terraform doesn’t have it yet” situation: Terraform owns the stable substrate, lighter tools own the moving parts. RAM roles, VPC, KMS, OSS — Terraform. Brand-new resources, beta APIs — null_resource + local-exec, ROS embedded, or a separate orchestrator. As the provider catches up, migrate the null_resource blocks into proper resources with terraform import.

Real-world tip:

`alicloud_ros_stack` is genuinely underrated. When the alicloud Terraform provider lags, ROS often has the API a quarter ahead. Embedding ROS templates inside Terraform gives you the best of both.

## What’s Next

Article 5 fills in the storage layer — vector store, relational, object store, backups — that everything we just provisioned needs to talk to. ECS instances, ACK pods, FC functions, and ECI containers are all useless until they have somewhere to put memory.

Then article 6 builds the LLM gateway in front of all the compute, article 7 wires observability and cost alarms, and article 8 stitches everything into one terraform apply.
