Terraform for AI Agents (5): Storage — Vector, Relational, and Object Memory

An agent has three kinds of memory and they map onto three Aliyun services: PolarDB/RDS for sessions, OpenSearch (vector edition) or pgvector for embeddings, OSS for artifacts. Real Terraform for each, plus the lifecycle and backup rules that keep the bill flat.

Most tutorials gloss over an agent’s memory. ‘Just put the embeddings in Pinecone, the sessions in Postgres, and the screenshots in S3.’ On Aliyun, all three are managed services. Correctly provisioning them with Terraform can mean the difference between a working memory and losing three weeks of conversation history because the disk filled up at 4 AM.

This article covers all three layers, their Terraform configurations, the critical but tedious backup and disaster recovery (DR) setup, the major version upgrade process, and the Saturday outage that changed how I do things.

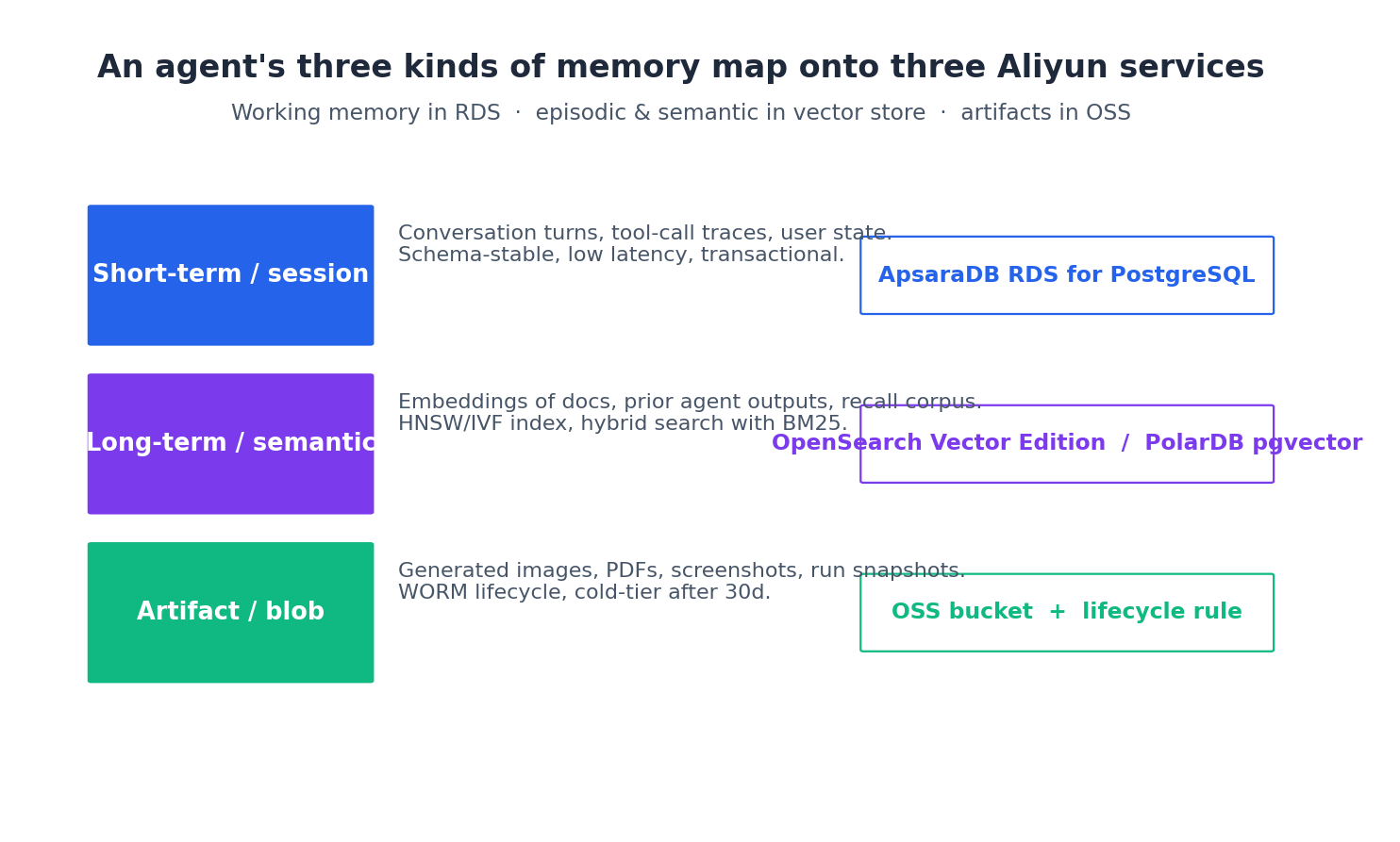

The three-layer memory model

The mental model:

- Short-term / session — what the agent did in the current run and the last few runs. Conversation turns, tool calls, intermediate state. Schema-stable, low-latency, transactional. Goes in a relational database.

- Long-term / semantic — embeddings of documents, prior outputs, recall corpus. Hybrid lexical + vector search. Goes in a vector store.

- Artifact / blob — generated images, PDFs, screenshots, run snapshots. Often large, write-once-read-rarely. Goes in object storage.

Don’t conflate them. I once watched a team try to put 50 GB of generated PDFs in Postgres because “it has a bytea column”. It cost ten times what OSS would have, query latency went to mush, and backups took hours. Each layer has a service that’s good at exactly its job — pick the right one and the bill stays sane.
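The routing rule behind the three layers can be sketched in a few lines of Python. This is an illustration only: the item kinds and the 256 KiB threshold are assumptions made for the example, not values from this stack.

```python
from dataclasses import dataclass

# Hypothetical cut-off: anything larger is treated as a blob.
BLOB_THRESHOLD_BYTES = 256 * 1024


@dataclass
class MemoryItem:
    kind: str        # e.g. "turn", "tool_call", "embedding", "artifact"
    size_bytes: int


def pick_layer(item: MemoryItem) -> str:
    """Return "relational", "vector", or "object" for one memory item."""
    if item.kind == "embedding":
        return "vector"
    # Large payloads go to object storage even if they arrive as session data.
    if item.kind == "artifact" or item.size_bytes > BLOB_THRESHOLD_BYTES:
        return "object"
    return "relational"
```

The size check is what would have caught the PDFs-in-bytea case: a 5 MB "tool_call" payload is routed to object storage, and only a pointer to it belongs in the relational row.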

Layer 1: relational, RDS for PostgreSQL

For session state—turn-by-turn conversation, tool call traces, and user identity—you need a robust RDBMS. PostgreSQL is my go-to, but MySQL is fine if your team prefers it. Use PolarDB when you need horizontal scaling.

| |

What earns its line in this block:

- Password lives in KMS Secrets Manager from birth. Generated by random_password, written to alicloud_kms_secret, retrieved by the agent at startup via STS. Downstream resources reference it by secret_id, not value, so the plaintext is not copied into other resources or outputs. Note that random_password still records its result in state (marked sensitive), so access to the state bucket still has to be locked down.

- encryption_key ties the disk to the memory CMK. At-rest encryption, no extra cost.

- backup_period + retention_period create automated backups three times a week, kept 30 days in prod, 7 in dev. RDS backups are stored on OSS; you don’t manage the bucket.

- zone_id_slave_a in prod creates a hot standby in a second zone. Failover is sub-30s. The cost is 2×, which is worth it for prod and overkill for dev.

- deletion_protection plus lifecycle.prevent_destroy in prod block both terraform destroy and provider-driven force-replaces. I’ll explain why both are needed in the incident section below; the short version is that not having them once cost me a Saturday.

Tip. PolarDB is the right move once your sessions table crosses ~10M rows or you need read replicas without downtime. Migration from RDS to PolarDB is well-documented and Terraform handles both. Don’t start there — RDS is simpler and cheaper at small scale.

A small relational layer is the backbone. The next layer makes the agent feel intelligent.

Layer 2: vector store

You have two reasonable choices on Aliyun for the vector layer:

- OpenSearch Vector Search Edition — managed, Lucene-backed, supports HNSW + IVF, billed per QPS quota.

- PolarDB or RDS PostgreSQL with pgvector, which is co-located with your relational data, needs no new infra, and gets slower past ~1M vectors.

For anything past prototype I prefer OpenSearch. The cost is real (~¥800/mo for the smallest instance), but you get hybrid lexical+vector search, which is better for retrieval. Pure vector similarity often loses to BM25 on real queries.

| |

The app group is the OpenSearch concept that holds an index. From here you create the index schema via the OpenSearch console or SDK: the alicloud_opensearch_app resource exists, but the schema part is operational, not provisionable. Pin the embedding dimension (1536 for text-embedding-3-small, 1024 for bge-m3) in the index settings and never change it; reindexing 10M vectors is a multi-day job.
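Since the dimension is fixed for the life of the index, it is worth checking on the write path too. A minimal sketch, assuming the index was pinned at 1536; the function name and constant are illustrative and not part of any OpenSearch SDK:

```python
# Assumption: must equal the dimension pinned in the index settings.
EXPECTED_DIM = 1536


def validate_embedding(vector, expected_dim=EXPECTED_DIM):
    """Return the vector unchanged, or raise if its length is not the pinned dimension."""
    if len(vector) != expected_dim:
        raise ValueError(
            f"embedding has {len(vector)} dims, index expects {expected_dim}"
        )
    return vector
```

Calling this before every upsert turns a silent model swap (say, from a 1536-dim model to a 1024-dim one) into an immediate error instead of a corrupted index.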

If you go the pgvector route instead, add this to the RDS database creation:

| |

The Terraform half is just the database; the schema is application code (Alembic, Flyway, sqlx-migrate — pick one). Don’t try to manage table schemas in Terraform; that path leads to madness, and I have the scars to prove it.

Layer 3: object storage

OSS is for artifacts: generated images, PDFs, screenshots, run-trace tarballs, and model checkpoints if you fine-tune. For an agent stack:

| |

Three things worth a closer look.

Bucket-name uniqueness

OSS bucket names are globally unique across all Aliyun customers — same as S3. The random_id suffix avoids the “name already taken” plan failure that bites every first-time user. Once the bucket is created, the name is stable.
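The same idea outside Terraform, as a rough Python sketch: append a random hex suffix and check the result against the OSS naming rules (3 to 63 characters; lowercase letters, digits, and hyphens; starting and ending with a letter or digit). The prefix in the usage note is a made-up example. Unlike random_id, which is stored in state and therefore stable, this function produces a new name on every call, so it only fits one-off creation scripts.

```python
import re
import secrets

# OSS bucket naming rules expressed as a single pattern.
_BUCKET_RE = re.compile(r"^[a-z0-9][a-z0-9-]{1,61}[a-z0-9]$")


def bucket_name(prefix: str, suffix_bytes: int = 4) -> str:
    """Return prefix plus a random hex suffix, validated against OSS naming rules."""
    name = f"{prefix}-{secrets.token_hex(suffix_bytes)}"
    if not _BUCKET_RE.match(name):
        raise ValueError(f"invalid OSS bucket name: {name!r}")
    return name
```

For example, bucket_name("agents-prod-artifacts") yields the prefix followed by a hyphen and eight hex characters.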

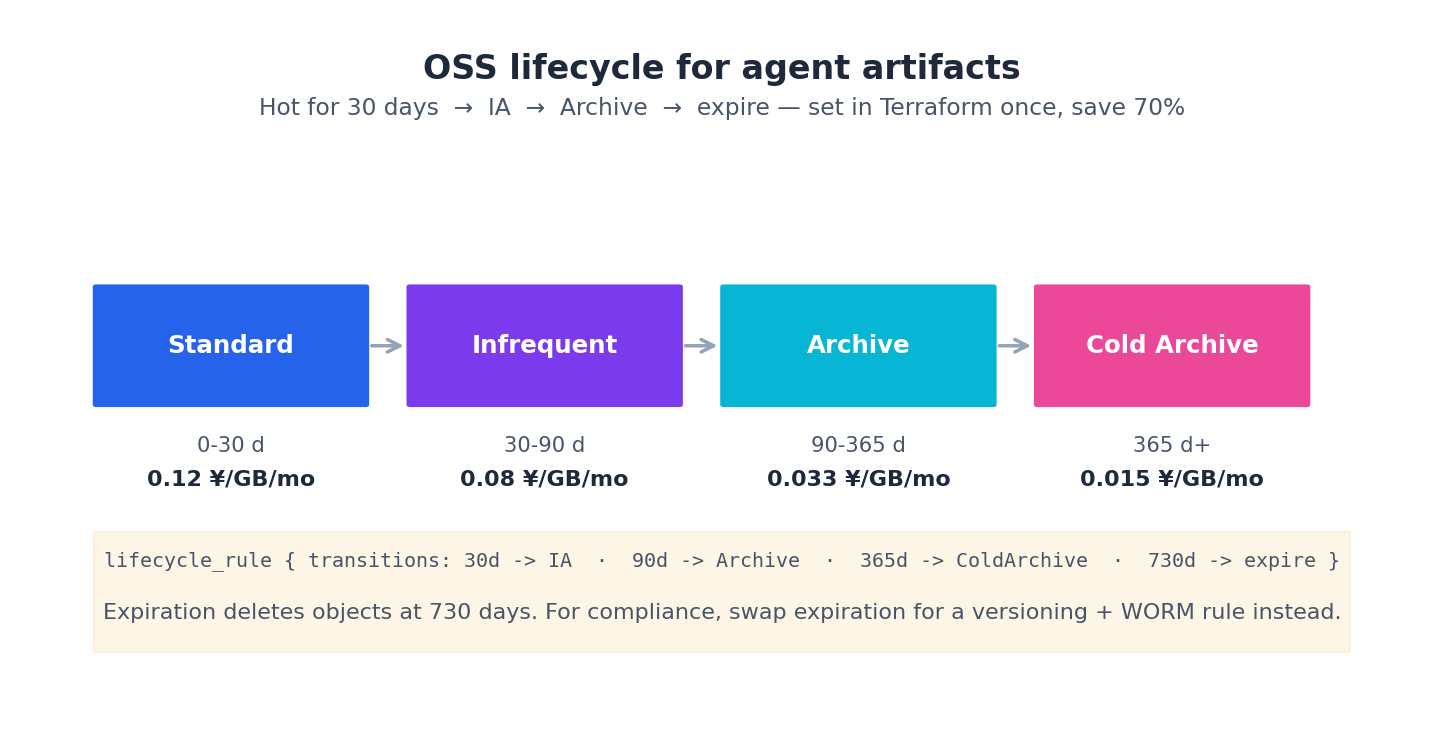

Lifecycle tiering

The lifecycle_rule block is the single biggest cost lever in OSS:

- Standard (0–30 days, ~¥0.12/GB/mo) — what you write to by default.

- Infrequent Access (30–90 days, ~¥0.08/GB/mo) — cheaper storage, ~¥0.0125/GB retrieval.

- Archive (90–365 days, ~¥0.033/GB/mo) — minutes-to-hours retrieval.

- Cold Archive (365+ days, ~¥0.015/GB/mo) — hours retrieval, the cheapest tier.

For agent artifacts, keep 30 days in Standard, two months in Infrequent Access, nine months in Archive, and one year in Cold Archive, then delete. For a 1 TB artifact corpus at the prices above, this is the difference between roughly ¥1500/yr (all Standard, about ¥120/mo) and roughly ¥250/yr. Codify this in HCL and the saving repeats every year. The main pitfalls are the 30-day minimum storage charge for IA and the Archive retrieval latency, so avoid putting hot data in cold tiers.
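The arithmetic is easy to check with the per-GB prices listed above. The sketch below assumes a steady state in which objects are written at a constant rate and deleted after 730 days (30 + 60 + 275 + 365), and it ignores retrieval, request, and minimum-duration charges. The real figure depends on how old the corpus actually is, so treat the output as an order-of-magnitude check rather than a quote.

```python
# Prices in CNY per GB per month, taken from the tier list above.
PRICE_PER_GB_MONTH = {
    "standard": 0.12,
    "ia": 0.08,
    "archive": 0.033,
    "cold_archive": 0.015,
}

# Days an object spends in each tier under the schedule described above.
DAYS_IN_TIER = {"standard": 30, "ia": 60, "archive": 275, "cold_archive": 365}


def monthly_cost(gb_by_tier):
    """Storage-only monthly cost for a dict of tier -> GB."""
    return sum(PRICE_PER_GB_MONTH[tier] * gb for tier, gb in gb_by_tier.items())


def steady_state_split(total_gb):
    """Split a corpus across tiers, assuming uniform writes over the retention window."""
    retention = sum(DAYS_IN_TIER.values())
    return {tier: total_gb * days / retention for tier, days in DAYS_IN_TIER.items()}


all_standard = monthly_cost({"standard": 1024})
tiered = monthly_cost(steady_state_split(1024))
print(f"all Standard: {all_standard:.2f}/mo, tiered: {tiered:.2f}/mo")
```

Under these assumptions, tiering cuts the storage line to roughly a quarter of the all-Standard cost; a corpus skewed towards older objects saves more.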

Versioning

versioning { status = "Enabled" } keeps every object version. An agent that overwrites artifacts/run-123/output.pdf doesn’t actually destroy the previous version — it’s still there with a different version ID. Two reasons this matters:

- Recovery. A bug overwrote 50,000 objects with garbage? Restore the previous versions in a script.

- Tamper-evidence. Combined with WORM (Write-Once-Read-Many) policies, this gives you regulatory compliance for free.

Versioned objects accumulate, so the noncurrent_version_expiration in the lifecycle rule above prunes old versions after 180 days. Without it the storage line will quietly double every six months.
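The recovery case comes down to one decision per key: which version to bring back. A minimal sketch of that selection, assuming you have already listed each key's versions as (version_id, last_modified) pairs through whatever OSS client you use; the listing and the copy-back call are deliberately left out.

```python
from datetime import datetime


def version_to_restore(versions, bad_write_at):
    """Pick the newest version written strictly before the bad write.

    versions: iterable of (version_id, last_modified) tuples for a single key.
    Returns the version_id to restore, or None if the key did not exist yet.
    """
    older = [(ts, vid) for vid, ts in versions if ts < bad_write_at]
    return max(older)[1] if older else None
```

Running this over the affected keys gives the restore plan; keys that return None were created by the buggy run and can simply be deleted.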

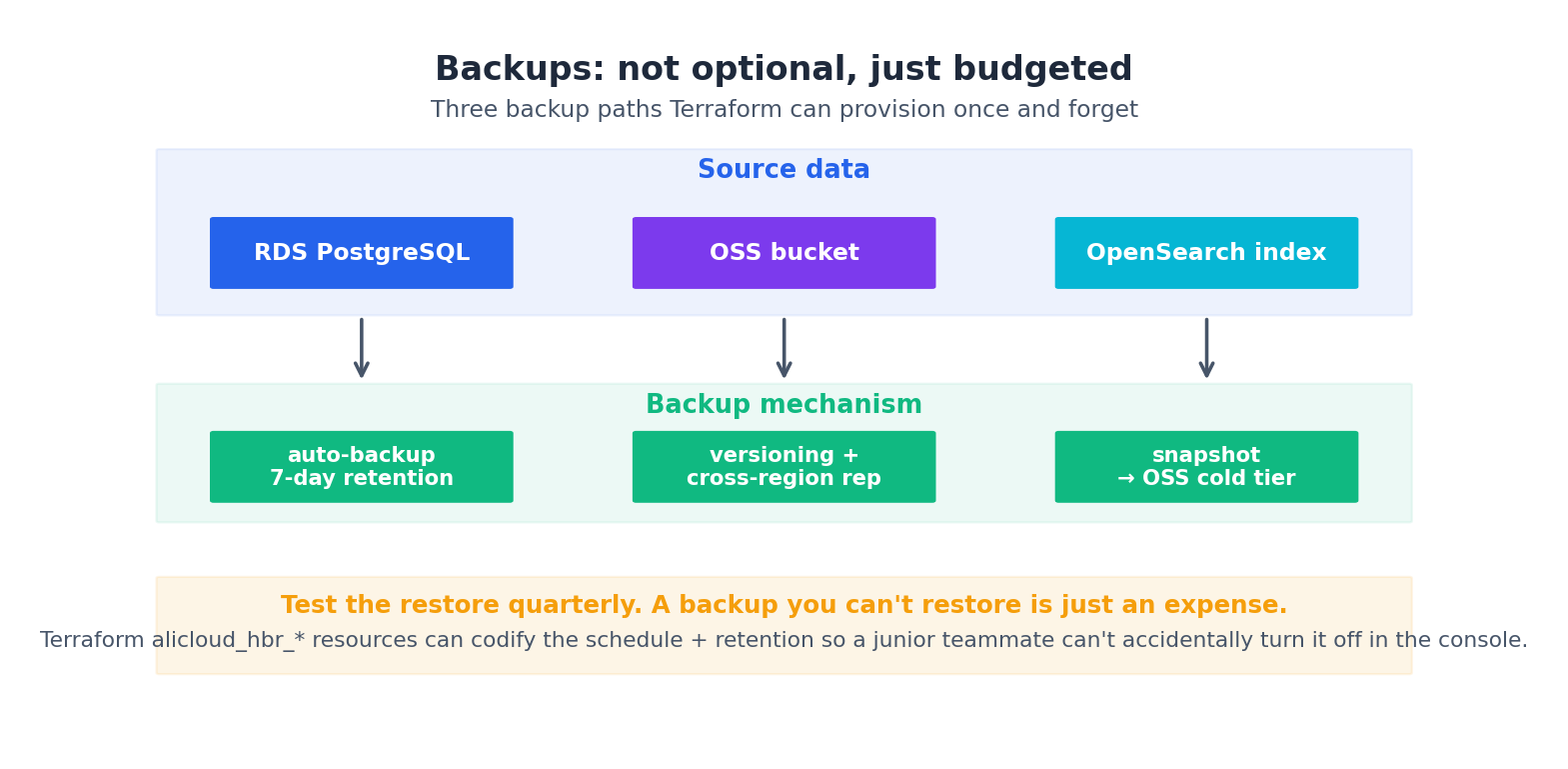

Backups, DR, and proving they work

A Terraform-managed backup setup looks like this:

- RDS: built-in automated backups (already in the HCL above).

- OSS: versioning + cross-region replication for disaster recovery.

- OpenSearch: snapshot to OSS via the alicloud_opensearch_* snapshot resources.

Cross-region replication for OSS is one resource:

| |

The aliased provider lets one Terraform run touch two regions:

| |

For a research agent that’s mainly stateless, you might decide DR isn’t worth the storage doubling. For a customer-facing one with conversation history that legally must persist, it’s mandatory.

Prove the replica works: monthly drill

Replication you have never restored from is a backup that doesn’t exist. The script that catches a broken replica 30 days early instead of in the actual disaster:

| |

Two minutes of compute, zero human attention, and the replica health is no longer a question of faith. Wire it into the same GitHub Actions cron as the drift check from article 2. The same pattern — periodic automated drills of the things you’d otherwise discover are broken at the worst possible time — applies to RDS restore, KMS key rotation, and every single failover path.
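One way to structure the comparison step of such a drill is a manifest diff. This is a different, simplified approach from the script referenced above, shown only to make the pass/fail criterion concrete. It assumes you can produce a key-to-fingerprint mapping (an ETag or a CRC64, for instance) for both buckets; how you list them is up to your OSS client.

```python
def diff_replica(source, replica):
    """Compare two manifests mapping object key -> content fingerprint.

    Returns (missing, mismatched): keys absent from the replica, and keys
    present in both whose fingerprints differ.
    """
    missing = sorted(k for k in source if k not in replica)
    mismatched = sorted(
        k for k in source if k in replica and source[k] != replica[k]
    )
    return missing, mismatched


def drill_passed(source, replica):
    """The drill passes only if nothing is missing and nothing differs."""
    missing, mismatched = diff_replica(source, replica)
    return not missing and not mismatched
```

Objects written in the last few minutes may legitimately not have replicated yet, so a real drill would exclude keys newer than the expected replication lag before calling this.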

Tip. I run a separate restore-drill.sh monthly that pulls a random RDS backup into a cn-shanghai-dr instance and runs schema/checksum verification. It’s the most useful 30 minutes I spend each month.

RDS major-version upgrades through Terraform

Postgres ships a major release every year, and each version goes EOL about five years after its release. RDS for PostgreSQL upgrades are a real operational event: they have downtime, they can fail, and the Terraform provider exposes them through engine_version changes that look innocent and aren’t.

The flow that has worked for me on a v15 → v16 upgrade:

Step 1 — snapshot before touching anything.

| |

Wait for completion via aliyun rds DescribeBackups. This is your “oh god” button.

Step 2 — clone to a sibling instance for the trial.

| |

terraform apply brings up a v16 instance with v15 data restored. QA points staging traffic at it for a week. Zero production risk.

Step 3 — when confident, upgrade in-place.

| |

terraform plan shows ~ engine_version: "15.0" -> "16.0" and the apply triggers an in-place upgrade. Downtime depends on database size — sub-1-minute for a small DB, up to 30 minutes for a multi-TB one. The upgrade is reversible only by restoring from the snapshot in step 1, so do not skip step 1.

Step 4 — tear down the trial.

| |

Then delete the trial in the console. Don’t terraform destroy it from the same project — that would walk dependencies and could touch siblings.

The whole process spans ~2 weeks calendar time, ~3 hours of focused work, zero unplanned downtime. The win of doing it through Terraform is that the trial-instance HCL stays in git — six months later when you do the v17 upgrade, the playbook is already there, in the same repo, reviewed by the same team.

Tip. Test the connection-string change in the agent code before the upgrade. Behaviour changes between major versions (for example, password_encryption has defaulted to scram-sha-256 rather than md5 since Postgres 14) can break old client libraries. Run your agent against the trial instance for a full day before promoting.

A real incident: the night I terraform apply-ed the wrong workspace

This one cost me a Saturday. Worth telling because the fix is structural, not “be more careful”.

Setup: three workspaces (dev, staging, prod). The dev RDS was a small pg.n2.medium.1c. Prod was pg.x4.large.2c with HA. I was working from a laptop, switched to a feature branch, and ran terraform plan to check it. Saw “Plan: 2 to add, 1 to change, 0 to destroy”, which looked clean. Ran terraform apply. Walked away to make coffee.

Came back to a destroyed prod database.

Root cause: I had selected prod workspace in a previous session and never switched back. The change I was applying (a tag tweak) was harmless in dev. In prod, what looked like “1 to change” was actually a force-replace because of an unrelated provider bump that had landed in main and required RDS recreation. The provider plan output should have been clearer — it wasn’t.

The HCL that bit me:

| |

The provider, in its newly-bumped 1.231 version, had decided this parameter was now force_new for some engine versions. Plan said ~ parameter — looking like in-place — apply did a recreate.

Restore took 90 minutes from automated backup (mercifully recent). The post-mortem produced four structural fixes that have prevented every recurrence in two years.

Fix 1: lifecycle { prevent_destroy = true } on prod stateful resources

Already in the RDS block earlier in this article. Any terraform apply that would destroy a prod RDS now errors out with Resource has lifecycle.prevent_destroy set. To actually destroy you have to remove the line in HCL, file a PR, get approval, then the destroy is allowed. This single line would have stopped my Saturday outage cold.

Fix 2: workspace prompt in the shell

A function that yells on every terraform invocation:

| |

The 1-second pause is enough to break autopilot. Lives in my .zshrc. I haven’t accidentally hit prod since.
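For anyone who wraps Terraform in Python rather than zsh, the same guard can be sketched as below. It relies on two Terraform behaviours: the TF_WORKSPACE environment variable overrides the selection, and the selected workspace is otherwise recorded in .terraform/environment (absent when the workspace is "default"). The banner text and function names are made up for this example.

```python
import os
import sys
import time
from pathlib import Path


def current_workspace(workdir=".", env=None):
    """Resolve the active workspace the way the Terraform CLI does."""
    env = os.environ if env is None else env
    if env.get("TF_WORKSPACE"):
        return env["TF_WORKSPACE"]
    env_file = Path(workdir) / ".terraform" / "environment"
    if env_file.is_file():
        return env_file.read_text().strip() or "default"
    return "default"


def announce(workspace, pause_seconds=1.0, stream=sys.stderr):
    """Print the workspace; shout and pause when it is prod. Returns True if it paused."""
    if workspace == "prod":
        print(f"*** TERRAFORM WORKSPACE: {workspace.upper()} ***", file=stream)
        time.sleep(pause_seconds)
        return True
    print(f"terraform workspace: {workspace}", file=stream)
    return False
```

A wrapper would call announce(current_workspace()) and then hand the original arguments to the terraform binary.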

Fix 3: prod apply only from CI, never from a laptop

The cleaner version: revoke your laptop’s permission to apply against the prod state file. Only the GitHub Actions runner has the RAM role with oss:PutObject on the prod state prefix. Local terraform plan works (it only reads); local apply fails with AccessDenied.

| |

The Deny on the developer role wins over any Allow. Devs can plan; only CI can apply. The CI run is gated by PR review.
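As a rough illustration of the shape of such a policy, the sketch below builds a RAM policy document that denies oss:PutObject under one state prefix. The bucket name and prefix are hypothetical, and the document is a generic example of the RAM policy format, not a copy of the policy used in this stack.

```python
import json


def deny_state_write_policy(bucket, prefix):
    """Build a RAM policy document denying object writes under bucket/prefix."""
    return {
        "Version": "1",
        "Statement": [
            {
                "Effect": "Deny",
                "Action": ["oss:PutObject"],
                # OSS object resources take the form acs:oss:*:*:<bucket>/<key pattern>.
                "Resource": [f"acs:oss:*:*:{bucket}/{prefix}*"],
            }
        ],
    }


# Hypothetical names, for illustration only.
policy_json = json.dumps(
    deny_state_write_policy("example-tfstate", "env/prod/"), indent=2
)
```

Generating the document from code keeps the prefix in one place, so the state backend configuration and the deny rule cannot drift apart.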

Fix 4: a pre-apply hook that summarises destruction

| |

Any plan that deletes something requires DESTROY=yes in the env. You cannot type that by accident. This is the belt to the suspenders of prevent_destroy — it catches the case where the destruction is in a child module you forgot to lock down.
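The gate logic is small enough to sketch in Python. This version reads the JSON that terraform show -json produces for a saved plan, where each entry in resource_changes carries a change.actions list; a replace shows up as delete plus create, so checking for "delete" catches both destroys and force-replaces. The function names are illustrative, and this is a sketch of the idea rather than the hook described above.

```python
import json
import os
import sys


def destructive_changes(plan):
    """Addresses of resources whose planned actions include "delete"."""
    return [
        rc["address"]
        for rc in plan.get("resource_changes", [])
        if "delete" in rc["change"]["actions"]
    ]


def gate(plan, env):
    """Return (allowed, doomed). Destruction is allowed only with DESTROY=yes."""
    doomed = destructive_changes(plan)
    allowed = not doomed or env.get("DESTROY") == "yes"
    return allowed, doomed


def main(plan_stream=sys.stdin, env=os.environ):
    """Read plan JSON from a stream, list doomed resources, return an exit code."""
    allowed, doomed = gate(json.load(plan_stream), env)
    for address in doomed:
        print(f"will destroy: {address}", file=sys.stderr)
    return 0 if allowed else 1
```

In a pipeline this would sit between plan and apply: save the plan to a file, pipe terraform show -json for that file into a script that calls sys.exit(main()), and apply only on exit code 0. Had this been in place during the incident, the "1 to change" line would have been listed as a destroy of the RDS instance.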

Take all four. prevent_destroy alone would have stopped this particular outage, but each of the others covers a case it misses; together they make the failure mode very hard to reach.

Connecting compute to storage

The ECS instance from article 4 needs to actually reach this storage. Three pieces:

- Network: already done. The agent_runtime_sg_id from the VPC module is the source for the memory_rds_sg and vector_store_sg ingress rules.

- Credentials: the agent reads the DB password from KMS Secrets Manager via STS:

```python
from alibabacloud_kms20160120.client import Client as KmsClient
from alibabacloud_kms20160120.models import GetSecretValueRequest

# kms_client is a KmsClient already configured with the instance's STS credentials.
resp = kms_client.get_secret_value(GetSecretValueRequest(secret_name="agents-prod-rds-admin"))
db_password = resp.body.secret_data
```

- Endpoints: Terraform outputs them:

```hcl
output "rds_endpoint" {
  value = alicloud_db_instance.memory.connection_string
}
output "vector_endpoint" {
  value = alicloud_opensearch_app_group.vector.api_domain
}
output "artifacts_bucket" {
  value = alicloud_oss_bucket.artifacts.bucket
}
```

The agent reads these from environment variables that cloud-init sets from the Terraform outputs. No hardcoded endpoints, no manual config files, no human in the loop on rotation.
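On the agent side, reading those settings can be as simple as the sketch below. The environment variable names are assumptions; use whatever names your cloud-init template actually exports. The useful property is that a missing value fails at startup rather than at the first query.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class StorageConfig:
    rds_endpoint: str
    vector_endpoint: str
    artifacts_bucket: str


# Hypothetical variable names: field -> environment variable.
_ENV_VARS = {
    "rds_endpoint": "RDS_ENDPOINT",
    "vector_endpoint": "VECTOR_ENDPOINT",
    "artifacts_bucket": "ARTIFACTS_BUCKET",
}


def load_storage_config(env=None):
    """Build StorageConfig from the environment, failing fast on missing values."""
    env = os.environ if env is None else env
    missing = [var for var in _ENV_VARS.values() if not env.get(var)]
    if missing:
        raise RuntimeError(f"missing storage settings: {', '.join(missing)}")
    return StorageConfig(**{field: env[var] for field, var in _ENV_VARS.items()})
```

Passing a plain dict as env makes the loader easy to exercise in unit tests without touching the real environment.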

What it costs

Monthly, dev workspace, low traffic:

- RDS PostgreSQL (pg.n2.medium.1c, 100 GB ESSD): ~¥350/mo.

- OpenSearch vector (smallest spec): ~¥800/mo.

- OSS (10 GB Standard, lifecycle on): ~¥1.5/mo + traffic.

- KMS (covered in article 3): ~¥10/mo.

Roughly ¥1200/mo for the storage layer in dev. Prod with HA RDS, larger OpenSearch, more OSS, and the cross-region replica will be ¥3000–5000/mo. This is where the cost pressure starts being real — article 7 shows how to track and alert on it before it surprises you in the monthly bill review.

What’s Next

Article 6 builds the LLM gateway in front of the compute we provisioned in article 4 and the storage we just provisioned. That’s the place where API keys live, quotas get enforced, and per-agent cost gets attributed. By the end of article 6 you’ll have a complete agent-runnable stack — the last two articles wire observability and cost control over the top.
