Terraform for AI Agents (6): LLM Gateway and Secrets Management

Centralise LLM API access through one gateway: per-agent quotas, request logging, and zero secrets outside KMS. Terraform-provisioned API Gateway plus self-hosted LiteLLM on ECS, with DashScope/OpenAI/Anthropic keys rotating automatically through KMS Secrets Manager.

A pattern I see repeatedly in immature agent stacks: each agent has its own copy of OPENAI_API_KEY in its own .env file. Sometimes the same key, sometimes different ones, sometimes a colleague’s personal key from when they prototyped. When the bill arrives nobody can tell which agent caused which token spend, and when a key leaks (it always does) you’re playing whack-a-mole across a dozen .env files.

The real wake-up call hit me two years ago. A contractor finished his three-month engagement on a Friday, his laptop went home, and on the following Tuesday DashScope billing flagged 12 million tokens of qwen-max traffic from an IP we didn’t recognise. His personal API key — copy-pasted into a side project — was still sitting in our agent’s .env. Rotating it took six hours: three engineers, four repos, two CI pipelines, one panicked Slack thread. Never again.

This article addresses that pattern by building one LLM gateway that:

- Holds every provider key in KMS Secrets Manager (one bucket, one ACL, one rotation cadence)

- Authenticates agents via short-lived RAM-issued tokens — zero static AK on agent boxes

- Enforces per-agent QPM and daily token caps so a runaway loop costs ¥800/day, not your quarter

- Logs every request to SLS for forensics, cost attribution, and SOC-2 evidence

- Rotates keys without redeploying any agent — one PR, one apply, done

Two days of setup. Permanent operational dividend.

The shape

Agents on the left, providers on the right, gateway in the middle. Every agent’s HTTP call to “an LLM” actually goes to the gateway, which decides which provider to dispatch to, attaches the right key, enforces quotas, and logs the result.

You have two options:

- Aliyun API Gateway in front of a custom backend — most managed, easiest to wire quota plans, native RAM integration. Best when routing is “one model, one provider, just rate-limit me.”

- Self-hosted LiteLLM on ECS behind an ALB — most flexible, supports the long tail of providers (DeepSeek, Moonshot, Zhipu, your fine-tuned PAI endpoint), easier to extend with cost tracking and cross-provider fallback.

I use both, depending on the routing complexity. For a simple proxy with quotas, API Gateway is sufficient. For multi-provider routing with budget guards and circuit breakers — which most teams need within six months — LiteLLM on ECS is better. The rest of this article focuses on LiteLLM because it suits 80% of teams.

Step 1: store every key in KMS Secrets Manager

The first rule: provider keys never appear in .env files, in provider {} blocks, in agent code, or in tfstate plaintext. They live in KMS Secrets Manager and the gateway pulls them at startup via STS.

| |

The keys themselves come in via var.llm_keys — set with -var-file=secrets.auto.tfvars (gitignored) or TF_VAR_llm_keys='{...}' from a CI secret. They never live in your repository.

Cost note: KMS Secrets Manager bills around ¥0.4 per secret per month plus ¥0.03 per 10k API calls in Shanghai region. For four provider keys pulled twice an hour by two gateway boxes, the monthly bill is rounding error — under ¥10. The default service KMS key is free; only customer-managed CMKs cost ¥1/month each. Don’t let “KMS sounds expensive” be the reason you stay on .env files.
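The arithmetic behind that estimate can be checked in a few lines. This is only a sketch using the prices quoted above; the function name and parameters are illustrative, and the pull frequency is a parameter so you can plug in your own refresh interval.

```python
# Prices as quoted in the cost note above (Shanghai region).
SECRET_PRICE_CNY_PER_MONTH = 0.4   # per secret, per month
CALL_PRICE_CNY_PER_10K = 0.03      # per 10,000 API calls


def monthly_kms_cost(secrets: int, pulls_per_hour: int, boxes: int, days: int = 30) -> float:
    """Estimate the monthly Secrets Manager bill in CNY."""
    storage = secrets * SECRET_PRICE_CNY_PER_MONTH
    # Every box pulls every secret on every refresh.
    calls = secrets * pulls_per_hour * boxes * 24 * days
    api = calls / 10_000 * CALL_PRICE_CNY_PER_10K
    return round(storage + api, 2)


# Four provider keys, pulled twice an hour by two gateway boxes.
estimate = monthly_kms_cost(secrets=4, pulls_per_hour=2, boxes=2)
print(estimate)  # 1.63
```

Storage dominates: 11,520 API calls a month cost about ¥0.03, so even a much shorter refresh interval stays well under ¥10.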

Real-world tip: When you rotate a provider key, change secret_data and bump version_id. KMS keeps the old version active for the recovery window so in-flight requests don’t fail; new gateway pulls get the new version. Plan this in PR form so it’s auditable.

Step 2: a RAM role the gateway can assume

The gateway ECS or function needs permission to read these secrets — and only these secrets:

| |

Three key points:

- Resource-scoped policy. Only these secrets, not kms:GetSecretValue on *. If the gateway box is compromised, the attacker cannot pivot to other KMS secrets: billing keys, RDS passwords, OSS buckets all stay sealed.

- No long-lived AK. The role is assumed by the ECS instance via metadata service. Zero static credentials on disk, in env, or in cloud-init.

- kms:Decrypt is needed even just to read the secret because secrets are KMS-encrypted at rest. Forgetting this is the #1 reason your gateway boots and then 401s on every fetch.
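To make the resource scoping concrete, here is a sketch that builds such a policy document in Python. The ARN layout (acs:kms:region:account:secret/name and key/id), the secret names, the account ID, and the key ID are all assumptions for illustration; check them against your own account before using anything like this.

```python
import json


def secret_read_policy(region: str, account_id: str, secret_names: list, key_id: str) -> str:
    """Build a RAM policy JSON that can read only the named secrets."""
    # Assumed ARN format; verify against the RAM/KMS documentation.
    secret_arns = [f"acs:kms:{region}:{account_id}:secret/{name}" for name in secret_names]
    key_arn = f"acs:kms:{region}:{account_id}:key/{key_id}"
    policy = {
        "Version": "1",
        "Statement": [
            # Read access to the listed secrets only, never "*".
            {"Effect": "Allow", "Action": ["kms:GetSecretValue"], "Resource": secret_arns},
            # Decrypt on the one key that encrypts those secrets.
            {"Effect": "Allow", "Action": ["kms:Decrypt"], "Resource": [key_arn]},
        ],
    }
    return json.dumps(policy, indent=2)


# Hypothetical names and IDs, for illustration only.
document = secret_read_policy(
    region="cn-shanghai",
    account_id="1234567890123456",
    secret_names=["llm/dashscope", "llm/openai"],
    key_id="example-key-id",
)
print(document)
```

The point of generating the resource list from explicit names is that a wildcard can never slip in by accident.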

Step 3: deploy LiteLLM on ECS

LiteLLM is the easiest open-source LLM proxy I know. It uses the OpenAI API format on the frontend and translates to each provider’s format on the backend. Self-hosting it on ECS keeps things flexible.

| |

Two ecs.c7.large boxes — 2 vCPU, 4 GB each — comfortably handle 200+ requests per second of pure proxy traffic. LiteLLM is async I/O-bound; CPU rarely tops 30%. Don’t oversize. If traffic is bursty, put it in a scaling group and let CloudMonitor add nodes when CPU crosses 60% sustained.

gateway-init.sh does the boot:

| |

Each instance now runs the gateway on port 4000 with all provider keys loaded into the process env — never on disk. The ALB in front fans out:

| |

Agents now reach the gateway at http://<alb-id>.cn-shanghai.alb.aliyuncs.com/v1/chat/completions and never see a provider key. Internal-only ALB — no public IP, no listener on 443 facing the world. If an agent needs to call from outside the VPC, it goes through the bastion or CEN, never directly.
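From the agent's side, a call to the gateway looks like any OpenAI-format request. The sketch below only builds the request object so it can be inspected without network access; the function name, the key value, and the host are placeholders, and the bearer token is the per-agent key described in Step 4.

```python
import json
import urllib.request


def build_chat_request(gateway_base: str, virtual_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-format chat completion request aimed at the gateway."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        url=f"{gateway_base}/v1/chat/completions",
        data=body,
        headers={
            # The agent only ever holds its gateway key, never a provider key.
            "Authorization": f"Bearer {virtual_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder host and key; substitute your internal ALB address.
request = build_chat_request("http://gateway.internal", "sk-agent-example", "dashscope/qwen-max", "hello")
print(request.full_url)
```

Sending it is a matter of passing the object to urllib.request.urlopen from inside the VPC.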

Step 4: per-agent quotas

LiteLLM supports per-key quotas natively. The cleanest way to wire this through Terraform is to provision one LiteLLM “virtual key” per agent, each with its own QPM and token budget. Since LiteLLM stores these in its own database, you provision them via its API at apply time using a null_resource:

| |

I’m not in love with null_resource + local-exec; it’s the escape hatch for “the resource doesn’t exist in the provider yet.” But it works, and the alternative (writing a custom Terraform provider for LiteLLM) is more code than it’s worth for one team. If LiteLLM ever ships an official Terraform provider, swapping it in is a day’s work.

The output: each agent gets a distinct LITELLM_API_KEY env var that the cloud-init script in article 4 reads. Quota violations return 429 Too Many Requests, which agents must handle with exponential backoff — bake this into your shared HTTP client, don’t trust each agent author to remember.
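A shared client might handle 429s roughly like this. It is a minimal sketch, not LiteLLM-specific: the send callable, the attempt limit, and the delay values are assumptions, and it uses capped exponential backoff with full jitter so that many agents throttled at once do not retry in lockstep.

```python
import random
import time
from typing import Callable, Tuple


def call_with_backoff(
    send: Callable[[], Tuple[int, str]],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, str]:
    """Call send() until it returns a non-429 status or attempts run out."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        status, body = send()
        if status != 429:
            return status, body
        if attempt == max_attempts - 1:
            break  # out of attempts, return the last 429 to the caller
        # Full jitter: wait a random time up to the capped exponential delay.
        delay = min(max_delay, base_delay * 2 ** attempt)
        sleep(random.uniform(0, delay))
    return status, body
```

The sleep parameter is injectable so the retry logic can be unit-tested without waiting.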

A note on numbers. The schedule-agent ceiling at 100k tokens/day and ¥40/day might look low. It is — and intentionally so. A scheduling agent that suddenly spikes to 2M tokens is almost certainly stuck in a planning loop, and a hard cap is a far better error than a ¥3000 surprise on the monthly bill. Tune ceilings to “10x the agent’s last 30-day p99 daily usage” and revisit quarterly.
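The "10x p99" rule is easy to compute from daily totals. The sketch below assumes a nearest-rank percentile and a list of daily token counts pulled from your logs; both function names are illustrative. Note that with only 30 samples the nearest-rank p99 is simply the busiest day.

```python
def p99_nearest_rank(values: list) -> int:
    """Return the 99th percentile using the nearest-rank method."""
    if not values:
        raise ValueError("need at least one daily total")
    ordered = sorted(values)
    # ceil(0.99 * n) in integer arithmetic to avoid float rounding.
    rank = (99 * len(ordered) + 99) // 100
    return ordered[rank - 1]


def daily_token_ceiling(daily_totals: list, multiplier: int = 10) -> int:
    """Ceiling = multiplier x the p99 of recent daily usage."""
    return multiplier * p99_nearest_rank(daily_totals)


# Hypothetical 30 days of usage: 1,000 to 30,000 tokens per day.
usage = [1000 * day for day in range(1, 31)]
print(daily_token_ceiling(usage))  # 300000
```

Under this rule, an agent whose busiest recent day was 10,000 tokens would land on a 100k ceiling.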

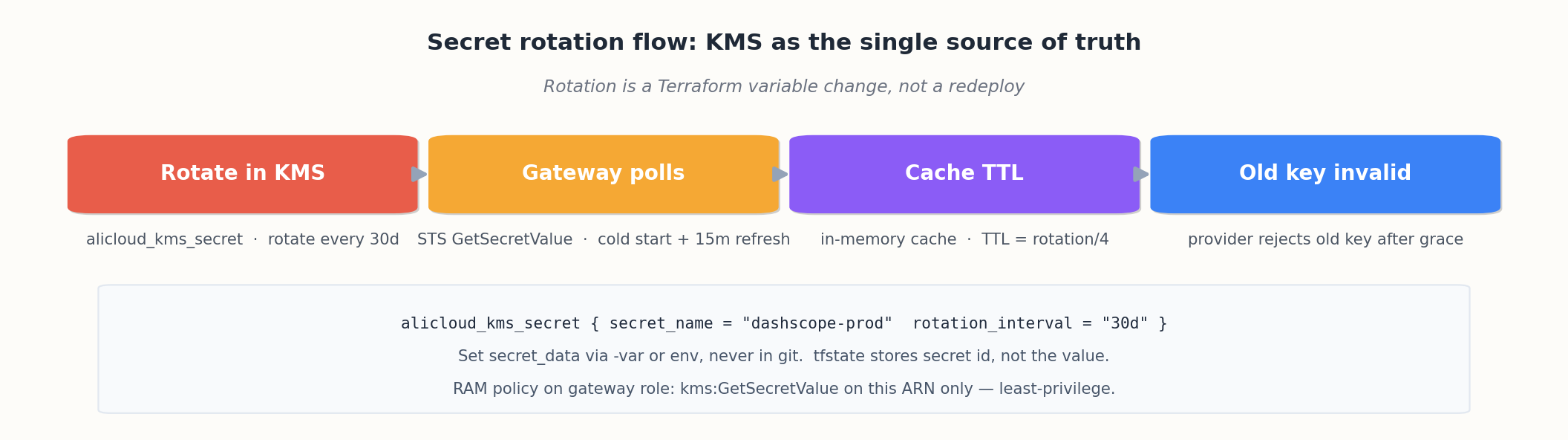

Step 5: secret rotation flow

The whole point of putting keys in KMS Secrets Manager is rotation:

The lifecycle:

- You change the secret_data in Terraform (or via the KMS API), bump version_id to v2

- KMS keeps v1 active for the rotation window (default 30 days)

- Gateway instances re-pull on cold start; existing instances keep using the cached value until their next refresh (every 15min, configured in gateway-init.sh)

- After 30 days, v1 is disabled; anyone still using it gets InvalidSecretVersion

- You confirm zero usage of v1 via SLS, then promote v2 and retire v1
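The "cached value until the next refresh" behaviour in the lifecycle above amounts to a small time-to-live cache. This sketch is not what gateway-init.sh does; it is an illustration in Python with a hypothetical fetch callable standing in for the KMS call, and the 900-second default mirrors the 15-minute refresh.

```python
import time
from typing import Callable, Dict, Tuple


class SecretCache:
    """Cache secret values and re-fetch them once the TTL has elapsed."""

    def __init__(
        self,
        fetch: Callable[[str], str],
        ttl_seconds: float = 900.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[float, str]] = {}

    def get(self, name: str) -> str:
        now = self._clock()
        cached = self._entries.get(name)
        if cached is not None and now - cached[0] < self._ttl:
            return cached[1]  # still fresh, no KMS call
        value = self._fetch(name)  # expired or missing: pull the current version
        self._entries[name] = (now, value)
        return value
```

A rotation therefore reaches every running instance within one TTL, which is why the old version only needs to stay valid for a short overlap in practice.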

For a team, codify this as a runbook and re-execute it quarterly even if nothing leaks. Keys that have lived longer than a quarter are by definition stale; treat staleness as a low-grade incident. The contractor story I opened with happened because nobody had rotated DashScope keys in 14 months. With the gateway in place, that scenario is far harder to repeat: no agent holds a provider key, and once a rotation starts, the 30-day window forces every consumer off the old version.

Step 6: how plaintext still leaks (and how to plug it)

Step 1 protects the secret at rest. There are at least three other places plaintext leaks if you’re not careful, and all three have bitten projects I’ve reviewed.

Leak 1: terraform.tfstate

alicloud_kms_secret.secret_data is in your tfstate, in plaintext, every time you apply. Even with sensitive = true on the variable, the value lives in state JSON. The mitigation is layered:

- OSS bucket KMS-encryption (article 2) — already done. Protects the state at rest.

- OSS bucket access policy: restrict oss:GetObject to the CI runner role only, never developers.

- Use the data source pattern instead of putting plaintext in HCL. When the secret is created out of band (e.g. by an HSM-rotation job or the KMS console), Terraform reads it but never authors it:

| |

The principle: Terraform should know the name of the secret, not the value. The runtime fetches the value from KMS via instance metadata. This is the single most important habit to build, and it inverts how most tutorials present secrets.

Leak 2: CI logs

terraform plan output marks sensitive values as (sensitive value) if sensitive = true is set on the variable. But only on the variable — not on resource attributes that derive from it. A common slip:

| |

Mark every output that derives from a sensitive value as sensitive = true:

| |

For tfvars files, add them to .gitignore and configure CI to fail if they’re committed:

| |
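One way to implement that CI check is a short script fed with the output of git ls-files. This is a sketch under assumptions: the patterns are illustrative and deliberately target secret-bearing files only, so that non-secret per-environment files such as env/dev.tfvars can still be committed.

```python
import fnmatch
from pathlib import PurePosixPath

# Assumed naming convention for files that carry secrets.
SECRET_PATTERNS = ("secrets*.tfvars", "*.auto.tfvars")


def committed_secret_files(tracked_paths, patterns=SECRET_PATTERNS):
    """Return tracked paths whose file name matches a secret pattern."""
    return [
        path
        for path in tracked_paths
        if any(fnmatch.fnmatch(PurePosixPath(path).name, pattern) for pattern in patterns)
    ]


# In CI, tracked would come from `git ls-files`; hard-coded here for illustration.
tracked = ["main.tf", "secrets.auto.tfvars", "env/dev.tfvars"]
offenders = committed_secret_files(tracked)
print(offenders)  # ['secrets.auto.tfvars']
```

In a pipeline, exit non-zero when the returned list is non-empty so the job fails before plan runs.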

Leak 3: provider debug logs

TF_LOG=DEBUG terraform apply is the quickest way to debug a provider issue. It is also the quickest way to dump every API request and response, including request bodies that contain secrets, to your terminal scrollback. I have seen this end up in Slack screenshots twice, at two different companies.

When you must use TF_LOG, redirect to a file with restrictive permissions, never paste:

| |

Better, use TF_LOG_CORE=DEBUG (Terraform-only) which usually isolates the issue without including provider request bodies.
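If you wrap Terraform in a script, the "file with restrictive permissions" advice can be enforced before Terraform writes a single line. The helper below is a sketch for POSIX systems: the function name is hypothetical, while TF_LOG and TF_LOG_PATH are Terraform's own environment variables.

```python
import os


def debug_env(log_path: str, base_env=None) -> dict:
    """Return an environment that sends Terraform debug logs to an owner-only file."""
    # Create or truncate the file with mode 0600 before Terraform touches it.
    fd = os.open(log_path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    try:
        # Tighten permissions even if the file already existed with a looser mode.
        os.fchmod(fd, 0o600)
    finally:
        os.close(fd)
    env = dict(os.environ if base_env is None else base_env)
    env["TF_LOG"] = "DEBUG"
    env["TF_LOG_PATH"] = log_path
    return env
```

Pass the result as env= to subprocess.run(["terraform", "apply"], ...) and delete the log once the issue is diagnosed.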

Step 7: plan-review-apply gates in CI

The null_resource for LiteLLM key generation is fine for one engineer. For a team with multiple agents, multiple environments, and shared on-call rotation, you want a structured CI pipeline that humans review at the diff level. Here’s the GitHub Actions workflow I run:

| |

Three things this enforces that humans skim past:

- terraform fmt -check rejects un-formatted HCL. No more “did you run fmt?” comments cluttering review.

- tfsec runs Checkov-style scans: it flags public buckets, unencrypted volumes, overly broad SG rules.

- The plan posted as a PR comment means review reads the actual plan output, not “trust me bro, this looks fine.”

The matching apply workflow:

| |

GitHub’s environment mechanism gates prod applies behind required reviewer approval — a separate human (often the on-call) clicks “approve” before apply runs. Apply uses a different RAM role than plan: plan only has read; apply has write. Two-key launch.

This pipeline has caught five real incidents in the year I’ve run it: an accidental prevent_destroy = false flip, a CIDR overlap that would have broken VPC peering, a forgotten module version pin, an ignore_changes that masked real config drift, and a security group rule with 0.0.0.0/0 that snuck into a PR. Each was a comment on the PR, fixed before merge.

When to graduate to Atlantis

GitHub Actions is the right starting point. Once you have more than five engineers running Terraform, the operational overhead of per-PR plan comments, lock contention, and manual approvals starts to creak. Atlantis is the next step: a self-hosted webhook server that listens to PRs, runs terraform plan automatically, comments the plan, and applies on atlantis apply from authorised users.

Compared to Actions:

- Plans run inside your VPC — no need to give external runners access to the OSS state bucket.

- One persistent server holds the lock for sequential applies — no race conditions across concurrent PRs.

- Per-project config (atlantis.yaml) lets you scope which dirs are managed, with per-dir approval policies.

Provisioning Atlantis itself is a vpc-baseline + compute exercise — one ECS, one ALB, one RAM role. After two months on a 10-engineer team, the throughput improvement was concrete: plan-to-apply cycle dropped from 25 minutes (Actions queue + manual approval) to 8 minutes.

I don’t recommend Atlantis below 5 engineers — the ops cost of running it isn’t worth the throughput. Above 5, it pays for itself within a quarter.

Real-world tip: Whichever pipeline you pick, one master repo, many envs. Don’t fork your prod repo from dev. The single repo with env/dev.tfvars, env/staging.tfvars, env/prod.tfvars keeps the codepath identical across environments, which is the whole point of IaC. A forked-per-env layout is an anti-pattern that erodes the property you came for.

Bailian / DashScope specifically

DashScope is just another OpenAI-compatible endpoint in LiteLLM’s eyes. The model names are dashscope/qwen-max, dashscope/qwen-plus, etc. The API key is what you generate from the DashScope console.

If you want first-class Aliyun-native treatment (so you can use STS instead of an API key), DashScope supports STS-based auth on some endpoints — but in 2026 the API-key path is still the standard, and rotating the key via KMS as above is the right operational pattern. When STS becomes the default (the roadmap suggests 2027), this article becomes one config flip easier; the rotation discipline remains.

Real-world tip: Set a master_key on LiteLLM (the LITELLM_MASTER_KEY env var). Without it, anyone who can reach the gateway can issue themselves an API key. With it, only the master can mint subordinate keys, and the master never leaves Terraform’s variable space.

What this gives you

After this article you have:

- One URL where every agent calls “the LLM”

- One place to add a new model provider (edit litellm_config, terraform apply)

- One place to rotate any provider key (edit var.llm_keys, terraform apply)

- One log stream (next article) showing every request, latency, token count, model, and agent

- Hard QPM and budget caps per agent — a runaway loop costs at most ¥800/day, not your entire month

- A CI pipeline that catches the regressions humans skim past, with two-key launch into prod

- Three concrete plaintext leak vectors closed off, not just the obvious one

The gateway is a strategic asset. Every team I’ve shipped one for has thanked me within a month — usually the first time someone’s API key gets accidentally checked into git and they realise rotating it is a one-line PR instead of the six-hour fire drill I lived through.

What’s Next

Article 7 is observability and cost control: SLS for logs, ARMS for traces, CloudMonitor for metrics, the budget alarm that pings DingTalk when daily LLM spend crosses a threshold, and the SLS-driven cost dashboard that lets you see “which agent is burning my budget.”

Article 8 is the end-to-end walkthrough where everything in articles 2-7 lands as one terraform apply.
