Time Series Forecasting (4): Attention Mechanisms — Direct Long-Range Dependencies

Self-attention, multi-head attention, and positional encoding for time series. Step-by-step math, PyTorch implementations, and visualization techniques for interpretable forecasting.

RNNs and LSTMs handled “too many time steps” but left a subtler limitation in place: information has to travel step by step. For step 100 to see what happened at step 1, the signal has to ride the hidden state through 99 intermediate stops — and each stop attenuates the signal a little and squashes it through a nonlinearity. Even with LSTM’s “highway” cell state, it’s still a single lane in a single direction.

Attention’s core idea is almost embarrassingly simple: why not let any two time steps talk directly? Instead of step 100 hearing about step 1 secondhand through 99 intermediaries, compute a direct “how much should step 100 care about step 1?” weight, then read step 1’s content with that weight. The distance between any two points collapses from 99 steps to 1 — and gradients no longer need to crawl through the whole sequence to update distant weights.

It sounds like brute force (every pair of steps now carries a relationship, blowing complexity from O(n) to O(n²)) but the payoff is enormous: long-range dependencies become trivial, training parallelizes (RNNs are forced to run step-by-step), and the attention weights themselves become a kind of self-explanation that you can visualize. This chapter starts where the field actually started — bolting attention onto an LSTM encoder/decoder — and works up to the full Query/Key/Value formulation, which is the doorway into the next chapter on Transformers. The closing case study uses attention on a stock-price forecaster and shows the weight heatmap so you can see exactly which days the model is reading.

What You Will Learn

- Why recurrent models hit a wall on long-range dependencies, and how attention removes it.

- The Query / Key / Value mechanism, scaled dot-product attention, and the role of $1/\sqrt{d_k}$.

- Two classic scoring functions — Bahdanau (additive) and Luong (multiplicative).

- How to wire attention into an LSTM encoder/decoder for time series.

- Multi-head attention specialised for time — different heads for recency, period, anomaly.

- The $O(n^2)$ memory wall and how sparse / linear attention bypass it.

- A worked stock-prediction case with attention-weight overlays.

Prerequisites: RNN/LSTM/GRU intuition (Parts 2-3), basic linear algebra, PyTorch.

Why attention? The bottleneck of recurrence

In a length-$n$ recurrent model, the path between two time steps that are $k$ apart is $O(k)$ steps long. Every step squeezes information through a single hidden vector, and every step risks attenuating the gradient.

Real time series rarely cooperate with that geometry:

- An ECG anomaly minutes ago matters more than the last 200 samples of baseline.

- Today’s electricity load looks most like the same hour, last Wednesday.

- A stock price reacts to an earnings event that happened weeks ago.

Attention proposes a radically different geometry: every step has a direct, learned link to every other step. The path length between any two positions becomes $O(1)$, and the link strength (the attention weight) is itself interpretable.

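Scaled dot-product attention from first principles

Given an input window $X \in \mathbb{R}^{n \times d}$ ($n$ time steps, $d$ features per step), attention starts from three learned linear projections:

$$Q = X W^Q, \qquad K = X W^K, \qquad V = X W^V,$$

with $W^Q, W^K \in \mathbb{R}^{d \times d_k}$ and $W^V \in \mathbb{R}^{d \times d_v}$.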

- Query $Q$ — “what is this step looking for?”

- Key $K$ — “what does this step advertise?”

- Value $V$ — “what does this step actually carry?”
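Combining the three projections gives the standard scaled dot-product formula from Vaswani et al. (see References). The row vectors $q_i$ and $k_j$ denote row $i$ of $Q$ and row $j$ of $K$; the matrix name $A$ is introduced here only for convenience:

$$\text{Attention}(Q, K, V) = A V, \qquad A = \text{softmax}\!\left(\frac{Q K^\top}{\sqrt{d_k}}\right), \qquad A_{ij} = \frac{\exp\!\left(q_i^\top k_j / \sqrt{d_k}\right)}{\sum_{m=1}^{n} \exp\!\left(q_i^\top k_m / \sqrt{d_k}\right)}.$$

Row $i$ of $A$ holds the weights that step $i$ places on every step $j$ and sums to $1$, so the output for step $i$ is a weighted average of the value rows.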

Why divide by $\sqrt{d_k}$?

If the entries of $Q$ and $K$ are i.i.d. with zero mean and variance $1$, then each dot product $q_i^\top k_j$ is a sum of $d_k$ independent terms and has variance $d_k$. As $d_k$ grows, the softmax inputs become large in magnitude and the softmax saturates toward a one-hot output, where its gradient is close to zero for every position. Dividing by $\sqrt{d_k}$ rescales the variance back to $1$ and keeps gradients healthy.
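The variance argument is easy to check numerically. The sketch below is only an illustration under the stated i.i.d. unit-variance assumption: it draws random queries and keys, measures the variance of their dot products, and reports how peaked the softmax becomes with and without the scale factor. The function names and the choice of 16 keys are hypothetical.

```python
import torch

torch.manual_seed(0)

def dot_product_stats(d_k: int, n_samples: int = 20000):
    """Empirical variance of q.k with and without the 1/sqrt(d_k) scale."""
    q = torch.randn(n_samples, d_k)   # zero-mean, unit-variance entries
    k = torch.randn(n_samples, d_k)
    raw = (q * k).sum(dim=-1)         # one dot product per sample
    scaled = raw / d_k ** 0.5
    return raw.var().item(), scaled.var().item()

def max_softmax_weight(d_k: int, n_keys: int = 16, scale: bool = True) -> float:
    """Average of the largest softmax weight a query puts on n_keys random keys."""
    q = torch.randn(1000, 1, d_k)
    k = torch.randn(1000, n_keys, d_k)
    scores = (q * k).sum(dim=-1)      # (1000, n_keys)
    if scale:
        scores = scores / d_k ** 0.5
    return torch.softmax(scores, dim=-1).max(dim=-1).values.mean().item()

for d_k in (4, 64, 256):
    raw_var, scaled_var = dot_product_stats(d_k)
    print(f"d_k={d_k:4d}  var(raw)={raw_var:7.1f}  var(scaled)={scaled_var:.2f}  "
          f"peak(raw)={max_softmax_weight(d_k, scale=False):.2f}  "
          f"peak(scaled)={max_softmax_weight(d_k, scale=True):.2f}")
```

A peak weight close to 1 means the softmax is nearly one-hot, which is the saturated regime the scale factor is meant to avoid.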

A minimal implementation

The whole mechanism is two matrix multiplications surrounding a softmax. The expressive power lives in the learned projections $W^Q, W^K, W^V$.
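A minimal sketch of that computation in PyTorch follows. The function name, the boolean mask convention (`True` means "hide this pair"), and the random projection matrices in the usage lines are assumptions made for illustration, not a listing from the original chapter.

```python
import math
import torch

def scaled_dot_product_attention(q, k, v, mask=None):
    """q: (..., n_q, d_k), k: (..., n_k, d_k), v: (..., n_k, d_v).
    mask: optional boolean tensor broadcastable to (..., n_q, n_k);
    True marks query/key pairs that must not attend."""
    d_k = q.size(-1)
    scores = q @ k.transpose(-2, -1) / math.sqrt(d_k)   # first matmul: (..., n_q, n_k)
    if mask is not None:
        scores = scores.masked_fill(mask, float("-inf"))
    weights = torch.softmax(scores, dim=-1)              # each row sums to 1
    return weights @ v, weights                          # second matmul: (..., n_q, d_v)

# Usage on a batch of two 12-step windows with 8 features per step.
torch.manual_seed(0)
n, d, d_k, d_v = 12, 8, 16, 16
x = torch.randn(2, n, d)
w_q, w_k, w_v = torch.randn(d, d_k), torch.randn(d, d_k), torch.randn(d, d_v)
out, attn = scaled_dot_product_attention(x @ w_q, x @ w_k, x @ w_v)
print(out.shape, attn.shape)
```

Returning the weights alongside the output is a design choice that makes later visualisation straightforward.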

Bahdanau vs Luong: two classic scoring functions

Before the Transformer, Bahdanau et al. (2015) introduced additive attention for sequence-to-sequence translation, and Luong et al. (2015) followed with multiplicative (dot-product) variants. Both are still useful when you wire attention into an RNN.

| Property | Bahdanau (additive) | Luong (multiplicative) |

|---|---|---|

| Score | $v^\top \tanh(W_1 h_i + W_2 s_{t-1})$ | $s_t^\top W h_i$ |

| Cost per pair | One MLP forward | One dot product |

| Parametrisation | $v, W_1, W_2$ | $W$ (often identity) |

| Best when | Query and key live in different spaces | $Q$ and $K$ share a space |

| Modern usage | Rare in pure Transformers | Standard (with $1/\sqrt{d_k}$ ) |

Both produce a vector of pre-softmax scores, both finish with softmax + weighted sum. The Transformer simply chose the cheaper one and added the scale factor.
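The two score functions in the table can be sketched side by side. This is an illustrative version, assuming encoder states `enc_states` of shape `(B, n, enc_dim)` and a single decoder state `dec_state` of shape `(B, dec_dim)`; the class names, argument names, and dimensions are hypothetical.

```python
import torch
import torch.nn as nn

class AdditiveScore(nn.Module):
    """Bahdanau-style score: v^T tanh(W1 h_i + W2 s)."""
    def __init__(self, enc_dim: int, dec_dim: int, attn_dim: int):
        super().__init__()
        self.w1 = nn.Linear(enc_dim, attn_dim, bias=False)
        self.w2 = nn.Linear(dec_dim, attn_dim, bias=False)
        self.v = nn.Linear(attn_dim, 1, bias=False)

    def forward(self, enc_states, dec_state):
        hidden = torch.tanh(self.w1(enc_states) + self.w2(dec_state).unsqueeze(1))
        return self.v(hidden).squeeze(-1)                  # (B, n)

class MultiplicativeScore(nn.Module):
    """Luong-style 'general' score: s^T W h_i."""
    def __init__(self, enc_dim: int, dec_dim: int):
        super().__init__()
        self.w = nn.Linear(enc_dim, dec_dim, bias=False)

    def forward(self, enc_states, dec_state):
        # (B, n, dec_dim) @ (B, dec_dim, 1) -> (B, n)
        return torch.bmm(self.w(enc_states), dec_state.unsqueeze(-1)).squeeze(-1)

def attend(scores, enc_states):
    """Shared final step: softmax over time, then a weighted sum of states."""
    weights = torch.softmax(scores, dim=-1)                # (B, n)
    context = torch.bmm(weights.unsqueeze(1), enc_states)  # (B, 1, enc_dim)
    return context.squeeze(1), weights

torch.manual_seed(0)
enc_states, dec_state = torch.randn(3, 12, 32), torch.randn(3, 16)
for scorer in (AdditiveScore(32, 16, 24), MultiplicativeScore(32, 16)):
    context, weights = attend(scorer(enc_states, dec_state), enc_states)
    print(type(scorer).__name__, context.shape, weights.shape)
```

Only the scoring module differs; `attend` is identical for both, which mirrors the point that the two variants share the softmax and weighted-sum steps.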

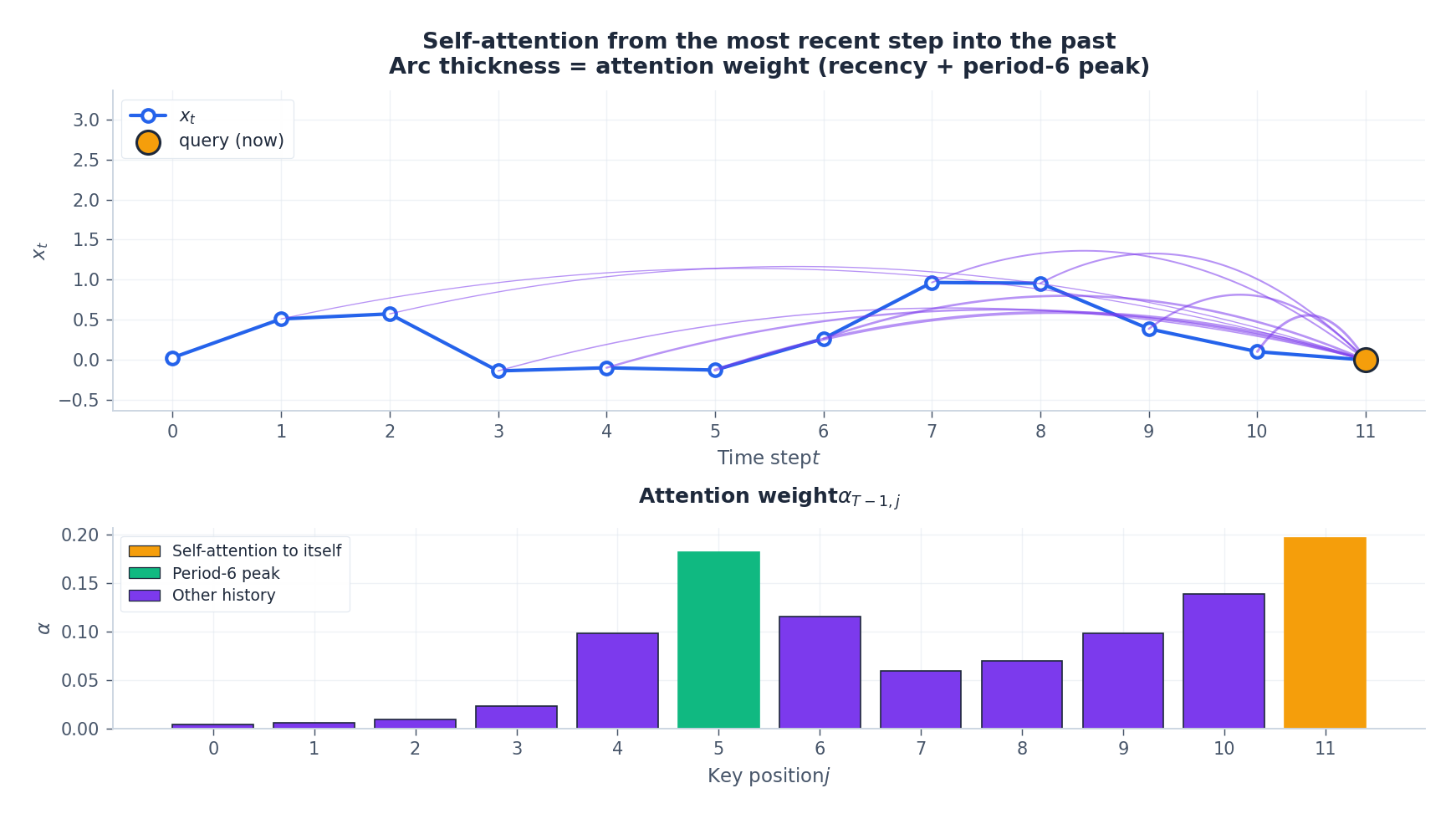

Self-attention applied to a time series

In the seq2seq world, queries come from the decoder and keys/values come from the encoder, so two different sequences are involved. Self-attention drops that distinction: the same sequence supplies $Q$, $K$, and $V$. Each step looks at every step in the same window, itself included.

For time series this is exactly what we want. Suppose we want to forecast the next value given a 12-step window. The attention weights from “now” back into the past tell us which historical step the model is leaning on.

Causal masking

For forecasting we must prevent step $i$ from looking at the future. The standard fix is a causal mask: a matrix added to the scores before the softmax, with $0$ on and below the diagonal and $-\infty$ strictly above it, so the softmax assigns zero weight to every future step.

Inside the attention layer, this mask is the main thing that separates a forecasting Transformer from a sequence-classification Transformer.
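A causal mask takes only a few lines in PyTorch. The sketch below uses a boolean mask with `masked_fill`, which is equivalent to adding $-\infty$ above the diagonal; the helper name `causal_mask` is hypothetical.

```python
import torch

def causal_mask(n: int) -> torch.Tensor:
    """Boolean (n, n) mask; True strictly above the diagonal marks future steps."""
    return torch.triu(torch.ones(n, n, dtype=torch.bool), diagonal=1)

torch.manual_seed(0)
n = 5
scores = torch.randn(n, n)                                  # raw query-key scores
masked = scores.masked_fill(causal_mask(n), float("-inf"))  # hide the future
weights = torch.softmax(masked, dim=-1)                     # row i covers steps 0..i only
print(weights)
```

Every row still sums to 1 because the diagonal is never masked, so each step can always attend to itself.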

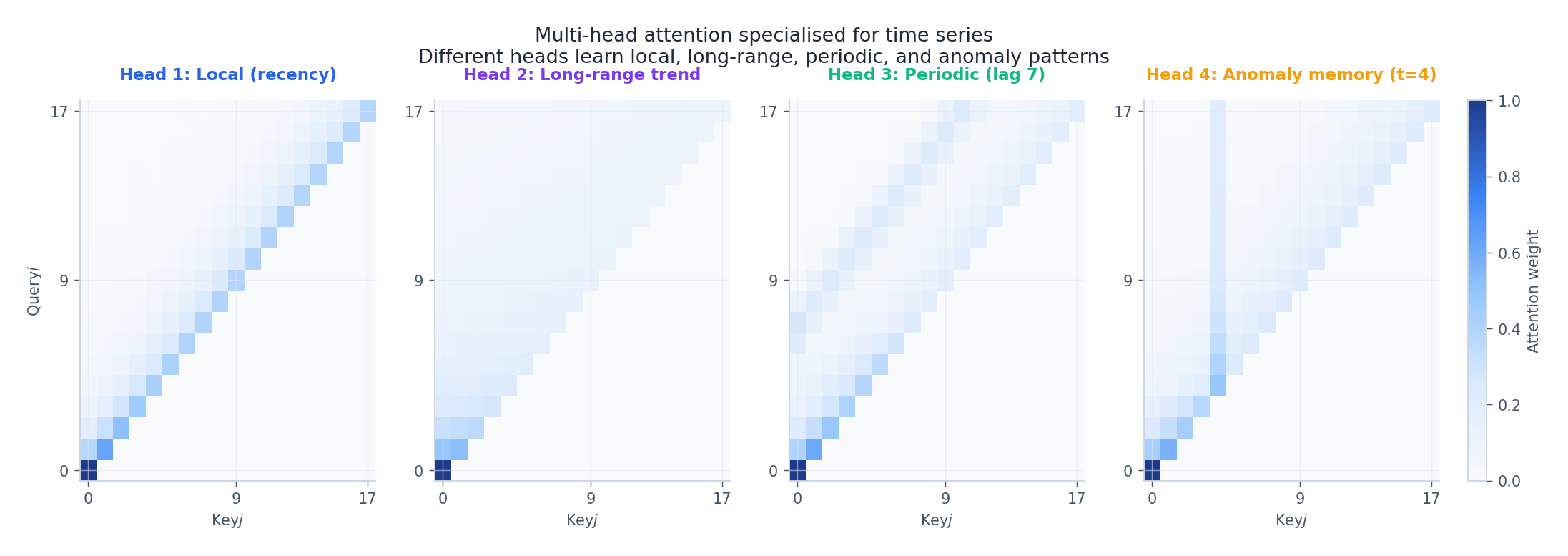

Multi-head attention, specialised for time

$$ \text{MultiHead}(X) = [\text{head}_1; \dots; \text{head}_h] \, W^O, \qquad \text{head}_j = \text{Attention}(X W^{Q}_j, X W^{K}_j, X W^{V}_j). $$

Each head has its own $W^Q_j, W^K_j, W^V_j \in \mathbb{R}^{d \times (d/h)}$ and is free to specialise. After training on time series, heads often fall into roughly four families (a tendency worth checking on your own model, not a guarantee):

| Head | What it learns | Why it matters |

|---|---|---|

| Local | Sharp diagonal | Short-term momentum |

| Long-range | Diffuse triangle | Slow drift, regime |

| Periodic | Off-diagonal stripes | Daily / weekly cycles |

| Anomaly | Vertical column | “Remember the spike at $t=k$ ” |

PyTorch implementation:
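A minimal sketch of a multi-head self-attention module matching the formula above. Stacking all per-head projections into a single fused `nn.Linear` and using a causal mask by default are choices made for this illustration, and the class and argument names are hypothetical.

```python
import math
import torch
import torch.nn as nn

class MultiHeadSelfAttention(nn.Module):
    def __init__(self, d_model: int, n_heads: int, dropout: float = 0.0):
        super().__init__()
        assert d_model % n_heads == 0, "d_model must be divisible by n_heads"
        self.n_heads, self.d_head = n_heads, d_model // n_heads
        self.qkv = nn.Linear(d_model, 3 * d_model)   # all W^Q_j, W^K_j, W^V_j stacked
        self.out = nn.Linear(d_model, d_model)       # W^O
        self.drop = nn.Dropout(dropout)

    def forward(self, x, causal: bool = True):
        b, n, _ = x.shape                            # x: (batch, steps, d_model)
        qkv = self.qkv(x).view(b, n, 3, self.n_heads, self.d_head)
        q, k, v = qkv.permute(2, 0, 3, 1, 4).unbind(0)   # each: (b, heads, n, d_head)
        scores = q @ k.transpose(-2, -1) / math.sqrt(self.d_head)
        if causal:
            future = torch.triu(
                torch.ones(n, n, dtype=torch.bool, device=x.device), diagonal=1)
            scores = scores.masked_fill(future, float("-inf"))
        weights = torch.softmax(scores, dim=-1)      # (b, heads, n, n)
        ctx = self.drop(weights) @ v                 # (b, heads, n, d_head)
        ctx = ctx.transpose(1, 2).reshape(b, n, -1)  # concatenate the heads
        return self.out(ctx), weights

torch.manual_seed(0)
layer = MultiHeadSelfAttention(d_model=64, n_heads=4).eval()
y, attn = layer(torch.randn(2, 12, 64))
print(y.shape, attn.shape)
```

The returned `attn` tensor holds one `(n, n)` map per head, which is what you would plot to look for the head families in the table.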


How many heads? For $d_\text{model}$ between 64 and 128, four heads is a sensible starting point. If you visualise heads after training and several are near-identical, reduce. If a single head is trying to encode multiple distinct patterns, increase.

Positional encoding: putting time back in

Self-attention on its own is permutation-equivariant: shuffling the input steps shuffles the output steps in exactly the same way, so nothing in the layer knows which step came first. For time series, that throws away the most important variable in the dataset. We must inject position explicitly.

Sinusoidal encoding

$$ PE_{(p, 2i)} = \sin\!\left(\frac{p}{10000^{2i/d}}\right), \qquad PE_{(p, 2i+1)} = \cos\!\left(\frac{p}{10000^{2i/d}}\right). $$

Here $p$ is the position and $i$ indexes the sine/cosine pair. Why this exact form?

- Boundedness: every entry sits in $[-1, 1]$, regardless of $p$.

- Linear shift-equivariance: $PE_{p+\Delta}$ is a fixed linear function of $PE_p$ (a rotation of each sine/cosine pair by an angle that depends only on $\Delta$), so the model can learn relative offsets like “look 7 steps back” with a single linear projection.

- Multi-scale: low-index dimensions oscillate quickly (fine-grained position), while high-index dimensions oscillate slowly (long-term position).
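The formula and the shift property can both be checked in a few lines. This is a sketch that assumes an even `d_model`; `sinusoidal_encoding` and `shift_encoding` are hypothetical helper names, and `shift_encoding` applies the per-pair rotation implied by the angle-addition identities.

```python
import math
import torch

def _frequencies(d_model: int) -> torch.Tensor:
    # 1 / 10000^(2i/d) for i = 0 .. d/2 - 1
    return torch.exp(-math.log(10000.0)
                     * torch.arange(0, d_model, 2, dtype=torch.float32) / d_model)

def sinusoidal_encoding(n_positions: int, d_model: int) -> torch.Tensor:
    """(n_positions, d_model) table; even columns are sines, odd columns cosines."""
    position = torch.arange(n_positions, dtype=torch.float32).unsqueeze(1)  # (n, 1)
    angles = position * _frequencies(d_model)                                # (n, d/2)
    pe = torch.zeros(n_positions, d_model)
    pe[:, 0::2] = torch.sin(angles)
    pe[:, 1::2] = torch.cos(angles)
    return pe

def shift_encoding(pe: torch.Tensor, delta: int) -> torch.Tensor:
    """Map PE_p to PE_{p+delta} using only a fixed linear map (a rotation per pair)."""
    freq = _frequencies(pe.size(1))
    sin_d, cos_d = torch.sin(delta * freq), torch.cos(delta * freq)
    out = torch.empty_like(pe)
    out[:, 0::2] = pe[:, 0::2] * cos_d + pe[:, 1::2] * sin_d   # sin(a + b)
    out[:, 1::2] = pe[:, 1::2] * cos_d - pe[:, 0::2] * sin_d   # cos(a + b)
    return out

pe = sinusoidal_encoding(64, 16)
shifted = shift_encoding(pe[:-7], 7)
print(torch.allclose(shifted, pe[7:], atol=1e-4))
```

The rotation in `shift_encoding` depends on `delta` but not on the position, which is the sense in which "look 7 steps back" is a single linear map.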

Time-aware encoding for irregular sampling

When samples are not equally spaced (sensor data, trades), feed the actual timestamp difference rather than the index. A common pattern:
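One way to do this, sketched under assumptions: keep the sinusoidal form but drive it with elapsed time since the start of the window instead of the step index. The module name and the `time_scale` parameter (how many time units count as one "step") are hypothetical, and `d_model` is assumed even.

```python
import math
import torch
import torch.nn as nn

class TimeAwareEncoding(nn.Module):
    """Sinusoidal encoding driven by elapsed time rather than by step index."""
    def __init__(self, d_model: int, time_scale: float = 1.0):
        super().__init__()
        assert d_model % 2 == 0, "this sketch assumes an even d_model"
        freq = torch.exp(-math.log(10000.0)
                         * torch.arange(0, d_model, 2, dtype=torch.float32) / d_model)
        self.register_buffer("freq", freq)
        self.d_model = d_model
        self.time_scale = time_scale   # hypothetical knob: time units per "step"

    def forward(self, timestamps: torch.Tensor) -> torch.Tensor:
        # timestamps: (B, n), in the same unit as time_scale
        elapsed = (timestamps - timestamps[:, :1]) / self.time_scale
        angles = elapsed.unsqueeze(-1) * self.freq                  # (B, n, d_model/2)
        pe = torch.zeros(*timestamps.shape, self.d_model, device=timestamps.device)
        pe[..., 0::2] = torch.sin(angles)
        pe[..., 1::2] = torch.cos(angles)
        return pe

enc = TimeAwareEncoding(d_model=8)
irregular = torch.tensor([[0.0, 1.0, 5.0, 5.5, 9.0]])   # e.g. seconds between ticks
print(enc(irregular).shape)
```

With evenly spaced timestamps and `time_scale=1.0` this reduces to the ordinary sinusoidal table, so regular sampling is a special case.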


This generalises sinusoidal PE to arbitrary time intervals — the same code handles 1 Hz IoT data, irregular trade ticks, and missing samples uniformly.

Attention + LSTM: the practical hybrid

Pure Transformers shine on long sequences but require a lot of data. For windows in the 50-500 step range, a hybrid is often the strongest baseline: an LSTM extracts local temporal features cheaply, and attention then chooses which encoder state matters at each forecast step.
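A simplified sketch of the hybrid. To stay short it uses a single query (the final LSTM state) and predicts the whole horizon in one shot rather than running a step-by-step decoder; the class name, the Luong-style scoring layer, and the default sizes are assumptions made for illustration.

```python
import torch
import torch.nn as nn

class LSTMAttentionForecaster(nn.Module):
    def __init__(self, n_features: int, hidden: int = 64, horizon: int = 1):
        super().__init__()
        self.lstm = nn.LSTM(n_features, hidden, batch_first=True)
        self.query = nn.Linear(hidden, hidden, bias=False)   # Luong-style "general" score
        self.head = nn.Linear(2 * hidden, horizon)

    def forward(self, x):
        # x: (B, n, n_features)
        states, (h_n, _) = self.lstm(x)                # states: (B, n, hidden)
        last = h_n[-1]                                 # (B, hidden): summary of "now"
        scores = torch.bmm(states, self.query(last).unsqueeze(-1)).squeeze(-1)
        scores = scores / states.size(-1) ** 0.5       # same scaling idea as 1/sqrt(d_k)
        weights = torch.softmax(scores, dim=-1)        # (B, n): weight on each past step
        context = torch.bmm(weights.unsqueeze(1), states).squeeze(1)   # (B, hidden)
        forecast = self.head(torch.cat([context, last], dim=-1))       # (B, horizon)
        return forecast, weights

torch.manual_seed(0)
model = LSTMAttentionForecaster(n_features=3, hidden=16, horizon=10)
forecast, weights = model(torch.randn(4, 60, 3))
print(forecast.shape, weights.shape)
```

The `weights` tensor is what you would overlay on the input window to see which past steps the forecast leans on.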

Empirically, this architecture and its variants (for example DA-RNN, the dual-stage attention RNN of Qin et al.) make strong baselines, especially when the horizon is short and data is limited.

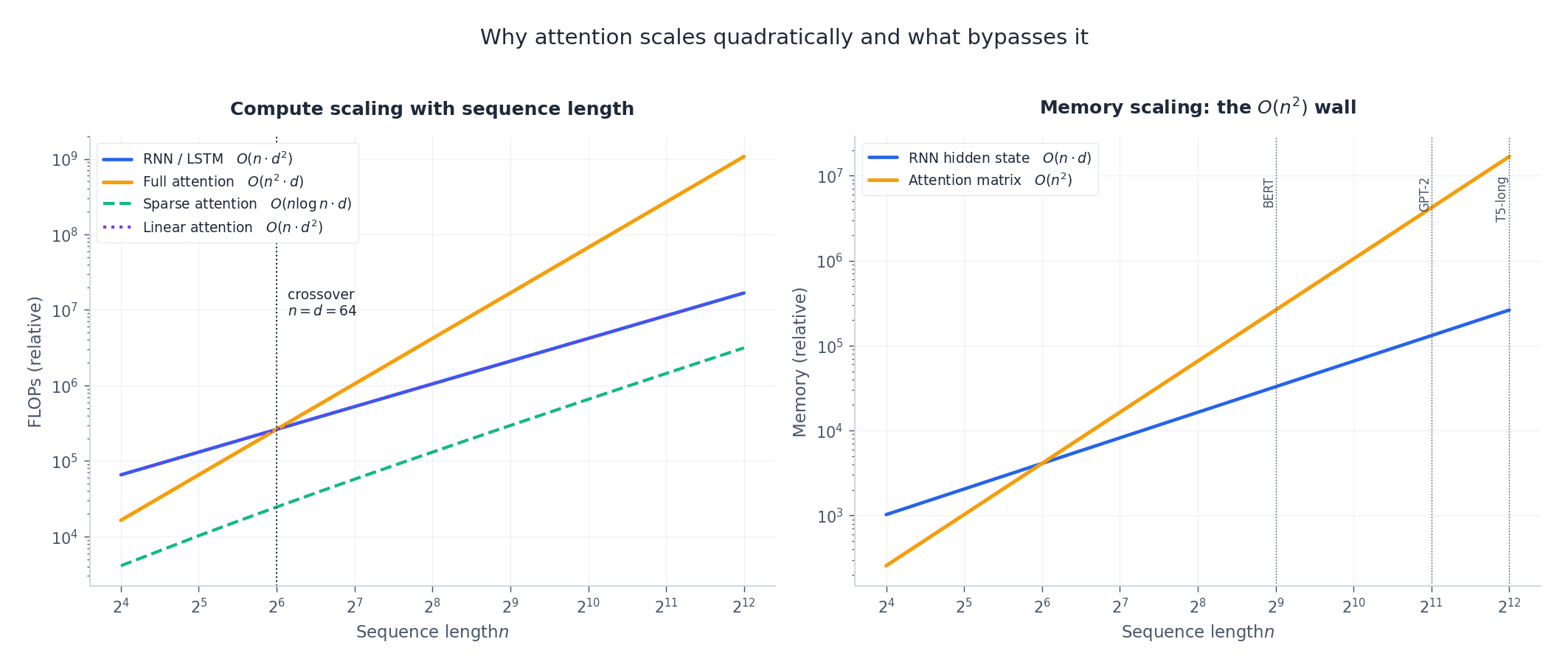

The $O(n^2)$ wall and how to escape it

The attention matrix has $n^2$ entries, and a standard implementation computes and stores every one of them. For a 4096-step window in float32, that is $4096^2 \times 4$ bytes = 64 MiB per head, per layer, per example. The wall is real.
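The arithmetic is worth keeping as a helper when sizing a model. The sketch below counts only the attention matrices themselves (not activations, gradients, or optimizer state); the function name and the example configuration of 4 heads, 2 layers, and batch size 32 are illustrative assumptions.

```python
def attention_matrix_bytes(n_steps: int, n_heads: int = 1, n_layers: int = 1,
                           batch_size: int = 1, bytes_per_value: int = 4) -> int:
    """Bytes needed to store every n x n attention matrix (float32 by default)."""
    return n_steps * n_steps * bytes_per_value * n_heads * n_layers * batch_size

MIB, GIB = 1024 ** 2, 1024 ** 3

# One head, one layer, one example at n = 4096.
print(attention_matrix_bytes(4096) / MIB)                                       # 64.0
# A small model at the same window length: 4 heads, 2 layers, batch of 32.
print(attention_matrix_bytes(4096, n_heads=4, n_layers=2, batch_size=32) / GIB)  # 16.0
```

Halving the window to 2048 steps divides both numbers by four, which is the quadratic scaling in action.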

| Variant | Time | Memory | Idea |

|---|---|---|---|

| Full attention | $O(n^2 d)$ | $O(n^2)$ | Compute every pair |

| Sparse / strided | $O(n w d)$ | $O(n w)$ | Local windows of width $w$ plus dilated or global links (Longformer, BigBird) |

| Linear attention | $O(n d^2)$ | $O(n d)$ | Kernel feature map in place of softmax (Performer); Linformer reaches linear cost through a low-rank projection of $K$ and $V$ instead |

| Informer ProbSparse | $O(n \log n \cdot d)$ | $O(n \log n)$ | Score only the top-$\log n$ queries (covered in Part 8) |

For most time-series problems, $n$ is in the hundreds and $d$ is in the tens to a few hundred, so full attention fits comfortably in memory and parallelises well on a GPU. Reach for sub-quadratic variants only when the standard implementation runs out of memory.

Case study: forecasting a stock price

To make the whole pipeline concrete, here is a synthetic stock series built from three components (a slow trend, a 30-day cycle, and an earnings event at day 60), forecast 10 days ahead by an LSTM+attention model and by a no-attention baseline.

Three things to notice:

- The earnings-day weight is large — attention has discovered an event memory without being told what an earnings release is.

- The cycle peak is preserved — the orange forecast follows the 30-day oscillation, while the baseline collapses to a near-linear extrapolation.

- Interpretability comes for free — the same matrix that drives the prediction also explains it. With LSTMs you would need post-hoc tools (integrated gradients, SHAP); with attention the explanation is a softmax row.

A note of caution: attention weights are correlated with importance, not identical to it. For high-stakes deployments, validate explanations with perturbation tests (zero-out a key step, see the prediction shift) rather than reading the heatmap as ground truth.
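A perturbation test of that kind can be sketched as follows. It assumes `model` is any callable mapping a `(B, n, F)` window to a `(B, horizon)` forecast and that inputs are standardised, so replacing a step with `0.0` means "replace with the mean"; the function name and the toy model in the usage lines are hypothetical.

```python
import torch

def perturbation_importance(model, x: torch.Tensor, baseline: float = 0.0) -> torch.Tensor:
    """Mean absolute change in the forecast when each time step is blanked out.
    model: callable (B, n, F) -> (B, horizon); x: one window of shape (1, n, F)."""
    with torch.no_grad():
        reference = model(x)
        shifts = []
        for t in range(x.size(1)):
            x_pert = x.clone()
            x_pert[:, t] = baseline                 # zero out step t only
            shifts.append((model(x_pert) - reference).abs().mean())
    return torch.stack(shifts)                      # (n,): one score per time step

# Toy check: a "model" that reads nothing but step 3 of the window.
torch.manual_seed(0)
x = torch.randn(1, 6, 2)
toy_model = lambda window: window[:, 3, :1] * 2.0
importance = perturbation_importance(toy_model, x)
print(importance)
```

Comparing the ranking of `importance` with the ranking of the attention weights for the same window is a quick sanity check: large disagreements suggest the heatmap should not be read literally.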

Practical recipe for time-series attention

- Standardise inputs. Attention scores are dot products; without normalisation, large-scale features dominate.

- Add positional encoding. Sinusoidal for regular sampling, time-aware for irregular.

- Use causal masks during training and inference for forecasting.

- Start with 4 heads, $d_\text{model} \in [64, 128]$. Scale only if validation loss demands it.

- Layer-norm before attention, dropout on attention weights and on the feed-forward block.

- Lower learning rate than RNNs — $10^{-4}$ to $5 \cdot 10^{-4}$ with a warm-up of a few hundred steps.

- Visualise heads early. If they collapse to identical patterns, reduce $h$ or add diversity regularisation.

- Beware the $O(n^2)$ wall. If you need $n > 1024$, go straight to a sub-quadratic variant or to Informer (Part 8).
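The learning-rate item in the recipe can be wired up with a linear warm-up. This is a sketch using `torch.optim.lr_scheduler.LambdaLR`; the peak rate of `3e-4`, the 300 warm-up steps, the helper name, and the `nn.Linear` stand-in model are illustrative assumptions within the ranges given above.

```python
import torch
import torch.nn as nn

def build_optimizer(model: nn.Module, peak_lr: float = 3e-4, warmup_steps: int = 300):
    """Adam with a linear warm-up from peak_lr / warmup_steps up to peak_lr."""
    optimizer = torch.optim.Adam(model.parameters(), lr=peak_lr)
    scheduler = torch.optim.lr_scheduler.LambdaLR(
        optimizer, lr_lambda=lambda step: min(1.0, (step + 1) / warmup_steps))
    return optimizer, scheduler

model = nn.Linear(8, 1)                      # stand-in for the real forecaster
optimizer, scheduler = build_optimizer(model)
for step in range(400):
    # loss.backward() would go here in a real training loop
    optimizer.step()
    scheduler.step()                         # call once per optimizer step
    if step in (0, 149, 299, 399):
        print(step, optimizer.param_groups[0]["lr"])
```

After the warm-up the rate stays at the peak; a decay schedule can be layered on top if validation loss plateaus.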

Common Pitfalls

- Forgetting to scale by $1/\sqrt{d_k}$: with large $d_k$ the softmax saturates early and training can stall.

- Wrong masking: subtle data leakage that inflates offline metrics, followed by a sharp drop in accuracy at deployment, where the future is genuinely unavailable.

- Attention to padding: without a padding mask, padded positions receive attention weight and their filler values leak into every real position.

- Treating weights as causal explanations: they are evidence, not proof.

- Training on too-short windows: if all useful history fits in 10 steps, an LSTM will probably outperform a Transformer.
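The padding pitfall has a fix as short as the causal one. The sketch below assumes windows are right-padded to a common length and that `lengths` holds the number of real steps in each window (at least 1); the function name is hypothetical.

```python
import torch

def padded_attention_weights(scores: torch.Tensor, lengths: torch.Tensor) -> torch.Tensor:
    """scores: (B, n, n) raw query-key scores; lengths: (B,) real steps per window.
    Returns softmax weights that give exactly zero weight to padded keys."""
    n = scores.size(-1)
    steps = torch.arange(n, device=scores.device)
    is_pad = steps.unsqueeze(0) >= lengths.unsqueeze(1)              # (B, n), True = padding
    scores = scores.masked_fill(is_pad.unsqueeze(1), float("-inf"))  # hide padded key columns
    return torch.softmax(scores, dim=-1)

torch.manual_seed(0)
scores = torch.randn(2, 5, 5)
lengths = torch.tensor([5, 3])               # second window has two padded steps
weights = padded_attention_weights(scores, lengths)
print(weights[1])                            # last two columns are zero
```

Rows belonging to padded query positions still produce outputs, so those positions should also be excluded from the loss.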

Summary

Attention replaces the sequential, lossy information channel of an RNN with a direct, content-addressable lookup. The math is two matrix multiplications and a softmax; the consequences are profound:

- $O(1)$ path length between any two time steps.

- Fully parallel training — every position is computed at once.

- Built-in interpretability via the attention matrix.

- A clean abstraction for multi-scale temporal patterns through multi-head attention.

The price is $O(n^2)$ memory and the need to inject position explicitly. For most time-series problems those costs are well worth paying — and Parts 5, 6, and 8 of this series will show how the Transformer, TCN, and Informer push the idea further.

Mnemonic: Q asks, K answers, V carries; scale by $1/\sqrt{d_k}$, softmax to weights, multiply by V to read; many heads, many views.

What’s next

Attention does one simple thing — let any two time steps compute a direct relationship — and the consequences are out of proportion to the simplicity. The “long-range dependencies must be hand-held by gates” problem the RNN era struggled with disappears. As bonuses you get parallel training (no more step-by-step recurrence) and free visualization (just plot the attention weights as a heatmap).

This chapter still uses attention gently, as a helper bolted onto an LSTM. The next chapter on Transformers takes it to the limit: no RNN at all, just attention stacks all the way down. That pure-attention approach took over NLP, but porting it to time series raises two problems at full scale: how to inject ordering (attention on its own has no notion of order, because it is permutation-equivariant) and how to deal with the O(n²) cost (a month of hourly data is already 720 steps). The next chapter walks through the four families of fixes (sparse, linear, patched, decoder-only) and their flagship models (Autoformer, FEDformer, Informer, PatchTST).

Before you get there, run the attention-heatmap code from this chapter and pick a few moments you already know matter — known events, known periodic peaks — and check that the model puts weight on them. The habit of aligning a model’s self-explanation against your own domain knowledge transfers cleanly to every Transformer-style model later.

References

- Vaswani et al., Attention Is All You Need, NeurIPS 2017.

- Bahdanau, Cho, Bengio, Neural Machine Translation by Jointly Learning to Align and Translate, ICLR 2015.

- Luong, Pham, Manning, Effective Approaches to Attention-based Neural Machine Translation, EMNLP 2015.

- Qin et al., A Dual-Stage Attention-Based Recurrent Neural Network for Time Series Prediction, IJCAI 2017.

- Kitaev, Kaiser, Levskaya, Reformer: The Efficient Transformer, ICLR 2020.

- Beltagy, Peters, Cohan, Longformer: The Long-Document Transformer, 2020.

- Zhou et al., Informer: Beyond Efficient Transformer for Long Sequence Time-Series Forecasting, AAAI 2021 (covered in Part 8).
