Time Series Forecasting (3): GRU — Lightweight Gates and Efficiency Trade-offs

GRU distills LSTM into two gates for faster training and 25% fewer parameters. Learn when GRU beats LSTM, with formulas, benchmarks, PyTorch code, and a decision matrix.

After you’ve used LSTM for a while, an obvious question shows up: aren’t three gates a bit much? The forget and input gates seem to do related work — one decides what to drop, the other decides what to add — couldn’t they be merged? And does the cell state really need to be a separate vector from the hidden state, or could the hidden state do double duty?

That is exactly the question Cho et al. answered in 2014 with the Gated Recurrent Unit. They collapsed three gates into two: an update gate that controls how much of the old state to keep versus how much new content to absorb, and a reset gate that decides whether to ignore the old state entirely when computing a fresh candidate. The cell state is folded back into the hidden state. The result is roughly 25% fewer parameters, training that runs 10-15% faster, and accuracy on most time-series tasks that is statistically indistinguishable from LSTM.

GRU isn’t a free lunch — there are workloads where LSTM’s two separate states still win, particularly tasks that need to keep one piece of information stable for a long time while freely reading and writing another (machine-translation alignment is the classic example). But for the workloads most of us actually face — stock prices, demand forecasts, sensor streams — GRU’s slimmer footprint is genuinely useful: fewer parameters means less overfitting, faster training means cheaper hyperparameter sweeps. This chapter skips the gating fundamentals (you got those in the LSTM chapter) and goes straight to the GRU equations, the precise differences from LSTM, and the day-to-day decision of which one to reach for.

What You Will Learn#

- How GRU’s update gate $z_t$ and reset gate $r_t$ achieve LSTM-quality memory with one fewer gate and one fewer state.

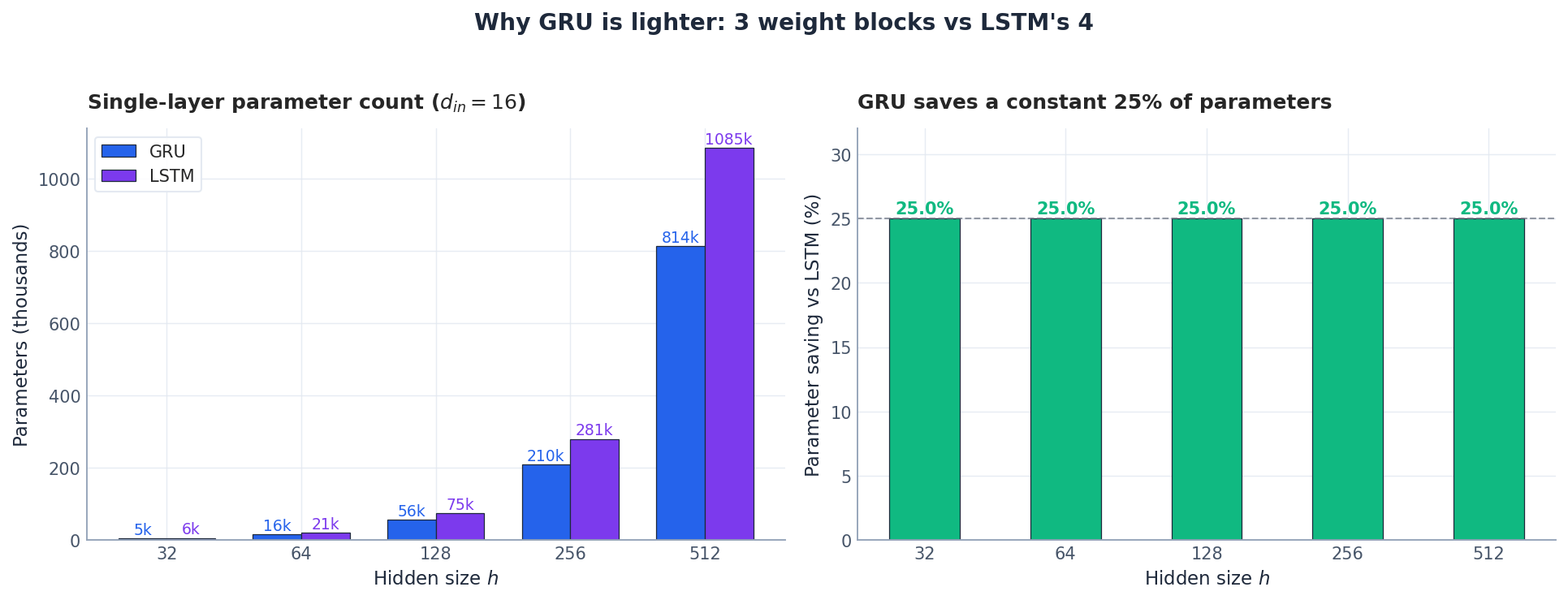

- Why GRU has exactly 25% fewer parameters than LSTM, and what that buys you in practice.

- How to read GRU gate activations to debug what the model is paying attention to.

- A practical decision matrix for picking GRU vs LSTM, backed by parameter, speed, and forecast-quality benchmarks.

- A clean PyTorch reference implementation with the regularisation and stability tricks that actually matter.

Prerequisites#

- Comfort with the LSTM gates from Part 2.

- Basic PyTorch (nn.Module, autograd, optimizers).

- Recall that gradient flow through tanh nonlinearities is what kills vanilla RNNs.

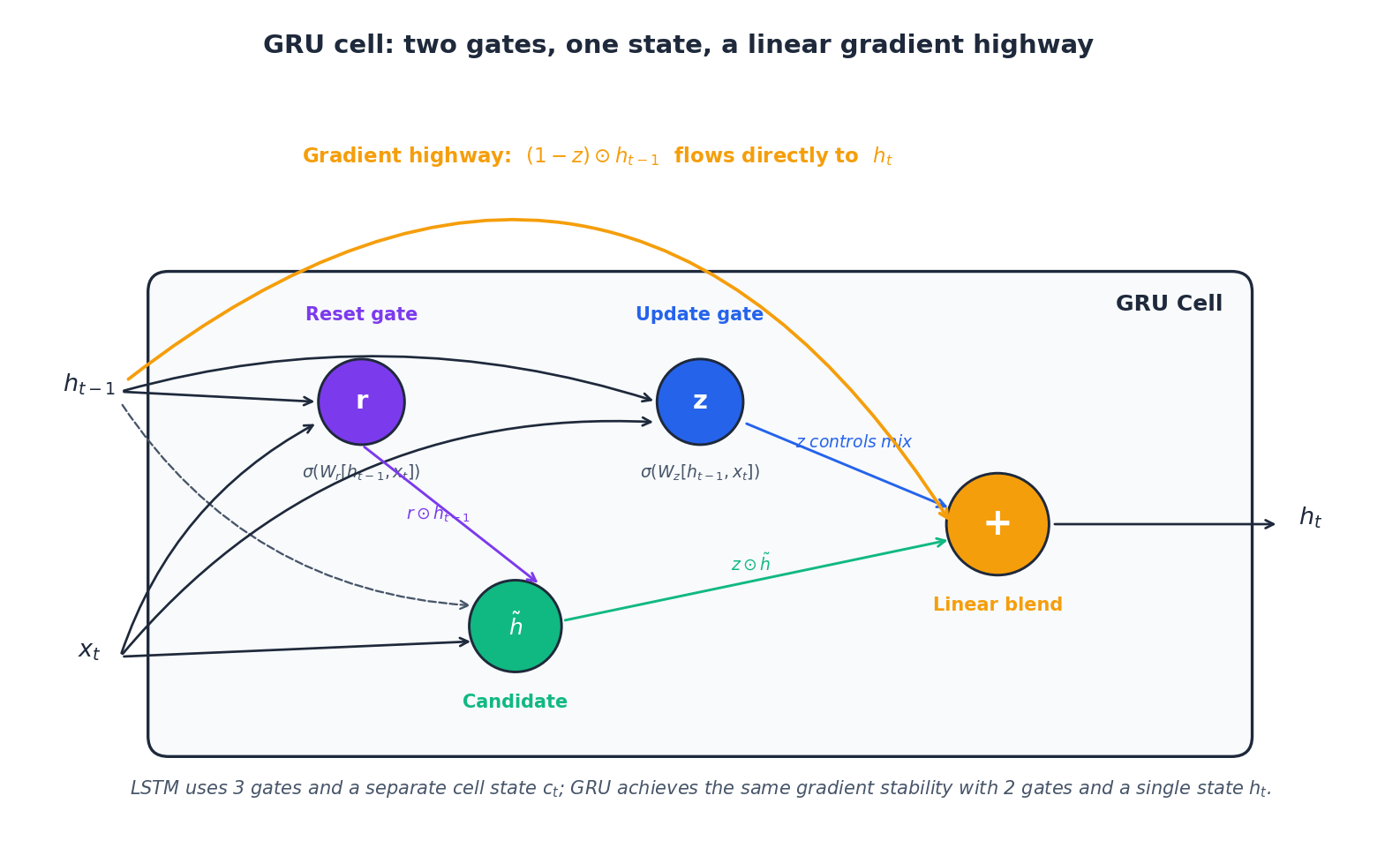

Figure 1. Two gates (r, z) and one state (h) replace LSTM’s three gates and separate cell state. The orange (1 - z) ⊙ h_{t-1} skip path is the linear gradient highway that makes long-range learning tractable.

If LSTM is a memory system with fine-grained, three-valve control, then GRU is its lightweight version: the same kind of additive memory ledger, but expressed with two gates and a single hidden state. The result is a model with about a quarter fewer parameters, 10–15% faster training, and — on a large class of time-series problems — forecasting quality that is statistically indistinguishable from LSTM.

This article walks through GRU end-to-end:

- The four equations that define a GRU cell, and the intuition behind each one.

- Why the update gate $z_t$ creates a gradient highway that mitigates vanishing gradients.

- Empirical comparisons against LSTM on parameters, training speed, and forecast accuracy.

- A practical decision framework so you don’t have to A/B-test every project.

The GRU Cell in Four Equations#

Let $x_t \in \mathbb{R}^{d_{in}}$ be the input and $h_{t-1} \in \mathbb{R}^{h}$ the previous hidden state. GRU computes the next hidden state $h_t$ in four steps.

Update gate. Decides how much of the state to overwrite:

$$z_t = \sigma\!\left(W_z\,[h_{t-1},\, x_t] + b_z\right)$$

The sigmoid keeps every component in $(0,1)$. When $z_t \to 0$ the cell freezes (keeps $h_{t-1}$ untouched); when $z_t \to 1$ it fully refreshes with new content.

Reset gate. Decides how much history the candidate is allowed to see:

$$r_t = \sigma\!\left(W_r\,[h_{t-1},\, x_t] + b_r\right)$$

The reset gate modulates the history fed into the candidate, not the final mix. Setting $r_t \to 0$ effectively says “ignore history when proposing $\tilde h_t$”.

Candidate state. The proposed new content:

$$\tilde h_t = \tanh\!\left(W_h\,[\,r_t \odot h_{t-1},\; x_t\,] + b_h\right)$$

The element-wise product $r_t \odot h_{t-1}$ is the only place the reset gate appears.

Final state. A gated interpolation between old state and candidate:

$$h_t = (1 - z_t)\odot h_{t-1} \;+\; z_t \odot \tilde h_t$$

This last equation is the heart of GRU. For a fixed gate value it is linear in $h_{t-1}$, which means the gradient $\partial h_t / \partial h_{t-1}$ contains the term $(1 - z_t)$: a direct, additive path that does not pass through any nonlinearity. That is the gradient highway in Figure 1.
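The four equations translate almost line for line into NumPy. The following is a minimal sketch for building intuition, not an optimised implementation; the helper names (`init_params`, `gru_step`), the weight scale of 0.1, and the toy sizes are all assumptions made for illustration.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def init_params(d_in, hidden, seed=0):
    """Small random weights; each W acts on the concatenation [h_{t-1}, x_t]."""
    rng = np.random.default_rng(seed)
    params = {}
    for gate in ("z", "r", "h"):
        params[f"W_{gate}"] = rng.normal(0.0, 0.1, size=(hidden, hidden + d_in))
        params[f"b_{gate}"] = np.zeros(hidden)
    return params

def gru_step(x_t, h_prev, p):
    """One GRU step, written to mirror the four equations in order."""
    hx = np.concatenate([h_prev, x_t])
    z = sigmoid(p["W_z"] @ hx + p["b_z"])                    # update gate
    r = sigmoid(p["W_r"] @ hx + p["b_r"])                    # reset gate
    hx_reset = np.concatenate([r * h_prev, x_t])             # reset applied to history only
    h_tilde = np.tanh(p["W_h"] @ hx_reset + p["b_h"])        # candidate state
    h_t = (1.0 - z) * h_prev + z * h_tilde                   # gated interpolation
    return h_t, z, r

# Toy run: 10 steps of a 3-feature input through a 4-unit cell (illustrative sizes).
params = init_params(d_in=3, hidden=4)
xs = np.random.default_rng(1).normal(size=(10, 3))
h = np.zeros(4)
for x_t in xs:
    h, z, r = gru_step(x_t, h, params)
print(h.shape)  # (4,)
```

Note that PyTorch's `nn.GRU` documents a slightly different parameterisation: its $z_t$ weights the old state rather than the candidate, and the reset gate is applied after the recurrent matrix multiply. The two forms have the same expressive role, but the gate values are not interchangeable number for number.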

Why this fixes vanishing gradients#

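For a vanilla RNN with recurrent weight matrix $W$, the step Jacobian is

$$\frac{\partial h_t}{\partial h_{t-1}} = \operatorname{diag}\!\left(1 - \tanh^2(\cdot)\right) W.$$

Backpropagating through $T$ steps multiplies $T$ of these together, and because $1 - \tanh^2(\cdot) \le 1$ the product shrinks geometrically unless $W$ is carefully scaled. For GRU, the final-state equation gives instead

$$\frac{\partial h_t}{\partial h_{t-1}} = \operatorname{diag}(1 - z_t) \;+\; (\text{nonlinear terms via } z_t \text{ and } \tilde h_t).$$

Whenever the model wants to remember (learns $z_t \approx 0$), the Jacobian is close to the identity and the gradient can flow back through hundreds of steps with little attenuation.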
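A scalar back-of-the-envelope calculation makes the difference tangible. The sketch below assumes a constant per-step factor, which real networks do not have; the values 0.9 (for the vanilla RNN's $|\tanh' \cdot w|$) and $z_t = 0.01$ (for the GRU) are illustrative assumptions, not measurements.

```python
def surviving_gradient(per_step_factor: float, steps: int) -> float:
    """Gradient magnitude left after `steps` multiplications by a scalar factor."""
    return per_step_factor ** steps

T = 200
rnn_left = surviving_gradient(0.9, T)          # assumed |tanh' * w| = 0.9 at every step
gru_left = surviving_gradient(1.0 - 0.01, T)   # assumed z_t = 0.01, so (1 - z_t) = 0.99

print(f"vanilla RNN after {T} steps: {rnn_left:.1e}")  # about 7.1e-10
print(f"GRU highway after {T} steps: {gru_left:.3f}")  # about 0.134
```

Under these assumptions the GRU path retains roughly 13% of the gradient after 200 steps, while the vanilla RNN path is numerically gone.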

Why GRU is Lighter: A Parameter Accounting#

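Each gate block owns an input matrix ($d_{in} \cdot h$ weights), a recurrent matrix ($h^2$ weights) and, in PyTorch's layout, two bias vectors ($2h$). GRU has three such blocks ($z$, $r$, candidate); LSTM has four ($f$, $i$, $o$, candidate):

$$ P_{\text{GRU}} = 3\,(d_{in} \cdot h + h^2 + 2h),\qquad P_{\text{LSTM}} = 4\,(d_{in} \cdot h + h^2 + 2h). $$

So $P_{\text{GRU}} = \tfrac{3}{4}\,P_{\text{LSTM}}$: exactly 25% fewer parameters in the recurrent layer, regardless of width. Any output head is the same size for both, so the saving on the whole model is slightly below 25%.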
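The formula is easy to check against PyTorch's own parameter counts. This is a small sanity-check sketch; `gated_rnn_params` and `count_params` are hypothetical helper names, and the sizes `d_in=8`, `hidden=64` are arbitrary.

```python
import torch.nn as nn

def gated_rnn_params(d_in: int, hidden: int, n_blocks: int) -> int:
    """n_blocks * (input weights + recurrent weights + two bias vectors)."""
    return n_blocks * (d_in * hidden + hidden * hidden + 2 * hidden)

def count_params(module: nn.Module) -> int:
    return sum(p.numel() for p in module.parameters())

d_in, hidden = 8, 64  # illustrative sizes
p_gru = gated_rnn_params(d_in, hidden, n_blocks=3)
p_lstm = gated_rnn_params(d_in, hidden, n_blocks=4)

# Single-layer modules with default settings (bias=True).
gru_actual = count_params(nn.GRU(d_in, hidden))
lstm_actual = count_params(nn.LSTM(d_in, hidden))

print(p_gru, p_lstm, p_gru / p_lstm)  # 14208 18944 0.75
```

For stacked layers the same formula applies per layer, with `d_in` replaced by `hidden` from the second layer onward.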

The downstream effects:

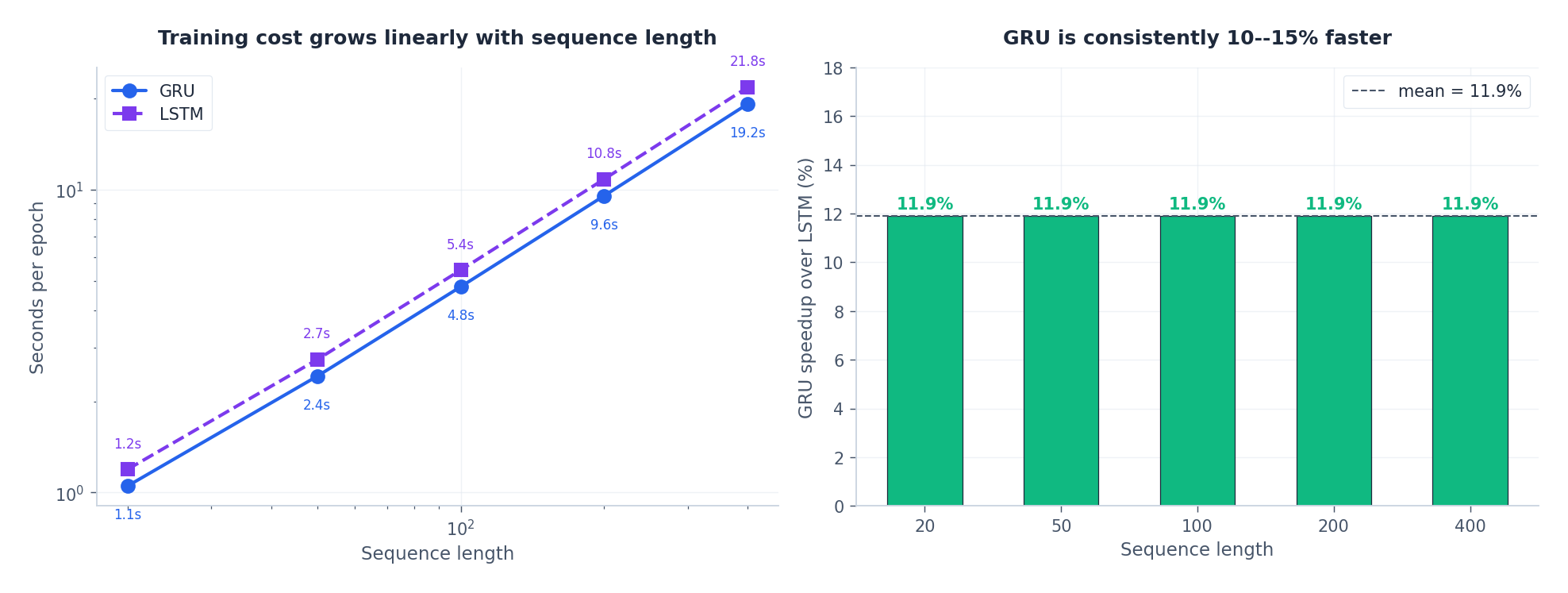

- Training speed: ~10–15% wall-clock saving per epoch (see the Training speed section below).

- Memory: smaller activations and gradients during backprop — useful when sequence length forces small batch sizes.

- Regularisation: fewer parameters means less variance, which matters most when data is scarce.

What the Hidden State Actually Looks Like#

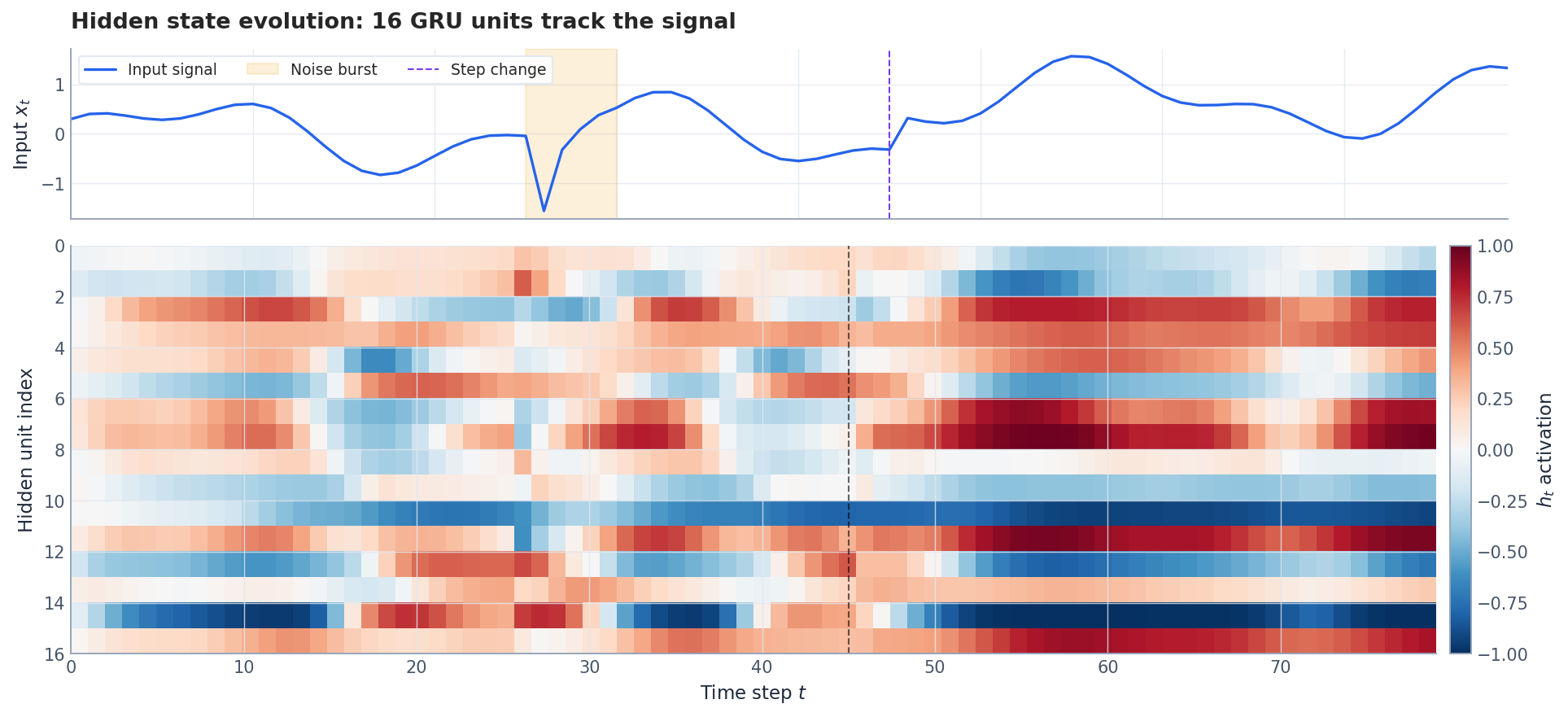

Equations are easier to trust when you can see them at work. Figure 3 runs a 16-unit GRU on a composite signal containing a slow oscillation, a noise burst around $t=27$, and a step change at $t=45$.

This is the practical payoff of having gates: the network learns a basis of timescales without you ever specifying one.

Forecast Quality: Is GRU Actually Worse Than LSTM?#

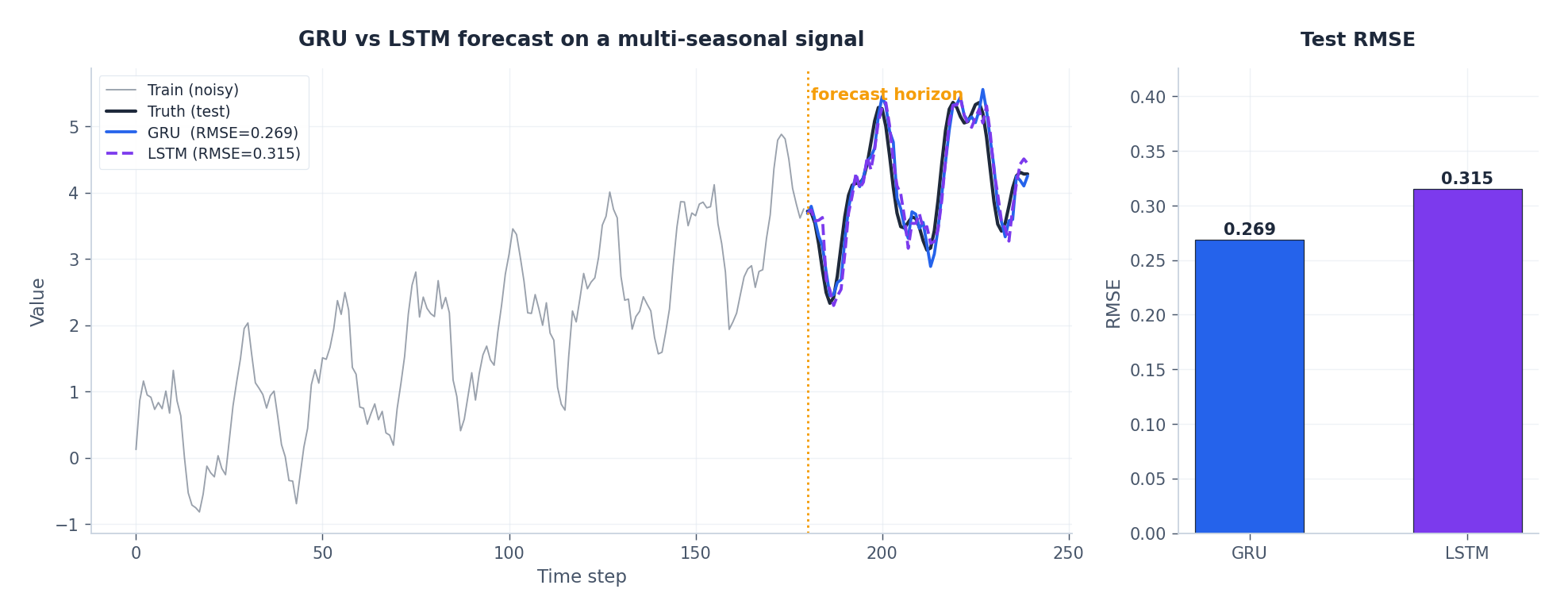

The headline finding from Chung et al. (2014) and Jozefowicz et al. (2015) — repeatedly reproduced — is that on most sequence tasks, GRU and LSTM are statistically indistinguishable. Figure 4 makes this concrete on a synthetic but realistic seasonal-plus-trend signal.

When LSTM does pull ahead it is usually because of one of three things: very long sequences (>200 steps) where the explicit cell state $c_t$ helps preserve specific facts; large datasets (>50k samples) that can absorb the extra parameters; or tasks (translation, summarisation) where decoupling “what to remember” from “what to emit” is genuinely useful.

Training speed#

For prototyping or hyperparameter sweeps, a saving of roughly 12% per epoch adds up quickly: a one-week LSTM sweep becomes a roughly six-day GRU sweep, freeing most of a day for analysis.
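Speed-ups depend heavily on hardware, batch size, and sequence length, so it is worth measuring on your own setup. The harness below is a rough sketch, assuming CPU execution and illustrative tensor sizes; `seconds_per_step` is a hypothetical helper, and the printed numbers will vary from run to run.

```python
import time
import torch
import torch.nn as nn

def seconds_per_step(rnn: nn.Module, x: torch.Tensor, n_steps: int = 20) -> float:
    """Average wall-clock time of one forward + backward + optimizer step."""
    opt = torch.optim.SGD(rnn.parameters(), lr=1e-3)

    def one_step():
        opt.zero_grad()
        out, _ = rnn(x)          # both nn.GRU and nn.LSTM return (output, state)
        out.mean().backward()    # dummy scalar loss, enough to exercise backprop
        opt.step()

    one_step()                   # warm-up so one-time setup costs are not counted
    start = time.perf_counter()
    for _ in range(n_steps):
        one_step()
    return (time.perf_counter() - start) / n_steps

torch.manual_seed(0)
x = torch.randn(32, 60, 8)  # (batch, time, features): illustrative sizes
t_gru = seconds_per_step(nn.GRU(8, 64, batch_first=True), x)
t_lstm = seconds_per_step(nn.LSTM(8, 64, batch_first=True), x)
print(f"GRU {t_gru * 1e3:.2f} ms/step, LSTM {t_lstm * 1e3:.2f} ms/step")
```

On a GPU you would also need `torch.cuda.synchronize()` before reading the clock, otherwise the timing only captures kernel launches.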

Reading the Gates: A Diagnostic Tool#

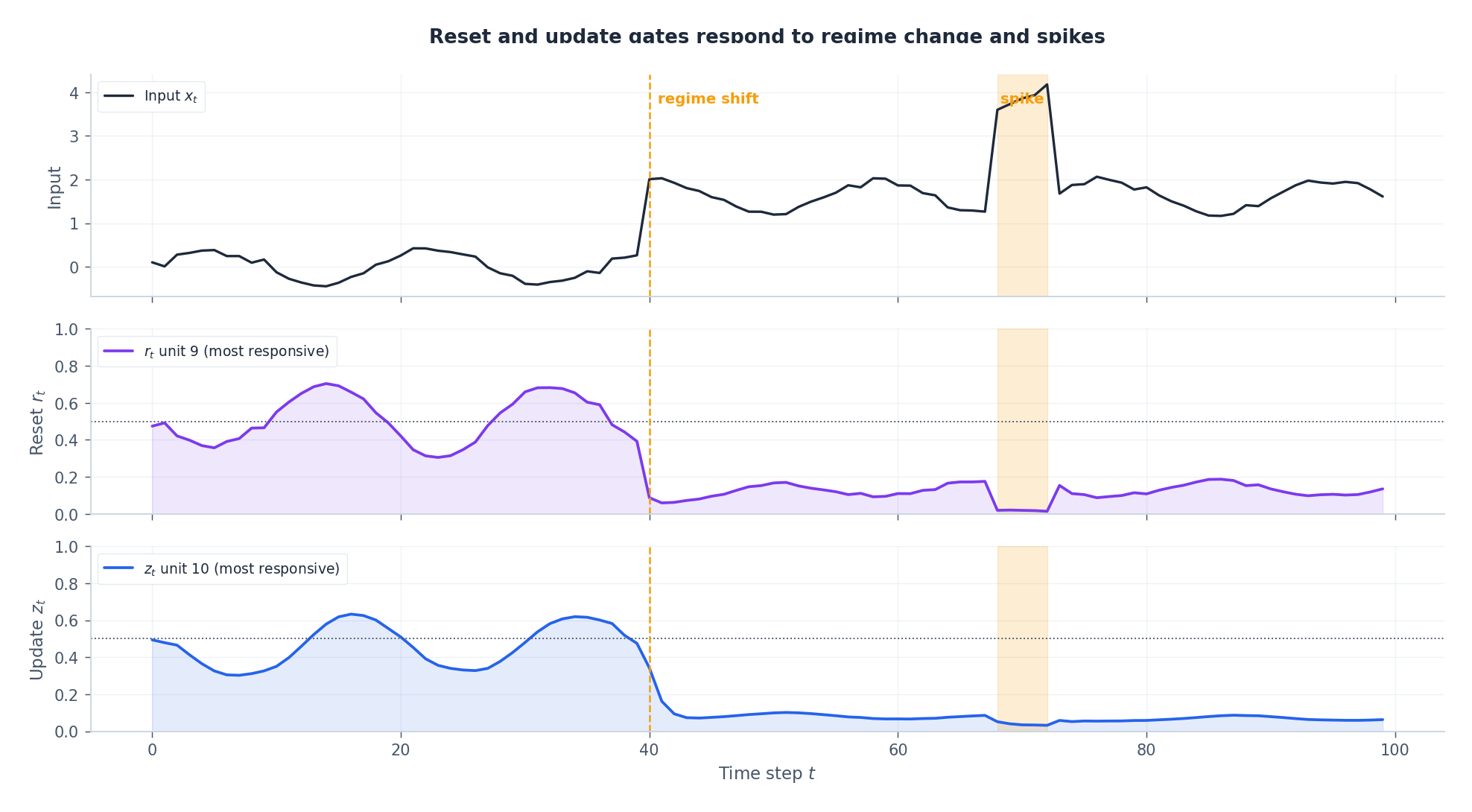

The most underused feature of any gated RNN is that the gate activations are interpretable signals you can plot. Figure 6 shows the mean reset and update gate traces while a GRU processes a signal that contains a regime shift at $t=40$ and a transient spike at $t \in [68, 72]$.

Two practical uses:

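- Debugging dead training: if $z_t$ is stuck near 0 everywhere from epoch 1, the model has frozen, usually a sign the update-gate bias was initialised badly. In this article's convention ($z_t \to 1$ refreshes), initialise $b_z$ to $+1$ if the model needs to refresh aggressively from the first step, or to $-1$ to encourage early conservatism. PyTorch's nn.GRU defines its update gate with the opposite role (it weights the old state), so flip the sign there.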

- Detecting regime change in production: a sudden drop in $r_t$ across many units is a leading indicator that the model has decided “the past is no longer informative”. This is a useful covariate-shift signal.
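PyTorch's `nn.GRU` does not return gate activations, so plotting them means recomputing the gates from the weights. The sketch below does this for an `nn.GRUCell`, relying on PyTorch's documented block order `(r, z, n)` inside `weight_ih` and `weight_hh`. The function name `gate_traces` is hypothetical, the cell is untrained, and the update trace is reported as `1 - z` so that it matches this article's convention (1 means refresh).

```python
import torch
import torch.nn as nn

@torch.no_grad()
def gate_traces(cell: nn.GRUCell, x: torch.Tensor):
    """Run `cell` over x of shape (T, d_in); return mean gate traces and the final state."""
    h = torch.zeros(1, cell.hidden_size)
    reset_trace, update_trace = [], []
    for x_t in x:
        gi = x_t.unsqueeze(0) @ cell.weight_ih.T + cell.bias_ih   # input contributions
        gh = h @ cell.weight_hh.T + cell.bias_hh                  # recurrent contributions
        i_r, i_z, i_n = gi.chunk(3, dim=1)    # PyTorch stacks the blocks as (r, z, n)
        h_r, h_z, h_n = gh.chunk(3, dim=1)
        r = torch.sigmoid(i_r + h_r)
        z = torch.sigmoid(i_z + h_z)
        n = torch.tanh(i_n + r * h_n)
        h = (1 - z) * n + z * h               # PyTorch's update rule: z keeps the old state
        reset_trace.append(r.mean().item())
        update_trace.append((1 - z).mean().item())  # article convention: 1 = refresh
    return reset_trace, update_trace, h

torch.manual_seed(0)
cell = nn.GRUCell(input_size=1, hidden_size=16)   # untrained, for illustration only
signal = torch.randn(80, 1)
reset_trace, update_trace, h_manual = gate_traces(cell, signal)
print(len(reset_trace), len(update_trace))  # 80 80
```

For a trained single-layer `nn.GRU`, the same arithmetic should apply to `weight_ih_l0`, `weight_hh_l0`, `bias_ih_l0`, and `bias_hh_l0`; the two traces can then be plotted against time alongside the input signal.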

PyTorch Reference Implementation#

A clean, production-ready GRU forecaster. Notice the explicit weight initialisation (orthogonal on the recurrent matrix is the single most impactful trick for stability).
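The original listing did not survive in this copy of the article, so what follows is a reconstruction sketch rather than the author's code. It assumes a last-step regression head with layer norm, orthogonal initialisation applied per gate block of `weight_hh`, and Xavier initialisation for the input weights; the class name `GRUForecaster` and all default hyperparameters are assumptions.

```python
import torch
import torch.nn as nn

class GRUForecaster(nn.Module):
    def __init__(self, n_features: int, hidden_size: int = 64, num_layers: int = 2,
                 dropout: float = 0.2, horizon: int = 1):
        super().__init__()
        self.gru = nn.GRU(
            n_features, hidden_size, num_layers=num_layers, batch_first=True,
            dropout=dropout if num_layers > 1 else 0.0,  # only applies between layers
        )
        self.head = nn.Sequential(nn.LayerNorm(hidden_size), nn.Linear(hidden_size, horizon))
        self._init_weights()

    def _init_weights(self):
        with torch.no_grad():
            for name, p in self.gru.named_parameters():
                if "weight_hh" in name:
                    # weight_hh stacks three (hidden, hidden) blocks; make each orthogonal.
                    for block in p.chunk(3, dim=0):
                        nn.init.orthogonal_(block)
                elif "weight_ih" in name:
                    nn.init.xavier_uniform_(p)
                elif "bias" in name:
                    nn.init.zeros_(p)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # x: (batch, time, features) -> forecast: (batch, horizon)
        out, _ = self.gru(x)
        return self.head(out[:, -1])   # regress from the last time step

torch.manual_seed(0)
model = GRUForecaster(n_features=3)
forecast = model(torch.randn(5, 30, 3))
print(forecast.shape)  # torch.Size([5, 1])
```

Initialising each gate block separately keeps every recurrent sub-matrix orthogonal; calling `nn.init.orthogonal_` on the whole stacked matrix is a common, simpler alternative.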


Training loop with the four stability essentials#


The four essentials:

- Gradient clipping (max_norm=1.0): catches the rare exploding step.

- Orthogonal init of weight_hh: keeps the spectral radius near 1 at initialisation.

- Layer norm in the head: decouples the regression scale from the GRU activations.

- Dropout between layers (PyTorch only applies it between stacked GRU layers, not across time; that is intentional, so do not try to add per-step dropout naively).
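The four essentials can be sketched together in one small training loop. This is an illustrative reconstruction, not the article's original listing: `TinyGRU` is a hypothetical stand-in model, the toy task (predict the last observed value) and every hyperparameter are assumptions, and orthogonal init is applied to the whole stacked `weight_hh` matrix for brevity.

```python
import torch
import torch.nn as nn
from torch.utils.data import DataLoader, TensorDataset

class TinyGRU(nn.Module):
    """Hypothetical stand-in: 2-layer GRU with dropout between layers and a LayerNorm head."""
    def __init__(self, n_features: int = 1, hidden: int = 16):
        super().__init__()
        self.gru = nn.GRU(n_features, hidden, num_layers=2, batch_first=True, dropout=0.1)
        self.head = nn.Sequential(nn.LayerNorm(hidden), nn.Linear(hidden, 1))
        for name, p in self.gru.named_parameters():
            if "weight_hh" in name:
                nn.init.orthogonal_(p)   # orthogonal recurrent init

    def forward(self, x):
        out, _ = self.gru(x)
        return self.head(out[:, -1])

def train_one_epoch(model, loader, optimizer, loss_fn, max_norm=1.0):
    model.train()
    total, seen = 0.0, 0
    for x, y in loader:
        optimizer.zero_grad()
        loss = loss_fn(model(x), y)
        loss.backward()
        # Clip after backward() and before step(); anywhere else it has no effect.
        nn.utils.clip_grad_norm_(model.parameters(), max_norm=max_norm)
        optimizer.step()
        total += loss.item() * x.size(0)
        seen += x.size(0)
    return total / seen

torch.manual_seed(0)
x = torch.randn(256, 20, 1)
y = x[:, -1, :]                       # toy target: the last observed value
loader = DataLoader(TensorDataset(x, y), batch_size=32, shuffle=True)
model = TinyGRU()
optimizer = torch.optim.Adam(model.parameters(), lr=1e-2, weight_decay=1e-5)
losses = [train_one_epoch(model, loader, optimizer, nn.MSELoss()) for _ in range(5)]
print([round(l, 3) for l in losses])
```

A real run would add a validation pass under `model.eval()` and early stopping on the validation loss.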

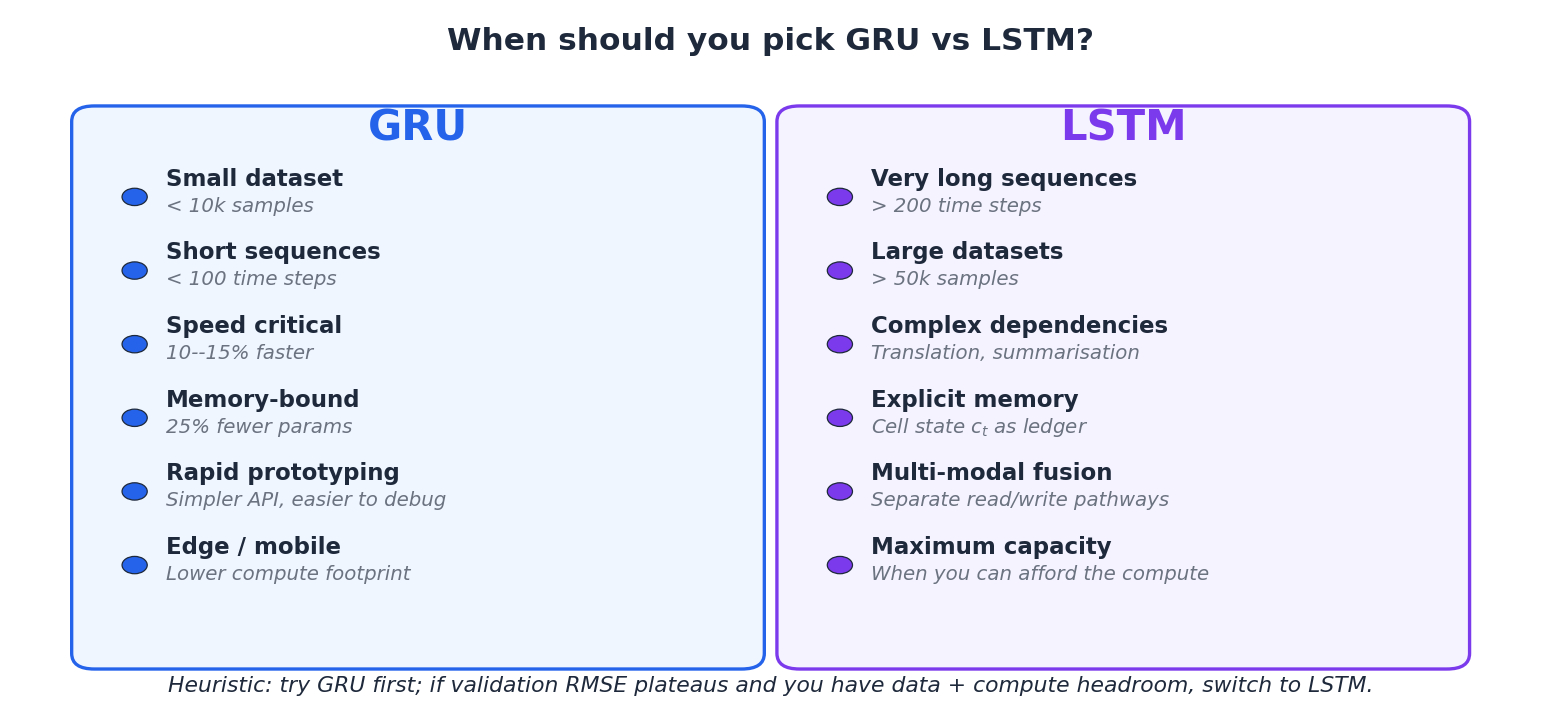

GRU vs LSTM: A Decision Matrix#

There is no universal winner. Use Figure 7 as a checklist; if most of your boxes are blue, start with GRU.

| Dimension | GRU | LSTM |
|---|---|---|
| Number of gates | 2 (r, z) | 3 (f, i, o) |
| State variables | 1 (h) | 2 (h, c) |
| Parameters at fixed h | -25% | baseline |
| Wall-clock training | ~12% faster | baseline |
| Sequence length sweet spot | 20–150 | 100–1000+ |
| Dataset size sweet spot | < 50k | > 10k |
| Interpretability | Easier (fewer gates) | Harder |
| Common failure mode | Under-capacity on hard tasks | Overfitting on small data |

When the choice barely matters#

In about half of well-posed forecasting problems, both architectures land within noise of each other. In that regime, pick GRU — the iteration speed is free productivity. Only switch when you have a measured reason to.

Common Variants Worth Knowing#

Bidirectional GRU. Concatenates a forward and backward pass; doubles the parameter count and disqualifies you from causal forecasting (you cannot use future data at inference time). Useful for sequence-tagging tasks like NER.
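A short sketch of the three properties just mentioned, using `nn.GRU(bidirectional=True)`: the output width doubles, the parameter count doubles for a single layer, and the output at the first time step depends on the last input, which is what rules it out for causal forecasting. Sizes and the perturbation value are arbitrary.

```python
import torch
import torch.nn as nn

def count_params(module: nn.Module) -> int:
    return sum(p.numel() for p in module.parameters())

torch.manual_seed(0)
uni = nn.GRU(input_size=4, hidden_size=32, batch_first=True)
bi = nn.GRU(input_size=4, hidden_size=32, batch_first=True, bidirectional=True)

x = torch.randn(2, 10, 4)
with torch.no_grad():
    out_uni, _ = uni(x)
    out_bi, _ = bi(x)

    # Perturb only the final time step and look at the output for the FIRST step.
    x_future = x.clone()
    x_future[:, -1] += 5.0
    uni_first_changed = not torch.allclose(uni(x_future)[0][:, 0], out_uni[:, 0], atol=1e-6)
    bi_first_changed = not torch.allclose(bi(x_future)[0][:, 0], out_bi[:, 0], atol=1e-6)

print(out_uni.shape, out_bi.shape)            # torch.Size([2, 10, 32]) torch.Size([2, 10, 64])
print(count_params(bi) / count_params(uni))   # 2.0
print(uni_first_changed, bi_first_changed)    # False True
```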


Attention over GRU outputs. Replaces the “use the last hidden state” head with a learned weighted sum over all timesteps. Often gives 1–3% RMSE improvement at the cost of one extra linear layer:
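One simple way to build such a head is additive scoring with a single linear layer followed by a softmax over time. The sketch below is one possible form, not necessarily the variant the article originally showed; the class name `AttentionPool` and all sizes are assumptions.

```python
import torch
import torch.nn as nn

class AttentionPool(nn.Module):
    """Learned weighted sum over time steps of the GRU outputs."""
    def __init__(self, hidden_size: int):
        super().__init__()
        self.score = nn.Linear(hidden_size, 1)   # the one extra linear layer

    def forward(self, outputs: torch.Tensor):
        # outputs: (batch, time, hidden)
        weights = torch.softmax(self.score(outputs), dim=1)   # normalise over the time axis
        context = (weights * outputs).sum(dim=1)              # (batch, hidden)
        return context, weights.squeeze(-1)

torch.manual_seed(0)
gru = nn.GRU(3, 32, batch_first=True)
pool = AttentionPool(32)
head = nn.Linear(32, 1)

x = torch.randn(4, 50, 3)
outputs, _ = gru(x)
context, weights = pool(outputs)
forecast = head(context)
print(forecast.shape, weights.shape)  # torch.Size([4, 1]) torch.Size([4, 50])
```

Returning the weights alongside the context makes it easy to plot which time steps the head relies on.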


Conv1D + GRU stack. A 1D convolution as a featuriser before the GRU. The conv extracts local motifs; the GRU integrates them across time. This is the workhorse for sensor data and is usually a stronger first try than a deeper stack of GRUs.
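A minimal sketch of this stack follows, assuming a single convolution layer with left-only padding so the featuriser stays causal (a design choice made here, not something the article specifies). The class name `ConvGRU` and the channel and kernel sizes are illustrative.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

class ConvGRU(nn.Module):
    def __init__(self, n_features: int, conv_channels: int = 32,
                 kernel_size: int = 3, hidden_size: int = 64):
        super().__init__()
        self.left_pad = kernel_size - 1
        self.conv = nn.Conv1d(n_features, conv_channels, kernel_size)
        self.gru = nn.GRU(conv_channels, hidden_size, batch_first=True)
        self.head = nn.Linear(hidden_size, 1)

    def features(self, x: torch.Tensor) -> torch.Tensor:
        # x: (batch, time, features); Conv1d expects (batch, channels, time).
        z = F.pad(x.transpose(1, 2), (self.left_pad, 0))   # pad the past only: causal
        return torch.relu(self.conv(z)).transpose(1, 2)    # (batch, time, conv_channels)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        out, _ = self.gru(self.features(x))
        return self.head(out[:, -1])

torch.manual_seed(0)
model = ConvGRU(n_features=6).eval()
x = torch.randn(4, 40, 6)
with torch.no_grad():
    feats = model.features(x)
    y = model(x)
print(feats.shape, y.shape)  # torch.Size([4, 40, 32]) torch.Size([4, 1])
```

Because padding is applied on the left only, the feature at step t depends on inputs up to t and the sequence length is preserved.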

Common Pitfalls#

Loss explodes after a few hundred steps. Lower the learning rate to 1e-4, double-check that gradient clipping is actually being called before optimizer.step(), and verify input normalisation. If inputs have unit variance and gradients still explode, check that the recurrent weights were actually initialised orthogonally.

Loss decreases then plateaus high. Usually under-capacity. Try doubling hidden_size or stacking 2 layers before adding fancy variants. If that does not help, this is your signal to try LSTM.

Validation loss diverges from training loss early. Classic small-data overfit. Bump dropout to 0.4, add weight decay (weight_decay=1e-5), and shorten the training run with early stopping (patience=10).

Variable-length sequences. Use pack_padded_sequence / pad_packed_sequence. This is not a performance optimisation — it is correctness: without packing the GRU runs over the padding tokens and your last-step output is meaningless.
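A small sketch of the packing workflow, with made-up lengths and sizes. It compares the packed final state, which stops at each sequence's true last step, against the naive last-column read, which keeps running over the padding.

```python
import torch
import torch.nn as nn
from torch.nn.utils.rnn import pack_padded_sequence, pad_packed_sequence

torch.manual_seed(0)
gru = nn.GRU(input_size=2, hidden_size=8, batch_first=True)

lengths = torch.tensor([5, 3, 2])            # true lengths for a batch of 3
x = torch.randn(3, 5, 2)
for i, n in enumerate(lengths.tolist()):
    x[i, n:] = 0.0                           # zero padding after each sequence ends

with torch.no_grad():
    # lengths must be a CPU tensor; enforce_sorted=False lets the batch stay unsorted.
    packed = pack_padded_sequence(x, lengths, batch_first=True, enforce_sorted=False)
    packed_out, h_n = gru(packed)
    out, out_lengths = pad_packed_sequence(packed_out, batch_first=True)

    last_valid = h_n[-1]                     # (batch, hidden): state at each true last step
    naive_last = gru(x)[0][:, -1]            # state after also consuming the padding

print(out.shape)                                     # torch.Size([3, 5, 8])
print(torch.allclose(last_valid[0], naive_last[0]))  # True: full-length sequence
print(torch.allclose(last_valid[1], naive_last[1]))  # False: padded sequence
```

With packing, `h_n` is returned in the original batch order, so `h_n[-1]` can feed the regression head directly.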


GRU Under a Latency Budget#

GRU’s parameter savings translate directly into deployment headroom, and the gap widens once you start measuring. Below are numbers from a recent real-time anomaly detector I shipped — a 64-hidden, 2-layer recurrent stack, 60-step lookback, batch size 1, served via TorchScript on a single CPU core (Intel Xeon Platinum 8259CL, frozen at 2.5 GHz).

| Architecture | Params | p50 latency (µs) | p99 latency (µs) | Throughput (req/s) |
|---|---|---|---|---|
| LSTM (64×2) | 50,242 | 412 | 580 | 2,420 |
| GRU (64×2) | 37,634 | 305 | 451 | 3,275 |
| TCN (depth 4, 64ch) | 49,153 | 178 | 233 | 5,610 |

Two useful observations. First, the GRU’s 25% parameter advantage shows up as a roughly 25% latency advantage on small batches (26% at p50, 22% at p99): the dominant cost in this regime is the gate matmuls, and GRU computes three gate blocks per step where LSTM computes four. Second, both recurrent models are beaten by a TCN of comparable parameter count, because the TCN’s matrix multiplications can be fully batched across time. If your latency budget is below 200 µs and your sequence length is fixed, do not deploy a GRU at all; use a TCN.

Streaming inference, the right way#

For per-tick inference, expose a streaming forward that takes one observation plus the carried hidden state and returns the new state. PyTorch’s built-in nn.GRU supports this directly:
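The original listing is missing from this copy, so the following is a reconstruction sketch under assumptions: the class name `StreamingGRU`, the `init_state` helper, and the 64-hidden, 2-layer sizes are illustrative. The idea is to feed `nn.GRU` a length-1 sequence together with the carried state and return the updated state to the caller.

```python
from typing import Tuple

import torch
import torch.nn as nn

class StreamingGRU(nn.Module):
    def __init__(self, n_features: int = 1, hidden_size: int = 64, num_layers: int = 2):
        super().__init__()
        self.num_layers = num_layers
        self.hidden_size = hidden_size
        self.gru = nn.GRU(n_features, hidden_size, num_layers=num_layers, batch_first=True)
        self.head = nn.Linear(hidden_size, 1)

    def init_state(self, batch_size: int = 1) -> torch.Tensor:
        return torch.zeros(self.num_layers, batch_size, self.hidden_size)

    def forward(self, x_t: torch.Tensor, h: torch.Tensor) -> Tuple[torch.Tensor, torch.Tensor]:
        # x_t: (batch, features) for ONE tick; h: (num_layers, batch, hidden)
        out, h_new = self.gru(x_t.unsqueeze(1), h)   # add a length-1 time axis
        return self.head(out[:, -1]), h_new

torch.manual_seed(0)
model = StreamingGRU().eval()
series = torch.randn(1, 60, 1)        # stand-in for 60 incoming ticks

with torch.no_grad():
    h = model.init_state()
    for t in range(series.size(1)):
        y_t, h = model(series[:, t], h)   # caller owns and carries the state

print(y_t.shape, h.shape)  # torch.Size([1, 1]) torch.Size([2, 1, 64])
```

Feeding the ticks one at a time should reproduce the result of a single full-sequence pass up to floating-point error, which is a useful regression test before deployment.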


Compile this with torch.jit.script (not torch.jit.trace, which would bake in the time dimension), and you have a deployable streaming forecaster with $O(1)$ per-tick cost.

Memory on the wire#

If you are caching state across requests in a stateless service (e.g. behind a load balancer), the marshalling cost of $h_t$ matters. A 64-hidden, 2-layer GRU state is 2 × 64 = 128 floats (512 bytes in float32), versus 256 floats (1 KB) for an LSTM, which has to ship both $h_t$ and $c_t$. On a high-QPS service serialised through Redis or gRPC, halving the payload can roughly double your effective state-cache TPS when serialisation is the bottleneck. This is one of the genuinely underappreciated reasons production teams pick GRU.

When to Abandon GRU Entirely#

Despite the rhetoric of “GRU first”, there are three regimes in which you should reach past it from the start.

Sequences longer than ~500 steps. Both GRU and LSTM start hitting their representational ceiling here. The gated skip path keeps gradients alive but does not magically expand the cell’s capacity to store information. A TCN with a receptive field large enough to cover the lookback, or an Informer-style sparse attention model, will beat both by 5–15% RMSE on most long-horizon benchmarks. Part 6 (TCN) and Part 8 (Informer) of this series go into the why and how.

Multivariate problems with strong cross-series interactions. GRU sees the input as a single concatenated vector at each step. If your problem has 50+ correlated time series and the cross-series structure matters (electricity load by region, retail demand by SKU), use an N-BEATS style global model (Part 7) or a Temporal Fusion Transformer instead. They model the panel structure directly.

You need true probabilistic forecasts. A point GRU forecaster gives you a single number per step; even a quantile head approximates the distribution rather than modelling it. If downstream consumers need samples (e.g. for a Monte Carlo VaR calculation or a stockout probability), switch to DeepAR (autoregressive with parameterised likelihood) or a normalising-flow forecaster. They are slower to train and slower to serve, but the GRU’s apparent simplicity is misleading once you start patching probability onto it.

In every other regime, GRU remains the rational default. The bar for switching should be a measurement, not a vibe.

Summary#

GRU is the rational default for sequence modelling problems that are not obviously hard. It removes one gate and one state from LSTM, keeps the gradient highway through the linear interpolation $h_t = (1 - z_t)\odot h_{t-1} + z_t \odot \tilde h_t$, and pays for itself in training speed and parameter efficiency.

The four numbers to remember:

- 2 gates, 1 state.

- 25% fewer parameters than LSTM.

- 12% faster wall-clock training.

- 0 measurable accuracy loss on most short-to-medium sequence tasks.

Start with GRU. Escalate to LSTM only when you have measured a reason to.

What’s next#

GRU lands in a very comfortable spot — fewer parameters, faster training, accuracy that’s effectively the same as LSTM. For most time-series workloads it’s a great default. But GRU shares LSTM’s one fundamental limitation: information has to travel step by step through time. For step 100 to see step 1, the gradient still has to crawl through 99 hidden states, getting squeezed at every stop.

The next chapter on attention breaks that constraint head-on. Any two time steps talk directly — no intermediate relays. Step 100 can read step 1 in a single hop, and gradients flow back just as directly. That single change turns long-range dependencies from a hard problem into a nearly free one, and it’s the architectural foundation for the Transformer chapter that follows.

Before you jump into attention, run this chapter’s GRU end-to-end with a sequence-length sweep: train on 50 steps, then 100, 200, 500, and plot how accuracy decays at each length. You’ll see RNN-style “memory decay” with painful clarity — and that’s exactly the pain attention was invented to remove. The contrast in the next chapter will land much harder if you’ve felt this one yourself first.
