Time Series Forecasting (8): Informer — Efficient Long-Sequence Forecasting

Informer reduces Transformer complexity from O(L^2) to O(L log L) via ProbSparse attention, distilling, and a one-shot generative decoder. Full math, PyTorch code, and ETT/weather benchmarks.

The Transformer is wonderful at sequence modeling — right up to the moment your sequence gets long. Vanilla self-attention costs $\mathcal{O}(L^2)$ in both compute and memory, so a one-week hourly window (168 steps) is fine, a one-month window (720 steps) is painful, and a three-month window (2160 steps) is essentially impossible on a single GPU. That is exactly the regime real-world long-horizon forecasting lives in: weather, energy, finance, IoT.

Informer (Zhou et al., AAAI 2021 best paper) is the architecture that finally made Transformers practical for these settings. It does three things, each of which would be a contribution on its own:

- ProbSparse self-attention keeps only the $\mathcal{O}(\log L)$ most informative queries, dropping per-layer cost from $\mathcal{O}(L^2)$ to $\mathcal{O}(L \log L)$ .

- Self-attention distilling halves the sequence length between encoder layers, so memory shrinks geometrically with depth.

- A generative decoder predicts the entire forecast horizon in one forward pass instead of running $H$ autoregressive steps.

Combined, the three changes deliver about a 6-10x speedup and 5-10% better MSE than a vanilla Transformer on long-horizon ETT/weather/electricity benchmarks. This chapter unpacks the math behind each one and walks through the implementation.

What You Will Learn#

- The exact $\mathcal{O}(L^2)$ pain points in vanilla self-attention for long sequences.

- ProbSparse’s KL-divergence sparsity measure and its $\max - \mathrm{mean}$ approximation.

- How encoder distilling exchanges sequence length for depth without losing dominant patterns.

- Why the generative decoder is much faster and slightly more accurate than autoregressive decoding.

- A complete PyTorch implementation, plus how Informer scores on the ETT and weather benchmarks.

Prerequisites: Part 5 (Transformer architecture). Comfort with Big-O reasoning and basic information theory (entropy, KL divergence).

Why long sequences kill the vanilla Transformer#

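For a single query $q_i$, scaled dot-product attention over keys $k_1, \dots, k_L$ and values $v_1, \dots, v_L$ is

$$\mathrm{Attn}(q_i, K, V) = \sum_{j=1}^{L} \mathrm{softmax}_j\!\left(\frac{q_i^\top k_j}{\sqrt{d}}\right) v_j.$$

To do this for every query you need the full $L \times L$ score matrix. Three costs scale as $\mathcal{O}(L^2)$: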

- $L^2$ query-key dot products of dimension $d$: $L^2 d$ multiply-adds.

- $L$ softmax rows of $L$ entries each: $L^2$ exponentials.

- $L^2$ floats of attention-matrix storage during the backward pass.

Concrete numbers for an input length of $L = 720$ with $d = 64$ and 8 heads on a single sample:

- Attention scores: $720 \times 720 = 518\text{K}$ entries per head per layer.

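- Memory: ~16.6 MB of float32 attention weights per layer for one sample (8 heads), which comes to about 1.6 GB across 3 layers at batch 32. The other activations stored for backprop push the total higher still.

- Compute: ~33 M multiply-adds per head per layer for the $QK^\top$ product alone ($L^2 d$), and the same again for the weighted sum over $V$.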

Push to $L = 2160$ and you are looking at almost 5 M attention entries per head, which is enough to OOM a 24 GB GPU at batch sizes anyone uses for training.
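A back-of-envelope check of these orders of magnitude, as a sketch in plain Python. The helper name `attention_cost` is hypothetical, and its defaults (8 heads, 3 layers, batch 32, float32) are simply the assumptions used in this section; it counts attention weights only, not the other activations.

```python
def attention_cost(L, d=64, heads=8, layers=3, batch=32, bytes_per_float=4):
    """Rough cost of full self-attention (hypothetical helper, weights only)."""
    entries = L * L                                          # scores per head per layer
    macs = entries * d                                       # multiply-adds for Q @ K^T
    mb_per_layer = entries * heads * bytes_per_float / 1e6   # one sample, all heads
    gb_total = mb_per_layer * layers * batch / 1e3           # whole batch, all layers
    return entries, macs, mb_per_layer, gb_total


for L in (168, 720, 2160):
    entries, macs, mb, gb = attention_cost(L)
    print(f"L={L:5d}  entries/head={entries:>9,}  MACs/head={macs / 1e6:7.1f}M  "
          f"{mb:6.1f} MB/layer/sample  {gb:5.2f} GB for the batch")
```

Tripling $L$ from 720 to 2160 multiplies every column by nine, which is the quadratic growth in action.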

Several papers tried to attack this with structural sparsity (Longformer’s local + global windows, BigBird’s random + global) or low-rank approximation (Linformer, Performer). Informer’s pitch is different: let the data tell you which queries deserve full attention.

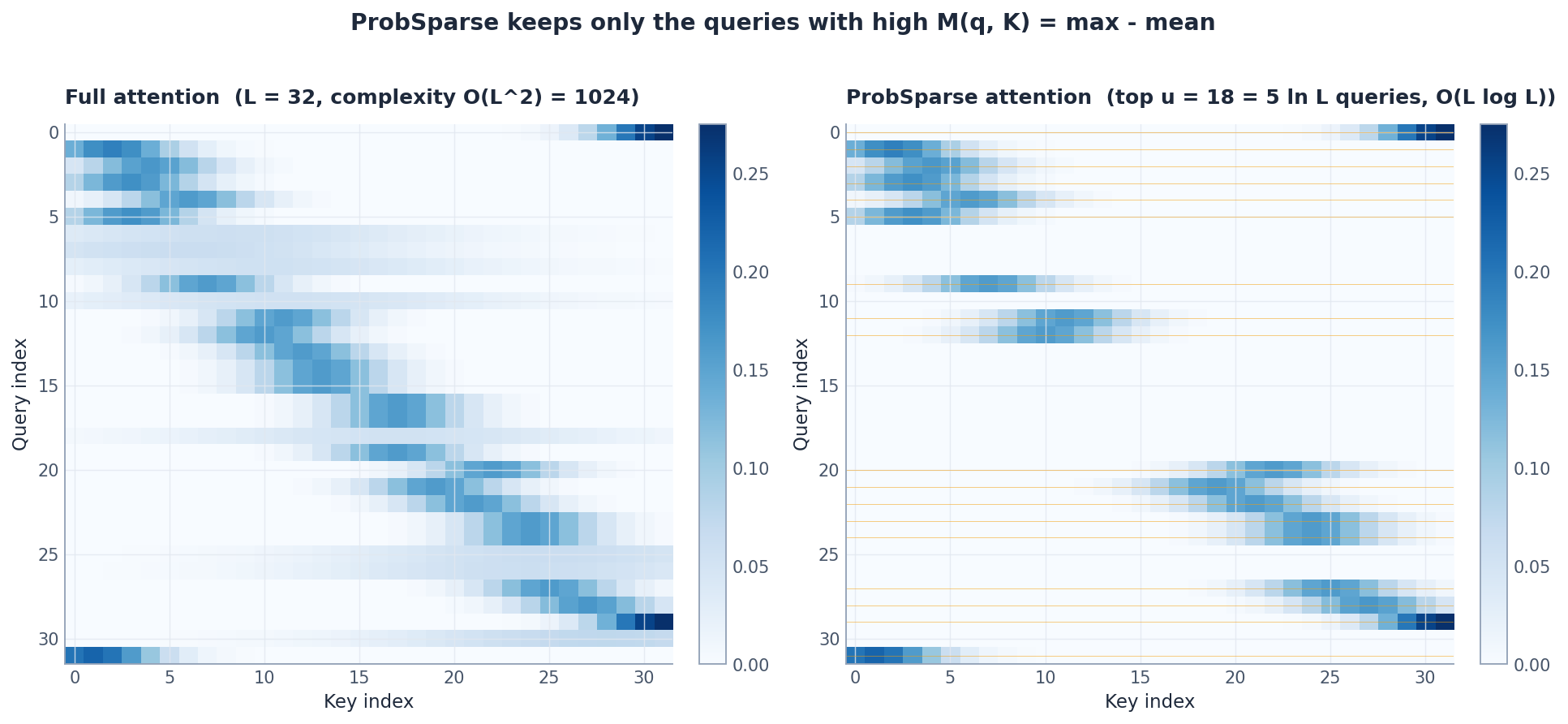

ProbSparse: the queries that matter, the queries that don’t#

The intuition#

If you plot the attention distribution of a typical query $q_i$ over the keys, you see two qualitatively different shapes:

- Peaked. A handful of keys get most of the probability mass. This query is “selective” — it knows what it is looking for.

- Uniform. Probability is spread evenly across all keys. This query is “vague”: its output is close to the plain average of the values, so computing its scores exactly adds little.

Peaked queries are where attention does real work, so they need the exact computation over all keys. Uniform queries do not: their output can be replaced by the mean of $V$ at little cost in accuracy. The trick is to identify which is which without computing the full attention matrix first.

The KL-based sparsity measure#

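Treat the attention weights of query $q_i$ as a probability distribution over the keys:

$$p(k_j \mid q_i) = \mathrm{softmax}_j\!\left(\frac{q_i^\top k_j}{\sqrt{d}}\right).$$

A vague query has $p(\cdot \mid q_i)$ close to the uniform distribution $U(k_j) = 1/L$, so its divergence from uniform measures how selective it is:

$$\mathrm{KL}\big(U \,\|\, p(\cdot \mid q_i)\big) = \frac{1}{L}\sum_{j=1}^{L} \log \frac{1/L}{p(k_j \mid q_i)} = -\log L - \frac{1}{L}\sum_{j=1}^{L} \log p(k_j \mid q_i).$$

Substituting the softmax and dropping the constant $-\log L$ leaves

$$M(q_i, K) = \log\!\left(\sum_{j=1}^{L} e^{q_i^\top k_j / \sqrt{d}}\right) - \frac{1}{L}\sum_{j=1}^{L} \frac{q_i^\top k_j}{\sqrt{d}}.$$

This is the sparsity measure $M(q_i, K)$: a LogSumExp minus an arithmetic mean of the scores. High $M$ means a peaked distribution: a selective query that deserves full attention. Low $M$ means a near-uniform distribution: a vague query that can be skipped.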

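Computing $M$ exactly still requires every query-key dot product, which is the $\mathcal{O}(L^2)$ cost we are trying to avoid. Informer instead evaluates an approximation on a random subset $\mathcal{S}$ of the keys:

$$\bar{M}(q_i, K) \;=\; \max_{j \in \mathcal{S}} \frac{q_i^\top k_j}{\sqrt{d}} \;-\; \frac{1}{|\mathcal{S}|} \sum_{j \in \mathcal{S}} \frac{q_i^\top k_j}{\sqrt{d}}.$$

The $\max$ here replaces the LogSumExp. LogSumExp always lies between the largest score and the largest score plus $\log |\mathcal{S}|$, and it is dominated by its largest term whenever the scores are well separated, which is the peaked case we want to detect. Empirically, $\bar{M}$ ranks queries almost identically to the exact $M$ at a fraction of the cost.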
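To make the two measures concrete, here is a small PyTorch sketch that computes the exact $M$ and the sampled max-minus-mean $\bar{M}$ on synthetic data and compares which queries each one ranks highest. The function names, the random data, and the choice to scale the first 20 queries so that they are artificially peaked are all assumptions made for illustration; one key sample is shared by all queries.

```python
import math
import torch


def sparsity_exact(Q, K):
    """M(q_i, K): LogSumExp minus mean of the scaled scores, using every key."""
    scores = Q @ K.transpose(-1, -2) / math.sqrt(Q.shape[-1])   # (L_q, L_k)
    return torch.logsumexp(scores, dim=-1) - scores.mean(dim=-1)


def sparsity_sampled(Q, K, n_sample, generator=None):
    """M-bar: max minus mean over a random subset of keys."""
    idx = torch.randperm(K.shape[0], generator=generator)[:n_sample]
    scores = Q @ K[idx].transpose(-1, -2) / math.sqrt(Q.shape[-1])  # (L_q, n_sample)
    return scores.max(dim=-1).values - scores.mean(dim=-1)


g = torch.Generator().manual_seed(0)
L, d = 720, 64
Q = torch.randn(L, d, generator=g)
K = torch.randn(L, d, generator=g)
Q[:20] *= 6.0   # synthetic assumption: make 20 queries strongly "peaked"

M = sparsity_exact(Q, K)
M_bar = sparsity_sampled(Q, K, n_sample=math.ceil(5 * math.log(L)), generator=g)

top_exact = set(M.topk(20).indices.tolist())
top_approx = set(M_bar.topk(20).indices.tolist())
print("top-20 overlap between M and M-bar:", len(top_exact & top_approx))
print("smallest KL to uniform:", (M - math.log(L)).min().item())
```

Since $M - \log L$ is a KL divergence, it can never be negative; a query of all zeros gives exactly $M = \log L$.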

What ProbSparse actually computes#

The procedure for a single attention head:

- Sample $u = c \log L$ keys uniformly at random.

- Compute $\bar{M}(q_i, K)$ for every query $q_i$ — $\mathcal{O}(L \log L)$ work.

- Pick the top $u$ queries by $\bar{M}$ .

- For those $u$ queries, compute attention over the full $L$ keys. For the remaining $L - u$ queries, fill in the mean of $V$ as the output.

Total cost: $\mathcal{O}(L \log L)$ . Memory: also $\mathcal{O}(L \log L)$ .

In the figure, the right panel keeps only the rows corresponding to high-$M$ queries. The other rows are not zero in practice — they are filled with the mean value of $V$ , which is a reasonable approximation for a uniform attention distribution.

A clean implementation:
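What follows is a minimal PyTorch sketch of the four steps, under simplifying assumptions rather than a copy of the paper's reference code: the function name `probsparse_attention` is made up here, one key sample is shared by all queries, and only the non-causal (encoder) case is handled.

```python
import math
import torch


def probsparse_attention(Q, K, V, factor=5):
    """Non-causal ProbSparse attention sketch. Q, K, V: (batch, heads, length, dim)."""
    B, H, L_q, D = Q.shape
    L_k = K.shape[2]
    u = min(L_q, math.ceil(factor * math.log(L_q)))          # queries that get full attention
    n_sample = min(L_k, math.ceil(factor * math.log(L_k)))   # keys used for scoring

    # Step 1: score every query against a random subset of keys, O(L log L).
    idx = torch.randperm(L_k, device=K.device)[:n_sample]
    sampled = Q @ K[:, :, idx].transpose(-1, -2) / math.sqrt(D)   # (B, H, L_q, n_sample)

    # Step 2: max-minus-mean sparsity measure for each query.
    M_bar = sampled.max(dim=-1).values - sampled.mean(dim=-1)     # (B, H, L_q)

    # Step 3: indices of the top-u queries per batch element and head.
    top = M_bar.topk(u, dim=-1).indices                           # (B, H, u)

    # Step 4a: every query starts with the mean of V (output of uniform attention).
    out = V.mean(dim=2, keepdim=True).expand(B, H, L_q, V.shape[-1]).clone()

    # Step 4b: selected queries attend over all keys and overwrite their rows.
    b = torch.arange(B, device=Q.device)[:, None, None]
    h = torch.arange(H, device=Q.device)[None, :, None]
    Q_top = Q[b, h, top]                                          # (B, H, u, D)
    attn = torch.softmax(Q_top @ K.transpose(-1, -2) / math.sqrt(D), dim=-1)
    out[b, h, top] = attn @ V
    return out, top


torch.manual_seed(0)
Q, K, V = (torch.randn(2, 4, 96, 16) for _ in range(3))
out, top = probsparse_attention(Q, K, V)
print(out.shape, top.shape)   # torch.Size([2, 4, 96, 16]) torch.Size([2, 4, 23])
```

With $L = 96$ and $c = 5$, only 23 of the 96 query rows are computed exactly; the other 73 rows all hold the same mean-of-$V$ vector.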


A few subtleties:

- The $u = c \ln L$ formula uses the natural log; with numpy, use np.log and round up.

- The “fill non-selected queries with mean of V” step is a principled approximation, not a hack. The mean of $V$ is exactly what attention returns under uniform weights $1/L$, the maximum-entropy distribution over the keys, and low-$M$ queries are the ones closest to it.

- For decoder masked self-attention, you must mask out future keys before the softmax, typically by setting their scores to -1e9 (or $-\infty$). The fill value has to respect causality too: the official implementation swaps the mean of $V$ for a cumulative sum over past positions in the masked case.

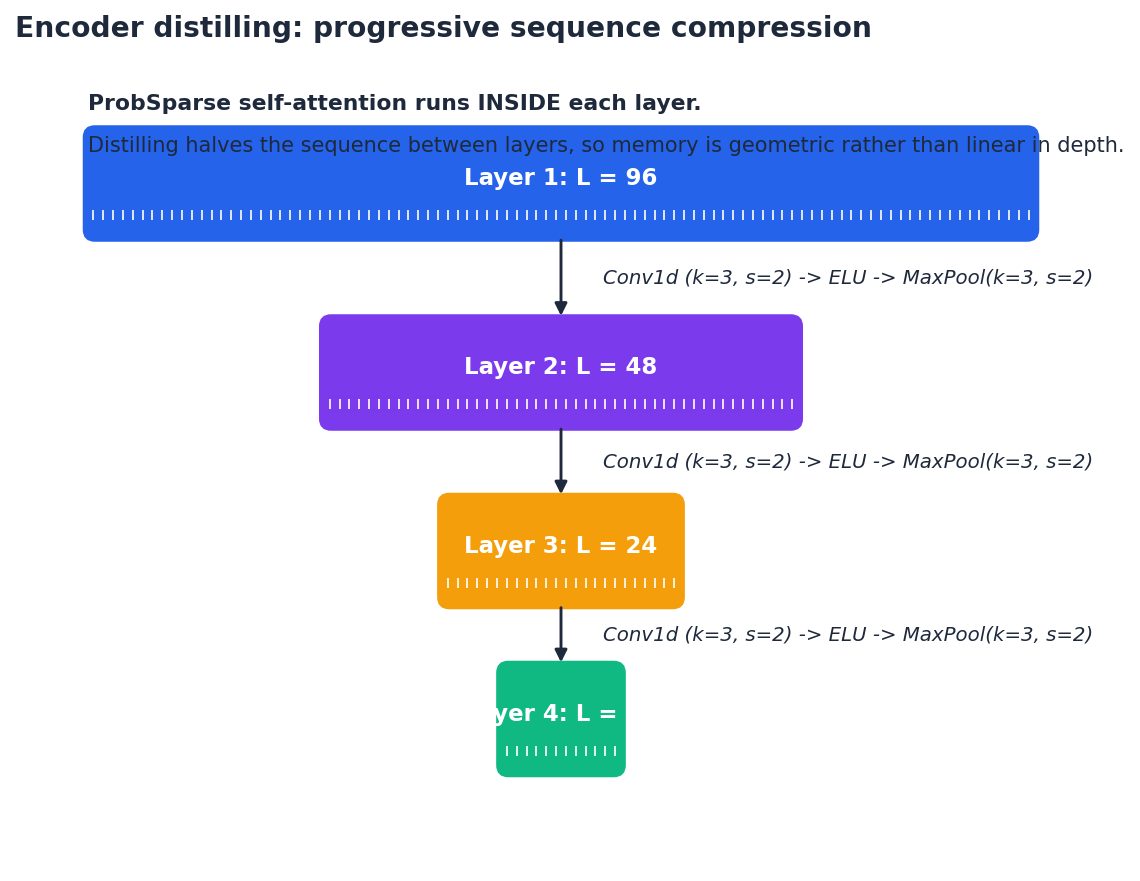

Encoder distilling: pyramidal sequence compression#

Between consecutive encoder layers, Informer inserts a distilling step:

$$X_{\ell+1} = \mathrm{MaxPool}_{k=3, s=2}\!\Big(\mathrm{ELU}\big(\mathrm{Conv1d}_{k=3}(X_\ell)\big)\Big).$$

The Conv1d (kernel 3, stride 1) mixes neighbouring time steps with learned weights; the ELU non-linearity gives the operator some expressivity beyond pure pooling; the MaxPool with stride 2 does the downsampling, keeping the dominant value in each local window and halving the sequence length.

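The effect compounds: with distilling after every layer except the last, a 3-layer encoder takes a 720-step input through lengths $720 \to 360 \to 180$, and each additional layer halves it again. Per-layer memory therefore shrinks geometrically with depth instead of staying constant. And because each pooled position summarises a growing span of the input, a single position at the top layer covers a wide stretch of the original time steps.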

Two things to be careful about:

- Last layer should not distil. The decoder’s cross-attention reads from the encoder output; if you distil the very last encoder layer, you halve the resolution again and lose information. The standard recipe is “distil after every layer except the last.”

- Stack two encoders for robustness. The original paper runs two encoders in parallel, one over the full input and one over a halved version of the input, then concatenates the outputs. The redundancy guards against unlucky distilling decisions on a particular sequence.
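The distilling step can be sketched as a small PyTorch module. This is an illustration, not the reference code: the class name `DistilLayer` is hypothetical, zero padding is used for simplicity, and no normalisation layer is included. The loop applies three distilling steps in a row only to show the halving; the attention layers that would sit between them are left out.

```python
import torch
import torch.nn as nn


class DistilLayer(nn.Module):
    """Conv1d (stride 1) -> ELU -> MaxPool (stride 2): halves the time axis."""

    def __init__(self, d_model):
        super().__init__()
        self.conv = nn.Conv1d(d_model, d_model, kernel_size=3, padding=1)
        self.act = nn.ELU()
        self.pool = nn.MaxPool1d(kernel_size=3, stride=2, padding=1)

    def forward(self, x):                  # x: (batch, length, d_model)
        x = x.transpose(1, 2)              # Conv1d expects (batch, channels, length)
        x = self.pool(self.act(self.conv(x)))
        return x.transpose(1, 2)           # back to (batch, length / 2, d_model)


x = torch.randn(2, 720, 64)
lengths = [x.shape[1]]
for step in [DistilLayer(64) for _ in range(3)]:   # three distilling steps
    x = step(x)
    lengths.append(x.shape[1])
print(lengths)   # [720, 360, 180, 90]
```

The convolution keeps the length unchanged (padding 1 with kernel 3), so all of the downsampling comes from the stride-2 pooling.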


Generative decoder: one shot for the whole horizon#

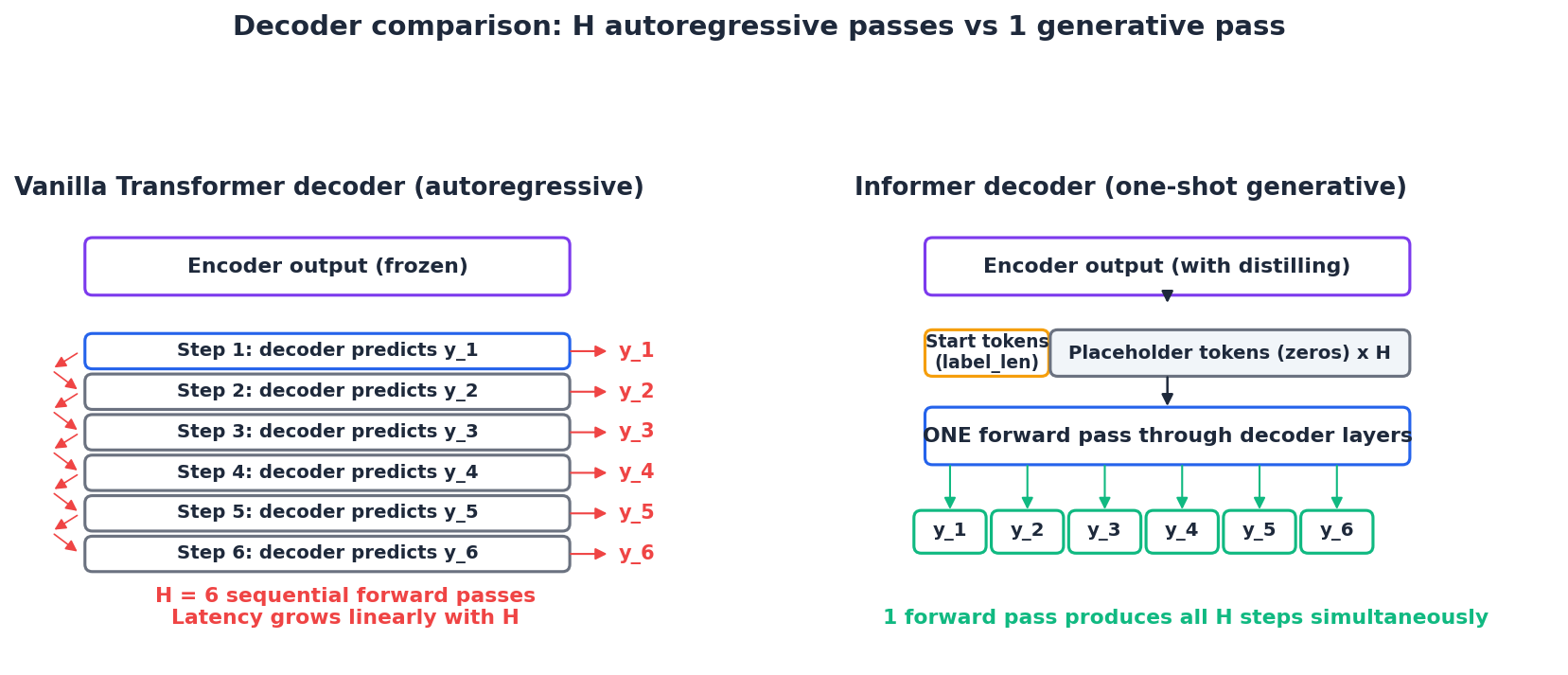

A vanilla Transformer decoder generates the forecast autoregressively: predict $\hat{y}_1$ , feed it back, predict $\hat{y}_2$ , and so on. For a horizon of $H = 168$ that means 168 sequential decoder forward passes. Latency aside, this also makes errors compound: a mistake at step 5 is fed back as input for step 6.

Informer instead feeds the decoder a single concatenated input,

$$X_\text{dec} = \big[\, X_\text{token} \;;\; X_0 \,\big],$$

where $X_\text{token}$ is the last label_len time steps of the encoder input (acting as a “prompt”) and $X_0$ is out_len placeholder tokens (typically zero vectors of the right dimension). The decoder runs once over this entire $\text{label\_len} + \text{out\_len}$ sequence, and the last out_len outputs are the forecast.

Three benefits:

- Speed. $H$ sequential passes $\to$ 1 pass. Inference latency drops by roughly a factor of $H$.

- No error compounding. All horizon predictions are produced from the same encoder context; no prediction depends on a previous prediction.

- Better long-horizon accuracy. Counter-intuitively, the generative decoder tends to be more accurate than autoregressive decoding for long horizons. The intuition is that the autoregressive decoder is forced into a “myopic” optimisation: each step is trained to match the next token assuming the previous tokens were perfect. The generative decoder optimises the joint forecast directly.

The label tokens are essential: they give the decoder a few “real” data points at the start, which anchors the placeholder tokens. Empirically label_len = out_len / 2 works well.

Putting it together: the Informer model#

The full model is encoder + decoder, with embeddings that combine value, position, and time-feature information.
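As a rough picture of how the pieces connect, here is a compact PyTorch skeleton. It is a sketch under clearly stated substitutions, not the paper's model: the class names are hypothetical, PyTorch's built-in full-attention layers stand in for ProbSparse attention, the value embedding is a plain linear layer, and the time features are assumed to arrive as a numeric tensor with `n_time_feats` columns.

```python
import math
import torch
import torch.nn as nn


class Embedding(nn.Module):
    """Value + sinusoidal position + time-feature embedding (simplified)."""

    def __init__(self, c_in, n_time_feats, d_model, max_len=5000):
        super().__init__()
        self.value = nn.Linear(c_in, d_model)
        self.time = nn.Linear(n_time_feats, d_model)
        pos = torch.arange(max_len).unsqueeze(1)
        div = torch.exp(torch.arange(0, d_model, 2) * (-math.log(10000.0) / d_model))
        pe = torch.zeros(max_len, d_model)
        pe[:, 0::2] = torch.sin(pos * div)
        pe[:, 1::2] = torch.cos(pos * div)
        self.register_buffer("pe", pe)

    def forward(self, x, x_mark):
        return self.value(x) + self.time(x_mark) + self.pe[: x.shape[1]]


class InformerSketch(nn.Module):
    def __init__(self, enc_in, dec_in, c_out, n_time_feats=4, d_model=512, n_heads=8,
                 e_layers=3, d_layers=2, d_ff=2048, dropout=0.05, out_len=24):
        super().__init__()
        self.out_len = out_len
        self.enc_emb = Embedding(enc_in, n_time_feats, d_model)
        self.dec_emb = Embedding(dec_in, n_time_feats, d_model)
        # Stand-in: full attention where Informer would use ProbSparse attention.
        self.enc_layers = nn.ModuleList([
            nn.TransformerEncoderLayer(d_model, n_heads, d_ff, dropout, batch_first=True)
            for _ in range(e_layers)])
        # Distil after every encoder layer except the last.
        self.distil = nn.ModuleList([
            nn.Sequential(nn.Conv1d(d_model, d_model, 3, padding=1), nn.ELU(),
                          nn.MaxPool1d(3, stride=2, padding=1))
            for _ in range(e_layers - 1)])
        self.dec_layers = nn.ModuleList([
            nn.TransformerDecoderLayer(d_model, n_heads, d_ff, dropout, batch_first=True)
            for _ in range(d_layers)])
        self.proj = nn.Linear(d_model, c_out)

    def forward(self, x_enc, x_mark_enc, x_dec, x_mark_dec):
        h = self.enc_emb(x_enc, x_mark_enc)
        for i, layer in enumerate(self.enc_layers):
            h = layer(h)
            if i < len(self.distil):
                h = self.distil[i](h.transpose(1, 2)).transpose(1, 2)
        T = x_dec.shape[1]
        causal = torch.triu(torch.full((T, T), float("-inf"), device=x_dec.device), diagonal=1)
        y = self.dec_emb(x_dec, x_mark_dec)
        for layer in self.dec_layers:
            y = layer(y, h, tgt_mask=causal)          # one pass over label + placeholder tokens
        return self.proj(y)[:, -self.out_len:]        # keep only the forecast positions


model = InformerSketch(enc_in=7, dec_in=7, c_out=7, d_model=64, n_heads=4, d_ff=128, out_len=24)
x_enc, x_mark_enc = torch.randn(2, 96, 7), torch.randn(2, 96, 4)
x_dec, x_mark_dec = torch.randn(2, 48 + 24, 7), torch.randn(2, 48 + 24, 4)
y_hat = model(x_enc, x_mark_enc, x_dec, x_mark_dec)
print(y_hat.shape)   # torch.Size([2, 24, 7])
```

Swapping the stand-in attention for a ProbSparse layer changes the cost profile but not the data flow shown here.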


For training data construction, the decoder input is built by concatenating the last label_len real values with out_len zero placeholders:
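A minimal sketch of that construction in PyTorch. The helper name `build_decoder_input` is hypothetical, and it assumes the decoder sees the same feature columns as the encoder.

```python
import torch


def build_decoder_input(x_enc, label_len, out_len):
    """x_enc: (batch, seq_len, features) -> (batch, label_len + out_len, features)."""
    token = x_enc[:, -label_len:, :]                                   # last real values
    placeholder = x_enc.new_zeros((x_enc.shape[0], out_len, x_enc.shape[-1]))
    return torch.cat([token, placeholder], dim=1)


x_enc = torch.randn(8, 96, 7)
x_dec = build_decoder_input(x_enc, label_len=48, out_len=24)
print(x_dec.shape)   # torch.Size([8, 72, 7])
```

The time-feature tensor for the decoder is built for the same 72 positions, and unlike the values it is known for the future steps as well.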


Long-horizon performance#

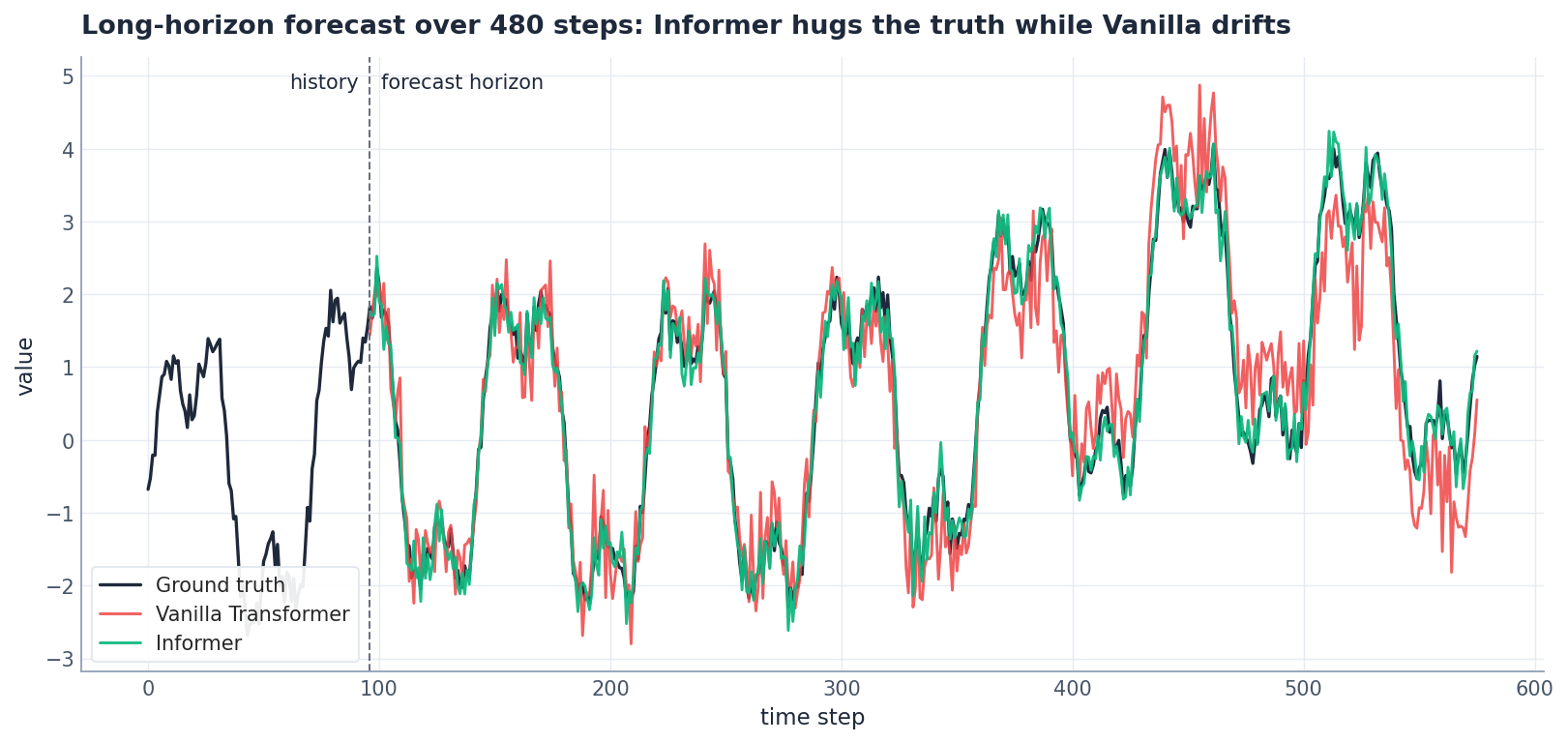

The headline visual: ground truth, vanilla Transformer, and Informer on a 480-step horizon.

The vanilla Transformer’s autoregressive errors compound around step 100 and drift increasingly far from the truth. Informer’s one-shot generative decoder produces a coherent forecast across the full window because all output tokens were optimised jointly.

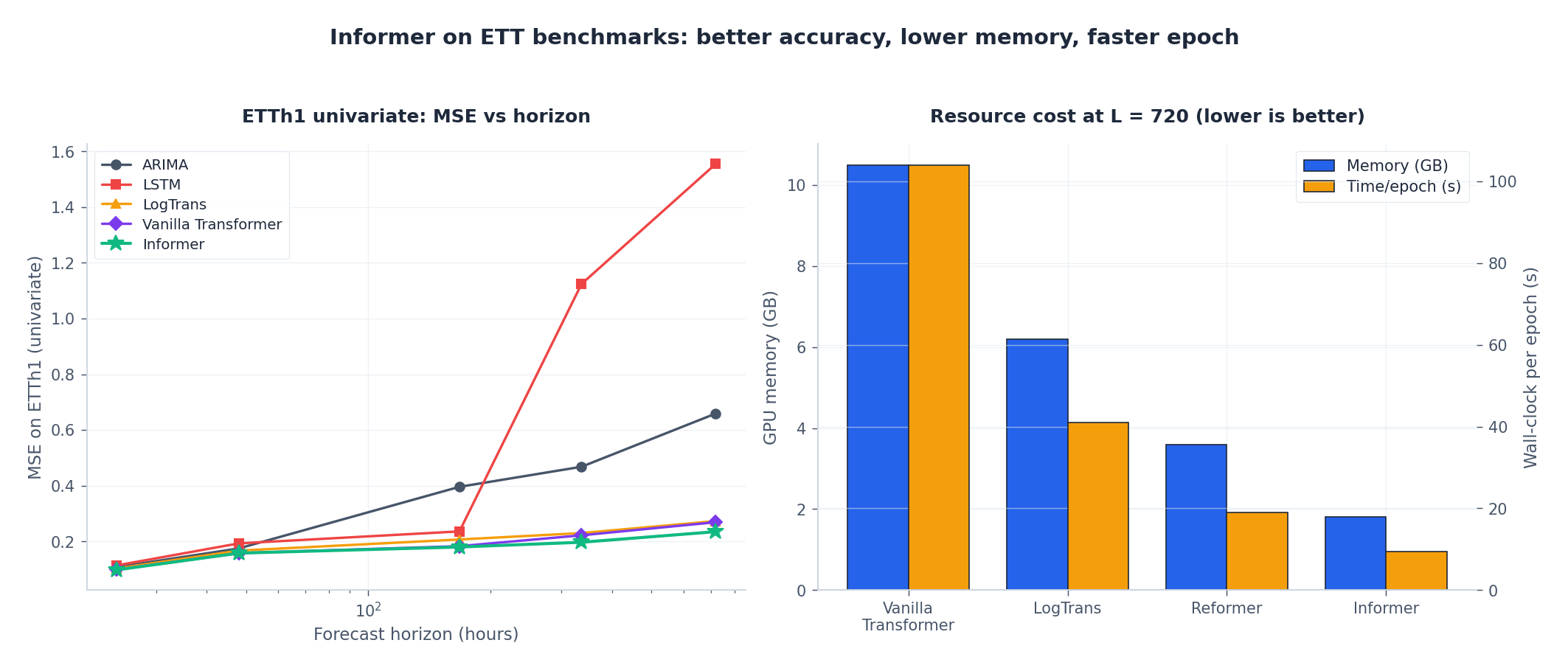

The numbers from the paper on the canonical ETT (Electricity Transformer Temperature) benchmark:

Two takeaways:

- Accuracy at long horizons. At horizon 720, Informer’s MSE is 0.235 vs the vanilla Transformer’s 0.269. Modest in absolute terms, but the vanilla Transformer at horizon 720 is already at the edge of trainability.

- Resource cost. At $L = 720$ , Informer uses 1.8 GB peak GPU memory and 9.5 s per epoch on a single V100; the vanilla Transformer needs 10.5 GB and 104 s per epoch. The difference is the entire reason Informer exists.

Hyperparameter cheat sheet#

| Hyperparameter | Default | Notes |
|---|---|---|
| d_model | 512 | Standard; 256 if memory-constrained. |
| n_heads | 8 | $d_k = d_\text{model} / n_\text{heads}$, so 64 here. |
| e_layers | 3 | More layers $\to$ more aggressive distilling; rarely worth it. |
| d_layers | 2 | Asymmetric: encoder does the heavy lifting. |
| d_ff | 2048 | 4x d_model is standard. |
| factor ($c$ in $u = c \log L$) | 5 | 3 for speed, 7 for accuracy; rarely change. |
| seq_len | 96 to 720 | Roughly equal to your weekly/monthly cycle length. |
| label_len | out_len / 2 | Anchors decoder; do not zero it out. |
| out_len | task-driven | What you actually want to forecast. |
| dropout | 0.05-0.1 | More on small datasets. |
| Optimizer | Adam, lr 1e-4 | Half the learning rate of a “normal” Transformer. |
| LR schedule | StepLR, gamma 0.5 every 30 epochs | Or cosine annealing. |
| Loss | MSE | L1 (MAE) is more robust to outliers. |
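The optimiser rows of the table translate into a few lines of PyTorch. The sketch below uses a placeholder `nn.Linear` in place of the forecaster and an empty loop body, both assumptions made only so the snippet runs on its own.

```python
import torch
import torch.nn as nn

model = nn.Linear(8, 8)   # placeholder standing in for the forecasting model
optimizer = torch.optim.Adam(model.parameters(), lr=1e-4)
scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=30, gamma=0.5)
criterion = nn.MSELoss()  # swap for nn.L1Loss() if outliers dominate

for epoch in range(60):
    # ... forward pass, criterion(...), loss.backward() for one epoch go here ...
    optimizer.step()
    scheduler.step()      # halves the learning rate every 30 epochs

final_lr = optimizer.param_groups[0]["lr"]
print(final_lr)
```

After 60 epochs the learning rate has been halved twice, so it sits at a quarter of its starting value.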

Common Pitfalls#

- Forgetting to mask the decoder self-attention. Without the causal mask, the decoder can peek at future placeholder tokens during training. Output looks miraculous at training time and garbage at test time.

- Distilling on a too-short input. If seq_len is short (e.g. 24), the two distilling steps of a 3-layer encoder shrink the encoder output to 6 positions, throwing away most of the context. Turn off distilling when seq_len < 96.

- Skipping per-window normalisation. Same issue as N-BEATS: standardise each window before feeding the model, inverse-transform at output.

- Not encoding time features. The temporal embedding (hour-of-day, day-of-week, month) is a major contributor to performance; without it the model has to infer time-of-day from raw data.

- Tiny label_len. Some implementations default to label_len = 0, which destroys decoder anchoring. The paper uses label_len = out_len / 2.
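For the normalisation pitfall, a per-window standardise and inverse-transform pair might look like the sketch below. The function names are hypothetical, and the inverse step assumes the forecast has the same feature columns as the input window.

```python
import torch


def standardise_window(x, eps=1e-5):
    """x: (batch, length, features). Each window is scaled by its own statistics."""
    mean = x.mean(dim=1, keepdim=True)
    std = x.std(dim=1, keepdim=True, unbiased=False) + eps
    return (x - mean) / std, mean, std


def inverse_transform(y_hat, mean, std):
    """Map model outputs back to the original scale of their window."""
    return y_hat * std + mean


x = torch.randn(4, 96, 7) * 10 + 3
z, mean, std = standardise_window(x)
restored = inverse_transform(z, mean, std)
print(z.mean(dim=1).abs().max().item(), (restored - x).abs().max().item())
```

The statistics come from the input window only, so nothing from the forecast horizon leaks into the normalisation.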

When not to use Informer#

- Short sequences ($L < 96$ ). ProbSparse and distilling have constant overhead; for short sequences a vanilla Transformer or an LSTM is simpler and just as fast.

- Cross-feature interactions are the main signal (multivariate with strong inter-feature dependencies). Informer attends along the time axis; for cross-feature attention, look at TFT or TimesNet.

- You need exact attention patterns for interpretability. ProbSparse loses the row-wise attention map for non-selected queries. If you must visualise full attention, use a vanilla Transformer.

- Streaming inference at high frequency (kHz, MHz). Informer is built for batch forecasting; streaming requires more specialised architectures.

- Very small datasets (<1k samples). Informer has tens of millions of parameters and will overfit. Use a smaller, less expressive model.

FAQ#

Why $u = c \log L$ specifically?#

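The $\log L$ scaling is what keeps the whole procedure at $\mathcal{O}(L \log L)$: each of the $L$ queries is scored against only $u$ sampled keys, and only $u$ queries then attend over all $L$ keys. The paper motivates this with the long-tail shape of attention scores, where a small fraction of query-key pairs carries most of the weight. The constant $c$ is a tunable sampling factor: $c = 5$ is the default, and in practice $c = 3$ also works fine.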
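To get a feel for how small $u$ is in practice, this plain-Python sketch evaluates $u = \lceil c \ln L \rceil$ for a few input lengths. The helper name `n_active` is hypothetical.

```python
import math


def n_active(L, c=5):
    """Number of queries that receive full attention: ceil(c * ln L), capped at L."""
    return min(L, math.ceil(c * math.log(L)))


for L in (96, 720, 2160):
    u = n_active(L)
    print(f"L={L:5d}  u={u:3d}  share of query rows computed exactly: {u / L:.1%}")
```

For $L = 96$, 720, and 2160 this gives $u = 23$, 33, and 39: the input grows more than twentyfold while the number of exactly computed rows does not even double.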

Does ProbSparse actually identify the right queries?#

Empirically yes — the $\max - \mathrm{mean}$ approximation correlates near-perfectly (>0.95 Spearman) with the exact KL divergence on attention distributions seen during training. The paper has the full ablation.

Why use the mean of $V$ for non-selected queries instead of zero?#

Because a uniform attention distribution evaluates to exactly $\frac{1}{L}\sum_j v_j$ . The mean is the analytically correct fill-in for queries we have classified as “uniform attention”.
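A quick numerical check of that identity, as a small PyTorch sketch with made-up values: a query whose scores are identical for every key gets softmax weights of $1/L$, so its output equals the column-wise mean of $V$.

```python
import torch

L, d = 6, 3
V = torch.arange(L * d, dtype=torch.float32).reshape(L, d)

scores = torch.zeros(1, L)                   # identical score for every key
weights = torch.softmax(scores, dim=-1)      # uniform: every weight is 1 / L
out = weights @ V

is_mean = torch.allclose(out, V.mean(dim=0, keepdim=True))
print(is_mean)   # True
```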

How is Informer different from Reformer / Performer / Linformer?#

- Reformer uses LSH-bucketed attention. Cost $\mathcal{O}(L \log L)$, but the buckets come from random hash projections rather than a query-specific importance measure.

- Performer uses random-feature kernel approximation. Cost $\mathcal{O}(L)$ but accuracy degrades on long sequences with sharp attention.

- Linformer projects keys/values to a fixed low-rank dimension. Cost $\mathcal{O}(L)$ but the projection is fixed at training time.

- Informer picks queries adaptively based on data. Cost $\mathcal{O}(L \log L)$ , best accuracy retention on time-series benchmarks.

Can I use Informer for multivariate input with variable encoder/decoder feature dimensions?#

Yes — enc_in and dec_in are independent. A common pattern is to feed all variables into the encoder and only the target variable into the decoder.

What about Autoformer / FEDformer?#

Both are direct successors. Autoformer (2021) replaces self-attention with autocorrelation along the series and adds an explicit decomposition layer. FEDformer (2022) adds frequency-domain attention. Both beat Informer on the same benchmarks but are more complex to implement; Informer is the right starting point.

Should I always pre-train on a large multi-series dataset?#

Helpful but not required. Unlike NLP, the domain gap between time-series datasets is large, and naive pre-training often hurts more than it helps. Training from scratch on the target domain is usually the better default.

Summary#

Informer is the architecture that made Transformers practical for long-horizon time-series forecasting. The three core ideas (ProbSparse self-attention, encoder distilling, and a generative decoder) compose into an end-to-end $\mathcal{O}(L \log L)$ system that beats the vanilla $\mathcal{O}(L^2)$ Transformer in both accuracy and wall-clock time on the long-horizon benchmarks discussed above.

For a forecasting task with $L > 96$ on a single GPU, Informer is the obvious starting point. Newer architectures (Autoformer, FEDformer, PatchTST) refine the recipe further, but each builds on Informer’s central observation that not every query needs full attention and that autoregressive decoding is a self-imposed bottleneck.

This concludes the time-series forecasting series. Across eight chapters we walked from classical ARIMA to LSTM to Transformer to TCN to N-BEATS to Informer; pick the architecture that matches your data, ensemble when it counts, and remember that simple baselines often beat fashionable models on small problems.

Where to go from here#

You’ve now walked through the full arc of time-series modeling, from classical to current. Chapter 1’s ARIMA showed you what classical statistics still does well and exactly where it breaks. Chapters 2-3 (LSTM, GRU) are where deep learning actually started working on sequences. Chapters 4-5 (attention, Transformer) unlocked direct interactions across arbitrary distances. Chapter 6 (TCN) proved pure convolution can hold its own. Chapter 7 (N-BEATS) was a reminder that architectural priors beat raw capacity. This chapter (Informer) brings Transformers into the regime where “long sequence” becomes practical.

If I had to compress eight chapters into one selection rule: short series with few variables and a need for interpretability, start with ARIMA or Prophet. Medium-length series with nonlinear interactions, GRU or TCN are good defaults. Need interpretable decomposition with thousands of related series? Reach for N-BEATS. Long horizon with many variables? Informer or PatchTST. The boundaries aren’t sharp, but they’ll save you two rounds of baseline comparisons on a new project.

Three directions worth pursuing if you want to go deeper. Toward interpretability: how N-BEATS, TFT, and SHAP apply to time series, especially in regulated domains where “the model said so” isn’t an answer. Toward multivariate and causality: VAR, Granger causality, and the more recent causal Transformer literature, which try to reason about why not just what. Toward production: putting these models behind real traffic, which is a different engineering problem entirely — online learning, drift detection, low-latency inference. Whichever path you pick, the eight architectures here give you a complete toolbox; the rest is project-specific judgment, and there’s no shortcut for that beyond running real data through them.

Thanks for sticking with the series.

References#

- Zhou, H. et al. (2021). Informer: Beyond Efficient Transformer for Long Sequence Time-Series Forecasting. AAAI Best Paper.

- Wu, H. et al. (2021). Autoformer: Decomposition Transformers with Auto-Correlation for Long-Term Series Forecasting. NeurIPS.

- Zhou, T. et al. (2022). FEDformer: Frequency Enhanced Decomposed Transformer for Long-term Series Forecasting. ICML.

- Nie, Y. et al. (2023). A Time Series is Worth 64 Words: Long-term Forecasting with Transformers (PatchTST). ICLR.

- Wang, S. et al. (2020). Linformer: Self-Attention with Linear Complexity. arXiv:2006.04768.

- Choromanski, K. et al. (2021). Rethinking Attention with Performers. ICLR.
