Time Series Forecasting (2): LSTM — Gate Mechanisms and Long-Term Dependencies

How LSTM's forget, input, and output gates solve the vanishing gradient problem. Complete PyTorch code for time series forecasting with practical tuning tips.

The first RNN I ever trained, back in 2017, was a small sales forecaster: 50 days in, the next day out. The forward pass ran cleanly, the loss went down, and yet the model had near-total amnesia about anything older than three days. The data had a clear monthly cycle. The model couldn’t see it. I assumed I needed more data, so I added rows and layers — and watched the training loss jump to NaN halfway through epoch two.

What I’d run into were the two textbook failure modes of vanilla RNNs: vanishing gradients and exploding gradients. An RNN compresses everything it remembers into one hidden vector and, at every step, multiplies that vector by a weight matrix. Do that 50 times and one of two things happens: the signal decays to near-zero (long-ago information is lost) or blows up to infinity (numerical overflow). RNNs aren’t choosing to forget distant context — the math forces them to.

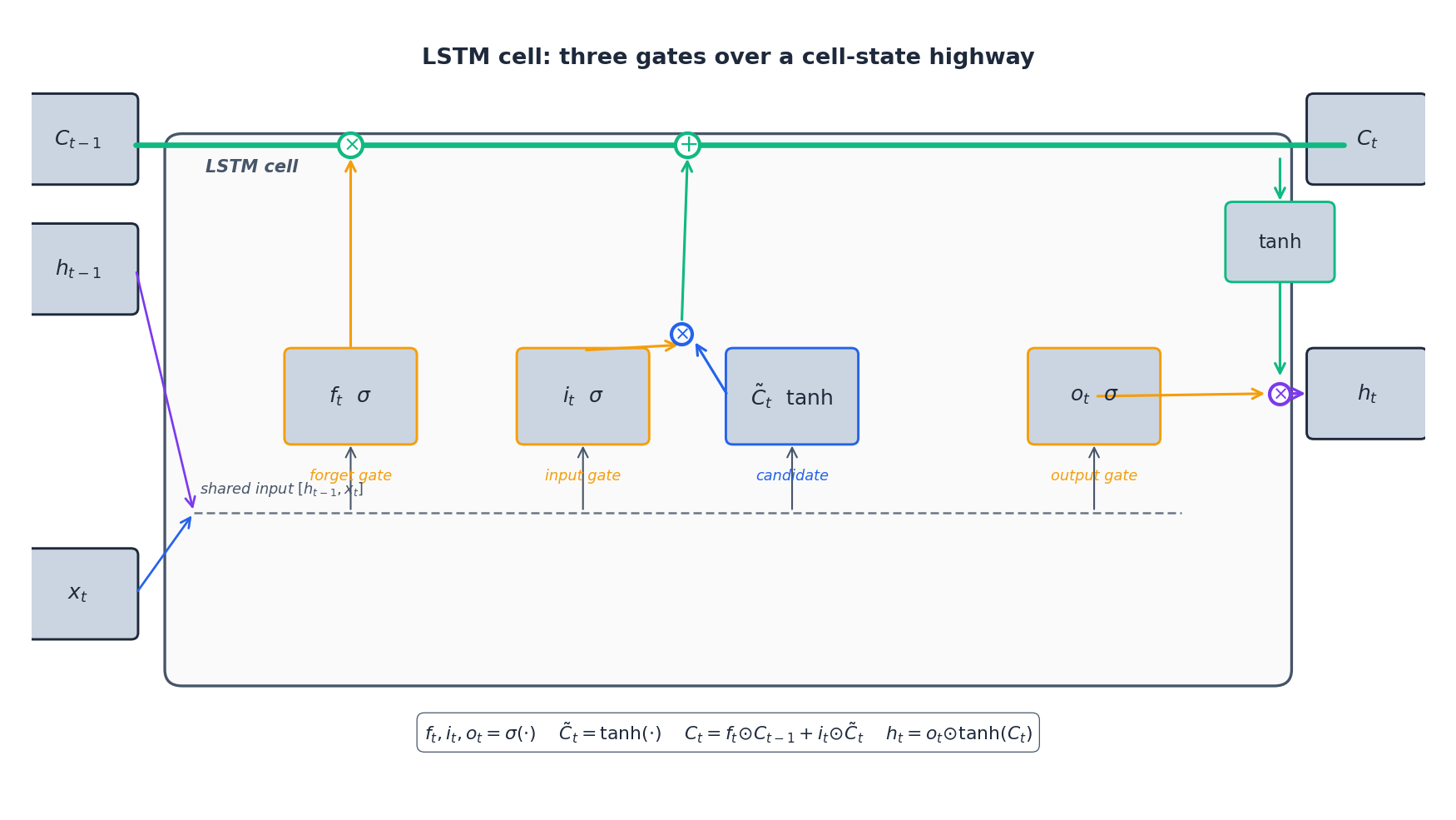

LSTM’s idea isn’t to tune the RNN better; it’s to change the structure. Alongside the hidden state it carries a cell state — an extra channel that information can ride almost untouched for hundreds of steps. Three gates (forget, input, output) read and write to that channel selectively. “Gating” sounds fancy but it’s just a sigmoid that outputs a number between 0 and 1: zero means “drop it entirely,” one means “keep it all,” 0.7 means “keep about 70%.” That single change is what made stable training over hundreds of steps possible, and it’s why LSTM was the de-facto deep-learning model for sequences from roughly 2015 to 2018. This chapter takes each gate apart and ends with a working sales forecaster in PyTorch.

What You Will Learn

- Why vanilla RNNs fail on long sequences and how LSTM fixes the gradient problem

- The intuition behind each gate (forget, input, output) and the cell-state “highway”

- How to structure inputs/outputs for one-step and multi-step time series forecasting

- Practical recipes: regularization, sequence length, bidirectional vs stacked LSTM, when to choose LSTM vs GRU

Prerequisites

- Basic understanding of neural networks (forward pass, backpropagation)

- Familiarity with PyTorch (`nn.Module`, tensors, optimizers)

- Part 1 of this series (helpful but not required)

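The Problem LSTM Solves

A vanilla RNN updates a single hidden state,

$$h_t = \tanh(W_h h_{t-1} + W_x x_t + b).$$

Backpropagating a loss at step $T$ to an earlier step $k$ multiplies one Jacobian per intervening step:

$$ \frac{\partial h_T}{\partial h_k} = \prod_{t=k+1}^{T} \mathrm{diag}\!\left(1 - h_t^2\right) W_h. $$

Every tanh derivative $1 - h_t^2$ lies in $(0, 1]$, so the size of this product is governed by $W_h$. Two regimes appear: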

- If the largest singular value of $W_h$ is below 1, the product shrinks exponentially and, in practice, the network cannot learn from anything more than ~10 steps in the past.

- If it is above 1, the product can grow exponentially; gradients explode and training diverges.
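A quick NumPy sketch shows how fast both regimes kick in. It takes the most favorable case for the gradient, where every tanh derivative sits at its maximum of 1 (pre-activations near zero), so the product above reduces to $W_h^{T-k}$. `W_h` is built as a random orthogonal matrix times a scale, which makes every singular value equal to that scale; the function name and sizes are illustrative.

```python
import numpy as np


def linearized_jacobian_norm(scale: float, steps: int = 50, hidden: int = 32, seed: int = 0) -> float:
    """Spectral norm of W_h ** steps: the RNN Jacobian product when every
    tanh derivative is at its maximum of 1 (pre-activations near zero)."""
    rng = np.random.default_rng(seed)
    # QR of a Gaussian matrix yields a random orthogonal Q; scale * Q has
    # every singular value equal to `scale`.
    q, _ = np.linalg.qr(rng.standard_normal((hidden, hidden)))
    w_h = scale * q
    jacobian = np.linalg.matrix_power(w_h, steps)
    return float(np.linalg.norm(jacobian, 2))  # largest singular value


for s in (0.9, 1.0, 1.1):
    print(f"singular value {s}: gradient norm after 50 steps = {linearized_jacobian_norm(s):.4g}")
```

The three printed norms should be about 0.0052, 1 and 117, that is $0.9^{50}$, $1^{50}$ and $1.1^{50}$: a 10% change in the singular value moves the 50-step gradient by more than four orders of magnitude.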

LSTM (Hochreiter & Schmidhuber, 1997; the forget gate was added by Gers et al., 2000) replaces the single recurrent state with two states and three learned gates that decide what to remember, what to overwrite, and what to expose. The result is a near-additive update over time, which lets gradients survive the long walk back.

Anatomy of an LSTM Cell

At each step the cell reads the previous hidden state and the current input, $[h_{t-1}, x_t]$, and computes three gates and a candidate memory:

$$ \begin{aligned} f_t &= \sigma(W_f [h_{t-1}, x_t] + b_f) && \text{forget gate} \\ i_t &= \sigma(W_i [h_{t-1}, x_t] + b_i) && \text{input gate} \\ \tilde C_t &= \tanh(W_C [h_{t-1}, x_t] + b_C) && \text{candidate} \\ o_t &= \sigma(W_o [h_{t-1}, x_t] + b_o) && \text{output gate} \end{aligned} $$

It then updates its two states:

$$ C_t = f_t \odot C_{t-1} + i_t \odot \tilde C_t, \qquad h_t = o_t \odot \tanh(C_t). $$

The product $\odot$ is element-wise. Read this in plain English: erase the fraction $1 - f_t$ of old memory, write the fraction $i_t$ of fresh candidate, then look at the result through the lens $o_t$.
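The equations map almost line for line onto code. Below is a minimal PyTorch sketch of one time step. Instead of concatenating $[h_{t-1}, x_t]$ it keeps separate input and recurrent weight matrices, which is mathematically equivalent and matches the parameter layout of `torch.nn.LSTMCell` (gates stacked in the order input, forget, candidate, output). The helper name `lstm_step` is illustrative.

```python
import torch


def lstm_step(x, h_prev, c_prev, w_ih, w_hh, b_ih, b_hh):
    """One LSTM time step; weights stacked as [input | forget | candidate | output]."""
    # All four pre-activations at once: one matmul for x_t, one for h_{t-1}.
    gates = x @ w_ih.T + b_ih + h_prev @ w_hh.T + b_hh
    i_pre, f_pre, g_pre, o_pre = gates.chunk(4, dim=-1)
    i_t = torch.sigmoid(i_pre)      # input gate: how much of the candidate to write
    f_t = torch.sigmoid(f_pre)      # forget gate: how much old memory to keep
    c_tilde = torch.tanh(g_pre)     # candidate memory
    o_t = torch.sigmoid(o_pre)      # output gate: how much memory to expose
    c_t = f_t * c_prev + i_t * c_tilde  # additive cell-state update
    h_t = o_t * torch.tanh(c_t)
    return h_t, c_t


# Check against PyTorch's own cell using the same weights.
torch.manual_seed(0)
cell = torch.nn.LSTMCell(input_size=3, hidden_size=5)
x, h0, c0 = torch.randn(2, 3), torch.randn(2, 5), torch.randn(2, 5)
with torch.no_grad():
    h_ref, c_ref = cell(x, (h0, c0))
    h_new, c_new = lstm_step(x, h0, c0, cell.weight_ih, cell.weight_hh, cell.bias_ih, cell.bias_hh)
print(torch.allclose(h_new, h_ref, atol=1e-6), torch.allclose(c_new, c_ref, atol=1e-6))
```

Both checks should print `True`: the hand-written step and `nn.LSTMCell` compute the same function.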

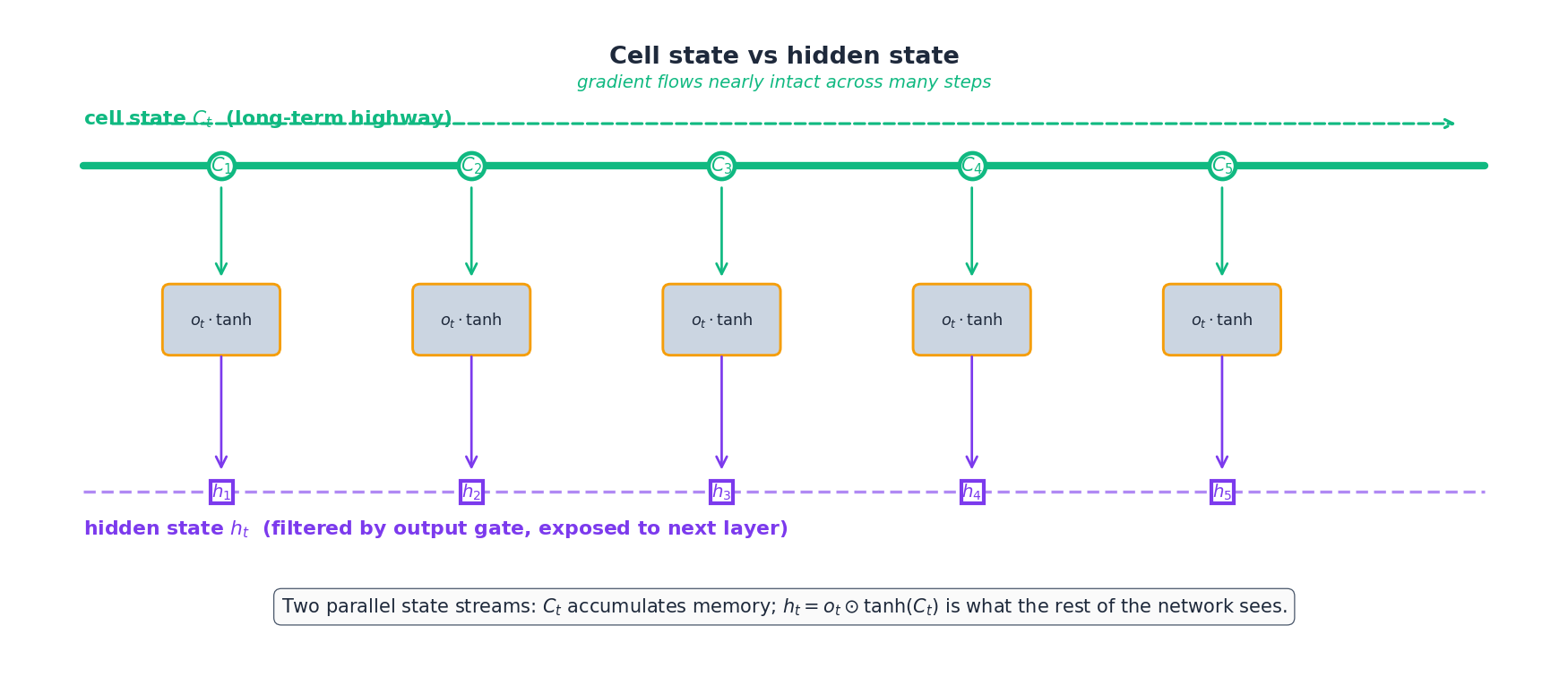

Why the cell state is special

The hidden state $h_t$ is what the rest of the network sees, but the cell state $C_t$ is where memory actually lives. It runs as an unbroken horizontal line across time and is touched only by element-wise multiplication ($f_t$) and addition ($i_t \odot \tilde C_t$), never by a fresh matrix multiplication. That single design choice is the reason gradients survive across hundreds of steps.

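Gradient flow, made explicit

Along the direct path through the cell state (ignoring the indirect routes through $h_{t-1}$ and the gates), the one-step Jacobian is just the forget gate:

$$ \frac{\partial C_t}{\partial C_{t-1}} = \mathrm{diag}(f_t), $$

so over $T - k$ steps the product of recurrent Jacobians becomes a product of forget gates:

$$ \frac{\partial C_T}{\partial C_k} = \prod_{t=k+1}^{T} \mathrm{diag}(f_t). $$

Whenever the model wants to remember, it can learn to push $f_t$ close to 1 for the relevant coordinates, and the corresponding gradient stays close to 1 too. That is the entire trick.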
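A short autograd check makes the product concrete. This sketch holds the forget gate at a constant value and feeds in fresh candidates that do not depend on the past, so the direct path is the only path; $\partial C_T / \partial C_0$ then equals $f^{T}$. With $f = 0.99$ a signal 100 steps back keeps about 37% of its gradient ($0.99^{100} \approx 0.366$), while $f = 0.9$ leaves roughly $2.7 \times 10^{-5}$. The constant gates and the function name are simplifying assumptions.

```python
import torch


def cell_state_gradient(forget: float, steps: int = 100, seed: int = 0) -> float:
    """d C_T / d C_0 for C_t = f * C_{t-1} + i * C~_t with constant f and i."""
    torch.manual_seed(seed)
    c0 = torch.tensor(1.0, requires_grad=True)
    c = c0
    input_gate = 0.5  # constant, for illustration only
    for _ in range(steps):
        candidate = torch.tanh(torch.randn(()))  # new information, independent of c
        c = forget * c + input_gate * candidate
    c.backward()
    return c0.grad.item()


for f in (0.9, 0.99, 1.0):
    print(f"forget gate {f}: gradient after 100 steps = {cell_state_gradient(f):.3g}")
```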

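A Minimal PyTorch Implementation

For a univariate or multivariate forecaster, the model itself is small: an `nn.LSTM` encoder followed by a linear head that maps the final hidden state to the forecast.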

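A minimal sketch of such a model, assuming inputs shaped `(batch, seq_len, n_features)` and a target of the next `horizon` values. The class name `LSTMForecaster` is illustrative, and its defaults (64 hidden units, 2 layers, dropout 0.2) simply mirror the training-recipe table later in this chapter.

```python
import torch
from torch import nn


class LSTMForecaster(nn.Module):
    """LSTM encoder + linear head: (batch, seq_len, n_features) -> (batch, horizon)."""

    def __init__(self, n_features: int, hidden_size: int = 64, num_layers: int = 2,
                 dropout: float = 0.2, horizon: int = 1):
        super().__init__()
        self.lstm = nn.LSTM(
            input_size=n_features,
            hidden_size=hidden_size,
            num_layers=num_layers,
            batch_first=True,                            # (batch, seq_len, features)
            dropout=dropout if num_layers > 1 else 0.0,  # inter-layer dropout only
        )
        self.head = nn.Linear(hidden_size, horizon)      # hidden state -> forecast

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        output, _ = self.lstm(x)          # output: (batch, seq_len, hidden_size)
        last_hidden = output[:, -1, :]    # top layer's state at the final time step
        return self.head(last_hidden)


model = LSTMForecaster(n_features=3, horizon=1)
y_hat = model(torch.randn(8, 30, 3))  # 8 windows, lookback 30, 3 features
print(y_hat.shape)  # torch.Size([8, 1])
```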

A few non-obvious points:

- `batch_first=True` makes the input shape `(batch, seq_len, features)`, which is the convention everyone outside the original PyTorch examples expects.

- The built-in `dropout` argument is inter-layer only: it does not drop activations between time steps. For recurrent dropout, use `nn.LSTMCell` and apply a fixed mask yourself, or use the `weight_drop` trick from AWD-LSTM.

- Initialize the forget-gate bias to +1 so the network starts in “remember” mode. PyTorch does not do this by default.

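One way to apply the forget-gate note above, as a sketch: `nn.LSTM` keeps two bias vectors per layer (`bias_ih_l{k}` and `bias_hh_l{k}`), each holding the four gates stacked in the order input, forget, candidate, output, and the gate sees their sum. The helper below (a hypothetical name) sets the forget slice of one vector to the target value and of the other to 0, so the forget gate starts near $\sigma(1) \approx 0.73$.

```python
import torch
from torch import nn


def init_forget_bias(lstm: nn.LSTM, value: float = 1.0) -> None:
    """Set the effective forget-gate bias of every layer (and direction) to `value`."""
    h = lstm.hidden_size
    with torch.no_grad():
        for name, param in lstm.named_parameters():
            # Stacked gate order in PyTorch: input, forget, candidate (g), output.
            if name.startswith("bias_ih"):
                param[h:2 * h].fill_(value)
            elif name.startswith("bias_hh"):
                param[h:2 * h].fill_(0.0)  # bias_ih + bias_hh is what the gate sees


lstm = nn.LSTM(input_size=3, hidden_size=16, num_layers=2, batch_first=True)
init_forget_bias(lstm)
effective = (lstm.bias_ih_l0[16:32] + lstm.bias_hh_l0[16:32]).detach()
print(effective.unique())
```

The printed tensor should hold a single value, 1.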

From Cell to Forecaster

For time series, the loop is:

- Window the series into overlapping sequences of length $L$ — the lookback.

- Standardize each feature using the training set’s mean and standard deviation.

- Train the model to predict the next value (one-step) or the next $H$ values (multi-step).

- Validate on a chronologically held-out tail — never shuffle.
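A NumPy sketch of the first three steps for a univariate series. The helper name `make_windows`, the toy series, the lookback of 30 and the 80/20 chronological cut are all illustrative. The mean and standard deviation come from the training part only, and each window is assigned to training or validation by where its target falls.

```python
import numpy as np


def make_windows(series: np.ndarray, lookback: int, horizon: int = 1):
    """Slice a (time, features) array into overlapping lookback -> horizon pairs.

    Returns X: (n, lookback, features), y: (n, horizon) for feature 0, and the
    time index of each window's first target step.
    """
    X, y, target_start = [], [], []
    for start in range(len(series) - lookback - horizon + 1):
        end = start + lookback
        X.append(series[start:end])
        y.append(series[end:end + horizon, 0])
        target_start.append(end)
    return np.stack(X), np.stack(y), np.array(target_start)


# Toy series: a 30-day cycle plus noise, shape (time, 1).
rng = np.random.default_rng(0)
t = np.arange(1000)
series = (10 + np.sin(2 * np.pi * t / 30) + 0.1 * rng.standard_normal(t.size))[:, None]

lookback, horizon, cut = 30, 1, 800      # chronological cut: first 80% for training
mean = series[:cut].mean(axis=0)         # statistics from the training part only
std = series[:cut].std(axis=0)
scaled = (series - mean) / std

X, y, target_start = make_windows(scaled, lookback, horizon)
is_train = target_start + horizon <= cut  # every target step before the cut
is_val = target_start >= cut              # every target step after the cut
X_train, y_train = X[is_train], y[is_train]
X_val, y_val = X[is_val], y[is_val]
print(X_train.shape, X_val.shape)  # (770, 30, 1) (200, 30, 1)
```

Validation windows may look back into the training period for their inputs; that is fine, because those values are already known when the forecast is made.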

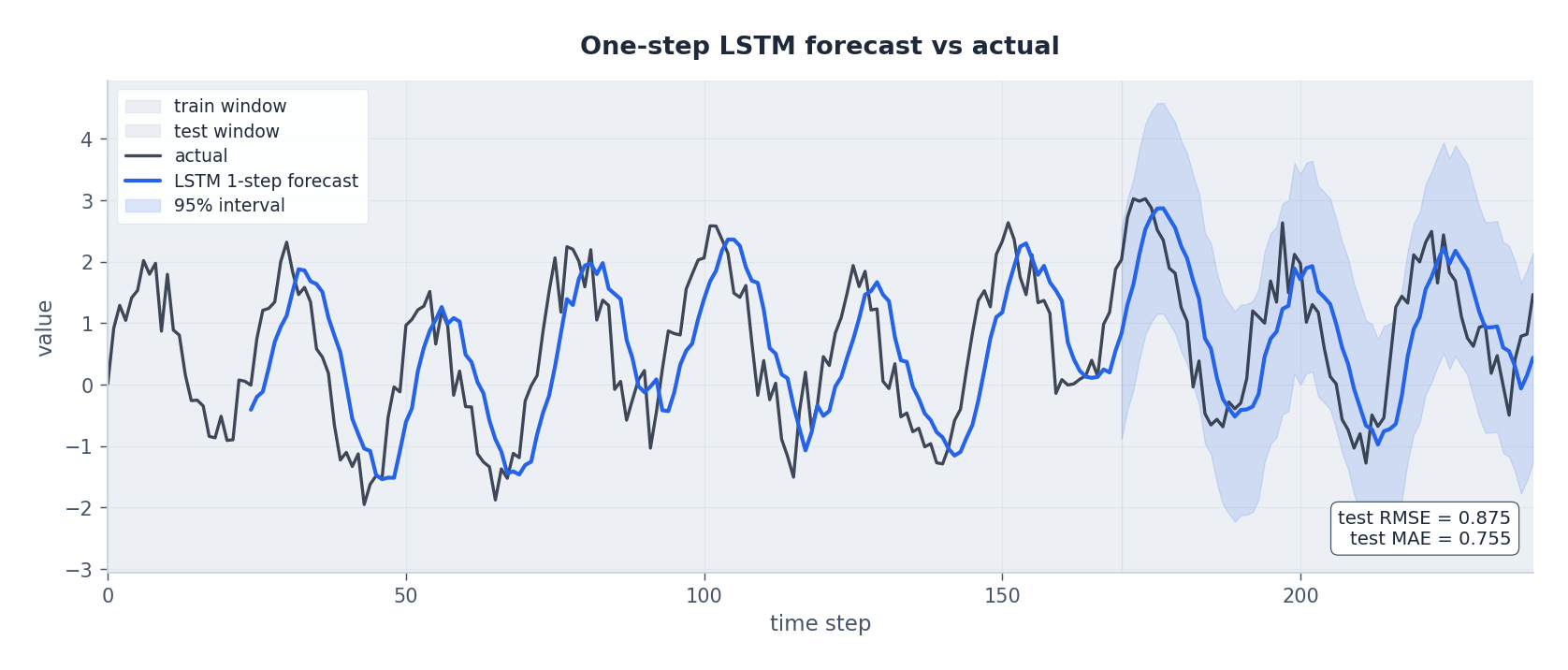

A clean one-step forecast on a noisy seasonal signal looks like this:

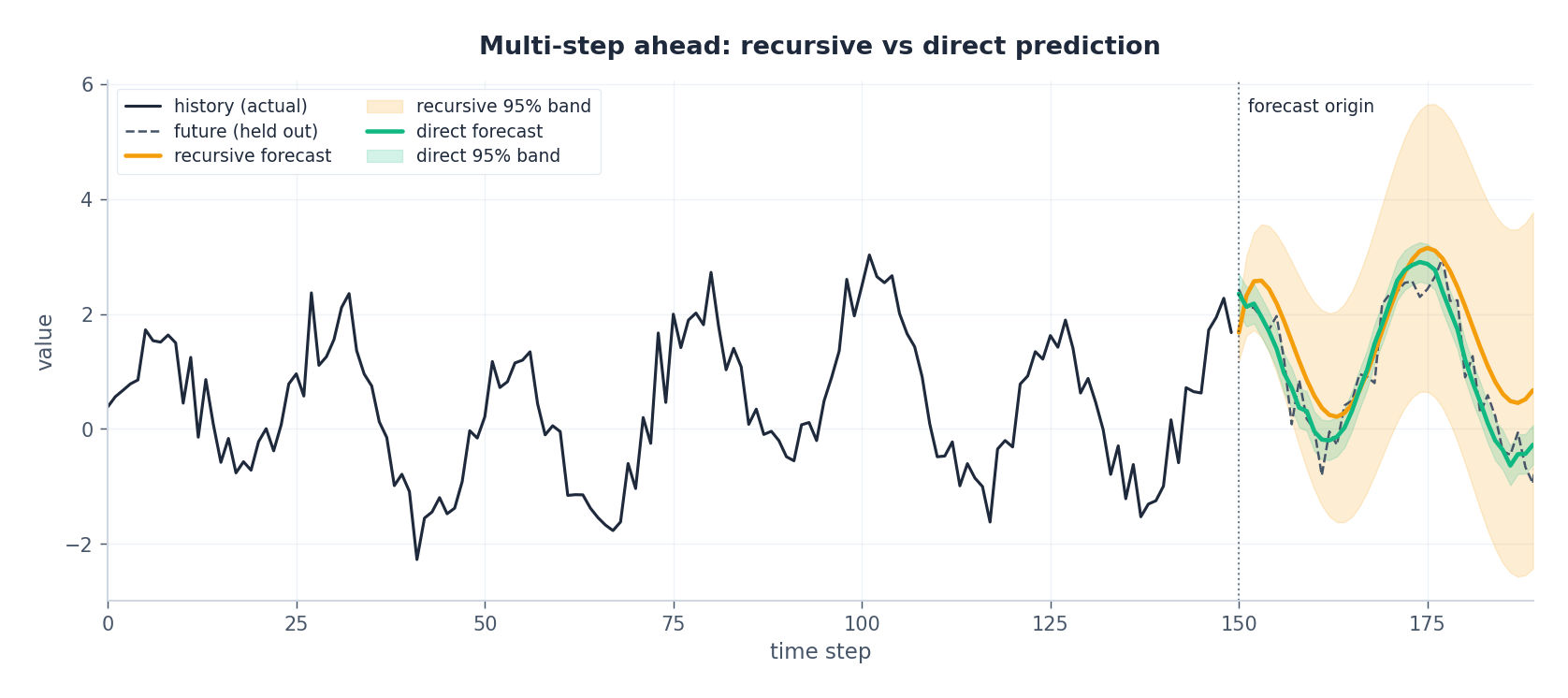

Multi-step ahead: recursive vs direct

For horizon $H > 1$ there are two common strategies:

| Strategy | How | Trade-off |
|---|---|---|
| Recursive | Train one one-step model; feed its prediction back as input. | Simple, but errors compound: if every step added an independent error, the accumulated error's standard deviation would grow like $\sqrt{H}$ (its variance like $H$). |
| Direct | Train $H$ separate heads (or a single model with an $H$-dim output) to predict each future step directly. | More parameters, but no error feedback loop. |
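The recursive strategy takes only a few lines once a one-step model exists. This sketch assumes a univariate model mapping `(batch, L, 1)` to `(batch, 1)`; `recursive_forecast` is an illustrative name, and `trend_step` is a toy linear-extrapolation stand-in for a trained network.

```python
import torch


@torch.no_grad()
def recursive_forecast(one_step_model, window: torch.Tensor, horizon: int) -> torch.Tensor:
    """Roll a one-step model forward `horizon` times, feeding each prediction back in.

    one_step_model: (batch, L, 1) -> (batch, 1).  window: (batch, L, 1).
    Returns (batch, horizon).
    """
    preds = []
    for _ in range(horizon):
        next_value = one_step_model(window)  # (batch, 1)
        preds.append(next_value)
        # Slide the window: drop the oldest step, append the prediction.
        # From here on, any error in next_value is part of the model's input.
        window = torch.cat([window[:, 1:, :], next_value.unsqueeze(-1)], dim=1)
    return torch.cat(preds, dim=1)


def trend_step(window: torch.Tensor) -> torch.Tensor:
    """Toy stand-in for a trained one-step model: linear extrapolation."""
    return 2 * window[:, -1, :] - window[:, -2, :]


window = torch.arange(4.0).reshape(1, 4, 1)  # observed values 0, 1, 2, 3
print(recursive_forecast(trend_step, window, horizon=3))  # tensor([[4., 5., 6.]])
```

A direct model would instead emit all $H$ values from the observed window in a single call, so none of its own predictions are fed back as input.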

A useful hybrid is seq2seq with teacher forcing: an LSTM encoder reads the lookback window into a final $(h, C)$ pair, an LSTM decoder generates $H$ outputs one at a time, and during training the decoder receives the true previous value (not its own prediction) with a probability that is lowered as training progresses (scheduled sampling). This is a common design in production LSTM forecasters.
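A compact sketch of that encoder-decoder, under stated assumptions: univariate input and target, a single-layer LSTM on each side, a decoder seeded with the last observed value, and a `teacher_forcing` probability you would decay over epochs (the decay schedule itself is left out). Class and argument names are illustrative.

```python
import random

import torch
from torch import nn


class Seq2SeqForecaster(nn.Module):
    def __init__(self, hidden_size: int = 64):
        super().__init__()
        self.encoder = nn.LSTM(1, hidden_size, batch_first=True)
        self.decoder = nn.LSTMCell(1, hidden_size)
        self.head = nn.Linear(hidden_size, 1)

    def forward(self, history, horizon, target=None, teacher_forcing=0.0):
        """history: (batch, L, 1); target: (batch, horizon) or None -> (batch, horizon)."""
        _, (h, c) = self.encoder(history)
        h, c = h[-1], c[-1]               # final (h, C) of the encoder
        step_input = history[:, -1, :]    # decoder starts from the last observed value
        outputs = []
        for t in range(horizon):
            h, c = self.decoder(step_input, (h, c))
            y_t = self.head(h)            # (batch, 1)
            outputs.append(y_t)
            if target is not None and random.random() < teacher_forcing:
                step_input = target[:, t:t + 1]  # teacher forcing: feed the true value
            else:
                step_input = y_t                 # feed the model's own prediction
        return torch.cat(outputs, dim=1)


model = Seq2SeqForecaster(hidden_size=32)
history, future = torch.randn(16, 48, 1), torch.randn(16, 12)
train_pred = model(history, horizon=12, target=future, teacher_forcing=0.5)
test_pred = model(history, horizon=12)  # inference: always feeds its own predictions
print(train_pred.shape, test_pred.shape)
```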

Architectural Variants

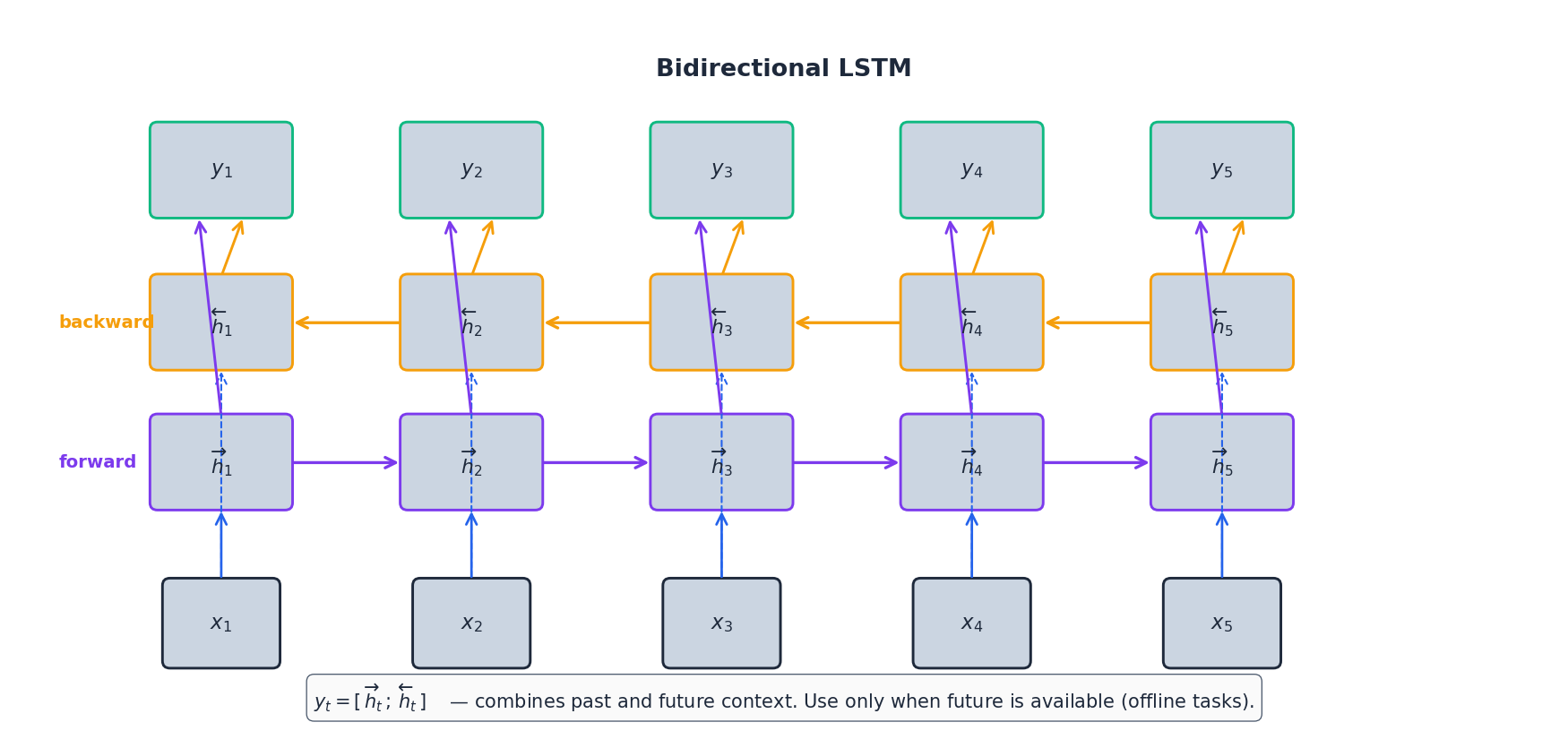

Bidirectional LSTM

A bidirectional LSTM runs one LSTM forward and a second one backward over the sequence and concatenates their hidden states at each step:

$$ y_t = [\,\overrightarrow{h}_t \,;\, \overleftarrow{h}_t\,]. $$

Use it for sequence labeling, classification, missing-value imputation: any setting where the whole sequence is in hand at inference time. Do not use it for real-time forecasting: the backward state at step $t$ is computed from $x_{t+1}, x_{t+2}, \dots$, inputs that do not exist yet when you forecast, so training on them is data leakage.
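The leakage is easy to demonstrate. With `bidirectional=True`, `nn.LSTM` returns outputs of width `2 * hidden_size`, forward direction first and backward direction second. In this sketch, perturbing only the last input step leaves the forward half at an earlier step untouched but changes the backward half, because the backward pass has already read that "future" value. Sizes and the chosen step are arbitrary.

```python
import torch
from torch import nn

torch.manual_seed(0)
hidden = 8
bilstm = nn.LSTM(input_size=1, hidden_size=hidden, batch_first=True, bidirectional=True)

x = torch.randn(1, 12, 1)
x_future_changed = x.clone()
x_future_changed[:, -1, :] += 1.0  # alter only the final observation

with torch.no_grad():
    out, _ = bilstm(x)                          # (batch, seq_len, 2 * hidden)
    out_changed, _ = bilstm(x_future_changed)

t = 10  # a step before the altered one
fwd_diff = (out[:, t, :hidden] - out_changed[:, t, :hidden]).abs().max().item()
bwd_diff = (out[:, t, hidden:] - out_changed[:, t, hidden:]).abs().max().item()
print(f"forward-direction change at t={t}:  {fwd_diff:.2e}")  # cannot see the future
print(f"backward-direction change at t={t}: {bwd_diff:.2e}")  # already saw the future
```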

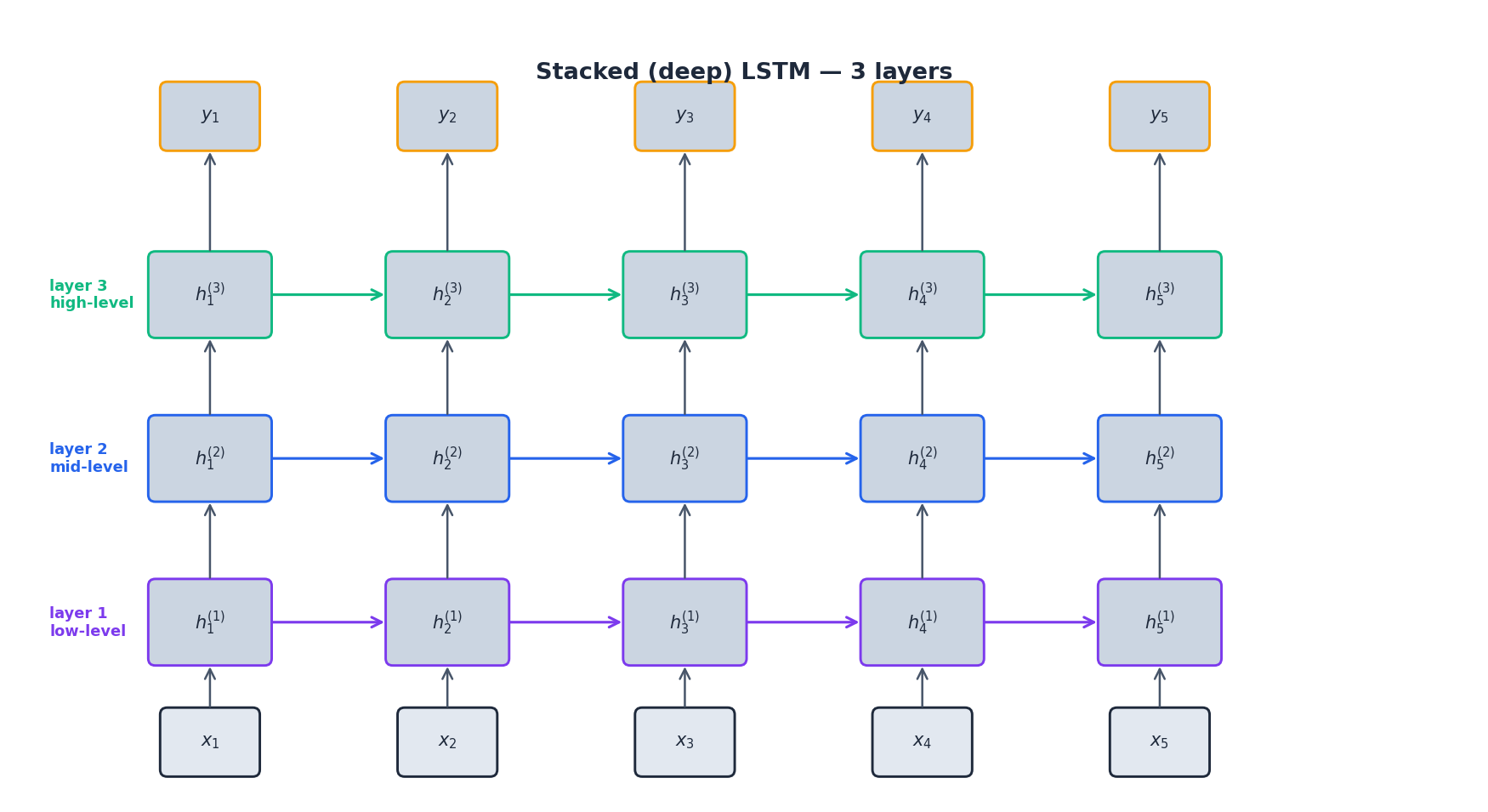

Stacked (deep) LSTM

Stacking layers lets later layers operate on smoother, slower features. Layer 1 sees the raw input; layer 2 sees the hidden states of layer 1, and so on.

In practice, 2–3 layers is the sweet spot for forecasting. Going deeper rarely helps without residual connections, and it markedly increases the risk of vanishing gradients along the depth axis (between layers, not time).
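If you do go to three layers or more, one common way to add residual connections is to run single-layer LSTMs in sequence and add each layer's input to its output. A minimal sketch, assuming every layer has the same width so the shapes match (a linear projection lifts the raw features to that width first); the class name and the placement of dropout are illustrative choices.

```python
import torch
from torch import nn


class ResidualStackedLSTM(nn.Module):
    """Stack of single-layer LSTMs with a skip connection around each layer."""

    def __init__(self, n_features: int, hidden_size: int = 64, num_layers: int = 3,
                 dropout: float = 0.2):
        super().__init__()
        self.input_proj = nn.Linear(n_features, hidden_size)  # match widths for the skips
        self.layers = nn.ModuleList(
            nn.LSTM(hidden_size, hidden_size, batch_first=True) for _ in range(num_layers)
        )
        self.dropout = nn.Dropout(dropout)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        z = self.input_proj(x)  # (batch, seq_len, hidden_size)
        for lstm in self.layers:
            out, _ = lstm(z)
            # Residual add: the gradient gets an identity path down the depth axis.
            z = z + self.dropout(out)
        return z  # (batch, seq_len, hidden_size)


encoder = ResidualStackedLSTM(n_features=4, hidden_size=32, num_layers=3)
print(encoder(torch.randn(2, 50, 4)).shape)  # torch.Size([2, 50, 32])
```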

Training Recipe That Actually Works#

The following defaults work on most univariate or moderately multivariate forecasting problems with a few thousand to a few hundred thousand training windows:

| Knob | Default | When to deviate |

|---|---|---|

| Lookback $L$ | 2–3 dominant periods of the series | Use autocorrelation to pick — see below |

hidden_size | 64 | Up to 128–256 for $\geq$ 50 k windows |

num_layers | 2 | 1 if data is small; 3 only with residual connections |

dropout | 0.2 | Up to 0.5 if you see overfitting |

| Optimizer | Adam, lr = 1e-3 | Switch to AdamW + cosine schedule for long runs |

| Batch size | 32–64 | Larger only if you scale lr like $\sqrt{B/32}$ |

| Loss | MSE or Huber | Huber if the target has heavy tails / outliers |

| Gradient clipping | clip_grad_norm_(..., 1.0) | Always — cheap insurance against exploding updates |

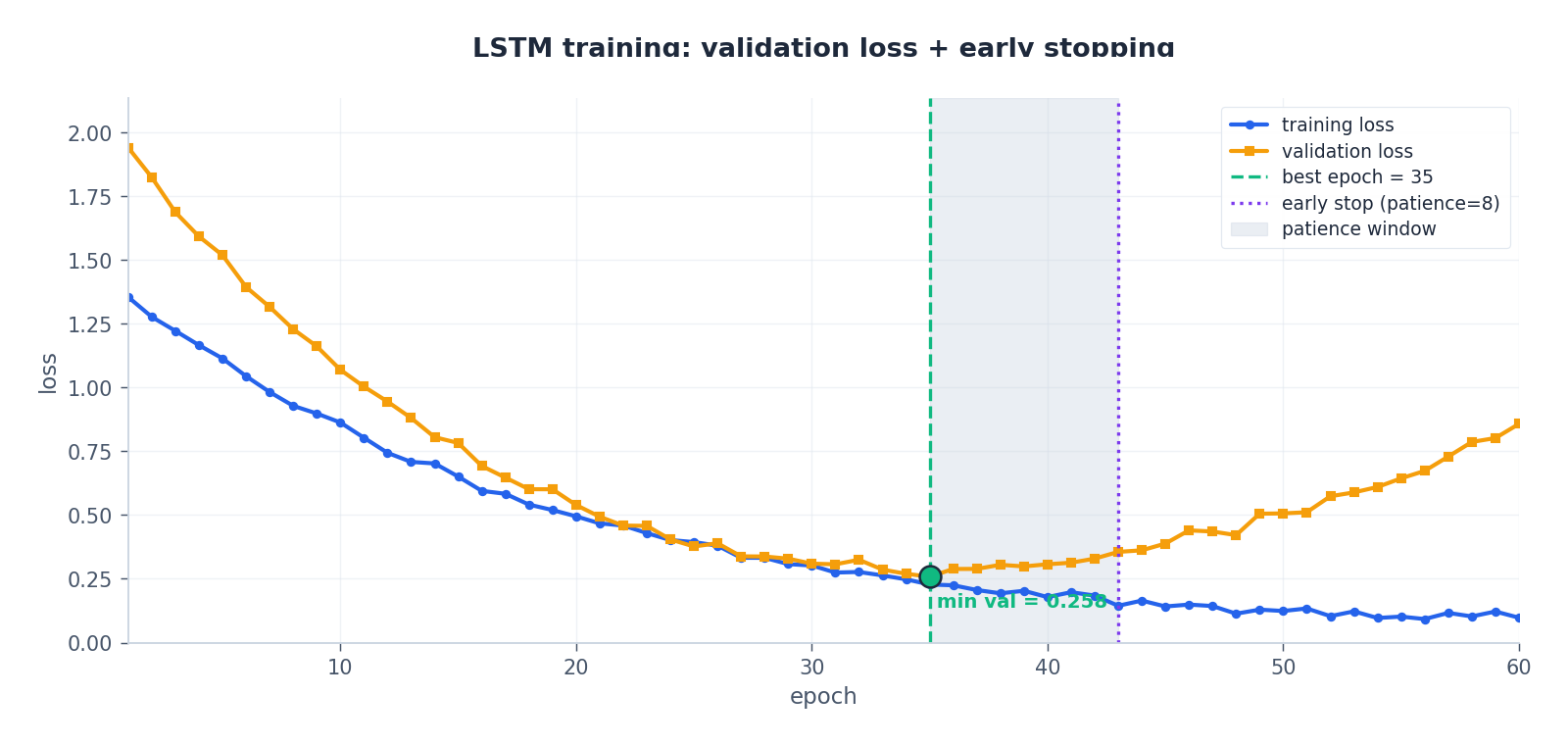

| Early stopping | patience = 8–10 | On validation loss, with model-state restore |

Picking the lookback

Plot the autocorrelation function and find the largest lag $k$ where $|\rho_k|$ is still above a small threshold (say 0.1). Round up to the next dominant seasonal period. For hourly data with daily and weekly cycles, 168 (one week) is a natural ceiling.
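A NumPy sketch of that rule, assuming a univariate series with a known seasonal period. The function names, the 0.1 default threshold and the `max_lag` search limit are illustrative; "round up" is implemented as rounding up to a whole number of periods.

```python
import numpy as np


def autocorrelation(x: np.ndarray, max_lag: int) -> np.ndarray:
    """Sample autocorrelation rho_k for k = 0..max_lag."""
    x = x - x.mean()
    denom = np.dot(x, x)
    return np.array([np.dot(x[: len(x) - k], x[k:]) / denom for k in range(max_lag + 1)])


def pick_lookback(x: np.ndarray, period: int, max_lag: int, threshold: float = 0.1) -> int:
    """Largest lag with |rho_k| > threshold, rounded up to a whole number of periods."""
    rho = autocorrelation(x, max_lag)
    significant = np.nonzero(np.abs(rho[1:]) > threshold)[0] + 1  # lags >= 1
    last_lag = int(significant.max()) if significant.size else 1
    return int(np.ceil(last_lag / period) * period)


rng = np.random.default_rng(0)
hours = np.arange(24 * 60)  # 60 days of hourly data with a daily cycle
hourly = np.sin(2 * np.pi * hours / 24) + 0.5 * rng.standard_normal(hours.size)
print(pick_lookback(hourly, period=24, max_lag=168))  # search up to one week
```

A strong daily cycle keeps the ACF high at every multiple of 24, so on this toy series the rule runs into the one-week search limit and returns 168, the natural ceiling mentioned above.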

Anatomy of a good training run

A healthy LSTM training curve has the validation loss following the training loss closely until it bottoms out, then drifting upward as the model starts to memorize. Early stopping triggers a fixed number of epochs after the best validation loss and restores the best weights.
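A minimal training loop that applies the defaults from the table: Adam at 1e-3, Huber loss, gradient clipping at 1.0, and patience-based early stopping that restores the best weights. It assumes the data are already windowed and split chronologically, and that `model` maps `(batch, L, features)` to `(batch, horizon)`; `fit` and its arguments are illustrative names, and the usage below swaps in a tiny linear stand-in model so it runs in a second.

```python
import copy

import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset


def fit(model, X_train, y_train, X_val, y_val, max_epochs=200, patience=10, batch_size=64):
    """Train with Adam + Huber + clipping; stop after `patience` epochs without improvement."""
    optimizer = torch.optim.Adam(model.parameters(), lr=1e-3)
    loss_fn = nn.HuberLoss()
    # Shuffle only among training windows; the train/val split itself stays chronological.
    loader = DataLoader(TensorDataset(X_train, y_train), batch_size=batch_size, shuffle=True)
    best_loss, best_state, bad_epochs = float("inf"), None, 0
    for _ in range(max_epochs):
        model.train()
        for xb, yb in loader:
            optimizer.zero_grad()
            loss = loss_fn(model(xb), yb)
            loss.backward()
            nn.utils.clip_grad_norm_(model.parameters(), max_norm=1.0)  # tame exploding updates
            optimizer.step()
        model.eval()
        with torch.no_grad():
            val_loss = loss_fn(model(X_val), y_val).item()
        if val_loss < best_loss:
            best_loss, best_state, bad_epochs = val_loss, copy.deepcopy(model.state_dict()), 0
        else:
            bad_epochs += 1
            if bad_epochs >= patience:
                break  # no improvement for `patience` epochs
    if best_state is not None:
        model.load_state_dict(best_state)  # restore the best weights, not the last ones
    return best_loss


# Toy usage with a stand-in model (any module mapping (batch, L, 1) -> (batch, 1) works).
torch.manual_seed(0)
X = torch.randn(256, 20, 1)
y = 0.5 * X[:, -1, :]
model = nn.Sequential(nn.Flatten(), nn.Linear(20, 1))
best = fit(model, X[:200], y[:200], X[200:], y[200:], max_epochs=5, patience=2)
print(f"best validation loss: {best:.4f}")
```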

LSTM vs GRU: Which Should You Reach For?

| Aspect | LSTM | GRU |
|---|---|---|
| Gates | 3 (forget, input, output) | 2 (reset, update) |
| Separate cell state $C_t$ | Yes | No |
| Parameters per cell | 4 weight matrices | 3 weight matrices |
| Speed | Baseline | ~25% faster, comparable accuracy |
| Best for | Long sequences, very large datasets, when you need maximum capacity | Smaller datasets, real-time inference, mobile/edge |
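The 4-versus-3 count is easy to confirm. Each gate (or candidate) owns an input weight matrix, a recurrent weight matrix and, in PyTorch, two bias vectors, so a single-layer `nn.LSTM` with input size $d$ and hidden size $h$ has $4(hd + h^2 + 2h)$ parameters and an `nn.GRU` has $3(hd + h^2 + 2h)$, exactly 25% fewer. A quick check with arbitrary sizes:

```python
from torch import nn


def n_params(module: nn.Module) -> int:
    return sum(p.numel() for p in module.parameters())


d, h = 8, 64  # input features, hidden size
lstm = nn.LSTM(input_size=d, hidden_size=h, batch_first=True)
gru = nn.GRU(input_size=d, hidden_size=h, batch_first=True)
print(n_params(lstm), n_params(gru))  # 18944 14208
```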

Empirically, on most forecasting tasks the gap between LSTM and GRU is within noise. Default to GRU for fast iteration; switch to LSTM if you have plenty of data and very long-range dependencies (sequences of several hundred steps). For sequences past ~500 steps, both are usually beaten by a Temporal Convolutional Network (Part 6) or an Informer-style sparse Transformer (Part 8).

Common Pitfalls

- Forgetting to standardize per feature. LSTMs are scale-sensitive; raw stock prices and percent returns mixed in one input will train badly.

- Shuffling time-series windows across the train/test boundary. Use `TimeSeriesSplit` or a fixed chronological cut.

- Reading the last hidden state as if it were the prediction. It is just a feature vector: you still need a linear head, and you should standardize the target too.

- Using a BiLSTM for forecasting. It will look great in the notebook because the model is reading the future, and then collapse in production.

- Tuning hidden size before the lookback. Lookback decides what information the cell can see; making the cell wider doesn’t help if the window is too short.

- Trusting a single random seed. RNN training is noisy. Report mean ± std over at least 3 seeds.
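For the chronological split from the second pitfall, scikit-learn's `TimeSeriesSplit` produces expanding-window folds in which every validation index comes after every training index. A short sketch on 100 window indices (the fold count is arbitrary):

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

window_ids = np.arange(100)  # one entry per window, in time order
splitter = TimeSeriesSplit(n_splits=4)
for fold, (train_idx, val_idx) in enumerate(splitter.split(window_ids)):
    # Expanding window: every training window precedes every validation window.
    print(f"fold {fold}: train 0..{train_idx.max()}, validate {val_idx.min()}..{val_idx.max()}")
```

With 100 windows and 4 splits, each validation block holds 20 windows and the training block grows from 20 to 80. For multi-step targets, the `gap` argument drops windows between the two blocks so that no training target reaches into the validation period.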

Diagnosing a Sick LSTM

When an LSTM forecaster underperforms, resist the urge to swap architectures. The same five symptoms account for the majority of failures, and each has a concrete check.

Symptom: training loss plateaus high, validation loss tracks it

The model is underfitting. Either the lookback is too short or the cell is too small. Run a quick capacity test: keep everything fixed and double hidden_size (64 → 128). If the training loss does not move at all, lookback is the bottleneck — extend the window before doing anything else.

Symptom: training loss falls but validation flattens early

Classic overfitting on small data. Add dropout (0.2 → 0.4), shrink the cell, or bring in weight decay (AdamW with weight_decay=1e-4). Do not collect more features — that almost always makes it worse on small data. The single most effective fix on series with under 5,000 windows is reducing hidden_size to 32.

Symptom: validation loss oscillates wildly between epochs

Two common culprits: the learning rate is too high (try 3e-4), or the batch contains sequences padded out to a much longer length, so the padded zeros dominate the loss. If your windows have variable length, pack them with `pack_padded_sequence` so the LSTM never steps through the padding; either sort each batch by length in a `collate_fn` or pass `enforce_sorted=False` and let PyTorch sort internally.
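A sketch of packing, assuming right-padded windows of shape `(batch, max_len, features)` and a tensor of true lengths. With `enforce_sorted=False` no manual sorting is needed, and the returned `h_n` holds each sequence's state at its last real step, in the original batch order, so the padding never reaches the head.

```python
import torch
from torch import nn
from torch.nn.utils.rnn import pack_padded_sequence

torch.manual_seed(0)
lstm = nn.LSTM(input_size=1, hidden_size=8, batch_first=True)

short = torch.randn(5, 1)        # a 5-step window
long = torch.randn(9, 1)         # a 9-step window
padded = torch.zeros(2, 9, 1)    # right-pad both to length 9
padded[0, :5] = short
padded[1] = long
lengths = torch.tensor([5, 9])   # true lengths (kept on the CPU)

packed = pack_padded_sequence(padded, lengths, batch_first=True, enforce_sorted=False)
with torch.no_grad():
    _, (h_n, _) = lstm(packed)                  # h_n: (num_layers, batch, hidden)
    _, (h_short, _) = lstm(short.unsqueeze(0))  # the short window alone, no padding
print(torch.allclose(h_n[-1, 0], h_short[-1, 0], atol=1e-5))  # padding had no effect
```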

Symptom: forecasts always lag the truth by exactly one step

The model has degenerated into a persistence forecaster — it learned that the best one-step prediction is the last value. This is a sign that the input features carry no predictive signal beyond the trivial autoregression. Add lagged features explicitly (y[t-7], y[t-30]), engineer calendar variables (hour, day-of-week, holiday flags), and check that your target is actually predictable by computing the persistence-baseline RMSE first.
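A NumPy sketch of both checks: the persistence baseline (predict $y_{t-1}$) and a seasonal-naive variant (predict $y_{t-s}$) give an RMSE floor the LSTM has to beat, and lagged columns such as `y[t-7]` can be added as explicit features. The toy series, the weekly season $s = 7$ and the helper names are assumptions for illustration.

```python
import numpy as np


def rmse(a: np.ndarray, b: np.ndarray) -> float:
    return float(np.sqrt(np.mean((a - b) ** 2)))


def naive_rmse(y: np.ndarray, lag: int, start: int) -> float:
    """RMSE of predicting y[t] with y[t - lag], scored for t >= start."""
    return rmse(y[start:], y[start - lag:len(y) - lag])


def add_lag_features(y: np.ndarray, lags=(1, 7, 30)) -> np.ndarray:
    """Stack y[t - lag] columns; rows before the largest lag are dropped."""
    max_lag = max(lags)
    return np.column_stack([y[max_lag - lag:len(y) - lag] for lag in lags])


rng = np.random.default_rng(0)
t = np.arange(730)
y = 100 + 10 * np.sin(2 * np.pi * t / 7) + rng.normal(0, 2, t.size)  # daily data, weekly cycle

start = 30  # score every baseline on the same time steps
print("persistence RMSE:   ", naive_rmse(y, lag=1, start=start))
print("seasonal-naive RMSE:", naive_rmse(y, lag=7, start=start))
features = add_lag_features(y)  # row i holds y[29 + i], y[23 + i], y[i]
print(features.shape)           # (700, 3)
```

On this toy series the seasonal-naive forecast should beat persistence by a wide margin, so an LSTM that merely matches persistence has not learned the weekly structure.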

Symptom: model performs well in backtests but collapses live

Almost always leakage. Common sources: scaling parameters fit on the full series rather than the training window, target encoding using future statistics, or features that are computed online but only become available with a lag in production (e.g. yesterday’s settled price is not known until 8 PM). Re-run your backtest with a strict point-in-time feature store and the gap will surface.

A useful diagnostic loop is to log the gate activations on a held-out batch.

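`nn.LSTM` does not expose its gate values, so one way to log them is to replay the first layer step by step from the module's own weights. A minimal sketch, assuming a unidirectional, `batch_first` LSTM with biases and PyTorch's gate order (input, forget, candidate, output); `replay_first_layer` is a hypothetical helper. The sanity check compares the replay against the module's output, which equals layer 0's output only for a single-layer LSTM; on a trained model you would pass its LSTM submodule instead of the fresh one used here.

```python
import torch
from torch import nn


@torch.no_grad()
def replay_first_layer(lstm: nn.LSTM, x: torch.Tensor):
    """Recompute layer 0 of a batch_first, unidirectional nn.LSTM step by step.

    Returns forget-gate activations and hidden states, each (batch, seq_len, hidden).
    """
    hs = lstm.hidden_size
    h = x.new_zeros(x.size(0), hs)  # nn.LSTM's default initial state is zeros
    c = x.new_zeros(x.size(0), hs)
    forget_acts, hidden_states = [], []
    for t in range(x.size(1)):
        gates = (x[:, t] @ lstm.weight_ih_l0.T + lstm.bias_ih_l0
                 + h @ lstm.weight_hh_l0.T + lstm.bias_hh_l0)
        i, f, g, o = gates.chunk(4, dim=-1)  # gate order: input, forget, cell, output
        i, f, g, o = i.sigmoid(), f.sigmoid(), g.tanh(), o.sigmoid()
        c = f * c + i * g
        h = o * c.tanh()
        forget_acts.append(f)
        hidden_states.append(h)
    return torch.stack(forget_acts, dim=1), torch.stack(hidden_states, dim=1)


torch.manual_seed(0)
lstm = nn.LSTM(input_size=2, hidden_size=16, batch_first=True)
x_val = torch.randn(32, 40, 2)  # stand-in for a held-out batch
forget, replayed = replay_first_layer(lstm, x_val)

with torch.no_grad():
    # Sanity check: if this fails, the gate slicing is wrong.
    assert torch.allclose(replayed, lstm(x_val)[0], atol=1e-5)

hs = lstm.hidden_size
forget_bias = (lstm.bias_ih_l0 + lstm.bias_hh_l0)[hs:2 * hs].detach()
print(f"mean effective forget-gate bias: {forget_bias.mean().item():+.3f}")
print(f"mean forget-gate activation:     {forget.mean().item():.3f}")
print(f"share of activations below 0.1:  {(forget < 0.1).float().mean().item():.3f}")
```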

If the forget-gate bias is below zero after training, the network has learned to flush most of its memory at every step, usually a sign that your sequences are too short to reward retention. Shorten the lookback further or switch to a stateless feed-forward model; the recurrence is not earning its cost.

Production Deployment Notes

LSTM models are deceptively easy to prototype and surprisingly fiddly to deploy. A few things that bit me on past projects.

State management between calls

In production, you usually receive one new observation at a time, not a window. You have two choices:

- Stateless rolling window: re-encode the last $L$ observations on every call. Simpler, latency proportional to $L$, and easy to reason about.

- Stateful streaming: cache `(h_t, C_t)` between calls and only feed the new observation. Latency is $O(1)$, but you must guarantee that the cached state is consistent with what the model was trained on (which means training in a streaming-compatible way too, with truncated backpropagation through time, TBPTT).
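A sketch of the stateful path, assuming a single `batch_first` `nn.LSTM`: the cached `(h, c)` tuple is passed back in on each call, and feeding a series one observation at a time this way reproduces the hidden state of a single pass over the whole history. `StreamingLSTM` is a hypothetical wrapper; a real service would keep one cache per series and persist it.

```python
import torch
from torch import nn


class StreamingLSTM:
    """Hypothetical wrapper that caches (h, c) between calls for a single series."""

    def __init__(self, lstm: nn.LSTM):
        self.lstm = lstm
        self.state = None  # None tells nn.LSTM to start from zeros

    @torch.no_grad()
    def update(self, x_t: torch.Tensor) -> torch.Tensor:
        """Consume one observation shaped (1, 1, features); O(1) work per call."""
        out, self.state = self.lstm(x_t, self.state)
        return out[:, -1]  # newest hidden state, ready for a forecasting head


torch.manual_seed(0)
lstm = nn.LSTM(input_size=1, hidden_size=16, batch_first=True)
history = torch.randn(1, 100, 1)  # 100 observations of one series

streamer = StreamingLSTM(lstm)
for t in range(history.size(1)):
    latest = streamer.update(history[:, t:t + 1])

with torch.no_grad():
    full_pass, _ = lstm(history)  # one pass over the whole history from a zero state
print(torch.allclose(latest, full_pass[:, -1], atol=1e-5))
```

The streamed state matches a pass over everything since the stream began. Re-encoding only the last $L$ observations from a zero state (the stateless option) gives a different hidden state, which is why the model has to be trained in the same mode it is served in.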

For most forecasting workloads with $L < 200$, stateless is the right default. Streaming is worth the complexity only when you are predicting at high frequency (sub-second) on long contexts.

Quantisation and ONNX export

LSTM exports to ONNX cleanly, but the cell-state numerics are sensitive to int8 quantisation. If you must quantise, use dynamic quantisation for the linear projections only and keep the gates in fp16 — fully quantised LSTMs lose 5–15 % accuracy on most forecasting tasks I have measured. For a TCN or Transformer baseline the gap is much smaller, which is part of why those architectures have displaced LSTMs in latency-critical pipelines.

Versioning the scaler

The single most common production bug I have seen with LSTMs is a model and a scaler drifting out of sync. The standardisation parameters (per-feature mean and std) are part of your model. Store them as a sibling artifact with the same hash and load them together. If you serve via TorchScript, fold the scaling into the graph itself.

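One way to fold the scaling in, as a sketch: wrap the trained network in a module that stores the training-set statistics as registered buffers, so they are saved, loaded and scripted together with the weights. `TinyForecaster` (a stand-in for your trained model), `ScaledForecaster` and the example statistics are all hypothetical.

```python
import io

import torch
from torch import nn


class TinyForecaster(nn.Module):
    """Stand-in for the trained model: LSTM + linear head on the last step."""

    def __init__(self, n_features: int, hidden_size: int = 16):
        super().__init__()
        self.lstm = nn.LSTM(n_features, hidden_size, batch_first=True)
        self.head = nn.Linear(hidden_size, 1)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        out, _ = self.lstm(x)
        return self.head(out[:, -1, :])


class ScaledForecaster(nn.Module):
    """Takes raw inputs and returns forecasts in original units."""

    def __init__(self, model: nn.Module, x_mean, x_std, y_mean: float, y_std: float):
        super().__init__()
        self.model = model
        # Buffers travel with state_dict() and the scripted graph, unlike plain attributes.
        self.register_buffer("x_mean", torch.as_tensor(x_mean, dtype=torch.float32))
        self.register_buffer("x_std", torch.as_tensor(x_std, dtype=torch.float32))
        self.register_buffer("y_mean", torch.tensor(y_mean, dtype=torch.float32))
        self.register_buffer("y_std", torch.tensor(y_std, dtype=torch.float32))

    def forward(self, x_raw: torch.Tensor) -> torch.Tensor:
        z = (x_raw - self.x_mean) / self.x_std           # standardize with training stats
        return self.model(z) * self.y_std + self.y_mean  # undo the target scaling


torch.manual_seed(0)
inner = TinyForecaster(n_features=2).eval()
wrapped = ScaledForecaster(inner, x_mean=[100.0, 0.5], x_std=[15.0, 0.2], y_mean=100.0, y_std=15.0)
scripted = torch.jit.script(wrapped)

buffer = io.BytesIO()
torch.jit.save(scripted, buffer)  # one artifact: weights and scaler together
buffer.seek(0)
restored = torch.jit.load(buffer)

x_raw = torch.tensor([100.0, 0.5]) + torch.tensor([15.0, 0.2]) * torch.randn(4, 30, 2)
print(torch.allclose(restored(x_raw), wrapped(x_raw), atol=1e-5))
```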

This single change has saved me three production incidents.

Monitoring drift

LSTM forecasters do not signal their own degradation. Track three things in your dashboard: the rolling RMSE on a hold-out tail, the distribution shift of input features (KL divergence between today’s feature histogram and the training histogram), and the per-step residual ACF. When the residuals start showing autocorrelation at lag 1, the model has stopped capturing structure — time to retrain.
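A NumPy sketch of the last two checks, with its assumptions stated: both histograms share bin edges computed from the training feature, live values outside the training range are clipped into the end bins, and a small epsilon keeps empty bins from producing an infinite KL. The function names are illustrative, and alert thresholds are left to you.

```python
import numpy as np


def histogram_kl(train_values, live_values, bins: int = 20, eps: float = 1e-6) -> float:
    """KL(live || train) between histograms on the training feature's bin edges."""
    edges = np.histogram_bin_edges(train_values, bins=bins)
    live_clipped = np.clip(live_values, edges[0], edges[-1])  # out-of-range mass -> end bins
    p = np.histogram(live_clipped, bins=edges)[0].astype(float) + eps
    q = np.histogram(train_values, bins=edges)[0].astype(float) + eps
    p, q = p / p.sum(), q / q.sum()
    return float(np.sum(p * np.log(p / q)))


def lag1_autocorrelation(residuals) -> float:
    """Sample autocorrelation of the residuals at lag 1."""
    r = np.asarray(residuals, dtype=float)
    r = r - r.mean()
    return float(np.dot(r[:-1], r[1:]) / np.dot(r, r))


rng = np.random.default_rng(0)
train_feature = rng.normal(0, 1, 5000)
kl_same = histogram_kl(train_feature, rng.normal(0, 1, 1000))       # same distribution
kl_shifted = histogram_kl(train_feature, rng.normal(1.5, 1, 1000))  # shifted mean

white = rng.normal(size=2000)  # healthy residuals: no structure left
ar_residuals = np.zeros(2000)  # unhealthy residuals: leftover AR(1) structure
for i in range(1, 2000):
    ar_residuals[i] = 0.6 * ar_residuals[i - 1] + white[i]

print(f"KL same: {kl_same:.3f}, KL shifted: {kl_shifted:.3f}")
print(f"lag-1 ACF white: {lag1_autocorrelation(white):+.3f}, "
      f"AR(1): {lag1_autocorrelation(ar_residuals):+.3f}")
```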

Summary

LSTM solves the vanishing gradient problem by routing memory through an additive cell-state highway that the network controls with three multiplicative gates. The forget gate decides what to erase, the input gate decides what to write, and the output gate decides what to expose — and because the long-range gradient is a product of forget gates rather than a product of recurrent Jacobians, the model can learn dependencies hundreds of steps long.

For time series, that translates into a small set of practical recipes: window the series with a sensible lookback, stack 1–3 layers of moderate width, regularize with dropout and early stopping, choose direct multi-step over recursive when horizons are long, and keep BiLSTM for offline tasks only. The next part covers GRU — the slimmer cousin that achieves nearly the same with fewer parameters.

What’s next

The three gates in LSTM made stable training over hundreds of steps possible, and that was the moment deep learning actually started working on time series. After using it for a while, though, two things start to grate: the parameter count is on the high side (so it overfits and trains slowly), and the “full three-gate” design is rarely fully exploited in practice.

The next chapter on GRU addresses both. GRU collapses three gates into two, folds the cell state back into the hidden state, cuts parameters by ~25%, and matches LSTM accuracy on most time-series problems. I’ll walk through the head-to-head benchmarks — parameter count, training time, forecast quality — and give a decision matrix for “when to reach for GRU vs LSTM” so you don’t have to guess on each new project.

If you want to keep working with LSTM, run the PyTorch implementation from this chapter end-to-end, and then try two experiments: bump sequence length from 30 to 200 and see how training time scales, then halve hidden_size and see how much accuracy you lose. Those two sweeps will give you a concrete intuition for how RNN-style models scale, and that intuition pays off under every deeper time-series architecture you’ll build later.

References

- Hochreiter & Schmidhuber, Long Short-Term Memory, Neural Computation (1997)

- Gers, Schmidhuber & Cummins, Learning to Forget: Continual Prediction with LSTM, Neural Computation (2000): introduced the forget gate and advocated initializing its bias to a positive value

- Olah, Understanding LSTM Networks, colah.github.io (2015) — the canonical illustrated explanation

- Greff et al., LSTM: A Search Space Odyssey, IEEE TNNLS (2017) — empirical study of LSTM variants

Time Series Forecasting series (8 parts)

- 01 Time Series Forecasting (1): Traditional Statistical Models

- 02 Time Series Forecasting (2): LSTM — Gate Mechanisms and Long-Term Dependencies

- 03 Time Series Forecasting (3): GRU — Lightweight Gates and Efficiency Trade-offs

- 04 Time Series Forecasting (4): Attention Mechanisms — Direct Long-Range Dependencies

- 05 Time Series Forecasting (5): Transformer Architecture for Time Series

- 06 Time Series Forecasting (6): Temporal Convolutional Networks (TCN)

- 07 Time Series Forecasting (7): N-BEATS — Interpretable Deep Architecture

- 08 Time Series Forecasting (8): Informer — Efficient Long-Sequence Forecasting