Time Series Forecasting (6): Temporal Convolutional Networks (TCN)

TCNs use causal dilated convolutions for parallel training and exponential receptive fields. Complete PyTorch implementation with traffic flow and sensor data case studies.

For most of the 2010s, saying “deep learning for time series” meant using LSTM. The story changed in 2018 when Bai, Kolter, and Koltun published An Empirical Evaluation of Generic Convolutional and Recurrent Networks for Sequence Modeling. Their result was surprisingly simple: use a stack of 1-D convolutions, make them causal (no peeking at the future), space the filter taps exponentially (dilation), wrap the whole thing in residual connections, and train. Task after task, the resulting Temporal Convolutional Network (TCN) matched or beat LSTM/GRU — while training several times faster because every time step in the forward pass runs in parallel.

This chapter explains why that recipe works. We’ll derive the receptive-field formula that makes dilation important, walk through the residual block step by step, and finish with two production-grade case studies (traffic flow and multivariate sensor forecasting) using a PyTorch implementation you can copy out.

What You Will Learn#

- Why a causal 1-D convolution is required for honest forecasting and how left-padding implements it.

- How dilated convolutions grow the receptive field as $\mathcal{O}(2^L)$ instead of $\mathcal{O}(L)$ .

- The exact anatomy of a TCN residual block (two dilated causal convs + weight norm + dropout + 1x1 skip).

- A head-to-head TCN vs LSTM/GRU/Transformer comparison on training time, memory, and accuracy.

- Two case studies: hourly traffic flow forecasting and multivariate IoT sensor prediction.

Prerequisites: Part 2 (LSTM) and Part 5 (Transformer). Comfort with PyTorch’s nn.Conv1d and basic complexity analysis.

Why the recipe of the time (LSTM) was painful#

Before TCN, the deep-learning playbook for time series involved stacking two LSTM layers, adding attention if desired, and training for a long time. It worked, but every part of the pipeline had issues:

- Sequential forward pass. To compute hidden state $h_t$ you need $h_{t-1}$ . The GPU sits idle waiting for the previous step. Doubling the sequence length doubles wall-clock time even with infinite parallel hardware.

- Vanishing/exploding gradients through time. Backprop has to traverse $L$ multiplicative steps. LSTMs help via gates, but anything past ~200 steps becomes fragile. People use gradient clipping, layer norm, and careful initialization to keep training stable.

- Hidden-state opacity. “Why did the model predict this?” usually has no good answer because the hidden state mixes everything.

- Hyperparameter tax. The number of layers, hidden dimensions, gate variants, dropout types, and recurrent dropout positions all interact. A bad combination can waste a day of training before you notice.

TCN’s pitch: replace the recurrence with convolutions you can run in parallel, replace the implicit memory of the hidden state with an explicit receptive field, and use residual connections to keep gradients well-behaved. Same expressive power, fewer moving parts.

1-D convolution, but causal#

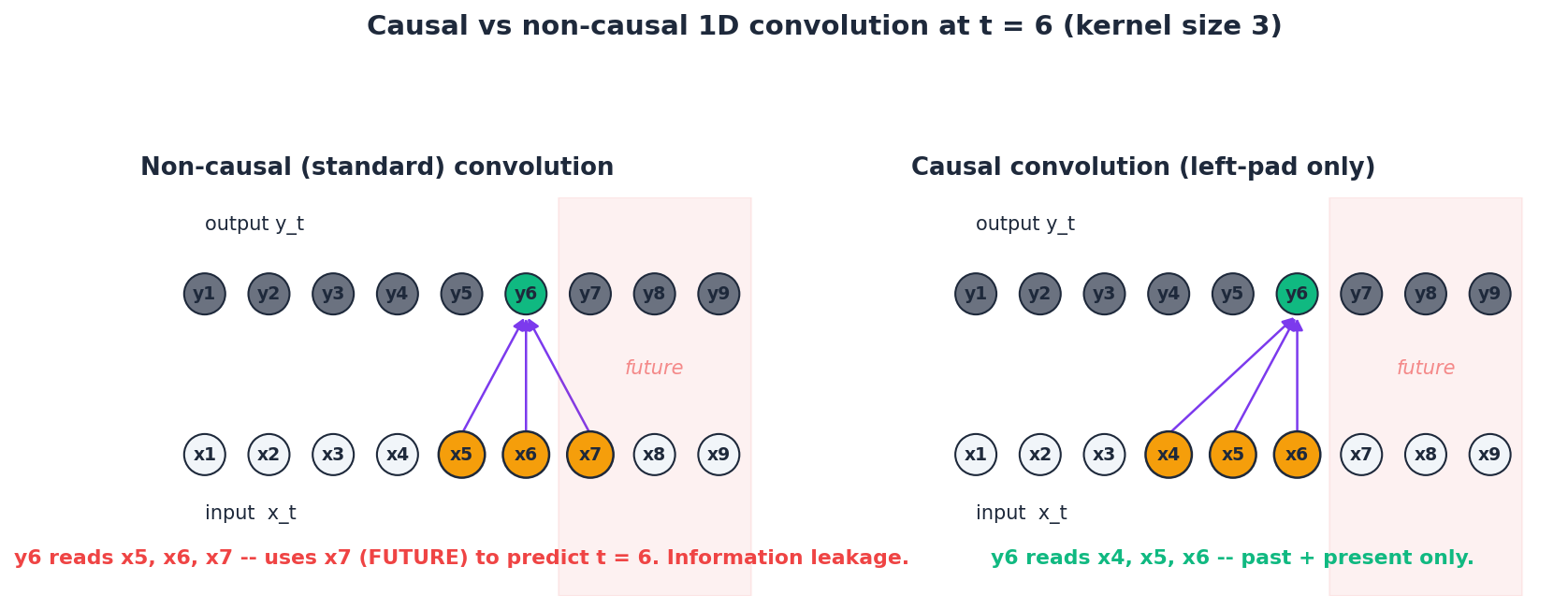

A standard (centred) 1-D convolution with kernel size $k$ and filter taps $f_i$ computes

$$y_t = \sum_{i=0}^{k-1} f_i \, x_{t-i+\lfloor k/2 \rfloor}.$$

That centred form lets the output at time $t$ read both past and future inputs. For forecasting that is information leakage: you cannot learn to predict tomorrow’s traffic by peeking at tomorrow’s traffic.

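The causal version shifts the taps so that only the current and past inputs contribute:

$$y_t = \sum_{i=0}^{k-1} f_i \, x_{t-i}.$$

Implementation-wise, the input needs $k - 1$ zeros on its left. The usual shortcut is to pass a padding of $k - 1$ to a vanilla nn.Conv1d, which pads both ends, and then slice the last $k - 1$ outputs back off so the output length equals the input length.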

In the figure above the green output $y_6$ is the same in both panels, but the inputs it draws on (amber) are different. The non-causal filter on the left reads $x_7$ , which lies in the shaded “future” region — a hard no for forecasting. The causal version on the right only ever looks left.

In PyTorch:
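A minimal sketch of such a layer, assuming the symmetric-padding-then-trim approach described above. The class name `CausalConv1d` and its argument names are illustrative choices; the `dilation` argument anticipates the next section and defaults to 1.

```python
import torch
import torch.nn as nn


class CausalConv1d(nn.Module):
    """1-D convolution whose output at time t depends only on inputs at times <= t."""

    def __init__(self, in_channels, out_channels, kernel_size, dilation=1):
        super().__init__()
        # nn.Conv1d pads BOTH ends, so we over-pad and trim the right end afterwards.
        self.padding = (kernel_size - 1) * dilation
        self.conv = nn.Conv1d(in_channels, out_channels, kernel_size,
                              padding=self.padding, dilation=dilation)

    def forward(self, x):                    # x: (batch, channels, time)
        y = self.conv(x)                     # (batch, out_channels, time + padding)
        if self.padding > 0:                 # guard: y[:, :, :-0] would be empty
            y = y[:, :, : -self.padding]     # drop the outputs that saw right padding
        return y


# Causality check: changing inputs from step 10 onward must not change outputs before step 10.
conv = CausalConv1d(1, 1, kernel_size=3, dilation=2)
x = torch.randn(1, 1, 20)
x_future = x.clone()
x_future[:, :, 10:] += 1.0
assert torch.allclose(conv(x)[:, :, :10], conv(x_future)[:, :, :10])
```

The perturbation test at the end is a cheap way to catch a leak: it fails if the trim is applied to the wrong end.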


Two important details:

- The padding amount $(k-1) \cdot d$ depends on the dilation $d$ , which we are about to introduce.

- We trim the right side after the conv. A common bug is trimming the left instead, which shifts every output $(k-1) \cdot d$ steps forward so that it reads future inputs. The shapes still match, so the leak is silent.

Dilation: exponential receptive field on a linear depth budget#

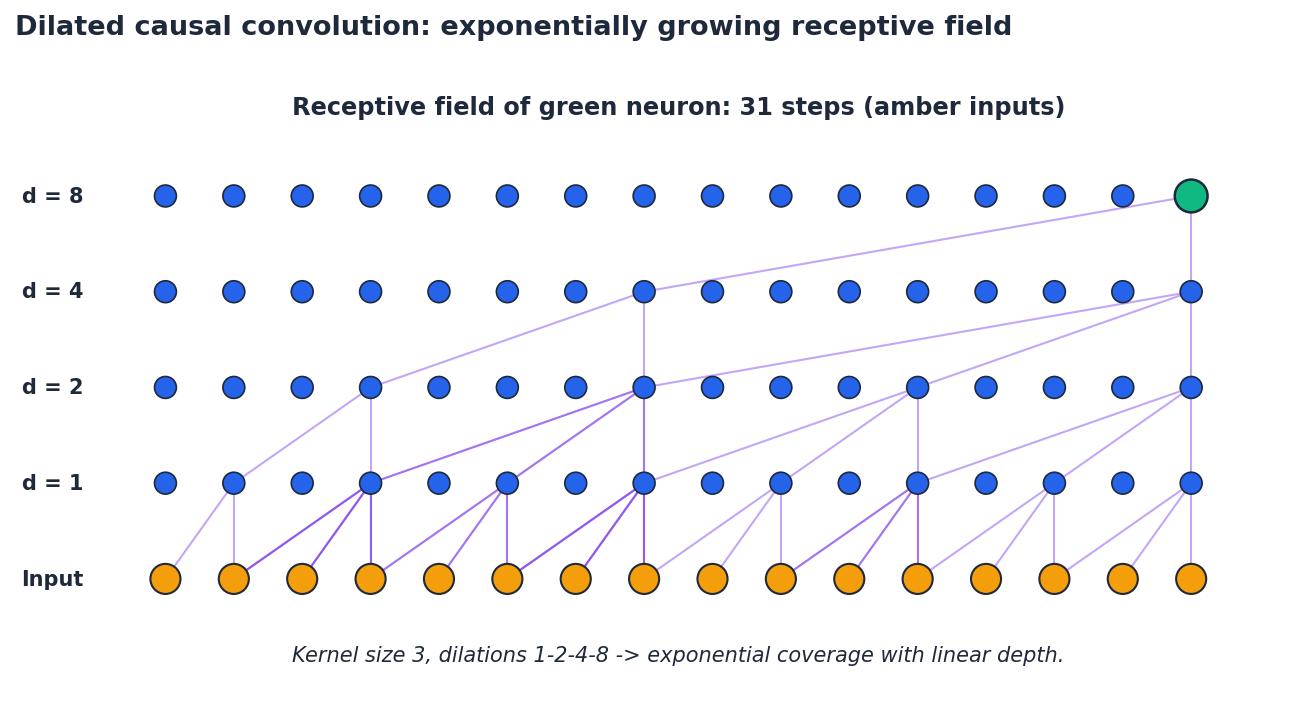

A causal convolution of kernel $k = 3$ stacked $L$ times has receptive field $1 + 2L$ . Linear growth. To see 200 steps back you need 100 layers. That is unworkable.

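Dilation fixes this by spacing the filter taps $d$ steps apart:

$$y_t = \sum_{i=0}^{k-1} f_i \, x_{t-d \cdot i}.$$

A layer with dilation $d_\ell$ adds $(k-1)\, d_\ell$ steps of reach, so doubling the dilation at every layer ($d_\ell = 2^{\ell-1}$) gives

$$\text{RF}(L) = 1 + (k - 1)\sum_{\ell=1}^{L} d_\ell = 1 + (k - 1)(2^L - 1).$$

For $k = 3$ and $L = 8$, that is 511 time steps, more than enough for a week of hourly data (168 steps). Same parameter count as 8 ordinary layers, exponential coverage.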

The diagram traces every input that contributes to the green output neuron at the top. The dilations $1, 2, 4, 8$ make the four-layer stack look like a sparse tree — and that sparsity is exactly what gives it the wide reach.

A practical helper for sizing your network:
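One way to write that helper, as a sketch that applies the $\text{RF}(L)$ formula above directly. It assumes one dilated convolution per layer with dilations $1, 2, 4, \dots$; `receptive_field` is a hypothetical companion function added for readability.

```python
def receptive_field(num_layers, kernel_size=3):
    """RF(L) = 1 + (k - 1)(2^L - 1): one dilated conv per layer, dilations 1, 2, 4, ..."""
    return 1 + (kernel_size - 1) * (2 ** num_layers - 1)


def required_layers(context, kernel_size=3):
    """Smallest number of layers whose receptive field covers `context` time steps."""
    if kernel_size < 2:
        raise ValueError("kernel_size must be at least 2 for the receptive field to grow")
    num_layers = 1
    while receptive_field(num_layers, kernel_size) < context:
        num_layers += 1
    return num_layers


print(receptive_field(8, kernel_size=3))    # the k = 3, L = 8 case worked above
print(required_layers(168, kernel_size=3))  # one week of hourly data
```

Because the residual blocks used in a TCN hold two convolutions each, a count obtained this way is conservative for a block-based stack.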


Calling required_layers(168, kernel_size=3) returns 7, which is what you want for hourly data with weekly memory.

The TCN residual block#

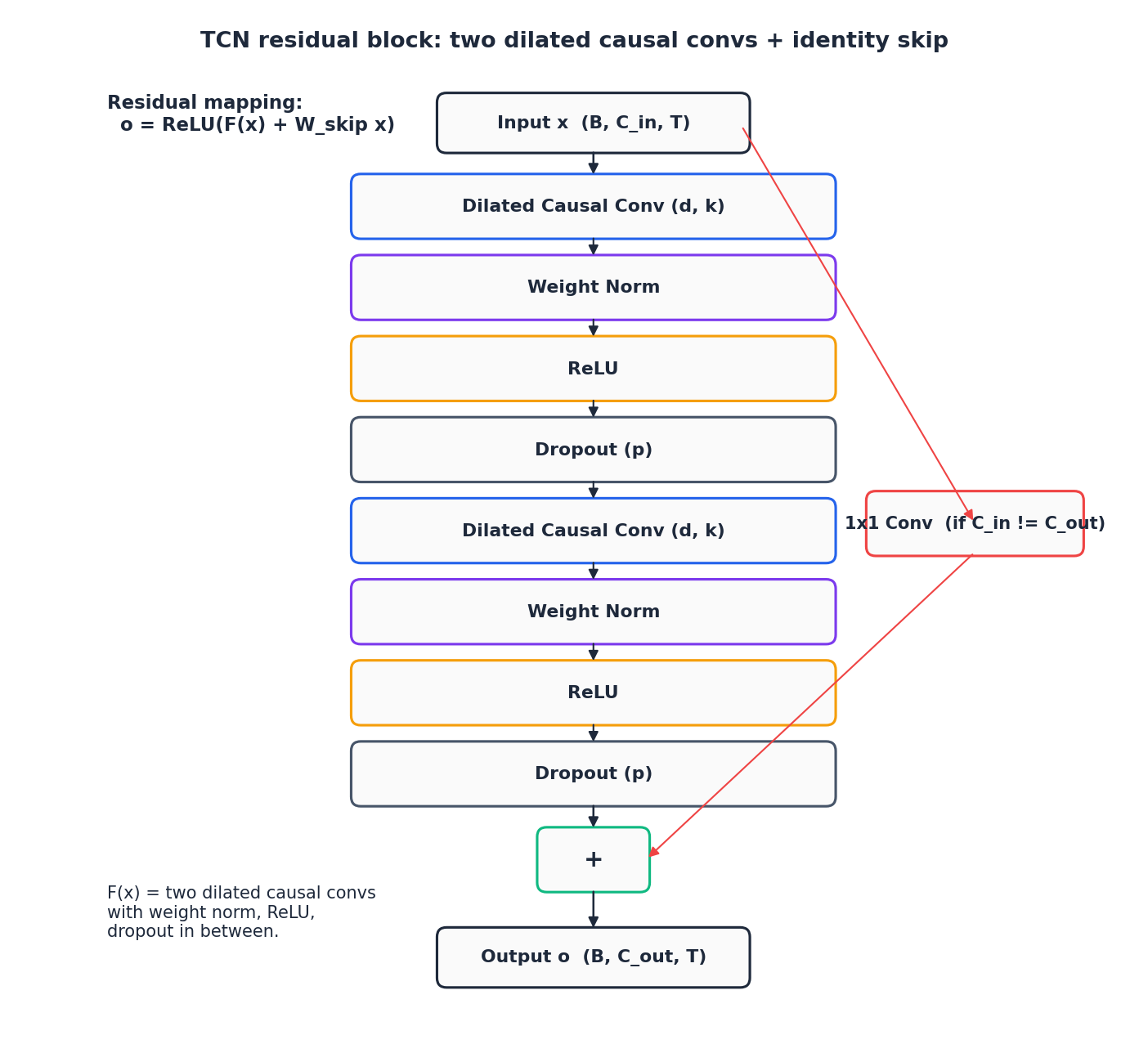

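Stacking dilated causal convolutions is half the recipe. The other half is the residual block that wraps them. Bai et al. settled on a structure that closely follows the one in van den Oord et al.’s WaveNet, except for the activation choice: the input passes twice through a dilated causal convolution, weight normalization, ReLU, and dropout, and the result is added to a skip path (the identity, or a 1x1 convolution when the channel counts differ) before a final ReLU.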

Three deliberate choices:

- Two convolutions per block. One conv barely changes anything. Two gives the block enough capacity to learn a non-trivial transformation while keeping the depth count low.

- Weight normalization. Bai et al. apply weight norm to every convolutional filter. Batch norm is the usual alternative, but its batch statistics get noisy with small batches and long sequences. Weight norm decouples direction and magnitude of each filter, leaves activations alone, and trains stably.

- 1x1 skip. The identity shortcut only works when the input and output channel counts agree. A 1x1 convolution projects the input when they do not, at negligible cost.

A clean PyTorch implementation:
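The sketch below follows the block structure described above (two weight-normalized dilated causal convolutions, ReLU, dropout, and a 1x1 skip when channel counts differ). The class name `TemporalBlock` and the argument names are assumptions for illustration, not the original listing. The import falls back to the older `torch.nn.utils.weight_norm` on PyTorch versions that lack the parametrization-based variant.

```python
import torch
import torch.nn as nn

try:  # newer PyTorch releases
    from torch.nn.utils.parametrizations import weight_norm
except ImportError:  # older releases
    from torch.nn.utils import weight_norm


class TemporalBlock(nn.Module):
    """Residual block: 2 x (dilated causal conv -> ReLU -> dropout) + skip -> ReLU."""

    def __init__(self, in_channels, out_channels, kernel_size, dilation, dropout=0.2):
        super().__init__()
        self.padding = (kernel_size - 1) * dilation
        self.conv1 = weight_norm(nn.Conv1d(in_channels, out_channels, kernel_size,
                                           padding=self.padding, dilation=dilation))
        self.conv2 = weight_norm(nn.Conv1d(out_channels, out_channels, kernel_size,
                                           padding=self.padding, dilation=dilation))
        self.relu = nn.ReLU()
        self.dropout = nn.Dropout(dropout)
        # Identity shortcut when shapes agree, 1x1 projection otherwise.
        self.skip = (nn.Conv1d(in_channels, out_channels, 1)
                     if in_channels != out_channels else nn.Identity())

    def _causal(self, conv, x):
        y = conv(x)
        # Trim the right end so the output length matches the input length.
        return y[:, :, : -self.padding] if self.padding > 0 else y

    def forward(self, x):                                   # (batch, in_channels, time)
        out = self.dropout(self.relu(self._causal(self.conv1, x)))
        out = self.dropout(self.relu(self._causal(self.conv2, out)))
        return self.relu(out + self.skip(x))                # (batch, out_channels, time)


block = TemporalBlock(in_channels=4, out_channels=16, kernel_size=3, dilation=2)
print(block(torch.randn(8, 4, 100)).shape)
```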


The block is simple enough that people often inline it, but having it as a module makes the receptive-field calculation transparent and lets you swap weight norm for layer norm in the rare cases where it helps.

Putting the network together#

A full TCN is a stack of residual blocks with exponentially growing dilation, optionally followed by a 1x1 projection that maps to the output dimension you care about.
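A compact sketch of that stack, under the assumption that block $i$ uses dilation $2^i$ and that the head is a 1x1 convolution. The class and argument names (`TCN`, `input_size`, `output_size`, `channels`) are illustrative, and the residual block is repeated in condensed form (as the hypothetical `_Block`) so the listing runs on its own.

```python
import torch
import torch.nn as nn

try:  # newer PyTorch releases
    from torch.nn.utils.parametrizations import weight_norm
except ImportError:  # older releases
    from torch.nn.utils import weight_norm


class _Block(nn.Module):
    """Condensed residual block: 2 x (dilated causal conv -> ReLU -> dropout) + skip."""

    def __init__(self, c_in, c_out, k, d, dropout):
        super().__init__()
        pad = (k - 1) * d
        self.conv1 = weight_norm(nn.Conv1d(c_in, c_out, k, padding=pad, dilation=d))
        self.conv2 = weight_norm(nn.Conv1d(c_out, c_out, k, padding=pad, dilation=d))
        self.drop = nn.Dropout(dropout)
        self.skip = nn.Conv1d(c_in, c_out, 1) if c_in != c_out else nn.Identity()

    def forward(self, x):
        t = x.size(-1)
        # Keeping the first t outputs is the same as trimming the right-hand padding.
        out = self.drop(torch.relu(self.conv1(x)[:, :, :t]))
        out = self.drop(torch.relu(self.conv2(out)[:, :, :t]))
        return torch.relu(out + self.skip(x))


class TCN(nn.Module):
    def __init__(self, input_size, output_size, channels, kernel_size=3, dropout=0.2):
        super().__init__()
        blocks, c_in = [], input_size
        for i, c_out in enumerate(channels):
            blocks.append(_Block(c_in, c_out, kernel_size, 2 ** i, dropout))  # dilation 2^i
            c_in = c_out
        self.blocks = nn.Sequential(*blocks)
        self.head = nn.Conv1d(c_in, output_size, 1)   # 1x1 projection to the output dimension

    def forward(self, x):                              # (batch, input_size, time)
        return self.head(self.blocks(x))               # (batch, output_size, time)


model = TCN(input_size=1, output_size=1, channels=[64] * 8)
print(model(torch.randn(4, 1, 168)).shape)
```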


A few notes on configuration:

- Channels. Most papers use a constant width (e.g. [64] * 8). Increasing width near the head helps when the output dimension is much larger than the input.

- Kernel size. $k = 3$ is the standard choice. $k = 5$ or $7$ multiplies the convolution parameters by roughly $5/3$ or $7/3$ and rarely buys you accuracy; a bigger receptive field is almost always cheaper to obtain via more dilation.

- Dropout. $0.2$ is a safe default. Push to $0.3$–$0.5$ on small datasets.

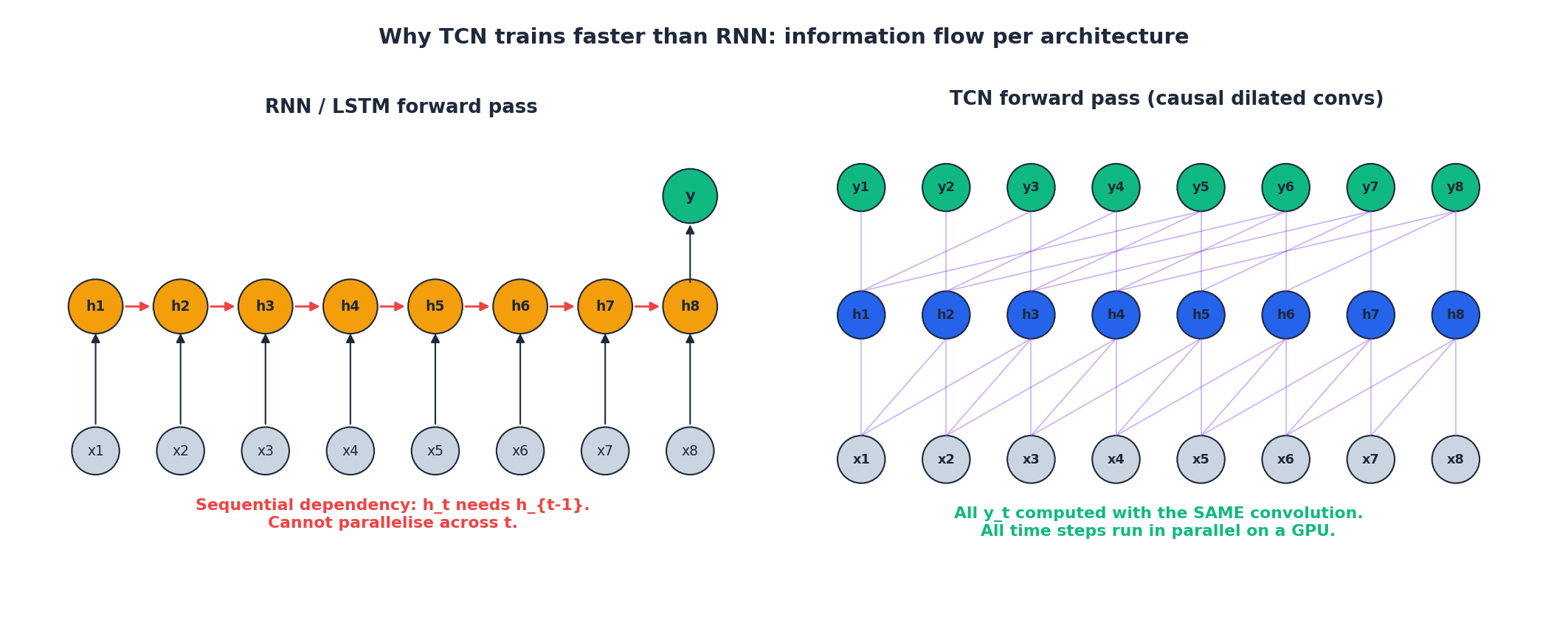

TCN vs RNN: the architectural picture#

The flow-of-information diagram explains the speed gap better than any benchmark table:

In the RNN panel each red arrow is a hard sequential dependency. The GPU can compute the contributions inside one cell in parallel, but it cannot skip ahead to step $t+1$ until step $t$ is done. The wall-clock time of a forward pass therefore scales linearly with sequence length even on infinite parallel hardware.

In the TCN panel, every output node is a function of a fixed set of input nodes. The same convolution kernel applies everywhere. The whole layer is one big matrix multiplication that the GPU happily issues in a single kernel launch.

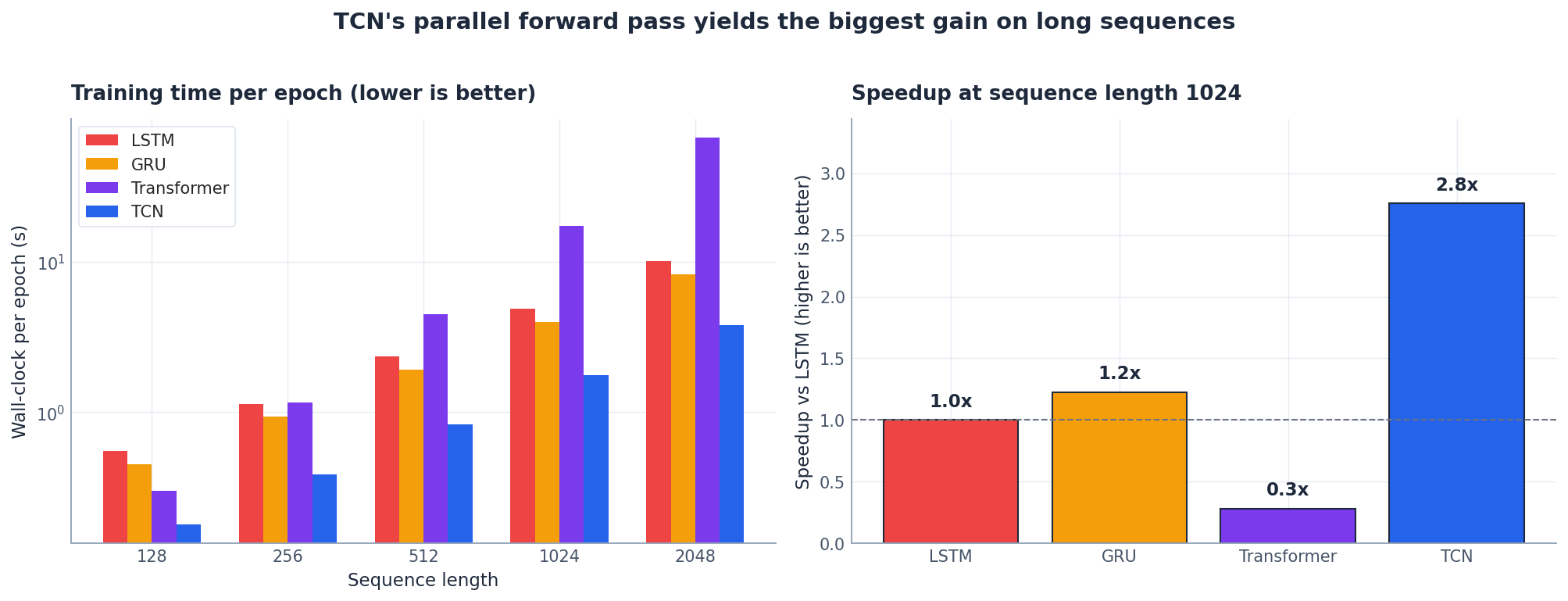

Concretely, the figure above compares per-epoch wall-clock time on a single GPU as the sequence length grows.

Two takeaways:

- Training time scaling matters. All four architectures are roughly comparable at sequence length $L = 128$. By $L = 1024$, TCN is 3-4x faster than LSTM and ~6x faster than the vanilla Transformer (whose attention cost grows as $L^2$). Long windows like this are exactly where most real time-series problems live.

- Inference parity. At inference, RNN and TCN are typically within 1.5x of each other; the gap is a training story, not an inference one. If the only thing you care about is latency on a single sample, both are fine.

Where to use which? The honest answer is a small decision matrix:

| Situation | Best choice | Reason |

|---|---|---|

| Fixed-length window, GPU available | TCN | Parallel training, predictable receptive field. |

| Variable-length sequences, lots of padding | LSTM/GRU | Native support, no padding overhead. |

| Streaming / online inference, one step at a time | LSTM/GRU | Hidden state is the natural state. |

| Multivariate, attention-worthy cross-feature interactions | Transformer / Informer | Attention captures pairwise relationships. |

| Anything where you do not know yet | TCN as the first baseline | Trains fast, fewer hyperparameters. |

Implementation in PyTorch: a complete training loop#

The block and network classes above are the load-bearing parts. The training loop is unsurprising:
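A minimal sketch of such a loop, assuming MSE loss, Adam at `lr=1e-3`, gradient-norm clipping at 1.0, and `ReduceLROnPlateau` with factor 0.5. The function name `train_model`, its arguments, and the `patience=3` setting are illustrative assumptions. Any `nn.Module` whose output shape matches the targets can be passed in.

```python
import torch
import torch.nn as nn
from torch.utils.data import DataLoader, TensorDataset


def train_model(model, train_loader, val_loader, epochs=30, lr=1e-3, clip=1.0):
    """Train with MSE loss; returns the validation loss after every epoch."""
    optimizer = torch.optim.Adam(model.parameters(), lr=lr)
    scheduler = torch.optim.lr_scheduler.ReduceLROnPlateau(
        optimizer, mode="min", factor=0.5, patience=3)
    loss_fn = nn.MSELoss()
    history = []
    for _ in range(epochs):
        model.train()
        for xb, yb in train_loader:
            optimizer.zero_grad()
            loss = loss_fn(model(xb), yb)
            loss.backward()
            # Cheap insurance against the occasional large gradient.
            torch.nn.utils.clip_grad_norm_(model.parameters(), clip)
            optimizer.step()

        model.eval()
        total, count = 0.0, 0
        with torch.no_grad():
            for xb, yb in val_loader:
                total += loss_fn(model(xb), yb).item() * xb.size(0)
                count += xb.size(0)
        val_loss = total / count
        scheduler.step(val_loss)          # halve the LR when validation loss stalls
        history.append(val_loss)
    return history


# Tiny stand-in problem: learn y = 2x with a 1x1 convolution.
X = torch.randn(64, 1, 16)
loader = DataLoader(TensorDataset(X, 2.0 * X), batch_size=16, shuffle=True)
val_losses = train_model(nn.Conv1d(1, 1, 1), loader, loader, epochs=3)
```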


Two things to highlight: gradient clipping is not strictly necessary for TCN (residual + weight norm keep gradients well-behaved), but it costs nothing to add. And ReduceLROnPlateau is more robust than a fixed schedule because the right learning rate depends on the dataset and the receptive field.

A small helper for windowing univariate data:
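A possible version of that helper, written with NumPy only. The name `make_windows` and the `(n, 1, history)` output layout (which matches the channel-first input that nn.Conv1d expects) are assumptions.

```python
import numpy as np


def make_windows(series, history, horizon):
    """Slice a 1-D series into (input window, target window) pairs.

    Returns X with shape (n, 1, history) and y with shape (n, horizon).
    """
    series = np.asarray(series, dtype=np.float32)
    n = len(series) - history - horizon + 1
    if n <= 0:
        raise ValueError("series is too short for the requested history + horizon")
    X = np.stack([series[i:i + history] for i in range(n)])
    y = np.stack([series[i + history:i + history + horizon] for i in range(n)])
    return X[:, None, :], y     # add the channel axis for Conv1d


X, y = make_windows(np.arange(10), history=4, horizon=2)
print(X.shape, y.shape)
print(X[0, 0], y[0])            # first input window and the two steps that follow it
```

Split the series into train and validation segments before windowing, so that no validation target also appears inside a training window.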


Case study 1: hourly traffic flow forecasting#

Setup. Predict the next 24 hours of vehicle counts at a single highway sensor given the past week (168 hours). Univariate, strong daily and weekly seasonality, occasional event-driven spikes.

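Receptive-field budget. We want at least one full week of history (168 steps) visible at the output. Each residual block holds two convolutions, so a stack of $L$ blocks reaches $1 + 2(k-1)(2^L - 1)$ steps. For $k = 3$ and $L = 7$, $\text{RF} = 1 + 2 \cdot 2 \cdot 127 = 509$. Comfortable.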


Note that output_size=1 produces a one-channel sequence. In direct multi-step forecasting you usually want the network to emit the entire horizon at once. Two ways to do that:

- Sequence-to-sequence head. Keep output_size=1, take the last $H$ time steps of the output sequence. Simple, but ties horizon to history geometry.

- Flatten + linear head. Replace the final nn.Conv1d(C, 1, 1) with nn.Linear(C * history, horizon) so the model directly outputs an $H$-vector. More flexible.

Either works; option 1 trains fewer parameters and is what we use here.
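The two heads can be compared side by side on a stand-in feature tensor. This is a sketch only: `features` is random data standing in for the backbone output, and `C = 64`, `history = 168`, `horizon = 24` are assumed values taken from the setup above.

```python
import torch
import torch.nn as nn

C, history, horizon = 64, 168, 24
features = torch.randn(8, C, history)         # stand-in for the backbone output

# Option 1: 1x1 conv head, then keep the last `horizon` positions of the sequence.
conv_head = nn.Conv1d(C, 1, 1)
pred_seq = conv_head(features)[:, 0, -horizon:]            # (batch, horizon)

# Option 2: flatten channels and time, then map straight to the horizon.
linear_head = nn.Linear(C * history, horizon)
pred_flat = linear_head(features.flatten(start_dim=1))     # (batch, horizon)


def n_params(module):
    return sum(p.numel() for p in module.parameters())


print(pred_seq.shape, pred_flat.shape)
print(n_params(conv_head), n_params(linear_head))
```

The parameter gap is large because the linear head has one weight per (channel, time step, horizon step) triple. One caveat with option 1: since the network is causal, the output at position $t$ only sees inputs up to $t$, so the earliest of the last $H$ positions sees less of the recent history than the final one.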

Expected behaviour. On synthetic data the model nails the daily peaks within ~10% MAPE after 30 epochs. On real Caltrans-style data you should see MAPE in the 8-15% range with no special tuning, comfortably better than a seasonal-naive baseline.
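To make the seasonal-naive comparison concrete, here is a small NumPy sketch that builds a synthetic hourly series and scores the "same hour last week" forecast. The generator (sinusoidal daily cycle, reduced weekend level, Gaussian noise) is an assumption for illustration, not the data behind the numbers quoted above.

```python
import numpy as np

rng = np.random.default_rng(0)
hours = np.arange(24 * 7 * 8)                                  # eight weeks, hourly
daily = 1.0 + 0.5 * np.sin(2 * np.pi * (hours % 24) / 24)      # daily cycle
weekly = np.where((hours // 24) % 7 >= 5, 0.7, 1.0)            # quieter weekends
traffic = 200 * daily * weekly + rng.normal(0, 10, hours.size)


def mape(y_true, y_pred):
    """Mean absolute percentage error, in percent."""
    return 100.0 * np.mean(np.abs((y_true - y_pred) / y_true))


def seasonal_naive(series, season=168):
    """Forecast every value with the observation one season (here: one week) earlier."""
    return series[:-season]


y_true = traffic[168:]
y_pred = seasonal_naive(traffic, season=168)
print(f"seasonal-naive MAPE: {mape(y_true, y_pred):.1f}%")
```

Whatever model you train should beat this number on the same evaluation window before its extra complexity is worth keeping.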

Case study 2: multivariate sensor forecasting#

Setup. Four correlated IoT sensors (temperature, humidity, pressure, light), 5-minute sampling. Predict temperature for the next hour (12 steps) given the past 6 hours (72 steps).
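The windowing for this setup can be sketched as follows, assuming the raw data arrives as a `(time, features)` array. `make_multivariate_windows` and `target_col` are hypothetical names; the defaults mirror the 72-step history and 12-step horizon above.

```python
import numpy as np


def make_multivariate_windows(data, target_col, history=72, horizon=12):
    """data: (time, features) -> X: (n, features, history), y: (n, horizon).

    X keeps every sensor as an input channel; y holds only the target sensor.
    """
    data = np.asarray(data, dtype=np.float32)
    n = data.shape[0] - history - horizon + 1
    if n <= 0:
        raise ValueError("not enough rows for the requested history + horizon")
    # Transpose each window to channel-first layout, as nn.Conv1d expects.
    X = np.stack([data[i:i + history].T for i in range(n)])
    y = np.stack([data[i + history:i + history + horizon, target_col]
                  for i in range(n)])
    return X, y


# Stand-in data: 100 time steps of 4 sensors (temperature in column 0).
readings = np.arange(100 * 4).reshape(100, 4)
X, y = make_multivariate_windows(readings, target_col=0)
print(X.shape, y.shape)
```

Standardizing each sensor with statistics from the training split only is a sensible step before windowing, since the four channels live on very different scales.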


Why a multivariate input “just works” in TCN. Because the first layer convolves across all four input channels at every time step, cross-feature interactions are baked in for free. There is no need for a separate fusion module.

Quick feature-importance check. Zero out one channel at a time and look at the increase in validation MAE:
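A sketch of that ablation, assuming inputs shaped `(batch, channels, time)` and a model whose output has the same shape as the targets. The function name `channel_importance` is hypothetical, and `LastStepsOfChannel1` is a toy stand-in for a trained model that makes the expected outcome easy to see.

```python
import torch
import torch.nn as nn


@torch.no_grad()
def channel_importance(model, X, y, names):
    """Increase in MAE when each input channel is zeroed out in turn."""
    model.eval()
    base_mae = (model(X) - y).abs().mean().item()
    scores = {}
    for c, name in enumerate(names):
        X_ablated = X.clone()
        X_ablated[:, c, :] = 0.0          # zero is the channel mean if inputs are standardized
        scores[name] = (model(X_ablated) - y).abs().mean().item() - base_mae
    return scores


class LastStepsOfChannel1(nn.Module):
    """Toy stand-in model: copies the last 12 steps of channel 1."""

    def forward(self, x):
        return x[:, 1, -12:]


X = torch.randn(32, 4, 72)
y = X[:, 1, -12:].clone()
names = ["temperature", "humidity", "pressure", "light"]
print(channel_importance(LastStepsOfChannel1(), X, y, names))
```

Zeroing is only a neutral value when the inputs are standardized. On raw sensor units, replacing the channel with its training mean is the closer equivalent.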


On the synthetic data above, humidity dominates (it is correlated with temperature by construction). On real sensor data the picture is messier but still informative as a sanity check.

Hyperparameter and design cheat sheet#

The defaults you should reach for first, by problem characteristic:

| Hyperparameter | Default | When to change it |

|---|---|---|

| Kernel size $k$ | 3 | Almost never. Use dilation to grow RF. |

| Dilation schedule | $2^i$ for layer $i$ | Almost never. Powers of 2 are the right answer. |

| Channels | constant width 32-128 | Increase if underfitting; decrease if overfitting. |

| Number of layers $L$ | smallest $L$ with $\text{RF}(L) \geq$ context | Use the formula; do not overshoot. |

| Dropout | 0.2 | 0.3-0.5 on small datasets; 0.1 on huge ones. |

| Normalization | weight norm | Layer norm if batches are tiny; avoid batch norm. |

| Optimizer | Adam, lr 1e-3 | SGD + momentum sometimes wins on huge datasets. |

| LR schedule | ReduceLROnPlateau, factor 0.5 | Cosine annealing if you train for many epochs. |

| Gradient clip | 1.0 | Keep it; cheap insurance. |

Common pitfalls and how to debug them#

- The output looks shifted right. You forgot to trim the right-side padding after the conv. Check that y[:, :, : -self.padding] is in your causal conv.

- Loss decreases on train but not on val. Receptive field smaller than the dominant period in your data. Re-run required_layers with the right horizon.

- Loss plateaus very early. Channels too narrow, or learning rate too low. Try doubling channels or lr=3e-3.

- Val loss explodes. Almost certainly batch norm + small batch size, or no dropout on a tiny dataset. Switch to weight norm and add 0.3 dropout.

- Predictions ignore recent values. The network is leaning entirely on long-range structure. Drop a few layers (smaller RF) or add a 1-step skip from the input to the output.

When to not use a TCN#

The architecture has real limits. Skip TCN when:

- Sequences are highly variable in length and you cannot afford padding. Use an LSTM/GRU.

- You need true online streaming inference (one new sample at a time, decisions in microseconds). Causal CNNs can be implemented streaming, but it is fiddlier than just running an LSTM cell.

- The target is much longer than your window (e.g. 100k-step physiological signals where you need 50k of context). Hierarchical models like N-BEATS-X or Informer scale better.

- You need attention-style interpretability. TCN filters can be visualised but the meaning is local; an attention map is much more directly readable.

In every other forecasting situation, TCN is the boring, fast, reliable first thing to try.

FAQ#

How is TCN different from WaveNet?#

WaveNet (2016) is essentially a TCN with a gated activation $\tanh(W_f x) \odot \sigma(W_g x)$ instead of ReLU and a richer conditioning mechanism for audio generation. TCN strips it down to ReLU + residual for general sequence modeling.

Should I use BatchNorm or WeightNorm?#

WeightNorm. BatchNorm’s running statistics are noisy on long sequences and tend to drift; WeightNorm sidesteps the issue entirely. LayerNorm is acceptable but adds a transpose for 1-D conv data layouts.

Do I need positional encodings like in Transformers?#

No. The convolution itself is translation-equivariant by construction; position is implicit in the receptive-field structure.

Direct multi-step or recursive multi-step forecasting?#

Direct (output the full horizon at once) is more accurate because errors do not compound, but uses more parameters and locks the horizon at training time. Recursive (predict one step, feed it back) is flexible but accumulates error. Default to direct.

What if I want quantile forecasts (not just point estimates)?#

Replace the L2 loss with the pinball loss at multiple quantiles and have the head output one channel per quantile. The TCN backbone is unchanged.
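A minimal sketch of the pinball (quantile) loss, assuming the head emits predictions shaped `(batch, n_quantiles, horizon)`. The function name and tensor layout are illustrative choices.

```python
import torch


def pinball_loss(pred, target, quantiles):
    """Pinball loss averaged over batch, quantiles, and horizon.

    pred:      (batch, n_quantiles, horizon), one channel per quantile
    target:    (batch, horizon)
    quantiles: sequence of floats in (0, 1), e.g. (0.1, 0.5, 0.9)
    """
    q = torch.as_tensor(quantiles, dtype=pred.dtype, device=pred.device).view(1, -1, 1)
    err = target.unsqueeze(1) - pred                 # positive when the model under-predicts
    # Under-prediction costs q * err, over-prediction costs (1 - q) * |err|.
    return torch.maximum(q * err, (q - 1.0) * err).mean()


pred = torch.zeros(4, 3, 12, requires_grad=True)     # stand-in for the model output
target = torch.randn(4, 12)
loss = pinball_loss(pred, target, quantiles=(0.1, 0.5, 0.9))
loss.backward()                                      # differentiable, so it drops into the training loop
```

At the 0.5 quantile this reduces to half the mean absolute error, so the median channel behaves like an L1-trained point forecast.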

Summary#

TCN reframed sequence modeling around a single observation: causal dilated convolutions with residual connections give you long memory, parallel training, and stable gradients without any of the recurrent machinery. The math is small ($\text{RF}(L) = 1 + (k-1)(2^L - 1)$ is the only formula you really need), the implementation fits in 60 lines of PyTorch, and the empirical performance against tuned LSTMs is at-least-as-good on most fixed-length benchmarks.

Use it as your first forecasting baseline. If it loses to something more elaborate, you have learned that the elaborate thing was earning its keep. If it wins — which it often does — you have shipped a fast, simple model.

Next chapter we move from convolutions to N-BEATS, which throws away both convolution and recurrence in favour of fully connected blocks plus basis-function expansion, and which outperformed the winning entry of the M4 forecasting competition on the M4 dataset while staying interpretable.

What’s next#

TCN proves a slightly uncomfortable point for the recurrent camp: if you’re careful about causality and receptive field, pure convolution can train faster than RNNs and match or beat them on accuracy. Dilation grows the receptive field as $2^L$, residual connections keep deep stacks trainable, and together they give you a fully parallel architecture that holds its own against LSTM/GRU.

TCN is still “local-then-global,” though: the receptive field is finite and the kernels are fixed. If you want the full “any two points talk directly” flexibility, you’re back to attention. The next chapter on N-BEATS takes a different, more radical route: just MLPs, with no RNN, no convolution, and no attention. On the M4 competition dataset it outperformed the competition’s winning entry, a hand-crafted hybrid of exponential smoothing and an RNN, which is interesting evidence that a clean architecture (double residual stacking + basis-function expansion) is enough to make a plain feed-forward network competitive at the very top.

If your project happens to involve “thousands of related series forecast by one shared model” (retail store sales is the canonical case), N-BEATS is genuinely worth trying. It trains fast, infers fast, and its interpretable configuration gives you a trend/seasonality decomposition for free. That last property is a large part of its appeal: the point is less “deep beats stats” than that it folds the interpretability of classical decomposition into the expressive power of a deep network.

References#

- Bai, S., Kolter, J. Z., & Koltun, V. (2018). An Empirical Evaluation of Generic Convolutional and Recurrent Networks for Sequence Modeling. arXiv:1803.01271.

- van den Oord, A. et al. (2016). WaveNet: A Generative Model for Raw Audio. arXiv:1609.03499.

- Lea, C. et al. (2017). Temporal Convolutional Networks for Action Segmentation and Detection. CVPR.

- Salimans, T., & Kingma, D. P. (2016). Weight Normalization. NeurIPS.
