Time Series Forecasting (5): Transformer Architecture for Time Series

Transformers for time series, end to end: encoder-decoder anatomy, temporal positional encoding, the O(n^2) attention bottleneck, decoder-only forecasting, and patching. With variants (Autoformer, FEDformer, Informer, PatchTST) and a real implementation.

The 2017 Attention Is All You Need paper took the attention mechanism from the previous chapter to its logical extreme: drop the RNN entirely. Transformers stack pure attention into a full sequence model — no recurrence, no hidden state propagating over time. Originally designed for machine translation, the architecture was quickly adapted to every other sequence task, time series included.

Dropping a vanilla NLP Transformer onto a time-series problem runs into two immediate complications. The first is position. Attention is permutation-equivariant: shuffle the input order and the outputs are simply shuffled the same way, so without extra information the model cannot tell one ordering from another. For a time series, order is everything: a temperature curve that goes up-then-down and one that goes down-then-up are entirely different signals. NLP solves this with sinusoidal position encodings; do those still make sense for time series, or should we use learned encodings, or just concatenate calendar features (hour-of-day, day-of-week) directly into the input?

The second complication is cost. Attention’s O(n²) complexity is mostly fine in NLP — a sentence is at most a few hundred tokens — but a month of hourly time-series data is already 720 steps, three months is 2160, and O(n²) blows through memory fast. Around that one constraint, the field has developed four families of fixes: sparse attention (only some token pairs interact), linear attention (kernel tricks bring complexity to O(n)), patching (group adjacent steps into single tokens), and decoder-only stacks (GPT-style autoregressive). This chapter walks through each family with its flagship model — Autoformer, FEDformer, Informer, PatchTST — and ships a clean PyTorch reference you can run.

What You Will Learn#

- The full encoder-decoder Transformer, redrawn for time series

- Why position must be injected, and how sinusoidal / learned / time-aware encodings differ

- What multi-head attention actually learns over a temporal sequence

- Where vanilla attention breaks down (O(n^2)) and the four families of fixes: sparse, linear, patched, decoder-only

- A clean PyTorch reference implementation, plus when to reach for Autoformer / FEDformer / Informer / PatchTST

Prerequisites#

- Self-attention and multi-head attention (Part 4)

- Encoder-decoder architectures and teacher forcing

- PyTorch fundamentals (`nn.Module`, training loops)

Why Transformers for Time Series#

LSTM and GRU process a sequence step by step. Three things follow from that:

- Path length is O(L). Information from step $t-L$ has to ride through $L$ recurrence steps before it can influence step $t$, and gradients travel the same path back. That’s where vanishing gradients come from.

- Training is sequential. Step $t+1$ cannot start until step $t$ has finished, so a GPU sits half-idle.

- The hidden state is a bottleneck. The model has to compress everything it might need from the past into one fixed-size vector.

Self-attention removes all three constraints at once. Every position sees every other position in one matrix multiply, the path length between any two steps is $O(1)$, and the whole sequence is processed in parallel. The cost is memory: storing $n \times n$ attention weights is $O(n^2)$, which we will deal with in the sections on the O(n^2) bottleneck and on patching.
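To make the "one matrix multiply" concrete, here is a minimal single-head sketch of scaled dot-product self-attention. The function name, the random projection matrices, and the sizes are illustrative assumptions, not part of any library API.

```python
import math
import torch

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over an (n, d) sequence."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / math.sqrt(k.shape[-1])   # (n, n): every step scored against every other
    weights = torch.softmax(scores, dim=-1)     # each row sums to 1
    return weights @ v, weights

torch.manual_seed(0)
n, d = 96, 16                                   # illustrative sizes
x = torch.randn(n, d)
w_q, w_k, w_v = (torch.randn(d, d) / math.sqrt(d) for _ in range(3))

out, weights = self_attention(x, w_q, w_k, w_v)
print(out.shape, weights.shape)  # torch.Size([96, 16]) torch.Size([96, 96])
```

The `(n, n)` weight matrix is the quadratic memory cost mentioned above. Nothing in the computation depends on the order of the rows of `x`, which is why position has to be injected separately.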

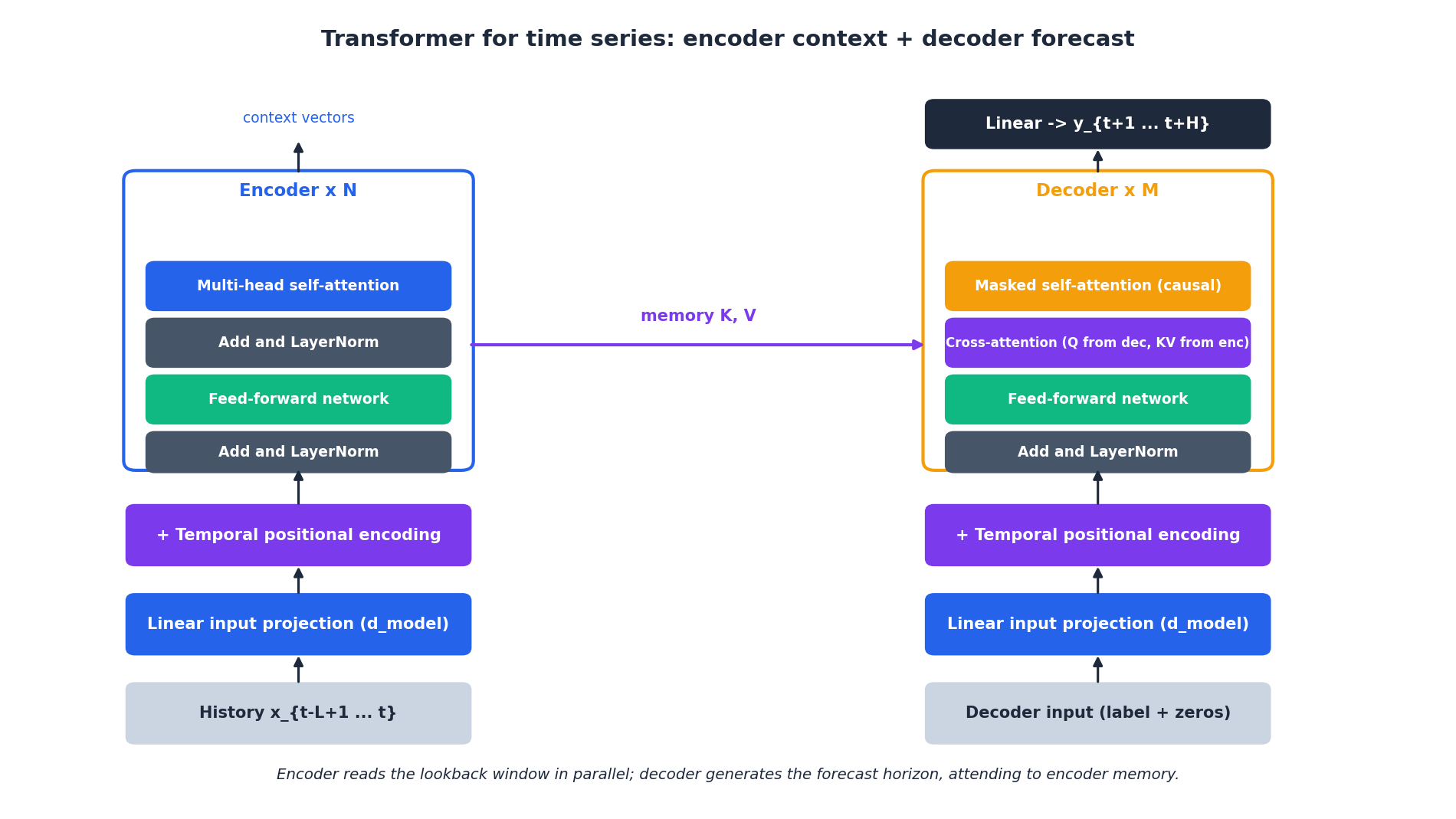

The Architecture, Block by Block#

A time-series Transformer is the original 2017 architecture with three small but consequential changes:

| Component | Original NLP | Time series |
|---|---|---|
| Input embedding | Token embedding lookup | Linear projection of continuous features |
| Positional info | Sinusoidal on token index | Time-aware encoding (calendar features, irregular dt) |
| Output head | Softmax over vocabulary | Linear to a real-valued forecast vector |

Encoder#

Reads the lookback window $x_{t-L+1:t}$ and produces context vectors $M \in \mathbb{R}^{L \times d_{\text{model}}}$. No mask: every position attends to every other.

Decoder#

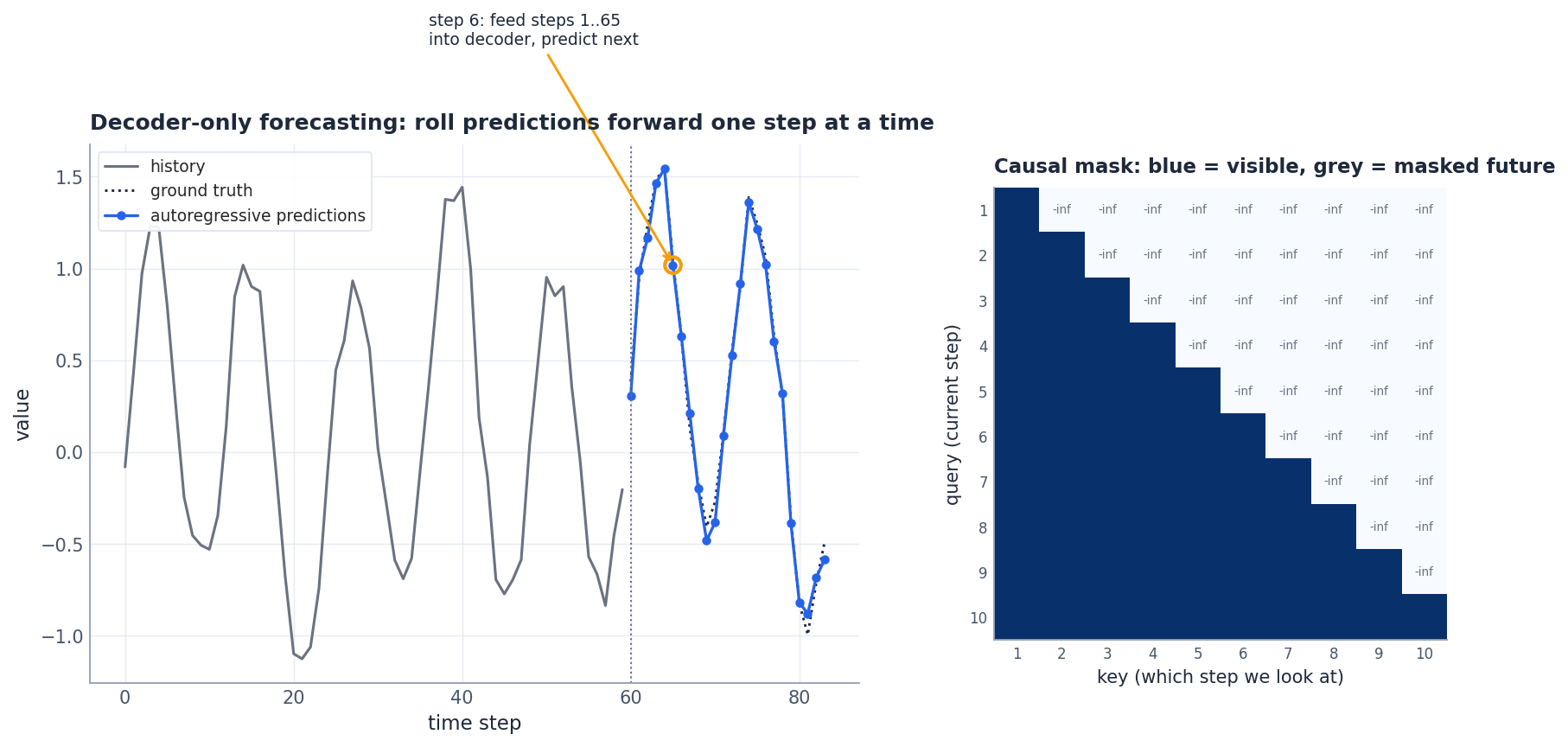

Takes the label window (last $L_{\text{label}}$ steps of history) concatenated with placeholder zeros for the forecast horizon, and emits the prediction $\hat{y}_{t+1:t+H}$. It uses two attention sub-layers per block:

- Masked self-attention with a causal mask, so step $t+k$ can only see steps up to $t+k-1$.

- Cross-attention where queries come from the decoder and keys / values come from the encoder memory $M$. This is the only place the decoder ever looks at the encoder output.
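The causal mask in the first sub-layer can be sketched in a few lines. The helper name `causal_mask` is an assumption for illustration; the convention (0 where attention is allowed, `-inf` strictly above the diagonal, added to the scores before the softmax) is the one PyTorch's attention layers accept for float masks. Each decoder position may attend to itself and to earlier positions.

```python
import torch

def causal_mask(size):
    """Float mask: 0 where attention is allowed, -inf strictly above the diagonal."""
    return torch.triu(torch.full((size, size), float("-inf")), diagonal=1)

mask = causal_mask(4)
scores = torch.zeros(4, 4)                      # uniform scores, to expose the mask's effect
weights = torch.softmax(scores + mask, dim=-1)  # row i spreads its weight over positions 0..i
print(weights)
```

With uniform scores, row `i` ends up with weight `1 / (i + 1)` on each allowed position and exactly zero on every future position.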

The label window: a small but useful trick#

Pure encoder-decoder models often suffer at the boundary between history and forecast. The fix used by Informer / Autoformer is to feed the decoder $L_{\text{label}}$ steps of known history plus $H$ zero-filled placeholders, so the decoder always starts from a known state and rolls forward into the unknown.
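Building that decoder input is a slice and a concatenation. The sketch below assumes a `(batch, steps, features)` layout; the helper name `build_decoder_input` and the sizes are hypothetical.

```python
import torch

def build_decoder_input(history, label_len, horizon):
    """Concatenate the last `label_len` known steps with `horizon` zero placeholders.

    history: (batch, lookback, n_features)
    returns: (batch, label_len + horizon, n_features)
    """
    label = history[:, -label_len:, :]
    placeholder = torch.zeros(history.size(0), horizon, history.size(2),
                              dtype=history.dtype, device=history.device)
    return torch.cat([label, placeholder], dim=1)

history = torch.randn(8, 96, 7)
dec_in = build_decoder_input(history, label_len=48, horizon=24)
print(dec_in.shape)  # torch.Size([8, 72, 7])
```

The forecast is then read from the last `horizon` positions of the decoder output.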

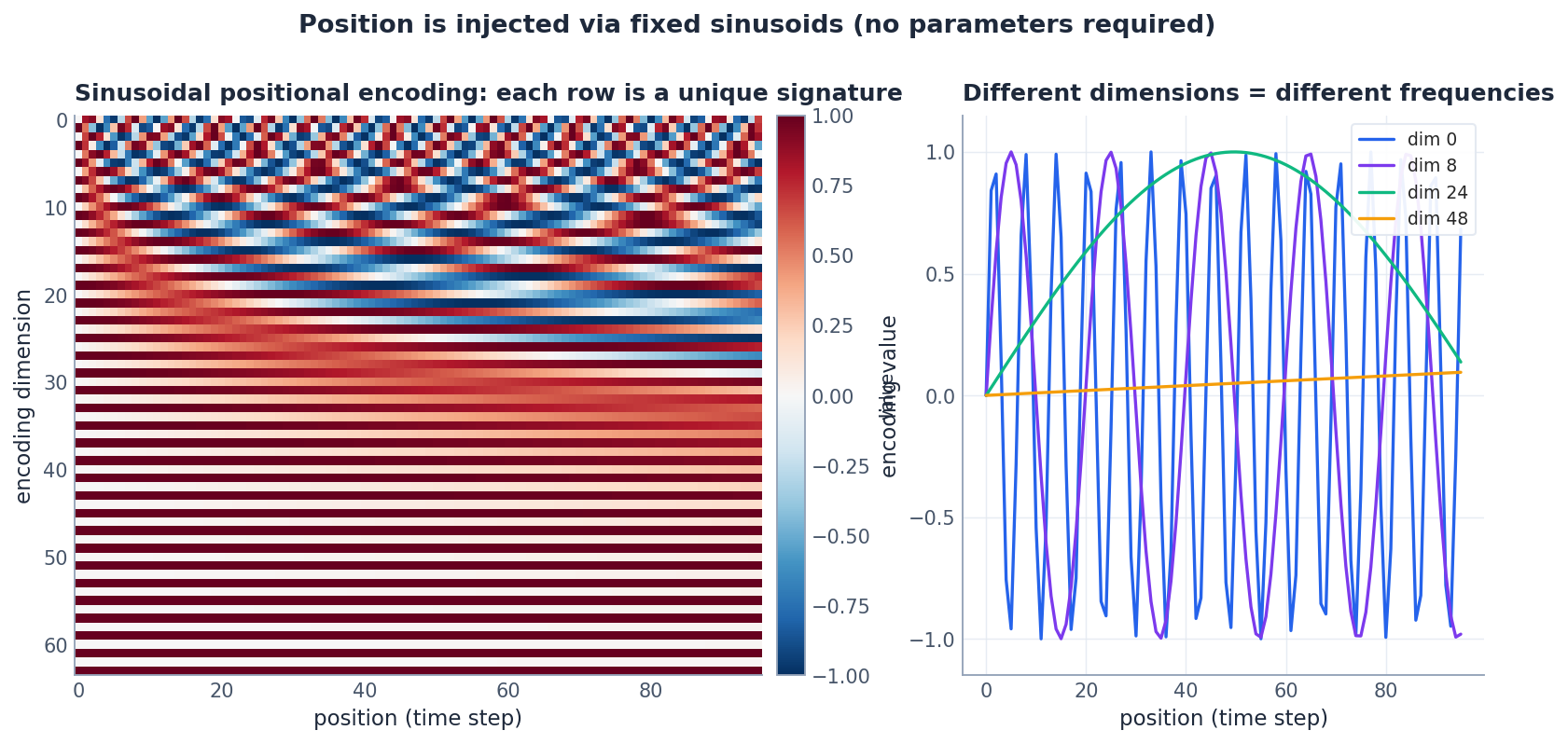

Positional Encoding for Time#

The original Transformer injects position with fixed sinusoids:

$$ \text{PE}_{(p, 2i)} = \sin\!\left(\frac{p}{10000^{2i/d}}\right), \qquad \text{PE}_{(p, 2i+1)} = \cos\!\left(\frac{p}{10000^{2i/d}}\right). $$

Each position $p$ ends up with a unique signature built from a geometric series of frequencies. Low-index dimensions oscillate fast (they encode short-range position), high-index dimensions oscillate slowly (they encode long-range position).

For time series we usually want richer positional information than just “step index”:

- Calendar features: hour-of-day, day-of-week, month, holiday flag. Each gets its own learned embedding and is added to the input.

- Irregular sampling: replace position $p$ with the actual timestamp $\tau_p$, normalised. Used by Time2Vec and Continuous-Time Transformer.

- Relative position: encode $\tau_q - \tau_k$ inside the attention score itself (T5 / TUPE style). Better for very long contexts.
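A minimal sketch of the first option, combining the sinusoidal table with learned calendar embeddings. The class name `CalendarEmbedding`, the choice of hour-of-day and day-of-week only, and summing (rather than concatenating) the two signals are assumptions made for illustration.

```python
import math
import torch
import torch.nn as nn

def sinusoidal_pe(length, d_model):
    """Sinusoidal table of shape (length, d_model); d_model is assumed even."""
    pos = torch.arange(length, dtype=torch.float32).unsqueeze(1)        # (length, 1)
    div = torch.exp(torch.arange(0, d_model, 2, dtype=torch.float32)
                    * (-math.log(10000.0) / d_model))                    # 1 / 10000^(2i/d)
    pe = torch.zeros(length, d_model)
    pe[:, 0::2] = torch.sin(pos * div)
    pe[:, 1::2] = torch.cos(pos * div)
    return pe

class CalendarEmbedding(nn.Module):
    """Learned embeddings for hour-of-day and day-of-week, summed."""
    def __init__(self, d_model):
        super().__init__()
        self.hour = nn.Embedding(24, d_model)
        self.dow = nn.Embedding(7, d_model)

    def forward(self, hour, dow):            # both: (batch, length) integer tensors
        return self.hour(hour) + self.dow(dow)

length, d_model = 96, 64
steps = torch.arange(length)                  # hourly steps starting at hour 0 of day 0
hour = (steps % 24).unsqueeze(0)
dow = ((steps // 24) % 7).unsqueeze(0)

pe = sinusoidal_pe(length, d_model)
encoding = pe.unsqueeze(0) + CalendarEmbedding(d_model)(hour, dow)
print(encoding.shape)  # torch.Size([1, 96, 64])
```

The result is added to the projected input features before the first attention layer.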


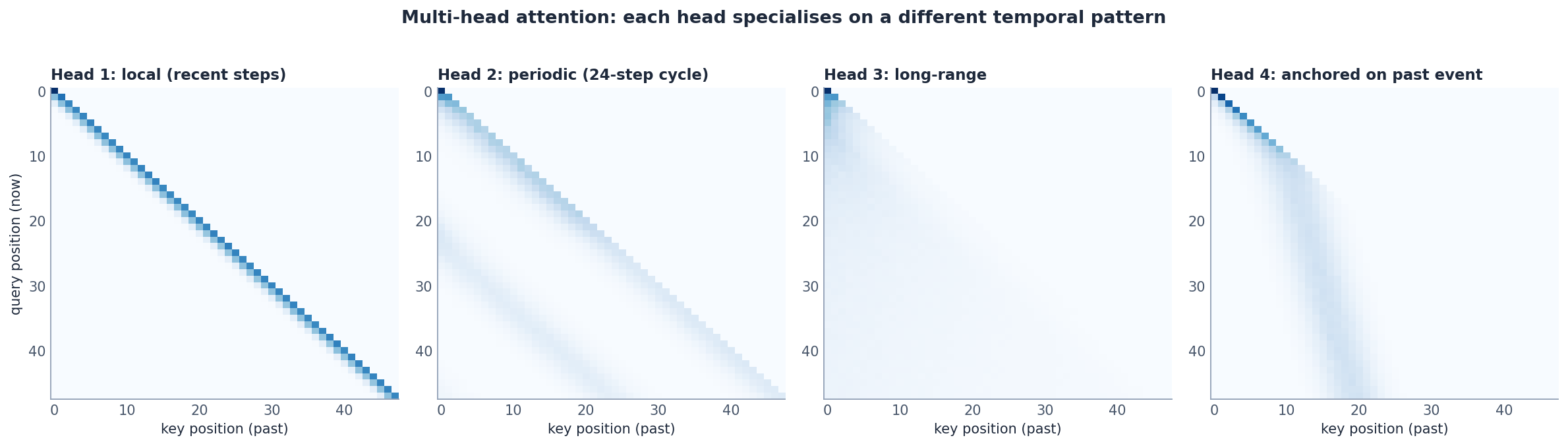

What Multi-Head Attention Learns#

A single attention head produces one attention pattern per query, so it tends to capture one kind of relationship at a time. Multi-head attention splits the model into $h$ parallel attention computations on $d_k = d_{\text{model}} / h$-dimensional projections, then concatenates the results. For time series, different heads tend to specialise:

| Head pattern | What the model is doing |
|---|---|
| Local (diagonal) | Mostly an autoregressive moving average |
| Periodic stripes | Locking onto a known cycle (24-hour, weekly) |
| Long-range diffuse | Pulling in slow trend information |
| Anchored bands | Latching onto a specific past event (spike, regime shift) |

This is also where some interpretability comes from: averaging the final layer’s attention over heads gives a rough picture of which historical steps the forecast draws on, although attention weights are only a proxy for true feature importance.
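Per-head attention maps can be pulled out of `nn.MultiheadAttention` by passing `average_attn_weights=False`. The sketch below uses an untrained layer and random input, so the maps themselves are meaningless; it only shows the shapes and the head-averaging step. All sizes are illustrative.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
mha = nn.MultiheadAttention(embed_dim=64, num_heads=4, batch_first=True)
x = torch.randn(2, 96, 64)                       # (batch, steps, d_model)

with torch.no_grad():
    _, per_head = mha(x, x, x, need_weights=True, average_attn_weights=False)
print(per_head.shape)                            # torch.Size([2, 4, 96, 96])

head_avg = per_head.mean(dim=1)                  # (batch, query step, key step)
top_keys = head_avg[0, -1].topk(5).indices       # history steps the last query weights most
```

Plotting `per_head[0, h]` for each head `h` of a trained model is the usual way to look for the diagonal, striped, and banded patterns in the table above.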

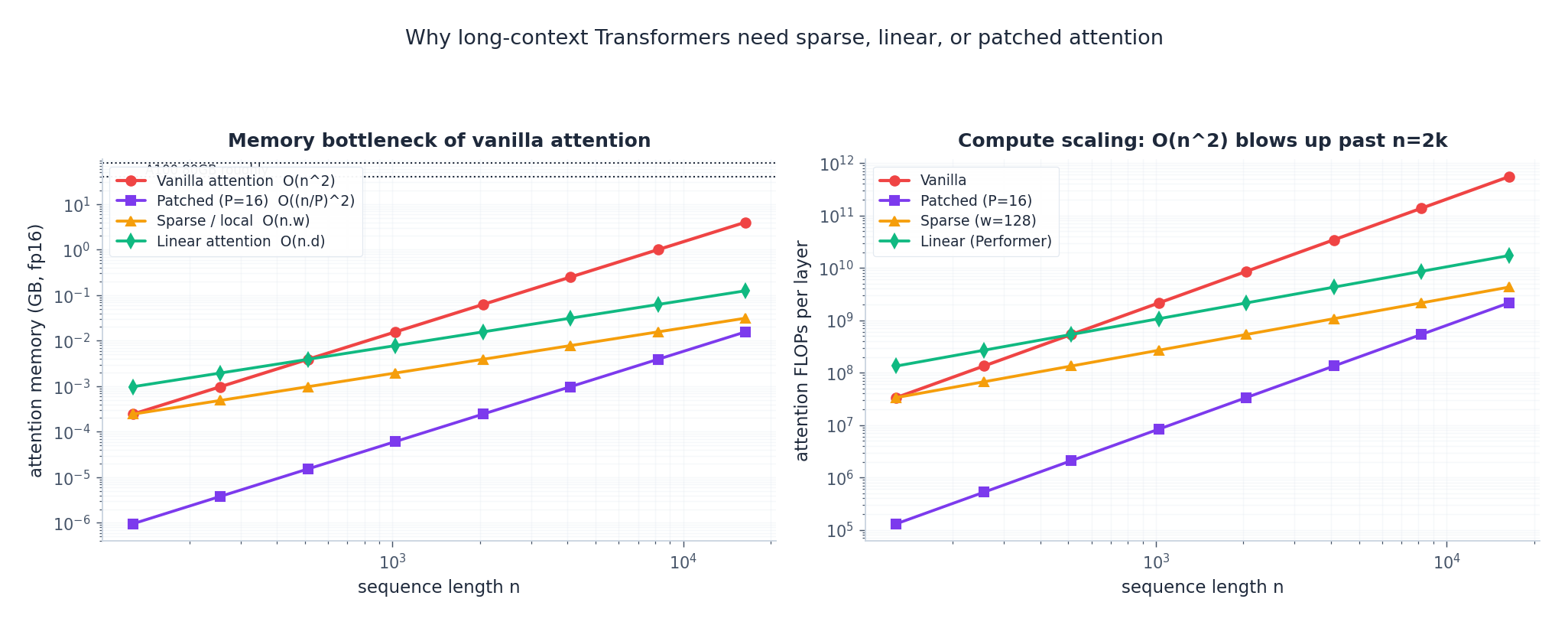

The O(n^2) Bottleneck#

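Storing the attention weights of one layer for one sequence, with $h$ heads in 16-bit precision (2 bytes per entry), takes

$$M_{\text{attn}} = h \cdot n^2 \cdot 2 \;\text{bytes}.$$

With $h = 8$ this is fine at $n=512$ (4 MB) and uncomfortable at $n=4096$ (256 MB); at $n=16384$ a single layer needs about 4 GB just for the attention matrices, before multiplying by batch size. Compute scales the same way: each layer is $O(n^2 d_{\text{model}})$ FLOPs.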
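The arithmetic is easy to check. The snippet assumes $h = 8$ heads and 2-byte entries, which is the combination that reproduces the figures quoted above; the function name is illustrative.

```python
def attention_matrix_bytes(n, heads=8, bytes_per_element=2):
    """Bytes needed to store one layer's attention weights for one sequence."""
    return heads * n * n * bytes_per_element

for n in (512, 4096, 16384):
    mib = attention_matrix_bytes(n) / 2**20
    print(f"n={n:>6}: {mib:,.0f} MiB")
# n=   512: 4 MiB
# n=  4096: 256 MiB
# n= 16384: 4,096 MiB
```

Multiply by batch size and by the number of layers whose activations are kept for the backward pass to get a training-time estimate.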

There are four families of fixes, in increasing order of “how much they change the model”:

- Sparse attention (Longformer, BigBird, Informer’s ProbSparse): only compute scores for a sparse subset of $(q, k)$ pairs. Cost $O(n \cdot w)$ where $w$ is a window or number of selected keys.

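- Linear attention (Performer, Linformer, Nystromformer): approximate softmax attention with a kernel feature map or a low-rank projection, so the full $n \times n$ matrix is never formed and cost becomes linear in $n$ (for the kernel variants, $O(n \cdot d^2)$).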

- Patching (PatchTST): shorten the sequence itself by grouping consecutive steps into patches. We come back to this in the patching section below.

- Decoder-only with KV cache (next section): you still pay $O(n^2)$ in training, but inference is incremental.
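As a rough illustration of the first family, a sliding-window pattern keeps only pairs within a fixed distance of each other. The sketch counts how many score entries survive; it builds a dense boolean mask purely for illustration, whereas real sparse-attention kernels avoid materialising the full matrix. The helper name and the window size are assumptions.

```python
import torch

def sliding_window_mask(n, window):
    """Boolean (n, n) mask: True where |query index - key index| <= window."""
    idx = torch.arange(n)
    return (idx[:, None] - idx[None, :]).abs() <= window

n, window = 512, 16
mask = sliding_window_mask(n, window)
kept = int(mask.sum())
print(kept, n * n, f"{kept / (n * n):.1%}")  # 16624 262144 6.3%
```

The kept count grows as $O(n \cdot w)$ rather than $O(n^2)$, which is where the saving comes from.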

In practice, for forecasting horizons up to a few hundred steps with lookback windows under 2k, vanilla attention is fine. Past that, patching is the most cost-effective change — it usually improves accuracy and slashes compute.

Decoder-Only Autoregressive Forecasting#

GPT-style decoder-only Transformers have largely won in NLP. The same recipe works for forecasting: drop the encoder, train one stack with a causal mask, and roll predictions forward one step at a time.
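A minimal sketch of that recipe, assuming a univariate series, learned position embeddings, and a causal stack built from `nn.TransformerEncoderLayer` (a decoder-only model has no cross-attention, so the encoder layer with a causal mask is the matching building block). `TinyDecoderOnly` and `rollout` are hypothetical names, and the model here is untrained.

```python
import torch
import torch.nn as nn

class TinyDecoderOnly(nn.Module):
    """Causal Transformer stack that predicts the next step from all previous steps."""
    def __init__(self, n_features=1, d_model=32, nhead=4, num_layers=2, max_len=512):
        super().__init__()
        self.proj = nn.Linear(n_features, d_model)
        self.pos = nn.Embedding(max_len, d_model)            # learned positions (assumption)
        layer = nn.TransformerEncoderLayer(d_model, nhead, dim_feedforward=4 * d_model,
                                           batch_first=True, norm_first=True)
        self.blocks = nn.TransformerEncoder(layer, num_layers, enable_nested_tensor=False)
        self.head = nn.Linear(d_model, n_features)

    def forward(self, x):                                     # x: (batch, steps, n_features)
        n = x.size(1)
        mask = torch.triu(torch.full((n, n), float("-inf"), device=x.device), diagonal=1)
        h = self.proj(x) + self.pos(torch.arange(n, device=x.device))
        return self.head(self.blocks(h, mask=mask))           # output at step i predicts step i+1

@torch.no_grad()
def rollout(model, history, horizon):
    """Feed each prediction back in as input. No KV cache: every step re-encodes everything."""
    model.eval()
    seq = history
    for _ in range(horizon):
        next_step = model(seq)[:, -1:, :]
        seq = torch.cat([seq, next_step], dim=1)
    return seq[:, -horizon:, :]

model = TinyDecoderOnly()
forecast = rollout(model, torch.randn(4, 48, 1), horizon=12)
print(forecast.shape)  # torch.Size([4, 12, 1])
```

Training would use teacher forcing: feed `x[:, :-1]` and regress the output onto `x[:, 1:]`.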


Trade-offs vs. encoder-decoder:

| Property | Encoder-decoder | Decoder-only |
|---|---|---|
| Training cost | Two stacks | One stack |
| Inference latency | One forward pass for all $H$ | $H$ forward passes (with KV cache, much cheaper) |
| Exposure bias | Avoided when the whole horizon is emitted in one pass | Present with teacher-forced training, unless you use scheduled sampling |
| Pre-training transfer | Awkward | Natural: this is how foundation TS models such as TimesFM and Lag-Llama are built |

For forecasting from a single foundation model on many tasks, decoder-only has become a common choice.

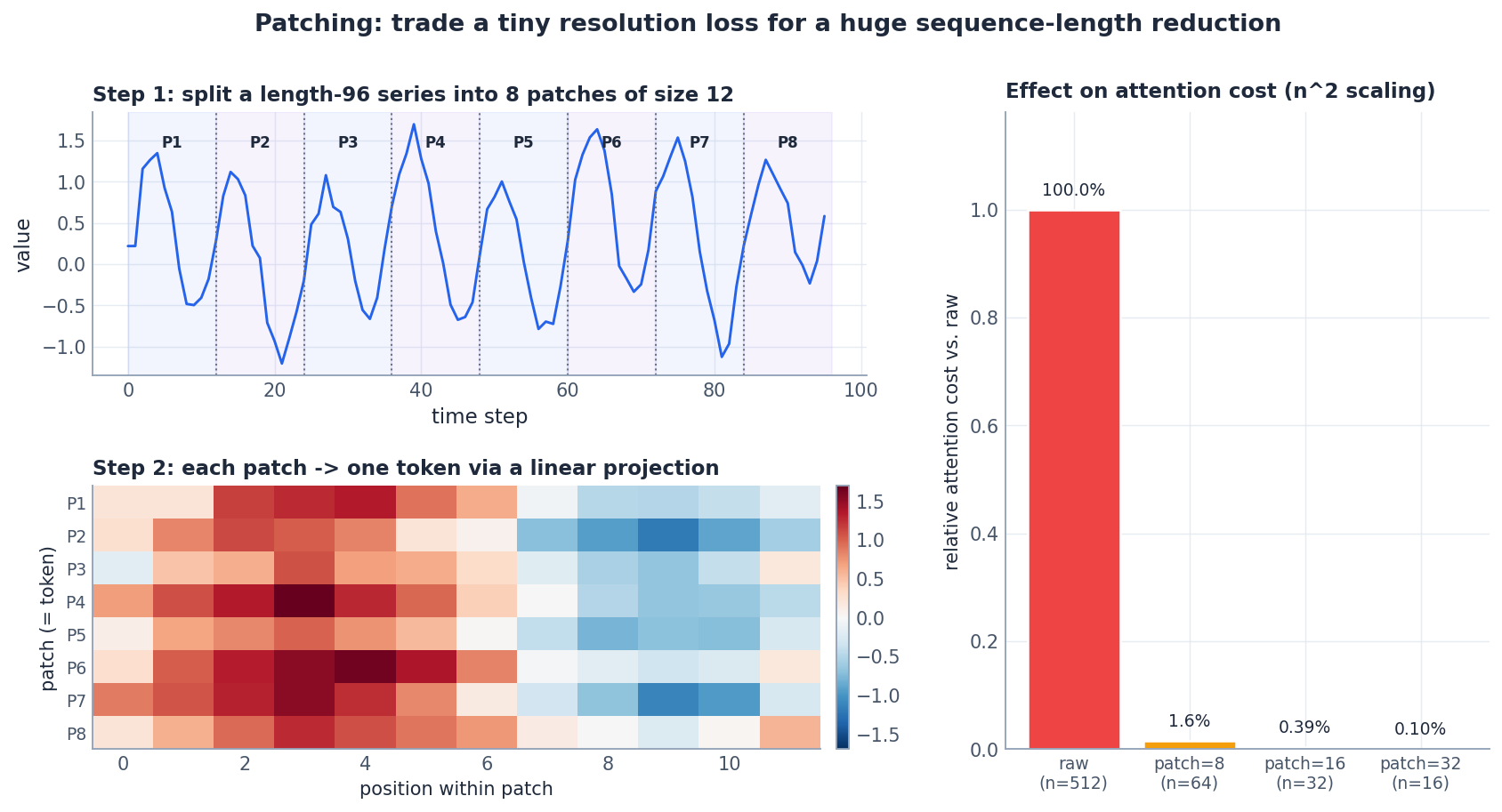

Patching: The Single Best Speedup#

PatchTST (Nie et al., ICLR 2023) made a quietly revolutionary observation: time steps are not the right tokens. A length-512 hourly series has way more tokens than a typical NLP sentence, but each “token” carries almost no information. Group them into patches of size $P$ and you get $\lceil L / P \rceil$ tokens that each summarise a short waveform.

Why patching helps so much:

- Attention cost drops by a factor of $P^2$. With $P=16$ on $L=512$, you go from 262k attention entries per head to ~1k.

- Each token is meaningful. A patch of 12 hourly values captures half a day — a useful unit. A single hour does not.

- Locality bias for free. The local pattern inside a patch is handled by the linear projection; attention only needs to model cross-patch (longer-range) interactions.

- Per-channel independence. PatchTST treats each variable as its own sequence, shares weights, and avoids spurious cross-channel attention in early training.
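The patching and channel-independence steps can be sketched with `Tensor.unfold`. This is a simplified illustration, not the PatchTST reference code: the class name is hypothetical, patches are non-overlapping (stride equal to patch length), and any tail that does not fill a whole patch is dropped rather than padded.

```python
import torch
import torch.nn as nn

class PatchEmbedding(nn.Module):
    """Split each channel into patches and project every patch to d_model."""
    def __init__(self, patch_len=16, stride=16, d_model=64):
        super().__init__()
        self.patch_len, self.stride = patch_len, stride
        self.proj = nn.Linear(patch_len, d_model)

    def forward(self, x):                                      # x: (batch, steps, channels)
        b, _, c = x.shape
        x = x.permute(0, 2, 1)                                 # (batch, channels, steps)
        patches = x.unfold(-1, self.patch_len, self.stride)    # (batch, channels, n_patches, patch_len)
        tokens = self.proj(patches)                            # (batch, channels, n_patches, d_model)
        return tokens.reshape(b * c, -1, tokens.size(-1))      # channels folded into the batch

x = torch.randn(8, 512, 7)
tokens = PatchEmbedding()(x)
print(tokens.shape)  # torch.Size([56, 32, 64])
```

Attention now runs over 32 tokens per channel instead of 512 steps, and folding channels into the batch dimension means one set of weights is shared across all variables.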


A Reference Implementation#

Putting it together with nn.Transformer:
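What follows is a minimal sketch of such a model rather than a tuned implementation. It assumes a `(batch, steps, features)` layout, fixed sinusoidal positions, the label-window decoder input described earlier, and hypothetical hyperparameters; the class name `TimeSeriesTransformer` is illustrative.

```python
import math
import torch
import torch.nn as nn

class TimeSeriesTransformer(nn.Module):
    def __init__(self, n_features, d_model=64, nhead=4, num_layers=2,
                 dim_feedforward=256, dropout=0.1, max_len=1024):
        super().__init__()
        self.input_proj = nn.Linear(n_features, d_model)       # continuous features -> d_model
        self.transformer = nn.Transformer(
            d_model=d_model, nhead=nhead,
            num_encoder_layers=num_layers, num_decoder_layers=num_layers,
            dim_feedforward=dim_feedforward, dropout=dropout,
            activation="gelu", batch_first=True, norm_first=True)
        self.head = nn.Linear(d_model, n_features)              # real-valued forecast
        # Fixed sinusoidal table, stored as a buffer so it follows .to(device).
        pos = torch.arange(max_len, dtype=torch.float32).unsqueeze(1)
        div = torch.exp(torch.arange(0, d_model, 2, dtype=torch.float32)
                        * (-math.log(10000.0) / d_model))
        pe = torch.zeros(max_len, d_model)
        pe[:, 0::2] = torch.sin(pos * div)
        pe[:, 1::2] = torch.cos(pos * div)
        self.register_buffer("pe", pe)

    def embed(self, x):
        return self.input_proj(x) + self.pe[: x.size(1)]

    def forward(self, src, tgt):
        # src: (batch, lookback, n_features); tgt: (batch, label_len + horizon, n_features)
        n = tgt.size(1)
        tgt_mask = torch.triu(torch.full((n, n), float("-inf"), device=tgt.device), diagonal=1)
        out = self.transformer(self.embed(src), self.embed(tgt), tgt_mask=tgt_mask)
        return self.head(out)

model = TimeSeriesTransformer(n_features=7)
src = torch.randn(8, 96, 7)
tgt = torch.cat([src[:, -48:], torch.zeros(8, 24, 7)], dim=1)   # label window + placeholders
pred = model(src, tgt)[:, -24:]                                  # keep only the forecast horizon
print(pred.shape)  # torch.Size([8, 24, 7])
```

Calendar embeddings, if used, would be added inside `embed` alongside the sinusoidal table.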


A few production notes:

- `norm_first=True` (pre-LN) is more stable for deep stacks; the original post-LN can require warm-up to converge.

- GELU rather than ReLU in the FFN: standard since BERT and consistently better in our experience.

- Always normalise per series (z-score) before the model and invert at the output. Forgetting this is the most common reason a Transformer “won’t train” on time series.
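The normalise-then-invert step from the last note can be sketched as a pair of helpers. The names are illustrative, statistics are computed over the time axis of each input window, and the commented-out model call is hypothetical.

```python
import torch

def normalise(x, eps=1e-5):
    """Z-score each series in the batch over its time axis. x: (batch, steps, channels)."""
    mean = x.mean(dim=1, keepdim=True)
    std = x.std(dim=1, keepdim=True, unbiased=False) + eps
    return (x - mean) / std, (mean, std)

def denormalise(y, stats):
    """Map model outputs back to the original scale."""
    mean, std = stats
    return y * std + mean

x = 50.0 + 10.0 * torch.randn(4, 96, 3)
x_norm, stats = normalise(x)
# forecast_norm = model(x_norm)                   # hypothetical model call
# forecast = denormalise(forecast_norm, stats)    # back to original units
restored = denormalise(x_norm, stats)
```

Because the statistics come from the input window alone, the same code works at inference time without access to training-set statistics.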

Variants and How to Pick One#

| Variant | Key idea | Best when | Year |
|---|---|---|---|
| Vanilla | Encoder-decoder + sinusoidal PE | Lookback < 1k, you want a baseline | 2017 |
| Informer | ProbSparse attention + label window | Very long lookback (5k-10k) | 2021 |
| Autoformer | Series decomposition + auto-correlation in place of self-attention | Strong, clean seasonality | 2021 |
| FEDformer | Attention in the frequency domain | Periodic data, long horizons | 2022 |
| PatchTST | Patching + channel independence | Most multivariate forecasting | 2023 |
| iTransformer | Treat each variable as a token, attend across variables | Many correlated channels | 2024 |

If you’re starting fresh in 2024-2025, our default recommendation is PatchTST or iTransformer, both of which report better results than the older variants on the standard ETT / Electricity / Traffic benchmarks while being simpler to implement and faster to train.

Performance and Engineering#

Forecast quality#

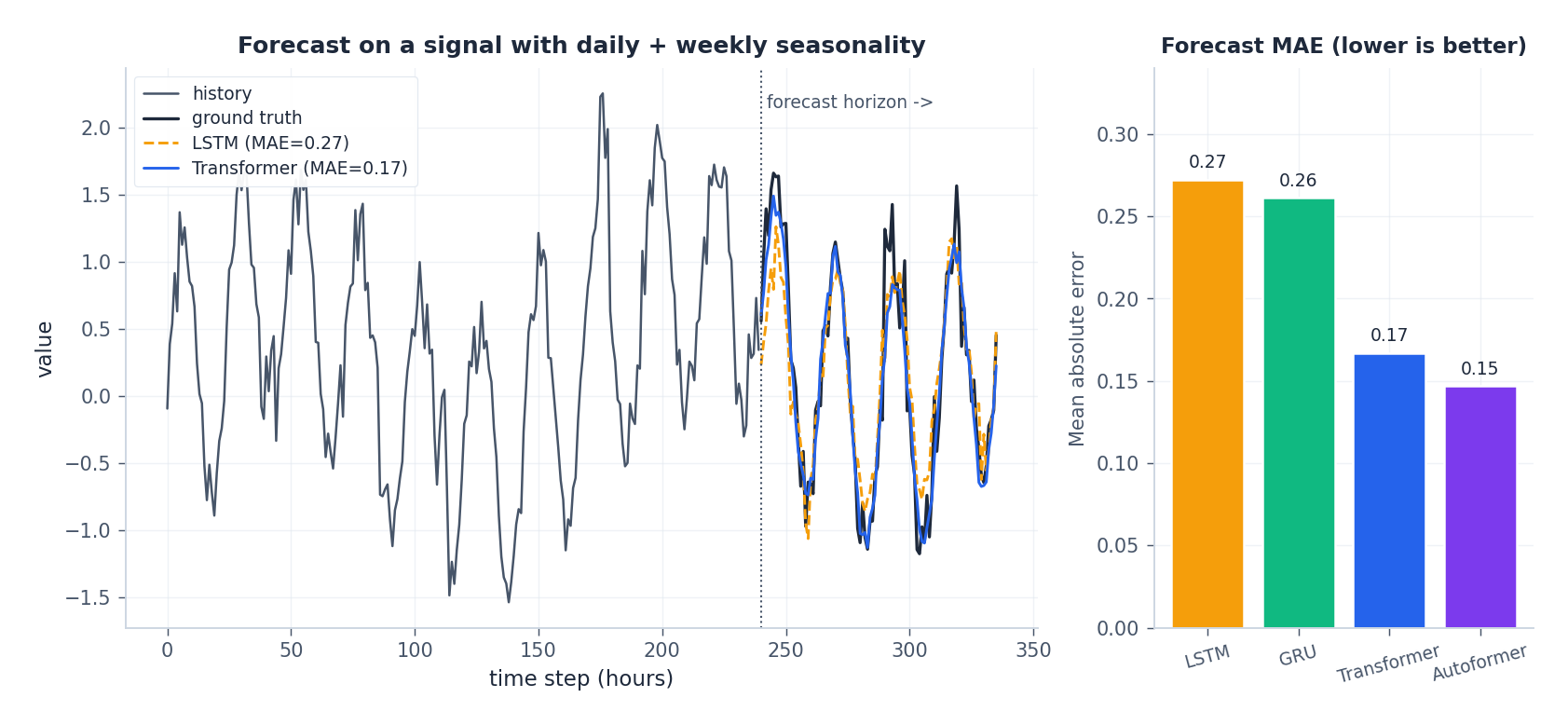

We forecast a 96-step horizon on a synthetic signal with daily and weekly seasonality plus random spikes. The Transformer cleanly captures both seasonalities; the LSTM tracks the dominant daily cycle but drifts on the weekly component.

Training recipe (the boring stuff that matters)#

- Optimizer: AdamW, $\beta = (0.9, 0.95)$ (the GPT-3 setting — the default 0.999 is too sluggish for time series).

- Schedule: linear warm-up over the first 5-10% of steps, then cosine decay to zero. Without warm-up, deep Transformers often diverge.

- Learning rate: start at $1\text{e-}4$ for $d_{\text{model}}=128$, scale down for larger models.

- Gradient clipping: $\|g\| \le 1.0$. Non-negotiable.

- Batch size: as large as fits. Transformers benefit dramatically from large batches for stability.

- Mixed precision (`torch.cuda.amp` or bfloat16): typically a 2-3x speedup with little or no accuracy loss.

- Patience: forecasting Transformers usually need 100-300 epochs; language Transformers’ 3-10 epochs do not transfer.
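The optimizer, schedule, and clipping items of this recipe fit in a short loop. This is a sketch under stated assumptions: the `nn.Linear` model and random data are stand-ins, `warmup_cosine` is a hypothetical helper, the weight-decay value is illustrative, and mixed precision is left out to keep the example CPU-runnable.

```python
import math
import torch
import torch.nn as nn

def warmup_cosine(step, total_steps, warmup_frac=0.05):
    """LR multiplier: linear warm-up, then cosine decay to zero."""
    warmup = max(1, int(total_steps * warmup_frac))
    if step < warmup:
        return (step + 1) / warmup
    progress = (step - warmup) / max(1, total_steps - warmup)
    return 0.5 * (1.0 + math.cos(math.pi * progress))

model = nn.Linear(8, 1)                          # stand-in for the forecasting model
total_steps = 200
optimizer = torch.optim.AdamW(model.parameters(), lr=1e-4,
                              betas=(0.9, 0.95), weight_decay=0.01)
scheduler = torch.optim.lr_scheduler.LambdaLR(
    optimizer, lambda s: warmup_cosine(s, total_steps))

x, y = torch.randn(64, 8), torch.randn(64, 1)    # random stand-in data
for step in range(total_steps):
    loss = nn.functional.mse_loss(model(x), y)
    optimizer.zero_grad()
    loss.backward()
    torch.nn.utils.clip_grad_norm_(model.parameters(), max_norm=1.0)   # ||g|| <= 1.0
    optimizer.step()
    scheduler.step()
```

`LambdaLR` multiplies the base learning rate by the returned factor, so the peak rate is the `lr` passed to `AdamW`.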

Production: serving cost and the RevIN trick#

- Use `torch.compile` (PyTorch 2.x): 1.5-2x latency win for free.

- For decoder-only deployments, cache K and V across steps so each new prediction is $O(n)$ rather than $O(n^2)$.

- Reversible Instance Normalization (RevIN, ICLR 2022): normalise each input window with its own mean and standard deviation, in training and at inference, then denormalise at the output. A small change that targets the failure mode where the model was trained on history whose level or scale has since drifted away from production data.
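The K/V caching idea can be checked on a single attention head: processing one new step at a time against cached keys and values gives the same result as full causal attention. `KVCache` and `full_causal_attention` are hypothetical names, and the random tensors stand in for already-projected queries, keys, and values.

```python
import math
import torch

def full_causal_attention(q, k, v):
    """Reference: causal attention over the whole sequence at once, O(n^2)."""
    n = q.size(0)
    scores = q @ k.T / math.sqrt(q.size(-1))
    scores = scores + torch.triu(torch.full((n, n), float("-inf")), diagonal=1)
    return torch.softmax(scores, dim=-1) @ v

class KVCache:
    """Append-only store of past keys and values for one attention head."""
    def __init__(self):
        self.k, self.v = None, None

    def step(self, q_t, k_t, v_t):
        # q_t, k_t, v_t: (1, d) projections of the newest step only
        self.k = k_t if self.k is None else torch.cat([self.k, k_t], dim=0)
        self.v = v_t if self.v is None else torch.cat([self.v, v_t], dim=0)
        scores = q_t @ self.k.T / math.sqrt(q_t.size(-1))     # (1, t): O(t) work per new step
        return torch.softmax(scores, dim=-1) @ self.v

torch.manual_seed(0)
q, k, v = torch.randn(3, 32, 16).unbind(0)
cache = KVCache()
incremental = torch.cat([cache.step(q[t:t + 1], k[t:t + 1], v[t:t + 1]) for t in range(32)])
matches = torch.allclose(incremental, full_causal_attention(q, k, v), atol=1e-5)
print(matches)  # True
```

In a full model there is one such cache per head per layer, and memory for the cache grows linearly with the number of steps generated.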

Common Pitfalls#

| Symptom | Likely cause | Fix |
|---|---|---|
| Loss flat at the variance of the data | Forgot to normalise the input | z-score per series, denormalise at output |
| Loss diverges after a few hundred steps | No warm-up, post-LN with high LR | Linear warm-up + norm_first=True |
| Validation collapses to a constant | Decoder leaks future via wrong mask | Verify tgt_mask blocks exactly the strictly upper triangle (all future positions) |
| OOM at lookback > 1024 | Vanilla attention | Patching first, then sparse / linear if needed |
| Forecast tracks recent value, ignores trend | Position not injected, or PE swamped by feature scale | Scale PE to feature norm; add calendar features |
| “Transformer is worse than LSTM” | Dataset under 10k samples, model under-regularised | Smaller model, dropout 0.2-0.3, weight decay |

Summary#

The Transformer is not magic — it’s the simplest architecture that gives every time step direct access to every other, in parallel. For time series, three things matter:

- Position is the input: without good positional information, a Transformer cannot tell a Monday from a Friday. Use sinusoidal PE plus calendar features (or timestamp-based encodings for irregularly sampled data).

- Vanilla attention is O(n^2) — and that’s only a problem past a few thousand steps. The cheapest fix is patching, which usually improves accuracy too.

- Pick the variant that matches the data — PatchTST or iTransformer for most multivariate problems, FEDformer / Autoformer for clean seasonality, decoder-only for foundation-model-style transfer.

Everything in this article is just engineering on top of that.

What’s next#

The Transformer pushes attention to its conclusion — no recurrence, just attention and feed-forward layers stacked all the way. After it dominated NLP, the time-series community spent a few years localizing it: position-encoding schemes, O(n²) complexity workarounds, and seasonality/trend-aware inductive biases. Out of that work came Autoformer, FEDformer, PatchTST, and a small zoo of variants.

One sharp problem none of those generic variants fully solved: long-horizon forecasting. When you need to predict 168 future steps from 720 past steps, and often from far longer windows (common in energy, weather, IoT), the O(n²) cost of the vanilla Transformer becomes a real burden in both training and deployment. The chapter on Informer, the AAAI 2021 best paper, attacks the problem with three coordinated changes: ProbSparse attention (O(n²) to O(n log n)), encoder distilling (each layer halves the sequence), and a generative decoder (predict the whole horizon in one pass instead of autoregressively). Together they deliver 6-10x speedup and better accuracy than vanilla Transformer on long-horizon benchmarks.

Before you jump to Informer, two intermediate architectures are worth your time: TCN trades attention for causal dilated convolutions and gets parallel training out of the deal, and N-BEATS uses a pure MLP stack for an interpretable trend/seasonality decomposition. Both are proof that “non-attention deep architectures” can be cheaper, more interpretable, and sometimes better on specific time-series workloads.
