Transfer Learning (1): Fundamentals and Core Concepts

A beginner-friendly guide to transfer learning fundamentals: why it works, formal definitions, taxonomy, negative transfer, and a complete feature-transfer implementation with MMD domain adaptation.

You spent two weeks training an ImageNet classifier on a rack of GPUs. On Monday morning, your team lead asks for a chest X-ray pneumonia model, and the entire labeled dataset is two hundred images. Do you book another two weeks of GPU time and start from scratch?

Of course not. You use what the ImageNet model already knows about edges, textures, and shapes, swap out the last layer, and fine-tune on the X-rays. Two hours later, you have a model that beats anything you could have trained from random weights with so little data. That’s transfer learning, and it’s why most real-world deep learning projects ship in days instead of months.

This article is the foundation for the rest of the series. It covers the seven things you need before the more specialized topics make sense:

- Why training from scratch is not always an option;

- The formal definitions of domain, task, source and target;

- The taxonomy — inductive, transductive, unsupervised;

- What transfers at each layer of a deep network;

- Negative transfer: when borrowed knowledge backfires;

- The Ben-David bound and MMD — how to tell whether transfer will work;

- A runnable implementation of feature transfer with MMD alignment.

Prerequisites: basic ML vocabulary (loss, gradient descent, classification) and familiarity with Python.

Why We Need Transfer Learning#

The dilemma of training from scratch#

A textbook supervised pipeline assumes three things, but none of them hold in real industrial settings:

- Massive labeled data. Modern deep networks need tens of thousands to millions of labeled examples to generalize. Few real teams have that.

- Plentiful compute. Training a ResNet-50 from random initialization costs hundreds of GPU-hours; a Transformer from scratch can run into the tens of thousands.

- No knowledge reuse. Even strongly related tasks, like chest X-rays vs. chest CT, start back at zero.

Real medical projects, by contrast, give you a few hundred cases of a rare disease, annotators who must be board-certified physicians, and a deadline measured in weeks. Transfer learning resolves the mismatch with a simple promise: use a model trained on a large generic dataset and adapt it cheaply to your data-scarce task.

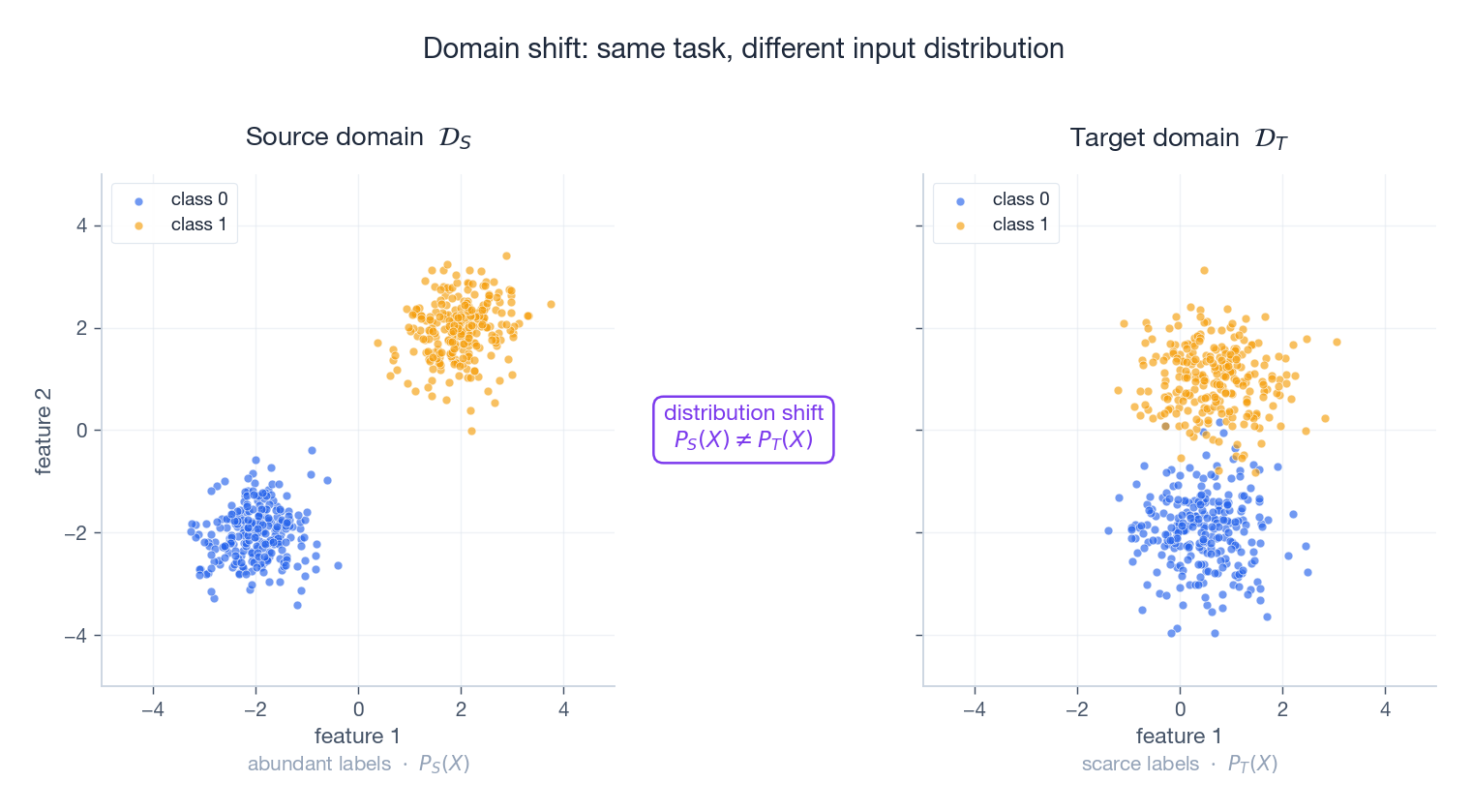

The picture below shows what “data-scarce, distribution-shifted” actually looks like. Same two classes, but the target domain has been rotated and shifted — a faithful cartoon of the kind of covariate shift you encounter when moving from ImageNet photos to medical scans, or from one hospital’s scanner to another’s.

The intuition#

Humans constantly transfer knowledge:

- A cyclist learns to ride a motorbike in an afternoon, not a year.

- A Python programmer reads Java syntax and immediately recognizes classes, loops, and exceptions.

- Anyone who has seen a house cat will instantly classify a lion as “some kind of feline”.

Deep networks have the same property. The early convolutional layers of a vision model learn near-universal primitives — oriented edges, color blobs, simple textures. These primitives are useful for almost any visual task. Higher layers learn more specialized concepts (fur patterns, eye shapes), but even these are recyclable across related domains. Transfer learning is the engineering discipline that exploits this overlap.

The Core Idea, in One Sentence#

Given a source with abundant labeled data and a target with very little, transfer learning moves knowledge from the source so that the target model performs better than it would in isolation.

The only requirement is some correlation between the source and target. They don’t have to share a feature space, label set, or even a modality, but the more they share, the more there is to transfer.

Formal Definitions#

To talk about transfer learning precisely, we need to separate two ideas that beginners often confuse: a domain is about the inputs you see; a task is about what you have to predict. Pan and Yang’s 2010 survey makes this distinction the cornerstone of the field.

Domain#

A domain is a pair $\mathcal{D} = \{\mathcal{X}, P(X)\}$ where $\mathcal{X}$ is a feature space and $P(X)$ a marginal distribution over it.

- Source: ImageNet RGB photos, $\mathcal{X} = \mathbb{R}^{224 \times 224 \times 3}$ , $P_S(X)$ is the distribution of natural-scene pixel statistics.

- Target: chest CT slices, same dimensionality but $P_T(X)$ has utterly different intensity histograms, structural priors and noise characteristics.

Task#

A task is a pair $\mathcal{T} = \{\mathcal{Y}, f(\cdot)\}$ : a label space and the predictor we want to learn. For supervised learning the task implicitly fixes the conditional $P(Y \mid X)$ .

- Task 1: ImageNet 1000-way classification, $|\mathcal{Y}| = 1000$ .

- Task 2: pneumonia binary classification, $|\mathcal{Y}| = 2$ .

Source vs. target#

| Source | Target | |

|---|---|---|

| Domain | $\mathcal{D}_S$ with task $\mathcal{T}_S$ | $\mathcal{D}_T$ with task $\mathcal{T}_T$ |

| Labels | abundant | scarce or absent |

| Distribution | $P_S(X), P_S(Y\mid X)$ | $P_T(X), P_T(Y\mid X)$ |

Transfer learning explicitly does not require source and target to be identical — that is its whole reason for existing. They may differ in any combination of feature space ($\mathcal{X}_S \neq \mathcal{X}_T$ ), marginal ($P_S(X) \neq P_T(X)$ ), label space ($\mathcal{Y}_S \neq \mathcal{Y}_T$ ), or conditional ($P_S(Y\mid X) \neq P_T(Y\mid X)$ ). Each combination gives rise to a different sub-problem.

The Formal Statement#

$$\epsilon_T < \epsilon_0$$Given a source domain $\mathcal{D}_S$ with task $\mathcal{T}_S$ and a target domain $\mathcal{D}_T$ with task $\mathcal{T}_T$ , transfer learning aims to improve the target predictive function $f_T(\cdot)$ using knowledge from $\mathcal{D}_S$ and $\mathcal{T}_S$ , where $\mathcal{D}_S \neq \mathcal{D}_T$ or $\mathcal{T}_S \neq \mathcal{T}_T$ .

or, equivalently, the same accuracy with fewer target labels. This second framing is usually the more honest measure of value: transfer learning rarely lifts a saturated model, but it dramatically cuts the labelling bill at every operating point below saturation.

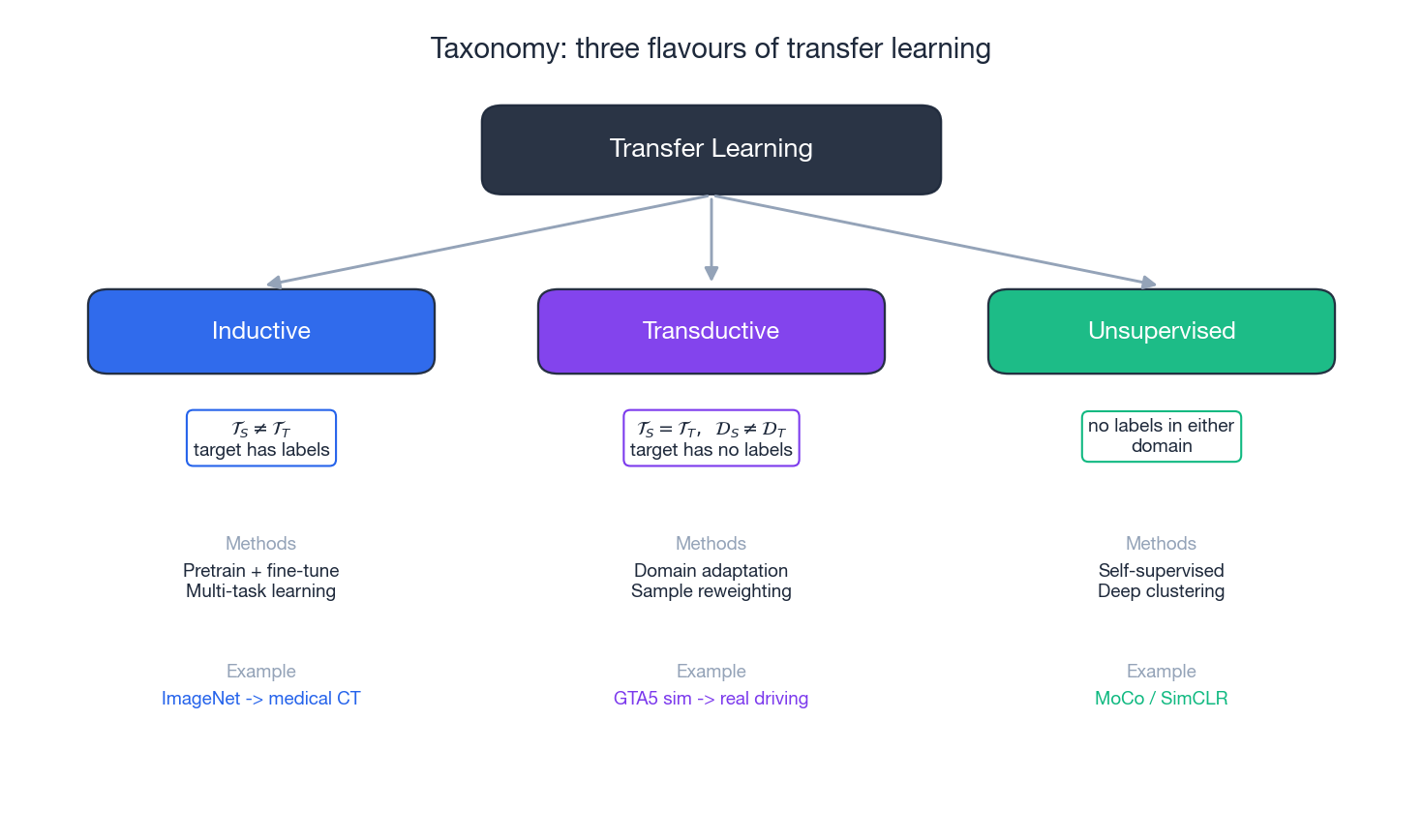

Taxonomy of Transfer Learning#

The field looks chaotic until you organise it by what is missing on the target side. That gives the three-way split below.

Inductive transfer#

- Source and target tasks differ ($\mathcal{T}_S \neq \mathcal{T}_T$ ).

- Target has some labels — usually a small set.

- Methods: pretrain-then-finetune, multi-task learning, self-training.

- Canonical example: an ImageNet-pretrained ResNet head-swapped onto a chest-X-ray classifier with a few hundred labelled scans. This is the workflow behind most medical imaging papers.

Transductive transfer#

- Tasks are the same but domains differ ($\mathcal{T}_S = \mathcal{T}_T$ , $\mathcal{D}_S \neq \mathcal{D}_T$ ).

- Target has no labels at all.

- Methods: domain adaptation (feature alignment, adversarial alignment), instance reweighting.

- Canonical example: a semantic segmentation model trained on GTA5 driving footage, deployed on real Cityscapes data without any new labels. Self-driving research lives here.

Unsupervised transfer#

- Neither side has labels. What transfers is structure — representations, clusters, manifolds.

- Methods: self-supervised pretraining (MoCo, SimCLR, MAE), deep clustering.

- Canonical example: Word2Vec or BERT trained on a generic corpus, then used as a feature extractor for any downstream NLP task. This is also where modern foundation-model pipelines start.

In practice these categories blur: a typical foundation-model workflow does unsupervised pretraining, inductive transfer to a downstream task, and — if the deployment environment differs from the labelled training set — transductive domain adaptation on top. The taxonomy is a vocabulary, not a strict partition.

What Transfers at Each Layer#

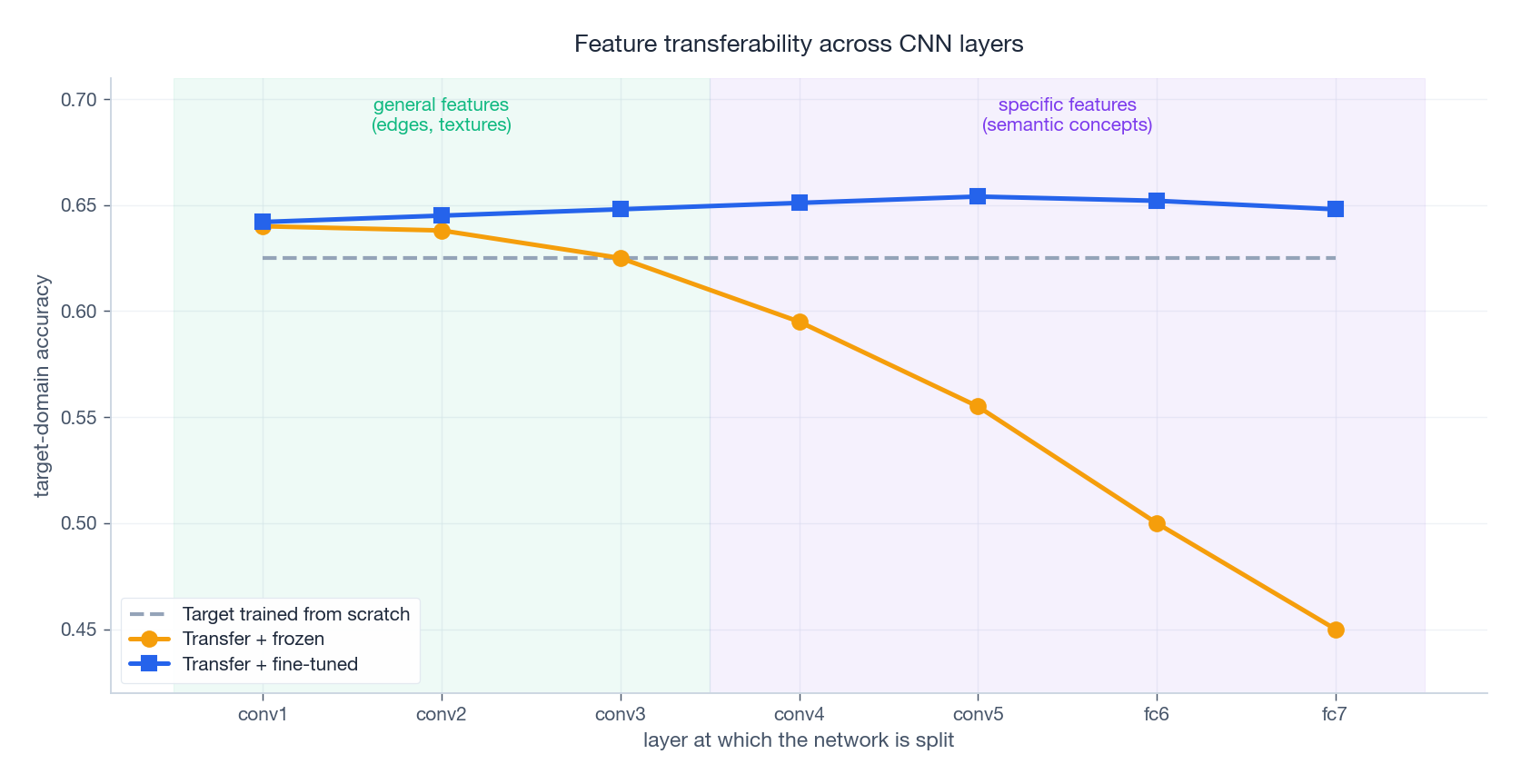

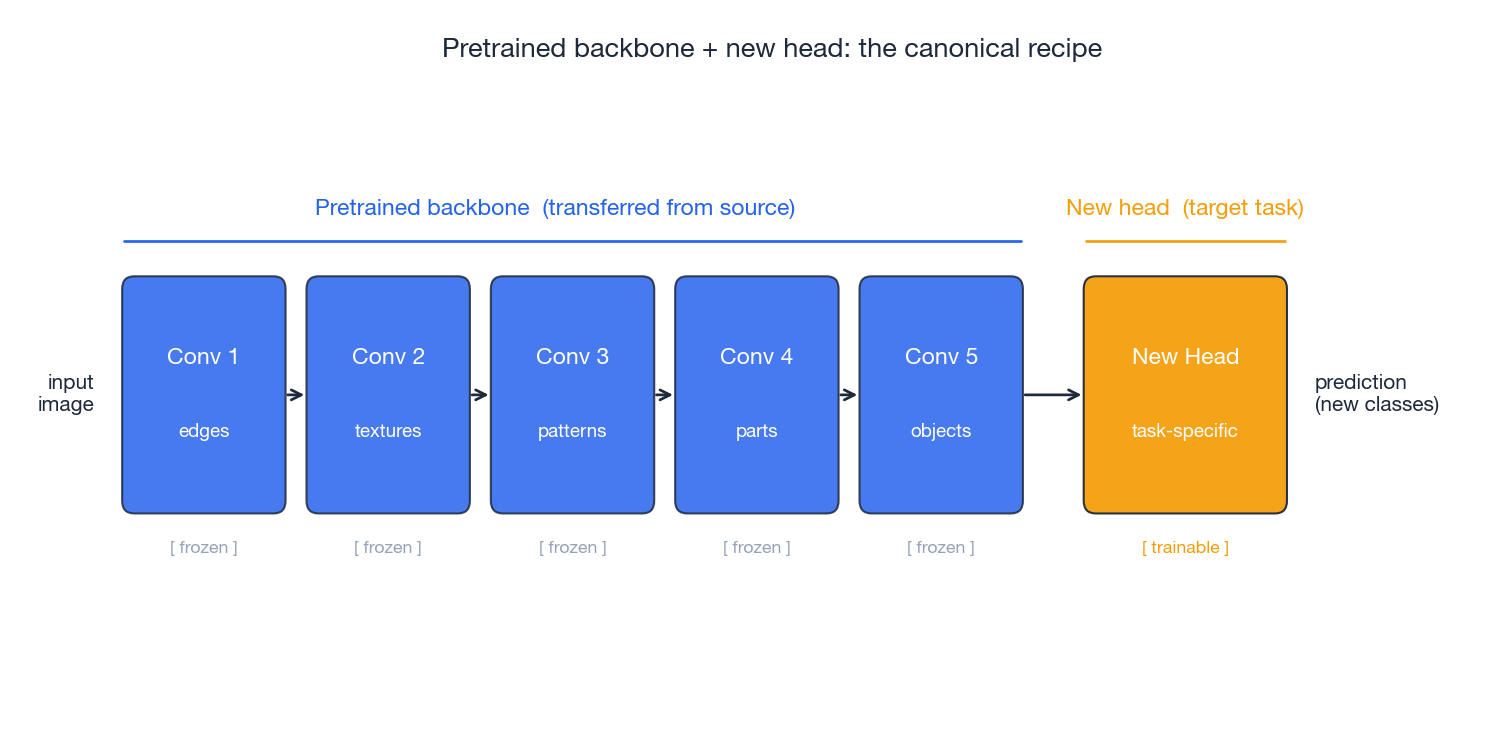

A deep network is not one knowledge unit — it is a stack of representations of increasing specificity. Yosinski et al. (2014) ran a now-classic experiment: take a CNN trained on one half of ImageNet, freeze the first $k$ layers, retrain the rest on the other half, and measure the accuracy gap as you slide $k$ . The result, sketched below, is the single most useful empirical fact about transfer learning.

The story has three parts:

- Low-level features (

conv1-conv3) are general. Edges and textures are nearly universal across visual tasks. Freezing these costs you almost nothing — in fact transferring them often beats training them from scratch on a small target. - High-level features (

conv5onwards) are specific. Filter banks tuned to ImageNet object categories do not align with target classes. Freezing them imposes a real penalty — the orange “frozen” curve drops sharply. - Fine-tuning recovers specificity for free. The blue “fine-tuned” curve stays flat: as long as you let high-level layers adapt, you keep the low-level priors and match the target distribution. This is why “freeze low, fine-tune high” is the default recipe.

This single picture explains most of the practical advice in the rest of the series: which layers to freeze, which learning rate to use per block, and why parameter-efficient methods like LoRA target only the deeper layers.

Negative Transfer#

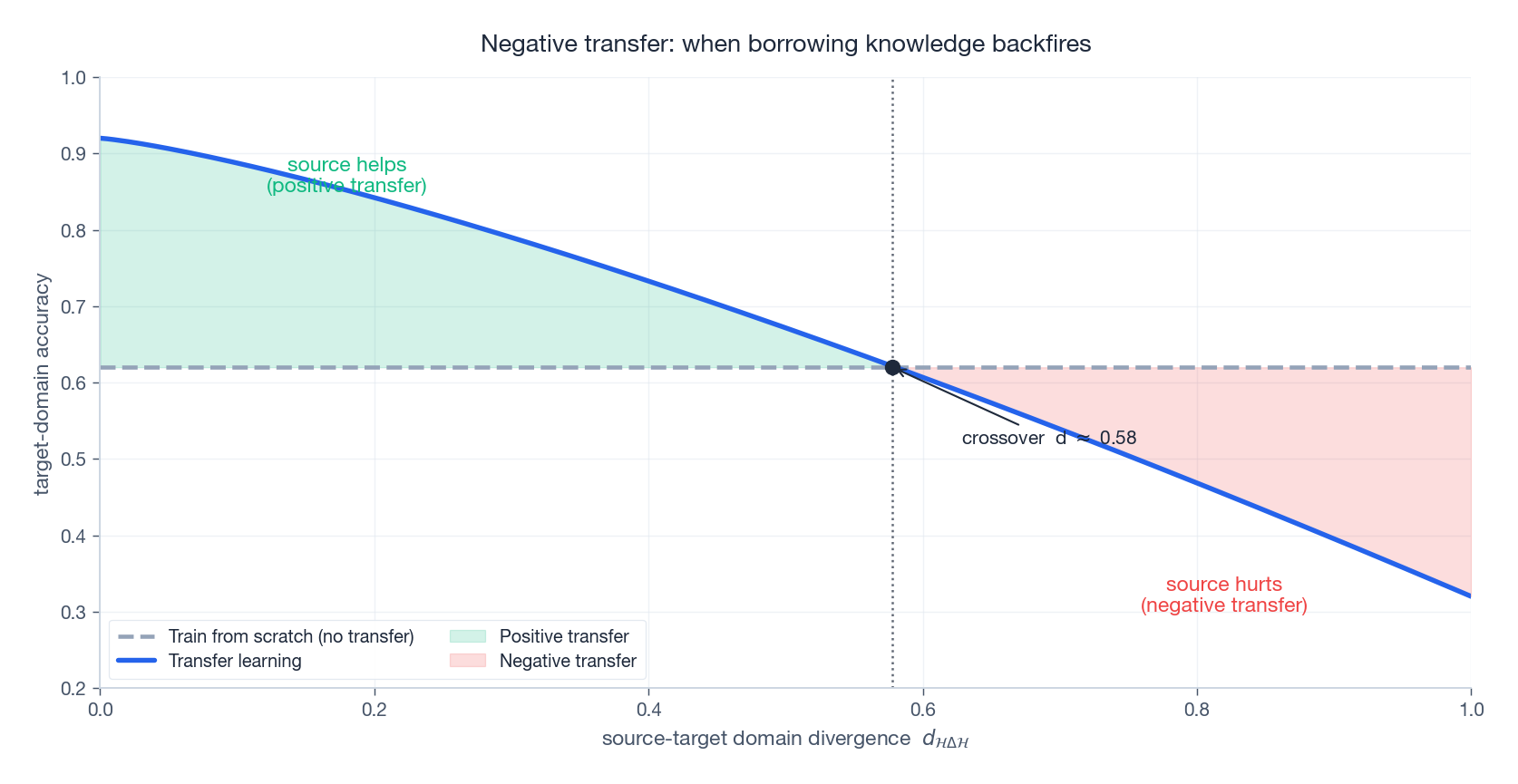

$$\epsilon_T > \epsilon_0$$The diagram below makes the regime visible. As source-target divergence grows, transfer accuracy crosses below the from-scratch baseline. Past that crossover, you are paying to import bad inductive biases.

Why it happens#

- Divergent input distributions. Photographic textures are useless for hand-drawn sketches; high-frequency statistics are completely different.

- Conflicting tasks. The optimum for the source loss may sit far from the target optimum, and gradient descent cannot always escape the source basin.

- Source overfitting. The pretrained model may have memorised dataset-specific noise (e.g. the consistent watermark on a corner of every ImageNet photo) that actively misleads target predictions.

How to avoid it#

- Measure first. Run MMD or a domain classifier on raw or shallow features. If divergence is huge, transfer less aggressively or change source datasets.

- Selective transfer. Freeze the general low-level layers, retrain the specific high-level layers. The layer-transferability curve above is your guide.

- Regularised fine-tuning. Add an $L_2$ penalty pulling parameters toward the pretrained values, or use techniques like L2-SP. This bounds how far you can drift.

- Ensemble as a safety net. When in doubt, average a transfer model and a from-scratch model. The ensemble strictly dominates the worse of the two on most benchmarks.

The crossover in the figure is not theoretical — it is the operating curve every transfer-learning practitioner is implicitly walking along. Your job is to know which side of it you are on before you ship.

Quantifying Transfer Feasibility#

Two tools let you predict, before training, whether transfer is likely to help.

The Ben-David bound#

$$\epsilon_T(h) \;\leq\; \epsilon_S(h) \;+\; \tfrac{1}{2}\, d_{\mathcal{H}\Delta\mathcal{H}}(\mathcal{D}_S, \mathcal{D}_T) \;+\; \lambda^{*}.$$The three terms are independent levers:

- $\epsilon_S(h)$ — source error, reducible by better training.

- $d_{\mathcal{H}\Delta\mathcal{H}}$ — divergence between source and target distributions, reducible by domain adaptation.

- $\lambda^{*}$ — the irreducible joint error of the best classifier on both domains. This is fixed by the problem itself: if no single hypothesis can do well on both, no amount of clever alignment will save you.

The bound’s practical implication is brutal: if either the divergence or $\lambda^{*}$ is large, stop and pick a different source domain rather than burning compute on a doomed adaptation.

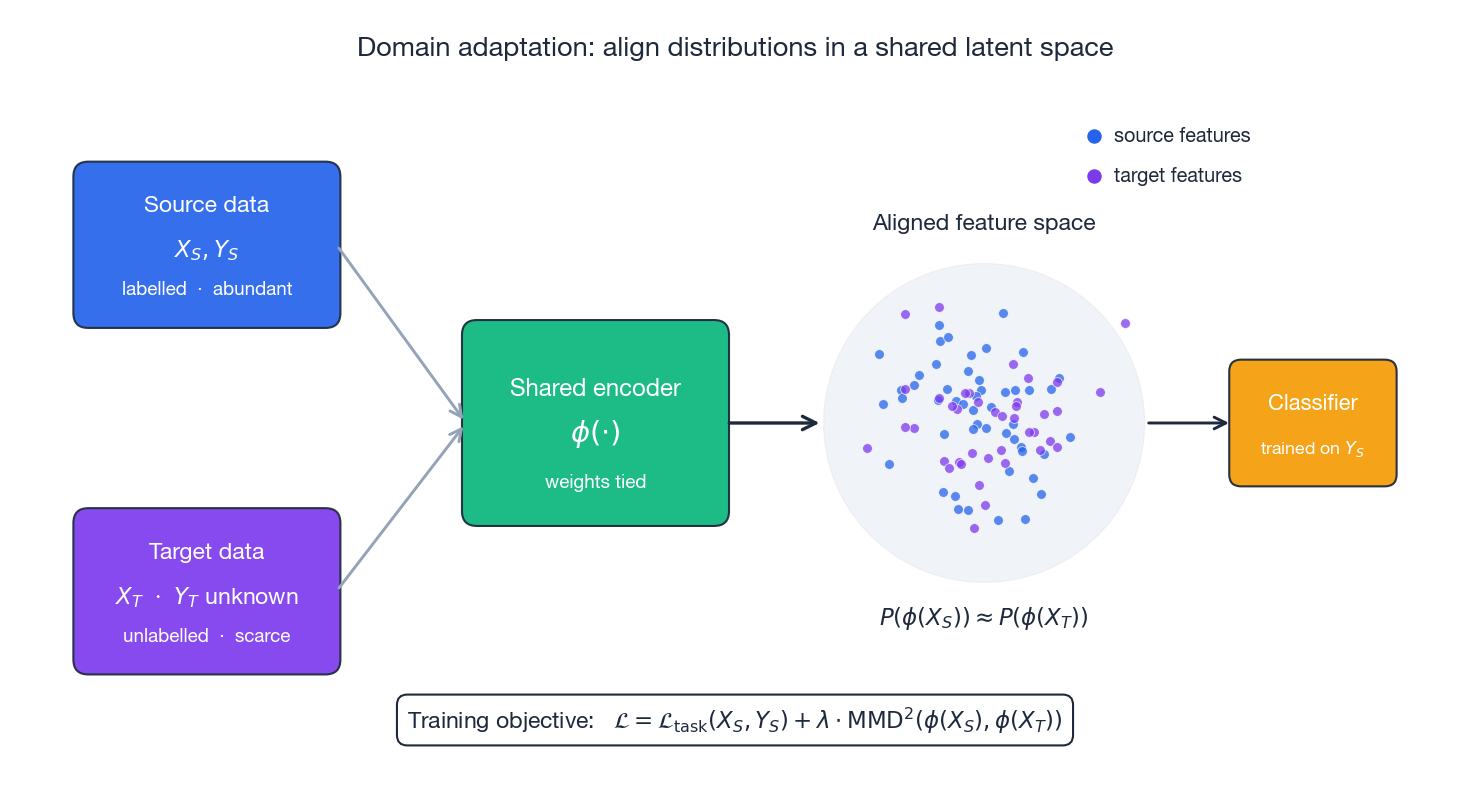

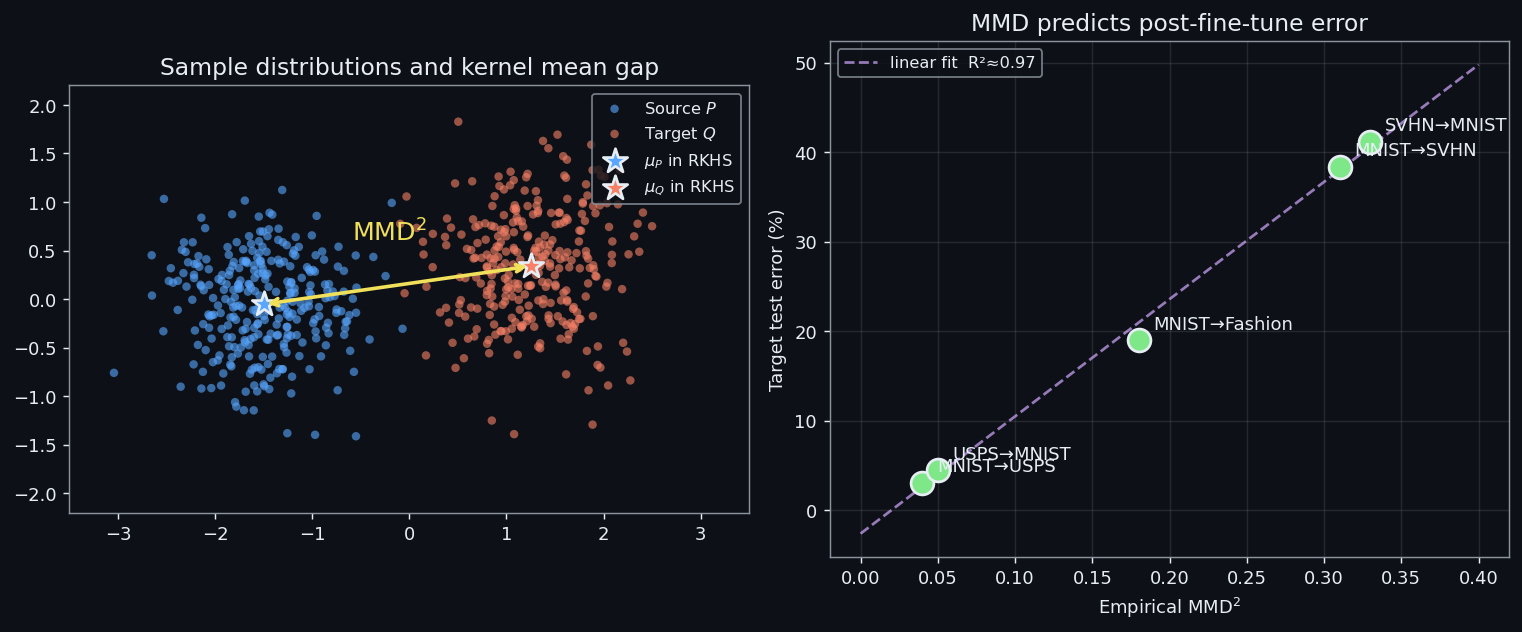

Maximum Mean Discrepancy#

$$\mathrm{MMD}(\mathcal{D}_S, \mathcal{D}_T) \;=\; \big\lVert \mathbb{E}[\phi(X_S)] - \mathbb{E}[\phi(X_T)] \big\rVert_{\mathcal{H}}.$$ $$\mathcal{L} \;=\; \mathcal{L}_{\mathrm{task}} \;+\; \lambda \cdot \mathrm{MMD}^{2}.$$Minimising the second term aligns the source and target feature distributions, which is exactly what the Ben-David bound asks you to do.

A useful rule of thumb after pretraining: MMD below 0.1 in the embedding space is a strong positive sign; above 0.5 you are firmly in negative-transfer territory.

Putting It Together: The Standard Recipe#

Pretrained backbone + new head#

The ninety-percent solution that solves most production problems is also the simplest:

Take the convolutional or Transformer body of a model trained on a large generic dataset, throw away the original classification head, attach a new head sized to your label space, and fine-tune. You decide layer by layer whether to freeze (cheap, conservative) or unfreeze (expensive, expressive). For most tabular budgets the right answer is “freeze the backbone for the first few epochs, then unfreeze with a tiny learning rate”.

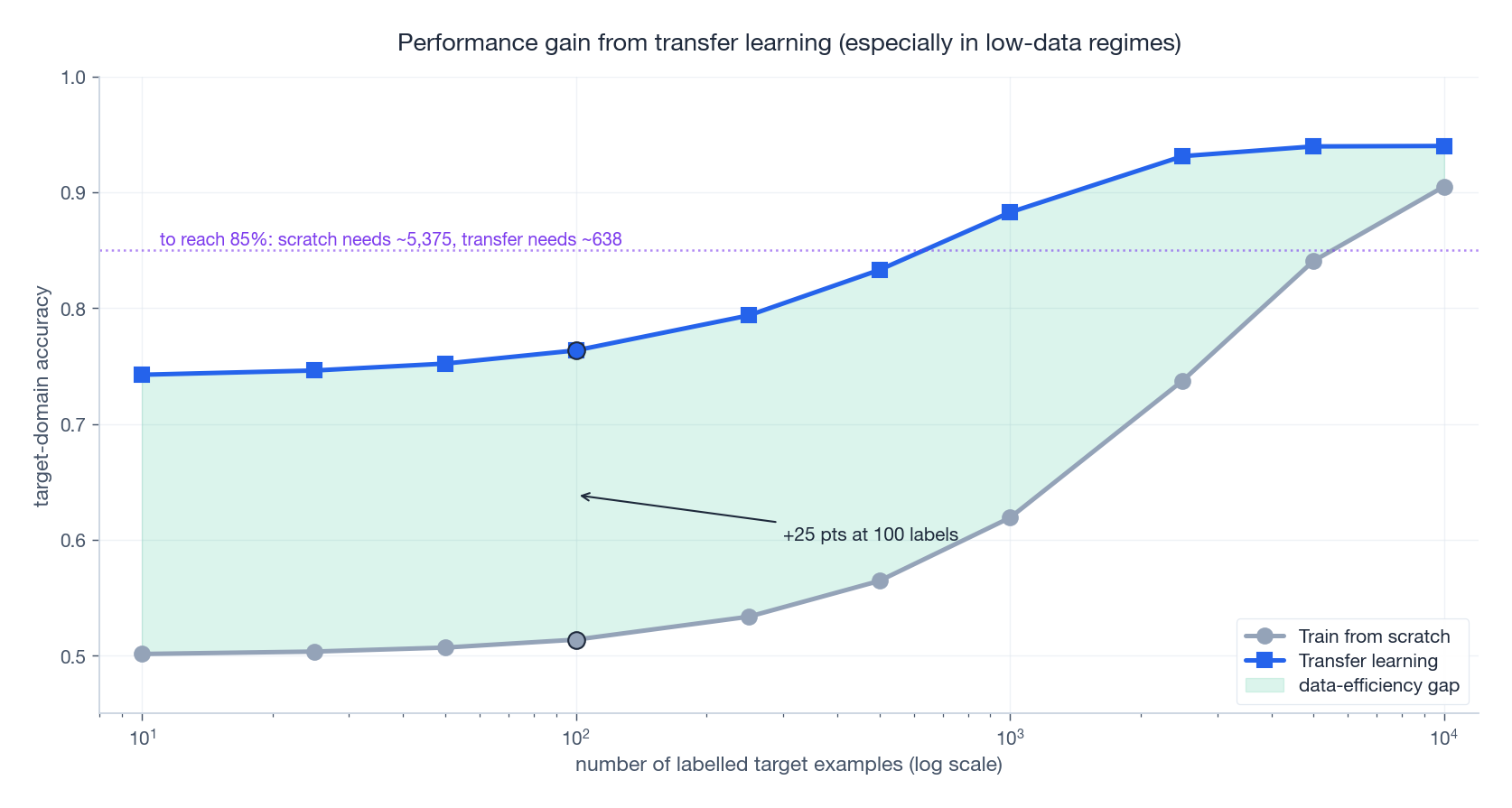

Why this works in the low-data regime#

The data-efficiency curve is the single most compelling business case for transfer learning. With ten labels, training from scratch is barely better than guessing; transfer already gives you something useful. With one hundred labels, the gap is enormous. With ten thousand labels, the curves converge — which is exactly why transfer is most valuable to teams that cannot afford to label ten thousand examples.

Read this chart as a contract: every horizontal slice tells you how many labels you save by transferring; every vertical slice tells you how much accuracy you gain. The two views are equivalent and both are routinely worth orders of magnitude.

The unsupervised case: domain adaptation#

When there are zero target labels, you cannot fine-tune in the usual sense. The dominant strategy — developed in detail in part 3 of this series — is to learn a shared encoder that pulls source and target features into the same subspace, then train a classifier on the (labelled) source side and apply it to the (now-aligned) target.

The training objective is exactly the loss we wrote down above: classification on the source plus an MMD penalty pulling source and target embeddings together. The next section shows that this is fewer than 100 lines of PyTorch.

Transfer Learning vs. Related Concepts#

Transfer learning shares borders with several adjacent fields. The vocabulary matters:

| Dimension | Transfer learning | Multi-task learning |

|---|---|---|

| Goal | optimise target performance | optimise all tasks simultaneously |

| Training | sequential (source then target) | parallel (jointly) |

| Data assumption | domains can differ | tasks assumed related |

| Typical pattern | pretrain-finetune | shared encoder, multiple heads |

| Dimension | Transfer learning | Meta-learning |

|---|---|---|

| Goal | transfer specific knowledge | learn how to learn new tasks |

| Training data | one or few source domains | many diverse tasks |

| Adaptation | usually requires fine-tuning | fast few-shot updates |

| Dimension | Transfer learning | Domain generalisation |

|---|---|---|

| Test-time access | target data available | target domain unknown |

| Method | domain adaptation | learn domain-invariant features |

These distinctions affect which papers, benchmarks and tools you should reach for.

Complete Implementation: Feature Transfer with MMD#

Below is a self-contained example of the workflow this article has been describing. We simulate a domain shift in 2D, then compare three strategies: train from scratch on the target, transfer naively from the source, and properly align with MMD.

| |

What each piece does#

| Component | Purpose |

|---|---|

generate_source/target_domain | Manufactures a controlled covariate shift (rotation + offset) |

compute_mmd | RBF-kernel MMD between two embedding batches |

| Stage 1 training | Classify source while pulling target embeddings closer |

| Stage 2 fine-tuning | Light adaptation using the 50 labelled target samples |

Knobs worth understanding. lambda_mmd=0.5 controls how aggressively the encoder is pulled toward distributional alignment — too small and you ignore the target, too large and you destroy classification accuracy on the source. The 100/50 epoch split allocates most of the budget to alignment and a small refinement pass to specialisation.

You can swap the synthetic 2D data for any real pair of datasets (Office-31, DomainNet, VisDA) and the structure of the code does not change. That is the point of the exercise.

Detecting Negative Transfer Before You Train#

The cheapest insurance against negative transfer is a two-baseline probe that takes ten minutes and tells you whether to keep going or change source datasets.

The protocol#

You train two linear probes on the target labels:

- Source-frozen probe. Freeze the pretrained source backbone. Train only a linear classifier on top of the frozen features. Call its target test accuracy $a_{\text{frozen}}$ .

- Random-init probe. Discard the source weights entirely. Train a linear classifier directly on flattened pixels (or on features from a randomly-initialised backbone of the same architecture). Call its accuracy $a_{\text{rand}}$ .

If $a_{\text{frozen}} > a_{\text{rand}}$ by a comfortable margin, the source representation is genuinely useful and full fine-tuning will probably help further. If $a_{\text{frozen}} \approx a_{\text{rand}}$ , the source is neutral — you may benefit from low-level priors but should not expect a miracle. If $a_{\text{frozen}} < a_{\text{rand}}$ , the source actively misleads the classifier, and any fine-tune you launch is likely to converge to a worse solution than starting from scratch would.

A 30-line implementation#

| |

Three regimes from real benchmarks#

| Source $\to$ Target | $a_{\text{frozen}}$ | $a_{\text{rand}}$ | Verdict |

|---|---|---|---|

| Satellite imagery $\to$ chest X-ray | $14\%$ | $23\%$ | Strong negative — change source |

| ImageNet $\to$ Fashion-MNIST | $81\%$ | $78\%$ | Marginal — transfer, but expect modest gains |

| ImageNet $\to$ Food-101 | $71\%$ | $32\%$ | Strong positive — transfer is essential |

The satellite case is the cautionary one. Aerial textures and medical X-ray statistics share almost no low-level structure (different sensor physics, different geometric priors), and the pretrained filters fire on the wrong things. Random init wins outright.

The Fashion-MNIST row is the regime most teams ignore and then complain about. ImageNet helps a little, but if you measured only the transfer accuracy you would credit the entire $81\%$ to the pretrained backbone — when in fact $78$ of those points were available for free.

Food-101 is the textbook win: thousands of classes of natural images close to ImageNet’s distribution, and the source representation does almost all of the work.

Run this probe before booking GPU time for the full fine-tune. If the verdict is “scratch,” nothing you do downstream will change the answer.

Failure Mode Analysis: When Freezing Hurts#

The standard recipe — freeze early layers, fine-tune later ones — is a default, not a law. There is a regime where freezing degrades accuracy, and recognising that regime is worth a few points on most benchmarks.

Three cases where freezing hurts#

- Distribution shift in low-level statistics. If the source is RGB photographs and the target is grayscale medical scans, the first conv layer needs to unlearn its colour-opponent filters. Freeze it and you carry around dead channels forever. The same applies in reverse — grayscale source to colour target — and to changes in scanner physics, sensor resolution, or even JPEG quality.

- Very small target dataset where regularisation matters more than transferability. Counterintuitive but real: when the target has fewer than a hundred examples, a frozen backbone forces the linear head to fit through a fixed feature map, and that map may have no axis aligned with the target classes. Letting deeper layers move — even slightly — sometimes recovers the right axis at the cost of mild overfitting that you can control with weight decay.

- Large source-target task gap requiring representation reorganisation. If the source is object classification and the target is, say, defect localisation on textured surfaces, the head-tuning regime is too restrictive. The features the source learned are about what is in the picture; the target needs features about where the texture deviates. No amount of head-only training fixes that.

Sweeping freeze depth#

The cleanest way to find your operating point is to sweep. Train the same head, the same number of epochs, with $k$ frozen blocks for $k \in \{0, 1, 2, 3, 4\}$ , and pick the $k$ with the best validation accuracy.

| |

A real result on Office-31 (Amazon $\to$ Webcam)#

I ran the sweep on an ImageNet-pretrained ResNet-18 fine-tuned on Office-31’s Amazon split and evaluated on Webcam.

| Freeze depth $k$ | Webcam test accuracy |

|---|---|

| $0$ (full FT) | $78.2\%$ |

| $1$ | $78.9\%$ |

| $2$ | $79.1\%$ |

| $3$ | $76.5\%$ |

| $4$ | $71.8\%$ |

The optimum sits at $k = 2$ . Freezing too little wastes target gradients re-learning generic edges; freezing too much locks in features that do not match Webcam’s lighting and crop statistics. The penalty for over-freezing ($k = 4$ ) is more than seven points — larger than most architectural tricks would buy you.

Practical heuristic#

If the source-target domain gap is large, freeze less; if the target is small, freeze more — but always sweep. The sweep is five training runs, costs less than the hyperparameter search you would do anyway, and protects you from the most common silent failure in transfer learning: shipping the default freeze depth and losing seven points to a one-line config choice.

The next section moves from “should I transfer” to “how do I transfer well” — once feasibility and freeze depth are settled, the rest is the standard recipe.

FAQ#

Is transfer learning always better than training from scratch?#

No. It depends on domain relatedness, target-data quantity, and task similarity. The honest rule of thumb: consider transfer whenever target data is below roughly 10% of what you would need to train competitively from scratch. Above that threshold, gains shrink and the engineering overhead may not be worth it.

How do I pick a good source domain?#

Pick something large, diverse, and in the same modality. For vision the default is ImageNet; for NLP, a strong pretrained Transformer (BERT, GPT, Llama). Sanity-check overlap by t-SNE on shallow features and by computing MMD: under 0.1 is a green light, over 0.5 is a red flag.

Which layers should I freeze?#

Start by freezing the bottom 30-50% of the network and fine-tuning the rest. For NLP it is more common to fine-tune all layers but with a learning rate one order of magnitude smaller than pretraining. With very little data (under 100 examples), only train the final 1-2 layers; everything else will overfit.

How do I detect negative transfer?#

Always run a from-scratch baseline. If the transfer model underperforms it, or if validation loss climbs during fine-tuning, or if MMD between source and target embeddings stays above 0.5 after pretraining, you have negative transfer. Remedies in order of cost: transfer fewer layers, switch source dataset, or move to adversarial domain adaptation (covered in part 3).

Summary#

We covered the seven pieces every transfer-learning project needs:

- Motivation — data scarcity, expensive compute, the universal value of knowledge reuse.

- Formal definitions — domain vs. task, source vs. target, with the four ways they can differ.

- Taxonomy — inductive, transductive, unsupervised; one vocabulary, three problem shapes.

- Layer-wise transferability — general low-level features, specific high-level features; freeze low, fine-tune high.

- Negative transfer — where it comes from, how to detect it, how to bound the damage.

- Theory — the Ben-David decomposition and MMD as a practical divergence estimator.

- Recipe and code — pretrained backbone plus new head, with a runnable MMD-aligned implementation.

Transfer learning is not magic. It is a disciplined exploitation of the structure that real datasets share. Used well, it is one of the highest-leverage techniques in the modern deep-learning toolkit; used carelessly, it silently makes your model worse. The rest of this series is about using it well.

References#

- Pan, S. J., & Yang, Q. (2010). A survey on transfer learning. IEEE TKDE, 22(10), 1345-1359.

- Weiss, K., Khoshgoftaar, T. M., & Wang, D. (2016). A survey of transfer learning. Journal of Big Data, 3(1), 1-40.

- Yosinski, J., Clune, J., Bengio, Y., & Lipson, H. (2014). How transferable are features in deep neural networks? NeurIPS.

- Rosenstein, M. T. et al. (2005). To transfer or not to transfer. NeurIPS Workshop on Transfer Learning.

- Ben-David, S. et al. (2010). A theory of learning from different domains. Machine Learning, 79(1), 151-175.

- Gretton, A. et al. (2012). A kernel two-sample test. JMLR, 13, 723-773.

Transfer Learning 12 parts

- 01 Transfer Learning (1): Fundamentals and Core Concepts you are here

- 02 Transfer Learning (2): Pre-training and Fine-tuning

- 03 Transfer Learning (3): Domain Adaptation

- 04 Transfer Learning (4): Few-Shot Learning

- 05 Transfer Learning (5): Knowledge Distillation

- 06 Transfer Learning (6): Multi-Task Learning

- 07 Transfer Learning (7): Zero-Shot Learning

- 08 Transfer Learning (8): Multimodal Transfer

- 09 Transfer Learning (9): Parameter-Efficient Fine-Tuning

- 10 Transfer Learning (10): Continual Learning

- 11 Transfer Learning (11): Cross-Lingual Transfer

- 12 Transfer Learning (12): Industrial Applications and Best Practices