Transfer Learning (3): Domain Adaptation

A practical guide to domain adaptation: covariate shift, label shift, DANN with gradient reversal, MMD alignment, CORAL, self-training, AdaBN, and a complete DANN implementation.

Your autonomous-driving stack works perfectly on sunny California freeways. Then it rains in Seattle. Top-1 accuracy drops from 95% to 70%. The model did not get worse — the data distribution shifted, and your training set never told it what wet asphalt looks like at dusk.

This is the everyday problem of domain adaptation: you have abundant labelled data in one distribution (the source) and unlabelled data in another (the target), and you need the model to perform on the target. This article shows you how, from first-principles theory to a working DANN implementation.

What You Will Learn#

- Three flavours of distribution shift — covariate, label, concept — and how each is fixed

- The Ben-David bound: why adaptation is possible, and the precise quantity it lets you reduce

- DANN: adversarial alignment with the gradient reversal layer, in one backward pass

- MMD and CORAL: explicit, non-adversarial distribution-matching losses

- Self-training, AdaBN, CycleGAN, ADDA — the rest of the modern toolbox

- A complete DANN implementation in PyTorch

- A decision tree for picking a method, plus benchmark numbers on Office-31 and DomainNet

Prerequisites: Parts 1–2 of this series, basic familiarity with GAN-style adversarial training.

Three Faces of Distribution Shift#

A domain is a feature space $\mathcal{X}$ with a marginal distribution $P(X)$. A task is a label space $\mathcal{Y}$ with a conditional distribution $P(Y \mid X)$. Domain adaptation studies what happens when the source and target disagree on at least one of these distributions.

| Setting | Source | Target | Goal |

|---|---|---|---|

| Source domain $\mathcal{D}_S$ | many labelled $(x_i, y_i)$ | — | — |

| Target domain $\mathcal{D}_T$ | — | mostly unlabelled $x_j$ | learn $f: \mathcal{X} \to \mathcal{Y}$ that works on $\mathcal{D}_T$ |

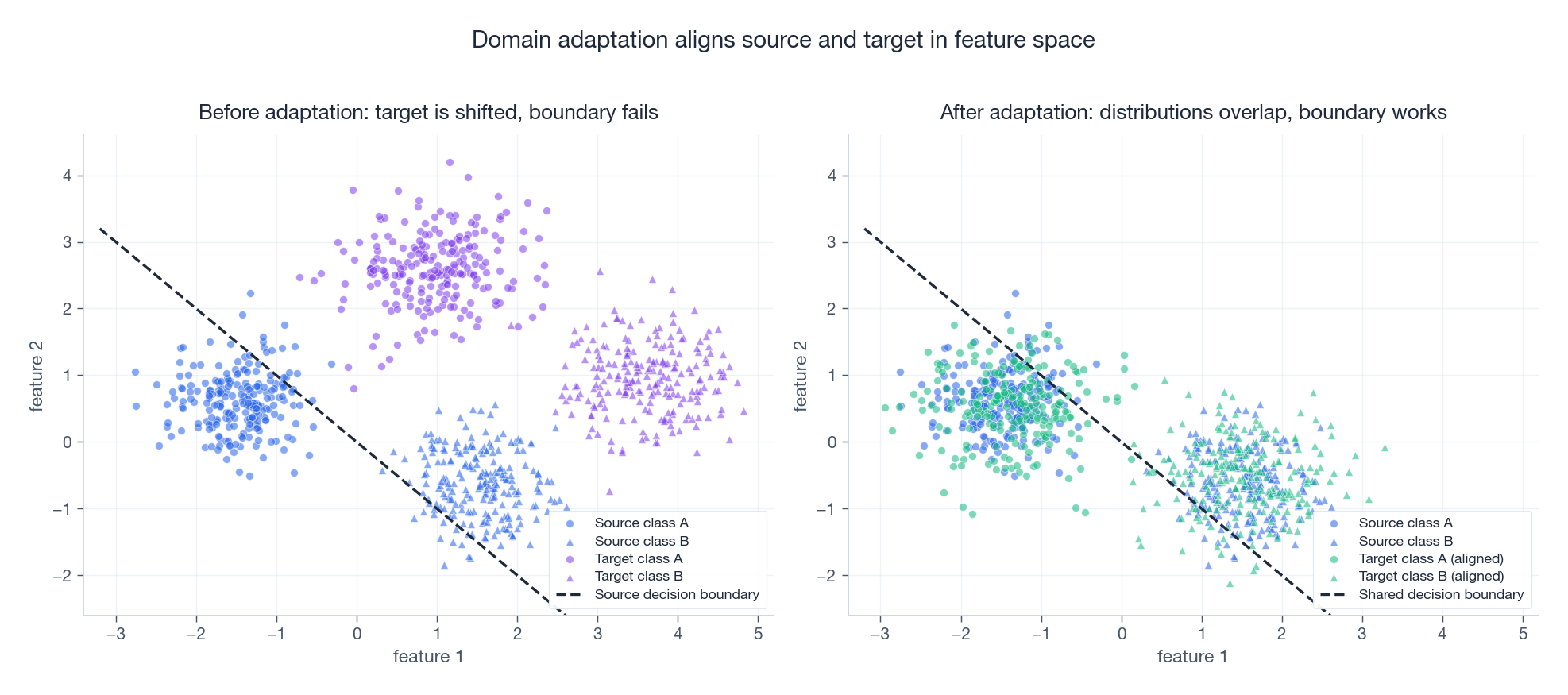

The figure is the entire game in one picture: before adaptation, the source-trained boundary slices through empty target space; after adaptation, both domains share a feature manifold and the same boundary works.

Covariate shift — the input distribution moved#

$$P_S(X) \neq P_T(X), \qquad P_S(Y \mid X) = P_T(Y \mid X)$$

The labelling rule is unchanged; only what you observe is different. Examples:

- A spam filter trained on 2020 email and deployed in 2026: topics drift, but spam is still spam.

- CT scans from a Siemens scanner used to evaluate scans from a GE machine: the imaging characteristics differ, but radiologists score them the same way.

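Standard fix. Reweight each source sample by the density ratio $w(x) = P_T(x) / P_S(x)$ (importance weighting). Estimating the two densities separately is hopeless in high dimensions, so practitioners estimate the ratio directly with KLIEP, uLSIF, or a probabilistic classifier: a well-calibrated source-vs-target classifier gives you the ratio almost for free.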

Label shift — the prevalence moved#

$$P_S(Y) \neq P_T(Y), \qquad P_S(X \mid Y) = P_T(X \mid Y)$$

Class-conditional appearance is unchanged; only base rates differ. Examples:

- An ICU model deployed in outpatient clinics where disease prevalence is much lower.

- A recommender trained on a young-skewing pilot, deployed across all age cohorts.

Standard fix. Estimate the target prior $P_T(Y)$ from unlabelled target data, either by EM on the source model's predicted posteriors or with confusion-matrix estimators such as BBSE / RLLS, then rescale each source-trained probability by $P_T(y) / P_S(y)$ and renormalise.
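The rescale-and-renormalise step, plus a simple EM loop for the target prior, can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: the function names `correct_for_label_shift` and `estimate_target_prior_em` are hypothetical, and the EM loop assumes the source model's posteriors are reasonably calibrated.

```python
import numpy as np


def correct_for_label_shift(probs, source_prior, target_prior):
    """Rescale source-trained posteriors by P_T(y) / P_S(y) and renormalise."""
    probs = np.asarray(probs, dtype=float)
    ratio = np.asarray(target_prior, dtype=float) / np.asarray(source_prior, dtype=float)
    adjusted = probs * ratio
    return adjusted / adjusted.sum(axis=1, keepdims=True)


def estimate_target_prior_em(probs, source_prior, n_iter=100, tol=1e-8):
    """EM estimate of P_T(Y) from source-model posteriors on unlabelled target data."""
    source_prior = np.asarray(source_prior, dtype=float)
    prior = source_prior.copy()  # start from the source prior
    for _ in range(n_iter):
        # E-step: posteriors under the current guess of the target prior
        posteriors = correct_for_label_shift(probs, source_prior, prior)
        # M-step: the new prior is the average posterior
        new_prior = posteriors.mean(axis=0)
        converged = np.abs(new_prior - prior).max() < tol
        prior = new_prior
        if converged:
            break
    return prior


# Toy check: one sample predicted as class 0, three as class 1
toy_probs = np.array([[1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0]])
estimated_prior = estimate_target_prior_em(toy_probs, source_prior=[0.5, 0.5])
corrected = correct_for_label_shift([[0.5, 0.5]], [0.5, 0.5], [0.1, 0.9])
```

With perfectly confident toy predictions the EM estimate simply recovers the predicted class frequencies; on real data the estimate is only as good as the model's calibration.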

Concept shift — the rule itself moved#

$$P_S(Y \mid X) \neq P_T(Y \mid X)$$

This is the hard case. “Sick” is positive in a music review and negative in a product review even though the word is identical. With no target labels at all, no method can untangle this: concept shift demands at least a few labelled target examples (the semi-supervised DA setting).

Theory: the Ben-David Bound#

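For any hypothesis $h$ in the hypothesis class $\mathcal{H}$, the target error is bounded by three terms:

$$ \epsilon_T(h) \;\leq\; \epsilon_S(h) \;+\; \tfrac{1}{2}\, d_{\mathcal{H}\Delta\mathcal{H}}(\mathcal{D}_S, \mathcal{D}_T) \;+\; \lambda^{*}. $$

| Term | Meaning | What you can do about it |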

|---|---|---|

| $\epsilon_S(h)$ | source-domain error | train better on the source |

| $d_{\mathcal{H}\Delta\mathcal{H}}$ | symmetric-difference divergence between domains | this is what domain adaptation reduces |

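| $\lambda^{*}$ | combined source and target error of the best single hypothesis, $\min_{h \in \mathcal{H}} [\epsilon_S(h) + \epsilon_T(h)]$ | irreducible by alignment; if it is large, no method will save you |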

Two takeaways:

- Adaptation is bounded by an oracle. If source and target tasks are fundamentally different ($\lambda^*$ large), you are out of luck — you need new labels, not a fancier loss.

- Domain divergence has a tractable proxy. Train a binary classifier to distinguish source from target features. If it gets near 50% accuracy, your features are domain-invariant. This is exactly the mechanism DANN automates.
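One common way to turn the domain classifier's held-out error $\epsilon$ into a single divergence number is the proxy A-distance, $2(1 - 2\epsilon)$. The helper below is a small sketch of that conversion; the function name is hypothetical, and clipping errors above 0.5 to chance level is an assumption made here for convenience.

```python
def proxy_a_distance(domain_classifier_error):
    """Proxy A-distance 2 * (1 - 2 * err) from a held-out domain-classifier error rate."""
    # Assumption: an error above 0.5 is treated as chance level (distance 0).
    err = min(max(domain_classifier_error, 0.0), 0.5)
    return 2.0 * (1.0 - 2.0 * err)


indistinguishable = proxy_a_distance(0.5)   # classifier at chance
fully_separable = proxy_a_distance(0.0)     # classifier never wrong
```

A value near 0 means the classifier cannot tell the domains apart; a value near 2 means the features separate them perfectly.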

DANN — Adversarial Alignment in One Backward Pass#

Domain-Adversarial Neural Network (Ganin et al., 2016) is the most influential adversarial method, and the cleanest implementation of “minimise the domain divergence proxy”.

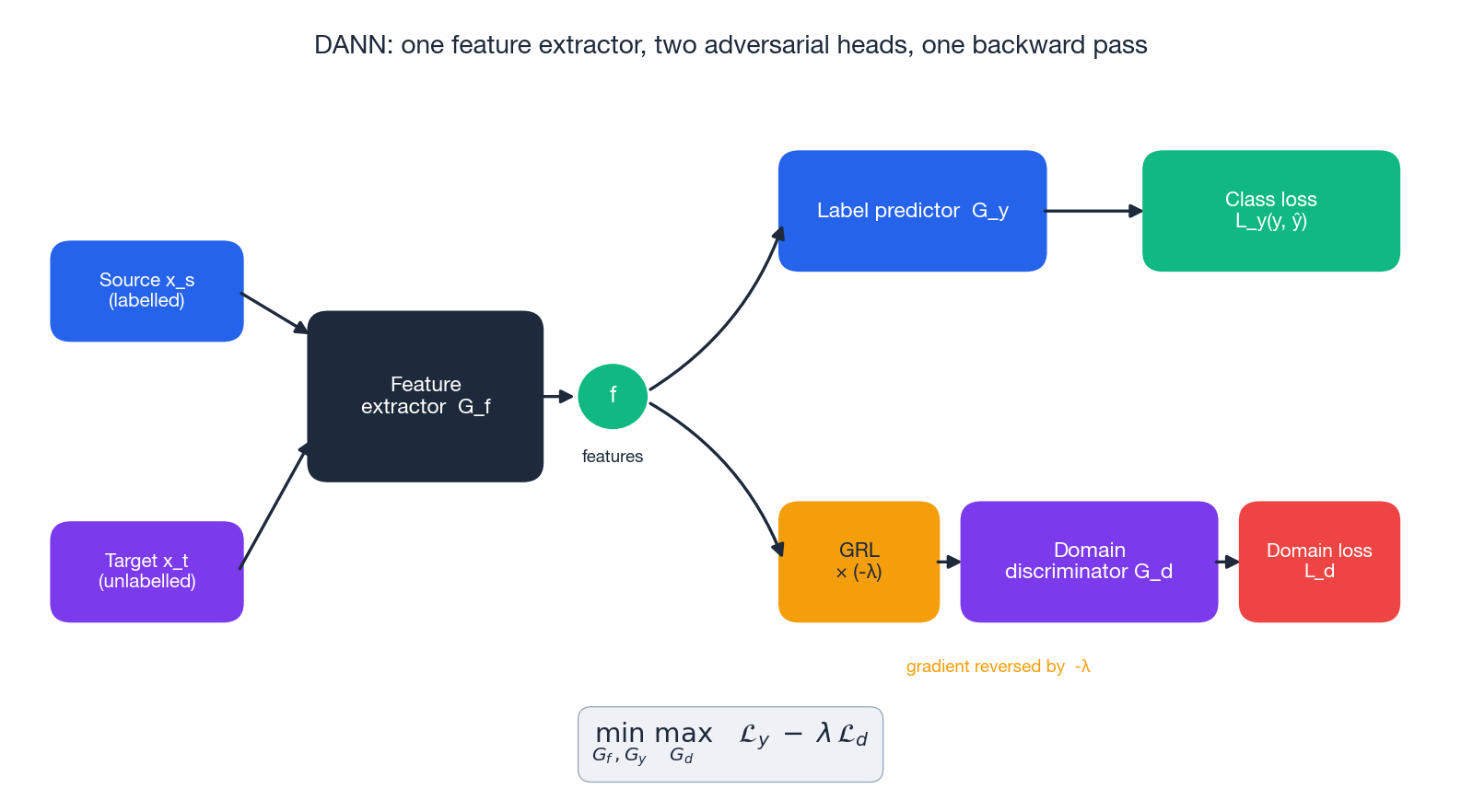

Three subnetworks, one shared trunk#

| Subnet | Role | Trained on |

|---|---|---|

| Feature extractor $G_f$ | maps $x$ to $f = G_f(x)$ | both domains |

| Label predictor $G_y$ | classifies $f \to \hat{y}$ | source labels |

| Domain discriminator $G_d$ | classifies $f \to$ source/target | both domains |

$G_d$ wants to tell the domains apart; $G_f$ wants to fool $G_d$ while still letting $G_y$ classify the source correctly.

The Gradient Reversal Layer (GRL)#

$$ \text{forward: }\; \text{GRL}(x) = x, \qquad \text{backward: }\; \frac{\partial\,\text{GRL}}{\partial x} = -\lambda\, I. $$

GRL sits on the path from features to the domain head. During backprop, the discriminator’s gradient flips sign before reaching $G_f$, so the same `loss.backward()` call:

- updates $G_y$ to classify better (normal gradients),

- updates $G_d$ to discriminate better (normal gradients),

- updates $G_f$ to confuse $G_d$ (reversed gradients on the domain term) while still helping $G_y$.

No alternating training, no separate optimisers, no manual freezing.
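A gradient reversal layer is only a few lines as a custom autograd function. The sketch below assumes PyTorch; the names `GradientReversal` and `grad_reverse` are illustrative, not taken from the original code.

```python
import torch
from torch.autograd import Function


class GradientReversal(Function):
    """Identity in the forward pass; multiplies the gradient by -lambd on the way back."""

    @staticmethod
    def forward(ctx, x, lambd):
        ctx.lambd = lambd
        return x.view_as(x)

    @staticmethod
    def backward(ctx, grad_output):
        # One return value per forward input; lambd is a plain float, so it gets None.
        return -ctx.lambd * grad_output, None


def grad_reverse(x, lambd=1.0):
    return GradientReversal.apply(x, lambd)


# Quick check: the forward pass is the identity, the backward pass flips and scales.
features = torch.tensor([1.0, 2.0, 3.0], requires_grad=True)
out = grad_reverse(features, lambd=0.5)
out.sum().backward()
```

After the backward call, `features.grad` holds $-0.5$ in every position even though `out` equals `features`, which is the whole trick.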

The adversarial weight schedule#

The adversarial weight is ramped from 0 towards 1 over training:

$$\lambda_p = \frac{2}{1 + \exp(-\gamma p)} - 1, \qquad \gamma \approx 10,$$

where $p \in [0, 1]$ is training progress. Early on ($\lambda \approx 0$), the network just learns good source features. As training proceeds ($\lambda \to 1$), domain alignment kicks in. Skipping this schedule is the single most common cause of “DANN trains but does worse than source-only”.
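To get a feel for how quickly the ramp saturates, the schedule can be tabulated directly. This is a plain-Python sketch; the function name `adversarial_weight` is hypothetical, and clamping `progress` to $[0, 1]$ is an added safeguard.

```python
import math


def adversarial_weight(progress, gamma=10.0):
    """lambda_p = 2 / (1 + exp(-gamma * p)) - 1 for training progress p in [0, 1]."""
    p = min(max(progress, 0.0), 1.0)  # clamp in case the caller overshoots
    return 2.0 / (1.0 + math.exp(-gamma * p)) - 1.0


for p in (0.0, 0.1, 0.25, 0.5, 1.0):
    print(f"progress={p:.2f}  lambda={adversarial_weight(p):.3f}")
```

The expression equals $\tanh(\gamma p / 2)$, so with $\gamma = 10$ the weight is already about 0.46 at 10% of training and close to 1 by the halfway point.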

MMD — Matching Means in an RKHS#

Adversarial alignment is powerful but unstable. The non-adversarial alternative is to define an explicit distance between distributions and minimise it directly. Maximum Mean Discrepancy (Gretton et al., 2012) is the standard choice.

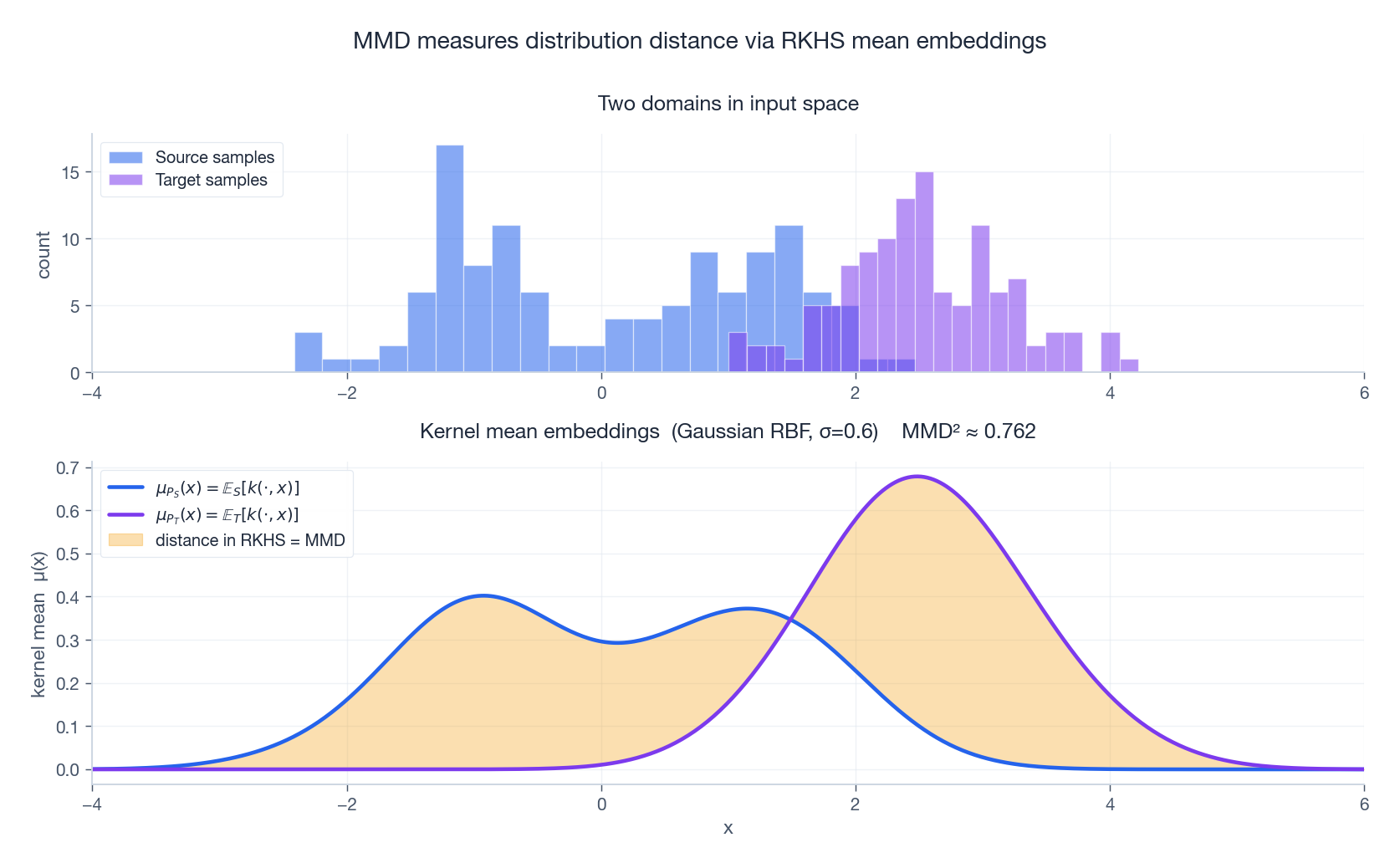

The idea#

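Embed each distribution in a reproducing kernel Hilbert space $\mathcal{H}$ through a feature map $\phi$ and take its mean:

$$\mu_P = \mathbb{E}_{X \sim P}[\phi(X)] \;\in\; \mathcal{H}.$$

MMD is the distance between the two mean embeddings:

$$\text{MMD}^2(P_S, P_T) = \|\mu_{P_S} - \mu_{P_T}\|_{\mathcal{H}}^2.$$

The figure shows this graphically: even when raw histograms overlap a little, the kernel mean embeddings make the gap explicit, and the shaded area is exactly $\text{MMD}^2$.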

The estimator you actually compute#

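With a kernel $k(x, x') = \langle \phi(x), \phi(x') \rangle_{\mathcal{H}}$, the squared norm expands into kernel evaluations over a batch of $n_s$ source and $n_t$ target samples:

$$ \widehat{\text{MMD}}^2 = \frac{1}{n_s^2}\sum_{i,j} k(x_i^s, x_j^s) + \frac{1}{n_t^2}\sum_{i,j} k(x_i^t, x_j^t) - \frac{2}{n_s n_t}\sum_{i,j} k(x_i^s, x_j^t). $$

Computed on the features $G_f(\cdot)$ and added to the task loss, it gives the training objective:

$$\mathcal{L} = \mathcal{L}_{\text{task}} + \lambda \cdot \widehat{\text{MMD}}^2\!\big(G_f(X_S),\, G_f(X_T)\big).$$

This is DAN / DDC (Long et al., 2015; Tzeng et al., 2014).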

Practical tips#

- Use multi-kernel MMD. A mixture $k = \sum_u \beta_u k_{\sigma_u}$ of Gaussian RBFs at several bandwidths is robust to bandwidth misspecification.

- Median heuristic for $\sigma$. Set the bandwidth to the median pairwise distance in the batch: cheap, robust, almost always good enough.

- Apply MMD to deeper layers. Lower layers carry domain-specific texture; the abstraction at the top is what you want aligned.
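The first two tips combine naturally: take the median heuristic as the base bandwidth and average Gaussian kernels at a few multiples of it. The NumPy sketch below implements the biased estimator $\frac{1}{n_s^2}\sum k(x^s, x^s) + \frac{1}{n_t^2}\sum k(x^t, x^t) - \frac{2}{n_s n_t}\sum k(x^s, x^t)$ under those assumptions. The function names and the particular bandwidth multipliers are illustrative choices, and a training loop would need the same computation in an autograd framework.

```python
import numpy as np


def pairwise_sq_dists(a, b):
    """Squared Euclidean distances between all rows of a and all rows of b."""
    a2 = (a ** 2).sum(axis=1)[:, None]
    b2 = (b ** 2).sum(axis=1)[None, :]
    return np.maximum(a2 + b2 - 2.0 * a @ b.T, 0.0)


def mmd2_multi_kernel(xs, xt, scales=(0.25, 0.5, 1.0, 2.0, 4.0)):
    """Biased multi-kernel MMD^2 estimate with a median-heuristic base bandwidth."""
    pooled = np.vstack([xs, xt])
    d2 = pairwise_sq_dists(pooled, pooled)
    # Median heuristic: median squared distance over distinct pairs in the pooled batch
    off_diagonal = d2[~np.eye(len(pooled), dtype=bool)]
    sigma2 = max(np.median(off_diagonal), 1e-12)
    # Equal-weight mixture of Gaussian RBF kernels at several bandwidths
    k = np.mean([np.exp(-d2 / (2.0 * s * sigma2)) for s in scales], axis=0)
    n_s = len(xs)
    k_ss, k_tt, k_st = k[:n_s, :n_s], k[n_s:, n_s:], k[:n_s, n_s:]
    return k_ss.mean() + k_tt.mean() - 2.0 * k_st.mean()


rng = np.random.default_rng(0)
source = rng.normal(size=(64, 8))
target_same = rng.normal(size=(64, 8))          # same distribution as source
target_shifted = rng.normal(size=(64, 8)) + 2.0  # mean-shifted distribution
mmd_same = mmd2_multi_kernel(source, target_same)
mmd_shifted = mmd2_multi_kernel(source, target_shifted)
```

On this toy data the shifted target gives a clearly larger value than the matched one, which is the behaviour the loss relies on.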

MMD vs DANN at a glance#

| | MMD | DANN |

|---|---|---|

| Distance | Kernel-based RKHS norm | Jensen–Shannon (via discriminator) |

| Optimisation | Direct minimisation | Adversarial minimax (GRL) |

| Stability | Very stable | Sometimes oscillates |

| Expressiveness | Tied to kernel choice | More flexible |

| Best when | Small/medium gap, less data | Large gap, abundant data |

A reasonable default workflow: try MMD first; switch to DANN if MMD plateaus.

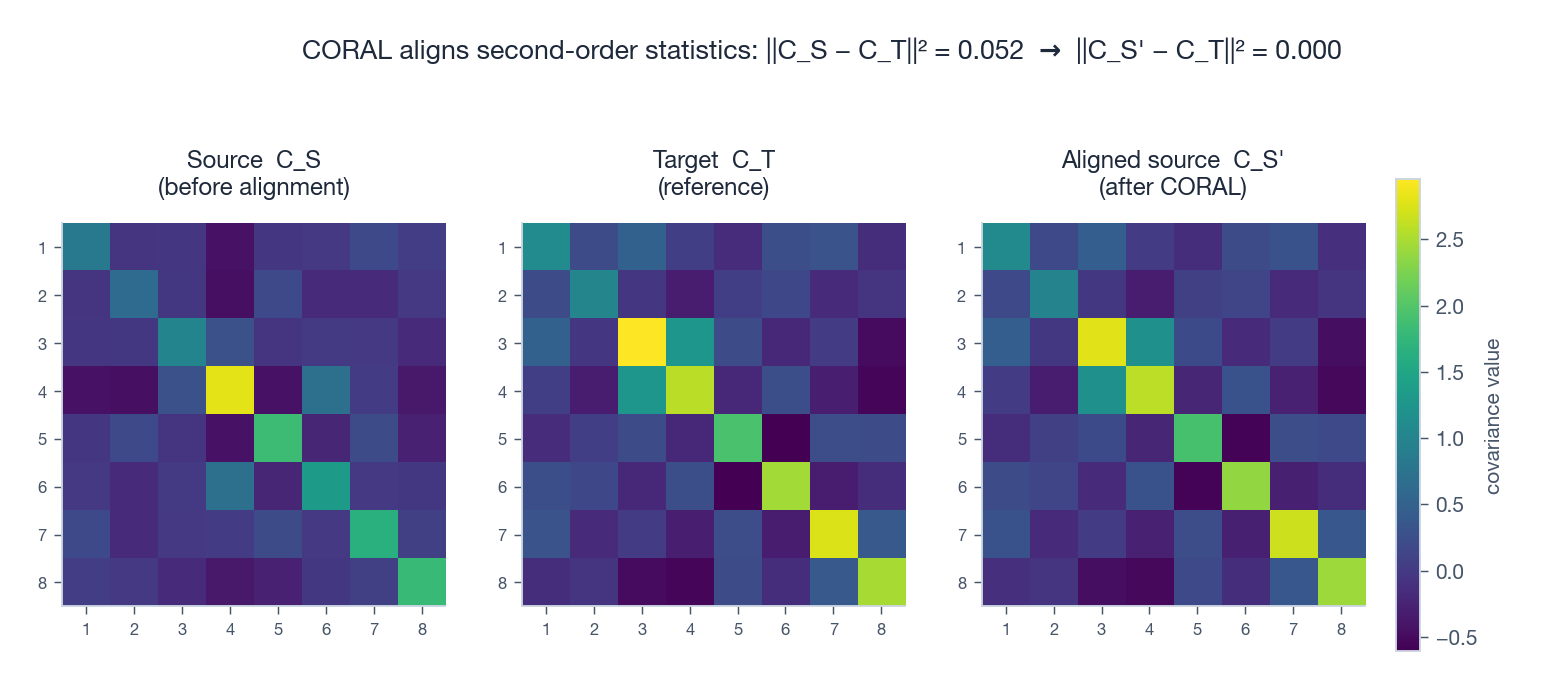

CORAL — Aligning Second-Order Statistics#

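If matching means is good, matching covariances as well is often better. CORAL (Sun & Saenko, 2016) aligns the second-order statistics of the two domains by penalising the distance between the source and target feature covariance matrices $C_S$ and $C_T$, where $d$ is the feature dimension:

$$\mathcal{L}_{\text{CORAL}} = \frac{1}{4 d^2} \|C_S - C_T\|_F^2.$$

Intuition: whitening + recolouring. Multiplying the source features by $C_S^{-1/2} C_T^{1/2}$ first removes the source’s covariance fingerprint, then paints on the target’s. Deep CORAL just adds the loss above to a deep network and lets the gradients do the same job implicitly.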

CORAL is dirt cheap (one matrix and one Frobenius norm per batch), entirely deterministic, and surprisingly competitive on mild shifts. It is a great baseline before reaching for MMD or DANN.
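Both views of CORAL fit in a short NumPy sketch: the loss $\frac{1}{4d^2}\|C_S - C_T\|_F^2$ and the closed-form whitening + recolouring transform. The function names are hypothetical, and the small ridge `eps` added to each covariance is an assumption made here to keep the inverse square root well defined.

```python
import numpy as np


def coral_loss(fs, ft):
    """Squared Frobenius distance between feature covariances, scaled by 1 / (4 d^2)."""
    d = fs.shape[1]
    cs = np.cov(fs, rowvar=False)
    ct = np.cov(ft, rowvar=False)
    return np.sum((cs - ct) ** 2) / (4.0 * d * d)


def _sym_matrix_power(c, power):
    """Power of a symmetric positive-definite matrix via its eigendecomposition."""
    vals, vecs = np.linalg.eigh(c)
    return (vecs * vals ** power) @ vecs.T


def coral_align(fs, ft, eps=1e-3):
    """Whiten source features with C_S^{-1/2}, then recolour them with C_T^{1/2}."""
    d = fs.shape[1]
    cs = np.cov(fs, rowvar=False) + eps * np.eye(d)  # ridge is an assumption
    ct = np.cov(ft, rowvar=False) + eps * np.eye(d)
    return fs @ _sym_matrix_power(cs, -0.5) @ _sym_matrix_power(ct, 0.5)


rng = np.random.default_rng(0)
source_feats = rng.normal(size=(500, 4)) * np.array([1.0, 2.0, 3.0, 4.0])
target_feats = rng.normal(size=(500, 4)) * np.array([4.0, 3.0, 2.0, 1.0])
loss_before = coral_loss(source_feats, target_feats)
loss_after = coral_loss(coral_align(source_feats, target_feats), target_feats)
```

After the transform the source covariance matches the target's up to the ridge term, so `loss_after` is several orders of magnitude below `loss_before` on this toy data.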

AdaBN — The Free Lunch You Should Always Try First#

The simplest domain adaptation trick of all: recompute batch-norm statistics on the target.

Standard BN at test time uses the running mean and variance accumulated during source training. If the target has a different distribution, those statistics are wrong, and they sit between every conv layer and the next non-linearity. AdaBN (Li et al., 2017):

- Train normally on source.

- With weights frozen, run forward passes over unlabelled target data and recompute $\mu_T, \sigma_T^2$ for every BN layer.

- At deployment, swap source statistics for target ones.

Cost: minutes. Code change: replacing a few BatchNorm running stats. Effect: routinely reclaims 2–10 points of accuracy under covariate shift. Always try this first before any fancier method.
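The three steps above can be sketched in PyTorch as follows. This is a minimal illustration rather than the AdaBN authors' code: `adapt_batchnorm` is a hypothetical helper, and it assumes `target_batches` is an iterable of input tensors the model accepts directly.

```python
import torch
from torch import nn

BN_TYPES = (nn.BatchNorm1d, nn.BatchNorm2d, nn.BatchNorm3d)


@torch.no_grad()
def adapt_batchnorm(model, target_batches):
    """Replace BatchNorm running statistics with estimates from unlabelled target data."""
    bn_layers = [m for m in model.modules() if isinstance(m, BN_TYPES)]
    old_momentum = [m.momentum for m in bn_layers]

    model.eval()                 # keep dropout and friends in inference mode
    for m in bn_layers:
        m.reset_running_stats()  # discard the source statistics
        m.momentum = None        # None = cumulative average over all batches seen
        m.train()                # BN only updates running stats in train mode

    for x in target_batches:     # forward passes only; no weight is ever updated
        model(x)

    for m, momentum in zip(bn_layers, old_momentum):
        m.momentum = momentum    # restore the original setting
    model.eval()
    return model


torch.manual_seed(0)
model = nn.Sequential(nn.Linear(4, 8), nn.BatchNorm1d(8), nn.ReLU(), nn.Linear(8, 2))
target_batches = [torch.randn(32, 4) * 3.0 + 5.0 for _ in range(10)]
adapt_batchnorm(model, target_batches)
```

Only the BatchNorm layers are switched to train mode, so other layers behave exactly as they will at deployment while the statistics are collected.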

GAN-Based and Pixel-Level Adaptation#

Sometimes the gap is so visual — synthetic to real, day to night — that aligning features is too late. You want to translate the inputs themselves.

- CycleGAN learns two generators $G: \mathcal{X}_S \to \mathcal{X}_T$ and $F: \mathcal{X}_T \to \mathcal{X}_S$ subject to cycle consistency $F(G(x)) \approx x$. Translate source images into target style, then train your classifier on the translated images with the original source labels. Beware: cycle consistency does not guarantee semantic preservation; combine with a perceptual or identity loss for safety.

- ADDA decouples the source and target encoders. Stage 1: train a source encoder + classifier normally. Stage 2: initialise a target encoder from the source, then adapt it adversarially against a domain discriminator while keeping the classifier frozen. Stage 3: at test time, route target inputs through the target encoder and the source classifier. This asymmetry gives ADDA more capacity than DANN at the cost of an extra training stage.

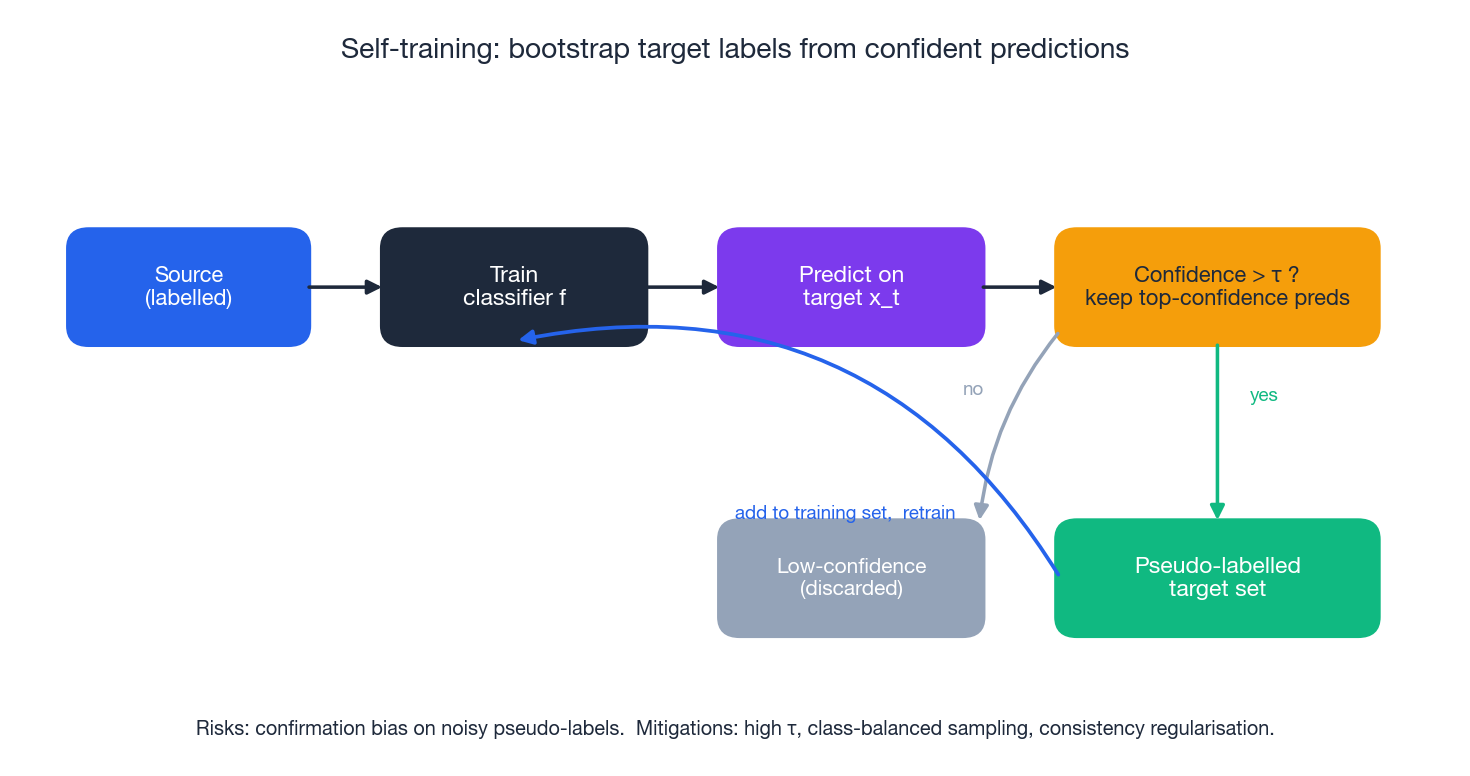

Self-Training — Bootstrapping Labels on the Target#

Adversarial and statistical alignment treat the target as one undifferentiated cloud. Self-training (also called pseudo-labelling) goes further: it uses your current model to produce target labels and then trains on them.

The loop is:

- Train $f$ on the source.

- Predict on every target sample; keep only those where $\max_y f(x)_y > \tau$ (a high confidence threshold).

- Treat the kept (input, prediction) pairs as new labelled data and retrain.

- Iterate.

Self-training is powerful and underestimated, but it has one infamous failure mode: confirmation bias. Wrong but confident predictions get re-fed into training and amplified. The standard mitigations are:

- a high threshold $\tau$ (typically 0.9+),

- class-balanced selection (cap the number kept per class),

- consistency regularisation under augmentations (FixMatch-style),

- restarting from the source model at each round rather than from the previous self-trained one.
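The selection step with the first two mitigations (confidence threshold and per-class cap) might look like the NumPy sketch below. The function name `select_pseudo_labels` and its arguments are hypothetical; `probs` is assumed to be an array of softmax outputs on target samples.

```python
import numpy as np


def select_pseudo_labels(probs, tau=0.9, max_per_class=None):
    """Return indices and labels of target samples whose top probability exceeds tau."""
    probs = np.asarray(probs, dtype=float)
    confidence = probs.max(axis=1)
    predicted = probs.argmax(axis=1)
    keep = np.flatnonzero(confidence > tau)
    if max_per_class is not None and len(keep) > 0:
        capped = []
        for c in np.unique(predicted[keep]):
            idx = keep[predicted[keep] == c]
            # Keep only the most confident samples of this class
            capped.append(idx[np.argsort(-confidence[idx])][:max_per_class])
        keep = np.sort(np.concatenate(capped))
    return keep, predicted[keep]


toy_probs = np.array([
    [0.95, 0.05],
    [0.60, 0.40],   # below the threshold, dropped
    [0.08, 0.92],
    [0.99, 0.01],
    [0.97, 0.03],
])
kept_idx, kept_labels = select_pseudo_labels(toy_probs, tau=0.9, max_per_class=2)
```

Here class 0 has three confident candidates, so the cap keeps only the two most confident ones, which stops an easy majority class from flooding the retraining set.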

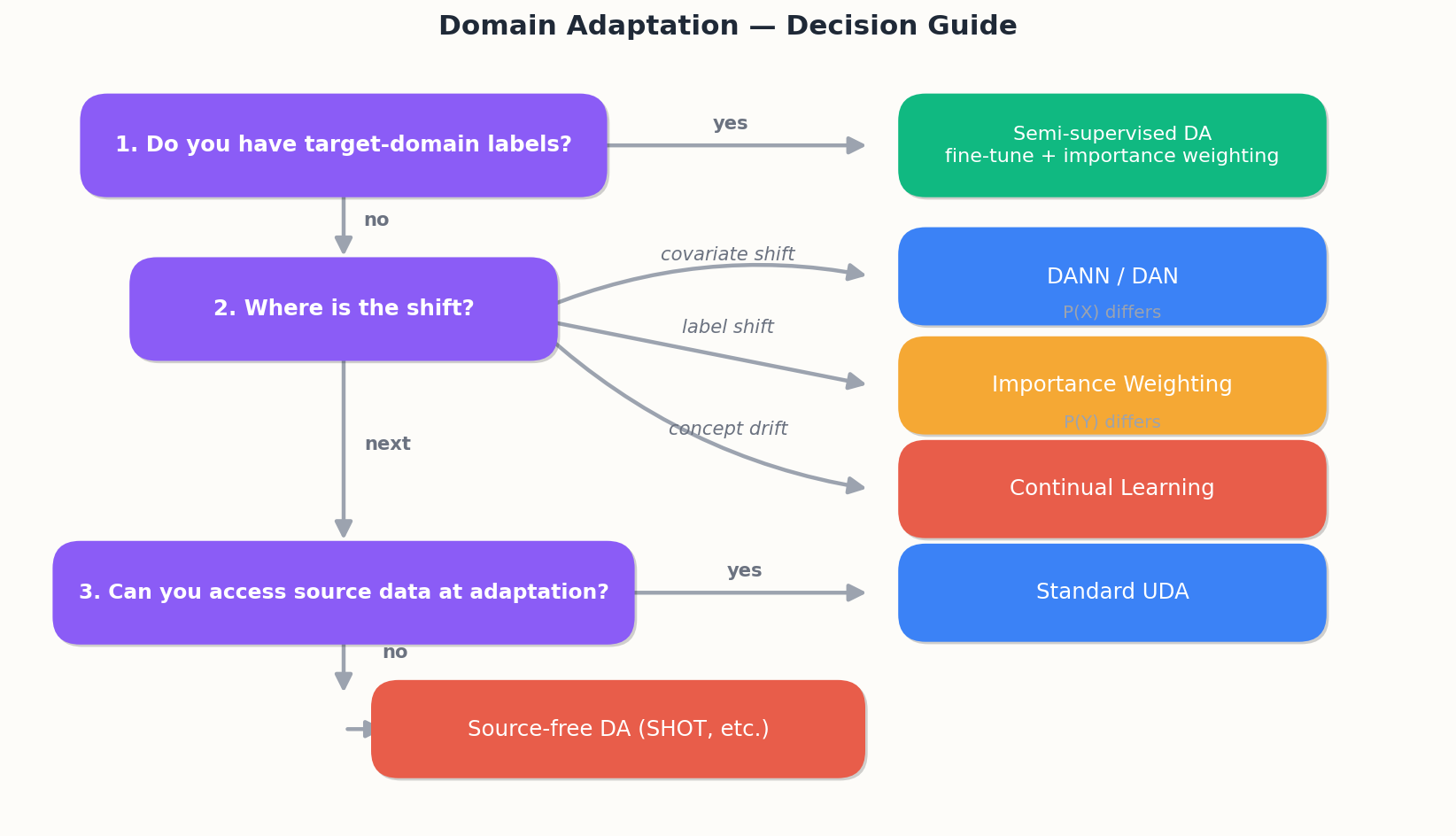

Decision Tree — Which Method, When?#

In practice a strong pipeline often combines methods: AdaBN for the easy gains, MMD or DANN for feature alignment, then a self-training round for the last few points.

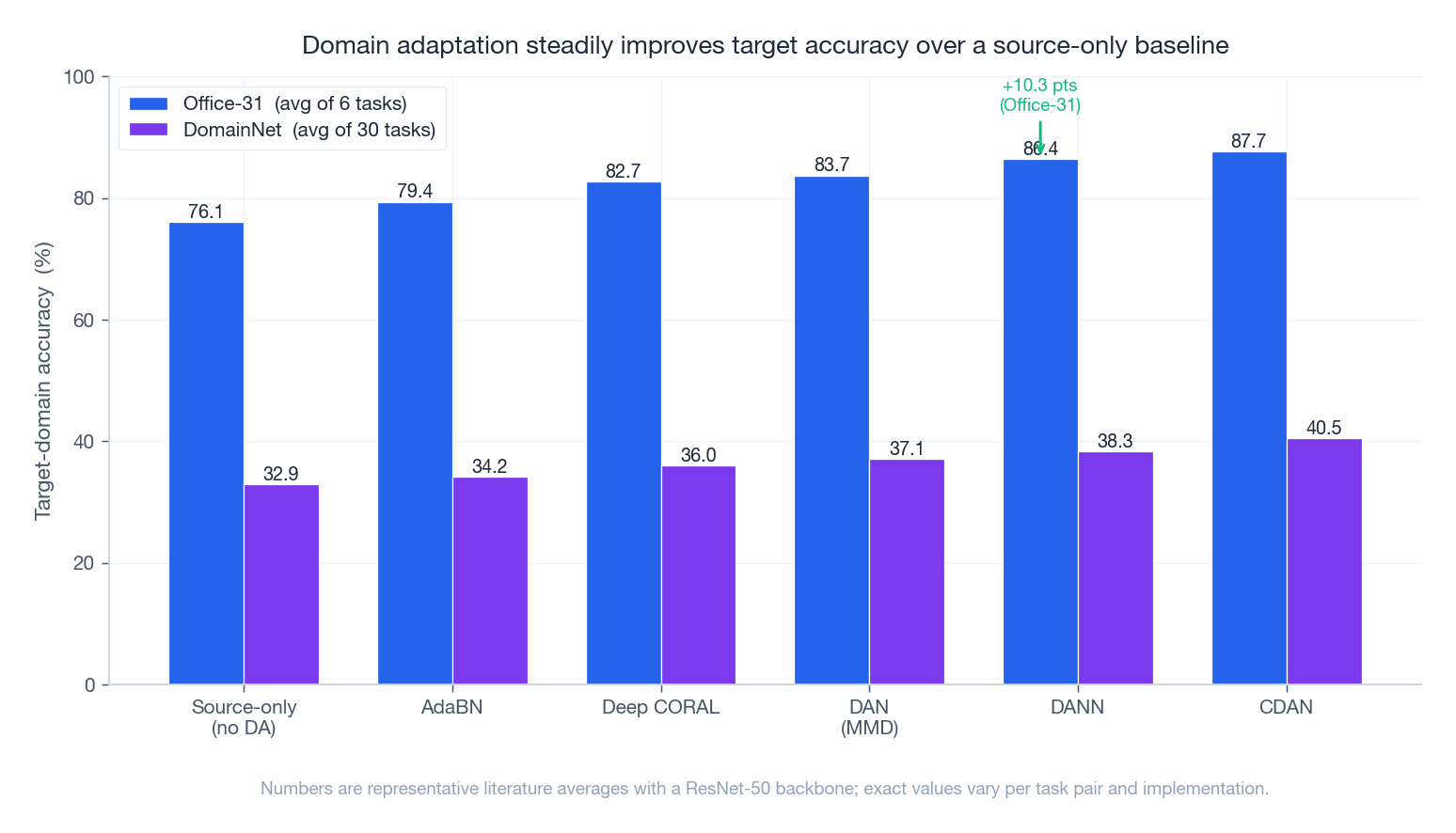

Benchmarks — How Much Does This Actually Help?#

The numbers are representative literature averages with a ResNet-50 backbone. Two things worth noticing:

- The biggest jump is from “nothing” to “anything”. Even AdaBN closes a meaningful chunk of the gap. Doing something matters far more than choosing the perfect method.

- DomainNet is genuinely harder than Office-31. A 40% accuracy on DomainNet still represents a strong method — the dataset has 345 classes across 6 wildly different visual styles. Always interpret DA accuracies relative to a source-only baseline, not in absolute terms.

Where Domain Adaptation Earns Its Keep#

- Medical imaging — Siemens vs GE scanners, 1.5T vs 3T MRI, hospital A vs hospital B.

- Autonomous driving — sunny to rainy, city A to city B, simulation to real.

- Recommendation — country to country, year to year, web to mobile.

- NLP — movie reviews to product reviews, news to social, formal to informal.

- Sim-to-real — synthetic data to real sensor data in robotics and self-driving.

The common pattern: source labels are abundant, target labels are expensive or impossible, and the model has to ship anyway.

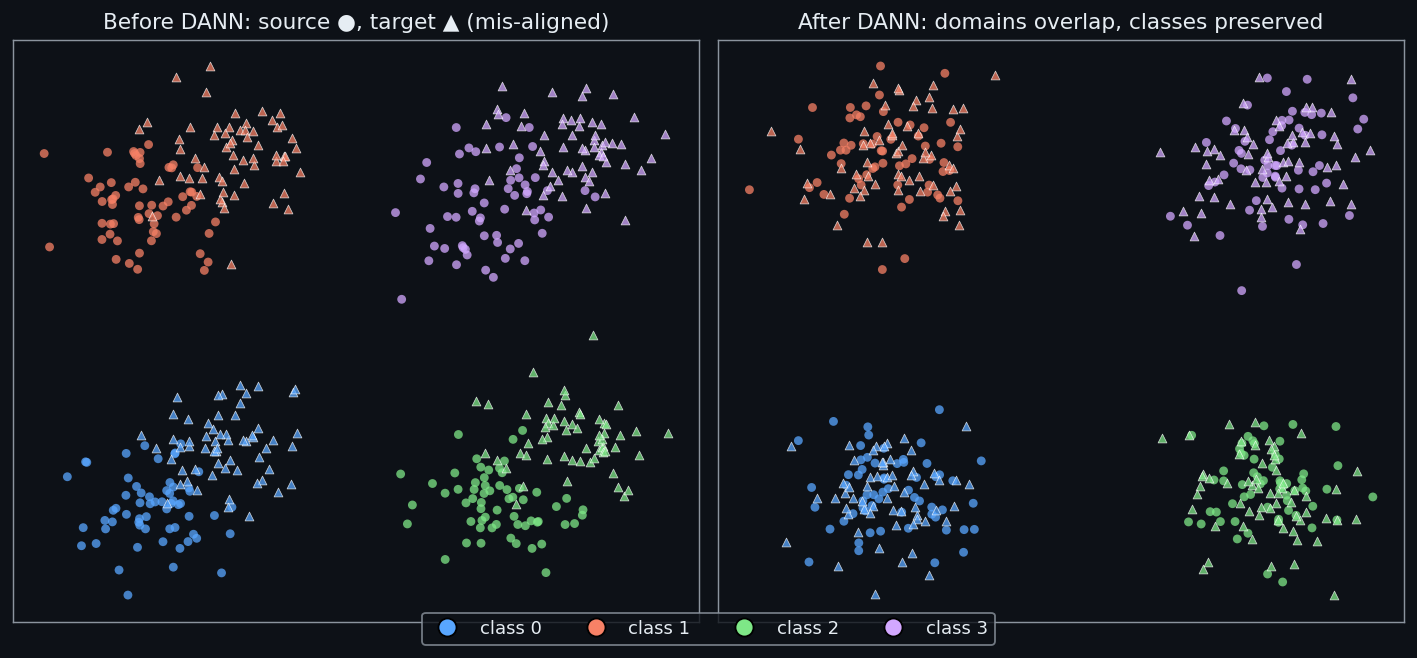

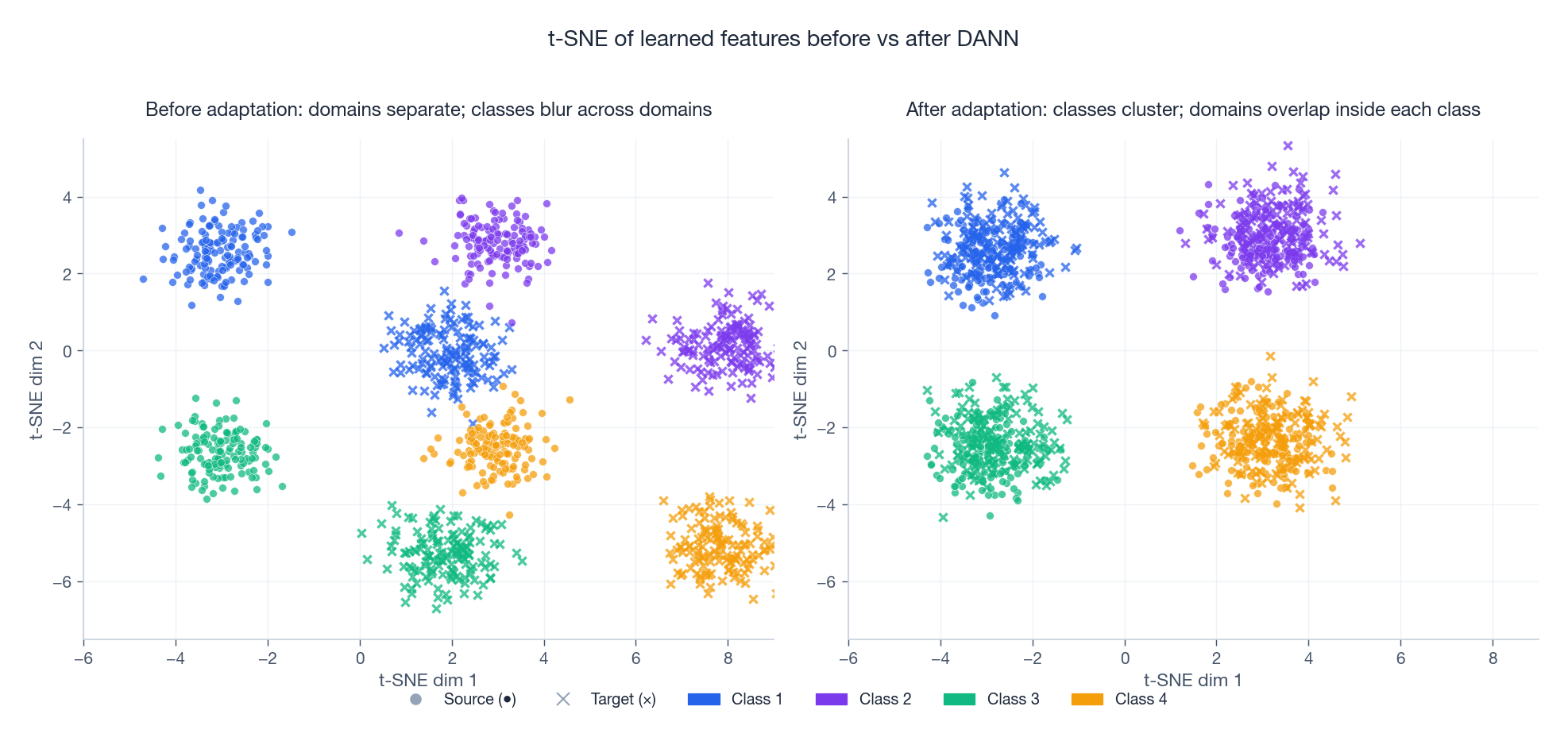

Visualising the Effect — t-SNE Before and After#

A standard sanity check after training a DA model: project source and target features through t-SNE. Before adaptation, samples cluster by domain; after, they cluster by class.

If your “after” plot still shows two domain blobs, alignment failed. If it shows one blob with class structure, alignment worked. This single picture is more diagnostic than any single number.

Complete Implementation: DANN#

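The listing below is a compact PyTorch sketch of DANN on toy 2-D data, not the article's original code. The names `GradientReversalLayer`, `_adaptive_lambda`, `class_loss`, `domain_loss` and `evaluate(alpha=0)` follow the component table in this section; everything else (the `DANN` module, `train_step`, layer sizes, the toy data and the optimiser settings) is an assumption made for illustration.

```python
import math

import torch
import torch.nn.functional as F
from torch import nn
from torch.autograd import Function


class GradientReversalLayer(Function):
    """Identity forward; gradient multiplied by -alpha on the way back."""

    @staticmethod
    def forward(ctx, x, alpha):
        ctx.alpha = alpha
        return x.view_as(x)

    @staticmethod
    def backward(ctx, grad_output):
        # alpha is a plain float, so it receives no gradient (None).
        return -ctx.alpha * grad_output, None


class DANN(nn.Module):
    """Toy-sized DANN: shared trunk G_f, label head G_y, domain head G_d."""

    def __init__(self, in_dim, n_classes, hidden=64):
        super().__init__()
        self.features = nn.Sequential(nn.Linear(in_dim, hidden), nn.ReLU())
        self.classifier = nn.Linear(hidden, n_classes)
        self.discriminator = nn.Sequential(
            nn.Linear(hidden, hidden), nn.ReLU(), nn.Linear(hidden, 1)
        )

    def forward(self, x, alpha=0.0):
        f = self.features(x)
        class_logits = self.classifier(f)
        reversed_f = GradientReversalLayer.apply(f, alpha)
        domain_logits = self.discriminator(reversed_f).squeeze(1)
        return class_logits, domain_logits


def _adaptive_lambda(progress, gamma=10.0):
    """Sigmoid ramp from 0 towards 1 as progress goes from 0 to 1."""
    return 2.0 / (1.0 + math.exp(-gamma * progress)) - 1.0


def train_step(model, optimizer, xs, ys, xt, progress):
    """One update on a labelled source batch and an unlabelled target batch."""
    model.train()
    alpha = _adaptive_lambda(progress)
    class_logits, dom_s = model(xs, alpha)
    _, dom_t = model(xt, alpha)

    class_loss = F.cross_entropy(class_logits, ys)  # source labels only
    domain_logits = torch.cat([dom_s, dom_t])
    # Domain targets: source = 1, target = 0
    domain_targets = torch.cat([torch.ones_like(dom_s), torch.zeros_like(dom_t)])
    domain_loss = F.binary_cross_entropy_with_logits(domain_logits, domain_targets)

    optimizer.zero_grad()
    (class_loss + domain_loss).backward()  # one backward pass; the GRL flips the sign
    optimizer.step()
    return class_loss.item(), domain_loss.item()


@torch.no_grad()
def evaluate(model, x, y, alpha=0.0):
    """Accuracy of the label head; the domain head is ignored."""
    model.eval()
    class_logits, _ = model(x, alpha)
    return (class_logits.argmax(dim=1) == y).float().mean().item()


# Toy covariate shift: same labelling rule, target inputs shifted along x_2.
torch.manual_seed(0)
xs = torch.randn(256, 2)
ys = (xs[:, 0] > 0).long()
xt = torch.randn(256, 2) + torch.tensor([0.0, 1.5])
yt = (xt[:, 0] > 0).long()  # used for evaluation only

model = DANN(in_dim=2, n_classes=2)
optimizer = torch.optim.SGD(model.parameters(), lr=0.05, momentum=0.9)
n_steps = 50
for step in range(n_steps):
    class_loss, domain_loss = train_step(model, optimizer, xs, ys, xt, step / n_steps)
target_acc = evaluate(model, xt, yt)
```

For real data the trunk would be a pretrained backbone and the batches would come from two data loaders, but the control flow stays the same: one forward pass per domain, one combined loss, one backward pass.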

How this code works#

| Component | Role |

|---|---|

| `GradientReversalLayer` | Identity forward, negated-gradient backward; turns the minimax into a single backward pass. |

| `_adaptive_lambda` | Sigmoid ramp $\frac{2}{1 + e^{-\gamma p}} - 1$; start small so the network learns features first. |

| `class_loss` | Standard cross-entropy on source labels only (no target labels used). |

| `domain_loss` | BCE: source = 1, target = 0; trains the discriminator. |

| GRL + domain head | Reversed gradients flow back to $G_f$, so it learns to hide the domain. |

| `evaluate(alpha=0)` | At test time we set $\lambda = 0$; the GRL is irrelevant because only the classification head is used. |

CORAL vs MMD vs DANN: Empirical Comparison#

The three alignment losses look different on paper but solve the same problem — pull source and target features into the same region of representation space. To make the trade-offs concrete, fix a single benchmark and run all three with the same backbone.

Setup. Office-31, Amazon $\to$ Webcam. The source has $n_s = 2817$ labelled images across 31 classes; the target has $n_t = 795$ unlabelled images. ResNet-50 ImageNet-pretrained, last block fine-tuned, 256-d bottleneck before the classifier. Batch 32 source + 32 target, SGD with momentum 0.9, base lr $10^{-3}$, 50 epochs. The only thing that changes between runs is the alignment loss attached to the bottleneck.

CORAL#

$$\mathcal{L}_{\text{CORAL}} = \frac{1}{4 d^2} \|C_S - C_T\|_F^2.$$

MMD (multi-kernel)#

$$\widehat{\text{MMD}}^2 = \tfrac{1}{n_s^2}\!\sum k(x_i^s,x_j^s) + \tfrac{1}{n_t^2}\!\sum k(x_i^t,x_j^t) - \tfrac{2}{n_s n_t}\!\sum k(x_i^s,x_j^t).$$

DANN#

$$\min_{G_f, G_y}\, \max_{G_d}\; \mathcal{L}_y - \lambda\, \mathcal{L}_d.$$

Results#

| Method | Target Acc | Time / run | Hyperparam sensitivity |

|---|---|---|---|

| Source-only (no DA) | 68.2% | 8 min | — |

| CORAL | 76.0% | 12 min | Low |

| MMD multi-kernel | 78.4% | 18 min | Med (kernel bandwidth) |

| DANN | 80.1% | 22 min | High (GRL schedule) |

CORAL has nothing to tune beyond $\lambda$, which mostly does not matter: anything in $[0.1, 10]$ gives within a point of the best. MMD is sensitive to the bandwidth set; the multi-kernel variant fixes most of that, but still rewards a sweep. DANN is the most sensitive of the three: get the $\lambda$ ramp wrong and you do worse than source-only.

Convergence behaviour#

CORAL and MMD both reach plateau within roughly 30 epochs and stay there. The loss curves are monotone, the target accuracy curve is monotone too, and you can early-stop on the source validation set with confidence.

DANN looks different. The classification loss and the domain loss fight each other, so target accuracy oscillates by 2–4 points across late epochs. You need the sigmoid ramp on $\lambda$ to keep the early epochs sane, and even then the right strategy is to track target accuracy on a tiny held-out set if you have one — picking the last epoch is often a mistake.

Practical recipe#

- Start with CORAL. Zero hyperparameters worth sweeping, deterministic, twelve lines of code. If this closes most of the gap, ship it.

- If CORAL plateaus, move to multi-kernel MMD. One additional knob (the bandwidth set), still stable, usually 1–3 points better.

- Only reach for DANN when adversarial training is operationally feasible — you have the budget for multiple runs to find the right $\lambda$ schedule, and you can monitor a small target-validation signal.

The honest summary: complexity buys you a few points of accuracy, not an order of magnitude. If those points matter (medical, autonomous driving), go DANN. If they do not, CORAL will pay for itself in debugging time saved.

Bridge: knowing which alignment loss to use presupposes that you know what kind of shift you are dealing with. The next section gives you the diagnostic.

Detecting Which Type of Shift Occurred#

The three shift types (covariate $P(X)$, label $P(Y)$, concept $P(Y \mid X)$) call for different remedies, and the wrong remedy can hurt more than it helps. Importance weighting fixes covariate shift but does nothing for concept shift. Prior correction fixes label shift but is irrelevant if the inputs themselves moved. Before reaching for a method, run the diagnostic.

Algorithm 1 — covariate shift via a domain classifier#

Train a binary classifier to distinguish source inputs (label 1) from target inputs (label 0). Hold out a portion for validation and read the AUC.

- AUC near 0.5: source and target inputs are indistinguishable — no covariate shift to correct.

- AUC near 1.0: the input distributions are very different — large covariate shift, importance weights or feature alignment are warranted.

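- Anything in between is a graded signal: the classifier’s predictions on source samples give you the density-ratio estimate $w(x) = P_T(x) / P_S(x) = (1 - p) / p$, where $p = P(\text{source} \mid x)$. This form assumes the classifier was trained on equal numbers of source and target samples; otherwise multiply by $n_s / n_t$.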
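Turning the domain classifier's probabilities into importance weights is a one-liner plus two safeguards. In the NumPy sketch below, the function name, the probability clamp and the `max_weight` clip are all assumptions added for numerical safety; the $n_s / n_t$ factor corrects for unequal source and target set sizes.

```python
import numpy as np


def importance_weights(p_source, n_source, n_target, max_weight=10.0):
    """Density-ratio weights w(x) = P_T(x) / P_S(x) from p = P(source | x)."""
    # Clamp probabilities away from 0 and 1 so the ratio stays finite
    p = np.clip(np.asarray(p_source, dtype=float), 1e-6, 1.0 - 1e-6)
    ratio = (1.0 - p) / p * (n_source / n_target)
    # Clip the heavy tail so a handful of samples cannot dominate the loss
    return np.minimum(ratio, max_weight)


weights = importance_weights([0.5, 0.25, 0.8], n_source=100, n_target=100)
```

A source sample the classifier cannot place ($p = 0.5$) keeps weight 1, one that looks target-like ($p = 0.25$) is up-weighted to 3, and one that looks unmistakably source-like ($p = 0.8$) is down-weighted to 0.25.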

Algorithm 2 — label shift via prior comparison#

Run the source-trained model $f$ over the unlabelled target set and histogram its predicted labels:

$$\hat P_T(y) = \frac{1}{n_T} \sum_{j=1}^{n_T} \mathbb{1}[\arg\max_y f(x_j^t) = y].$$

Then compare this estimate with the source prior:

$$\mathrm{KL}(P_S(Y) \,\|\, \hat P_T(Y)) = \sum_y P_S(y) \log \frac{P_S(y)}{\hat P_T(y)}.$$

A KL above $\sim 0.05$ is suspicious; above 0.2 is a strong signal of label shift. (For rigour, BBSE / RLLS use the source-model confusion matrix to correct the estimate of $P_T(Y)$; for a quick diagnostic, the noisy version is enough.)

Algorithm 3 — concept shift via per-class confidence#

Concept shift is the sneakiest of the three: the inputs look the same, the prevalence is the same, but the labelling rule has changed. The signature is confident-but-wrong predictions on the target.

If you have even a tiny labelled target slice (50–100 examples per class), compute mean predicted confidence per class on that slice and compare against the corresponding slice’s accuracy. Big confidence with low accuracy on a class is the fingerprint of concept shift on that class.

A diagnostic helper#

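A minimal NumPy sketch of the three diagnostics follows. It is an illustration rather than the original helper: all function names are hypothetical, the KL uses a small smoothing constant `eps` so an unpredicted class does not produce an infinite value, and the confidence gap is computed over the whole labelled slice rather than per class.

```python
import numpy as np


def domain_auc(scores_source, scores_target):
    """AUC of a domain classifier scoring P(source | x); ties count one half."""
    s = np.asarray(scores_source, dtype=float)[:, None]
    t = np.asarray(scores_target, dtype=float)[None, :]
    return float((s > t).mean() + 0.5 * (s == t).mean())


def label_kl(source_prior, target_predictions, eps=1e-6):
    """KL(P_S(Y) || P_T_hat(Y)) with P_T_hat taken from predicted target labels."""
    ps = np.asarray(source_prior, dtype=float)
    counts = np.bincount(np.asarray(target_predictions), minlength=len(ps)).astype(float)
    pt = (counts + eps) / (counts.sum() + eps * len(ps))  # smoothed histogram
    mask = ps > 0
    return float(np.sum(ps[mask] * np.log(ps[mask] / pt[mask])))


def confidence_accuracy_gap(probs, labels):
    """Mean top-class confidence minus accuracy on a labelled target slice."""
    probs = np.asarray(probs, dtype=float)
    confidence = probs.max(axis=1).mean()
    accuracy = (probs.argmax(axis=1) == np.asarray(labels)).mean()
    return float(confidence - accuracy)


auc = domain_auc([0.9, 0.8], [0.1, 0.2])
kl = label_kl([0.5, 0.5], [0, 1, 0, 1])
gap = confidence_accuracy_gap([[0.9, 0.1], [0.8, 0.2]], [0, 1])
```

For a per-class view of Algorithm 3, the same gap can be computed separately on the rows belonging to each true class.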

A toy numerical example#

Synthetic 2-d data, three scenarios, same diagnostic run on each:

| Scenario | AUC (cov) | KL (label) | Conf-Acc (concept) | Diagnosis |

|---|---|---|---|---|

| Clean Gaussians, same labels | 0.51 | 0.01 | 0.02 | no shift |

| Target shifted by $+1$ in $x_1$ | 0.94 | 0.04 | 0.03 | covariate |

| Target class prior $[0.1, 0.9]$ vs source $[0.5, 0.5]$ | 0.52 | 0.41 | 0.04 | label |

| Decision boundary flipped on target | 0.50 | 0.02 | 0.38 | concept |

Each shift type lights up exactly one column. Mixed shifts light up several — and the magnitudes tell you the order in which to fix them.

Bridge: with the diagnosis in hand, you can pick the method, but every method that uses pseudo-labels — self-training, FixMatch, joint training with target predictions — depends on those labels being trustworthy. That requires calibrated confidence, which the next section addresses.

Confidence Calibration Under Domain Shift#

Self-training and most semi-supervised DA methods filter pseudo-labels by confidence: keep $(x, \hat y)$ when $\max_y f(x)_y > \tau$. The implicit assumption is that high softmax confidence implies high accuracy. Under domain shift this assumption breaks.

A source-trained model on the target domain is typically over-confident — it assigns 95% probability to predictions that are right only 70% of the time. The expected calibration error (ECE) on Webcam after Amazon training routinely lands at 18–22%. Filter pseudo-labels with $\tau = 0.9$ and you keep a sea of confident wrong labels — the textbook recipe for confirmation bias.

Two complementary fixes, in increasing order of nuance.

Fix 1 — temperature scaling#

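Divide the logits $z$ by a single scalar temperature $T$ before the softmax, and fit $T$ on a small labelled validation split by minimising the negative log-likelihood:

$$\hat p_y = \frac{\exp(z_y / T)}{\sum_{y'} \exp(z_{y'} / T)}.$$

$T > 1$ softens overconfident predictions; $T < 1$ sharpens underconfident ones. The optimisation is one-dimensional and well behaved (the NLL is convex in $1/T$), so L-BFGS converges in a handful of iterations.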
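The sketch below fits $T$ and measures ECE in plain NumPy. To stay dependency-free it swaps L-BFGS for a log-spaced grid search over $T$, which is adequate for a one-dimensional problem. All function names and the synthetic data are assumptions made for illustration.

```python
import numpy as np


def softmax(z):
    z = z - z.max(axis=1, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)


def nll(logits, labels, temperature):
    p = softmax(logits / temperature)
    return -np.log(p[np.arange(len(labels)), labels] + 1e-12).mean()


def fit_temperature(logits, labels, grid=None):
    """Pick the T that minimises validation NLL (grid search stands in for L-BFGS)."""
    if grid is None:
        grid = np.exp(np.linspace(np.log(0.25), np.log(10.0), 200))
    losses = [nll(logits, labels, t) for t in grid]
    return float(grid[int(np.argmin(losses))])


def expected_calibration_error(probs, labels, n_bins=10):
    """Weighted average of |accuracy - confidence| over equal-width confidence bins."""
    confidence = probs.max(axis=1)
    correct = (probs.argmax(axis=1) == labels).astype(float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    ece = 0.0
    for lo, hi in zip(edges[:-1], edges[1:]):
        in_bin = (confidence > lo) & (confidence <= hi)
        if in_bin.any():
            ece += in_bin.mean() * abs(correct[in_bin].mean() - confidence[in_bin].mean())
    return float(ece)


# Synthetic check: labels drawn from softmax(true_logits), model reports 3x those logits.
rng = np.random.default_rng(0)
true_logits = rng.normal(size=(5000, 5))
cdf = softmax(true_logits).cumsum(axis=1)
labels = np.minimum((cdf < rng.random((5000, 1))).sum(axis=1), 4)
overconfident = 3.0 * true_logits
T_hat = fit_temperature(overconfident, labels)
ece_before = expected_calibration_error(softmax(overconfident), labels)
ece_after = expected_calibration_error(softmax(overconfident / T_hat), labels)
```

Because the synthetic model is overconfident by a factor of three by construction, the fitted temperature lands near 3 and the ECE drops sharply after scaling.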

Fix 2 — focal loss on pseudo-labels#

When you train on filtered pseudo-labels, weight each by $(1 - \hat p)^\gamma$, the focal-loss trick. High-confidence pseudo-labels (the over-confident ones most likely to be wrong) get a small weight; medium-confidence pseudo-labels (where the model still has signal) keep a much larger share of the gradient.

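The weighting itself is a small modification of cross-entropy. The NumPy sketch below shows the per-sample weights and the resulting loss value. The function name is hypothetical and $\gamma = 2$ is an assumed default, not a value given in this article.

```python
import numpy as np


def focal_pseudo_label_loss(probs, pseudo_labels, gamma=2.0):
    """Cross-entropy on pseudo-labels, each sample weighted by (1 - p_hat) ** gamma."""
    probs = np.asarray(probs, dtype=float)
    pseudo_labels = np.asarray(pseudo_labels)
    # Probability the model assigns to its own pseudo-label
    p_hat = probs[np.arange(len(pseudo_labels)), pseudo_labels]
    weights = (1.0 - p_hat) ** gamma
    per_sample = -weights * np.log(p_hat + 1e-12)
    return float(per_sample.mean()), weights


toy_probs = np.array([[0.99, 0.01], [0.60, 0.40]])
loss, weights = focal_pseudo_label_loss(toy_probs, pseudo_labels=[0, 0])
```

With $\gamma = 2$ the 0.99-confidence sample gets weight $10^{-4}$ and the 0.60-confidence sample gets 0.16, so the second contributes far more to the update.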

Numerical effect#

On the same Amazon $\to$ Webcam setup as before, with a 100-example target-validation split:

| Stage | ECE on target | Self-training F1 |

|---|---|---|

| Source-only logits | 18.4% | 74.6 |

| + temperature scaling ($T \approx 2.1$) | 4.1% | 76.9 |

| + focal weighting on pseudo-labels | 3.8% | 77.8 |

Calibration alone adds 2.3 points of downstream self-training F1; focal weighting on top adds another 0.9. The ECE drop from 18.4% to 4.1% is the more important number: it tells you that confidences now mean what they say, and a $\tau = 0.9$ filter actually selects roughly 90%-correct labels rather than roughly 70%-correct ones.

Bridge: that completes the practical toolkit — diagnose the shift, pick an alignment method, calibrate before you pseudo-label. The summary that follows distils the whole pipeline into a checklist.

Summary#

Domain adaptation tackles the most practical problem in transfer learning: training data and deployment data come from different distributions. The toolkit, in roughly increasing order of effort:

- AdaBN — recompute batch-norm statistics on target; free, no retraining, always try first.

- CORAL — match source and target covariance matrices; cheap, deterministic.

- MMD (DAN) — match kernel mean embeddings; stable, principled, multi-kernel default.

- DANN — adversarial domain alignment via the gradient reversal layer; one backward pass.

- CDAN / ADDA — more flexible variants for larger gaps.

- CycleGAN — pixel-level translation when feature alignment is not enough.

- Self-training — pseudo-labels with a confidence gate; the last few points of accuracy.

The Ben-David bound tells you what is possible: shrink the source error and the domain divergence, and target error follows — as long as the joint optimal error is small. If it is not, no amount of alignment will help; you need labels.

Next: Part 4, Few-Shot Learning, where we drop the assumption of abundant source data altogether and learn from a handful of examples per class.

References#

- Ganin et al. (2016). Domain-Adversarial Training of Neural Networks. JMLR. arXiv:1505.07818

- Long et al. (2015). Learning Transferable Features with Deep Adaptation Networks. ICML. arXiv:1502.02791

- Sun & Saenko (2016). Deep CORAL: Correlation Alignment for Deep Domain Adaptation. ECCV. arXiv:1607.01719

- Zhu et al. (2017). Unpaired Image-to-Image Translation using Cycle-Consistent Adversarial Networks (CycleGAN). ICCV. arXiv:1703.10593

- Tzeng et al. (2017). Adversarial Discriminative Domain Adaptation (ADDA). CVPR. arXiv:1702.05464

- Long et al. (2018). Conditional Adversarial Domain Adaptation (CDAN). NeurIPS. arXiv:1705.10667

- Ben-David et al. (2010). A Theory of Learning from Different Domains. Machine Learning.

- Li et al. (2017). Revisiting Batch Normalization for Practical Domain Adaptation (AdaBN). arXiv:1603.04779

- Gretton et al. (2012). A Kernel Two-Sample Test (MMD). JMLR.

- Lipton et al. (2018). Detecting and Correcting for Label Shift with Black Box Predictors. ICML. arXiv:1802.03916

- Sohn et al. (2020). FixMatch: Simplifying Semi-Supervised Learning with Consistency and Confidence. NeurIPS. arXiv:2001.07685
