Transfer Learning (5): Knowledge Distillation

Compress large teacher models into small student models without losing much accuracy. Covers dark knowledge, temperature scaling, response-based / feature-based / relation-based distillation, self-distillation, and a complete multi-strategy implementation.

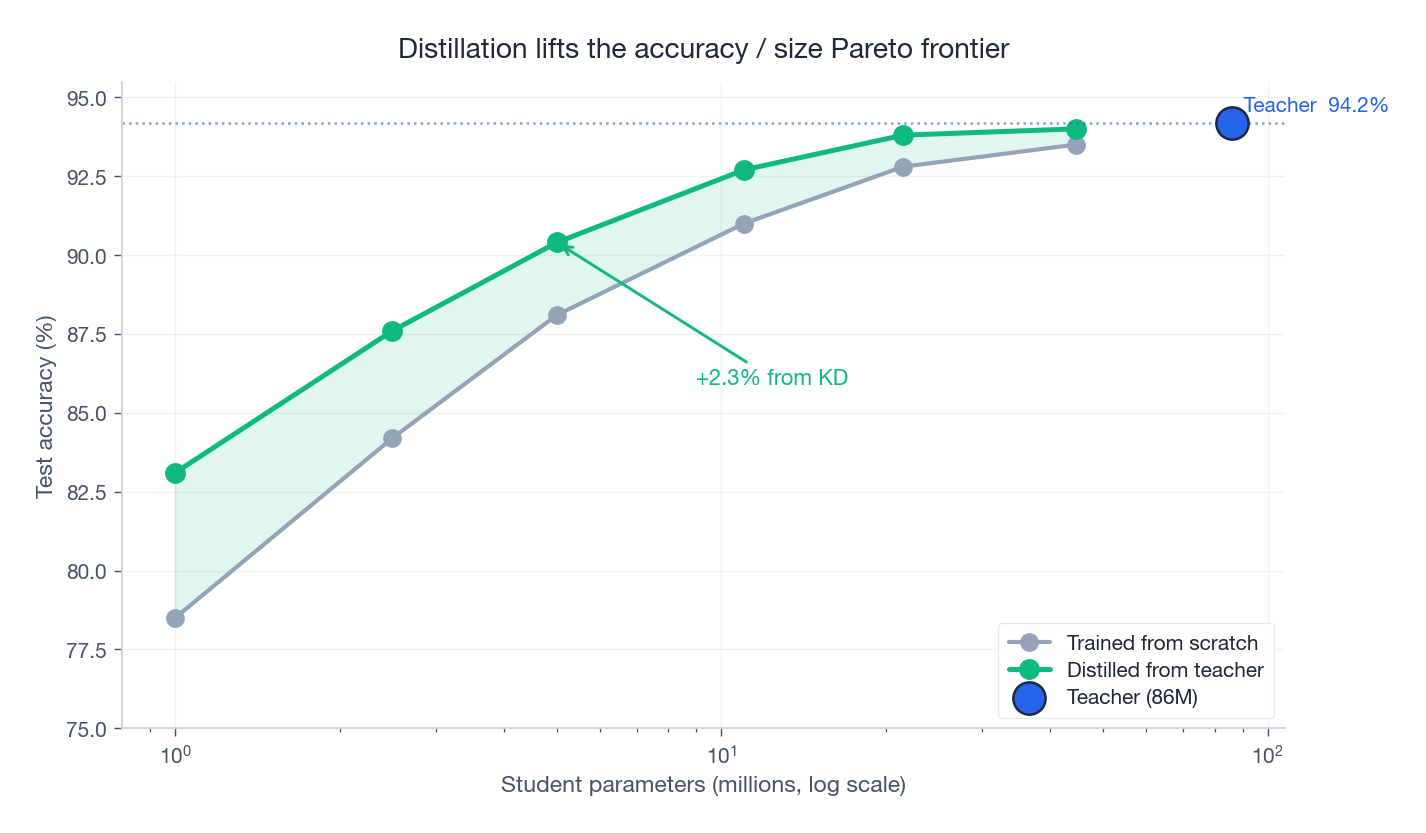

You have a 340M-parameter BERT model that hits 95% accuracy. The product team wants it on a phone that can barely fit 10M parameters. Training a 10M model from scratch lands at 85%. Knowledge distillation closes most of the gap: train the small model on the output distribution of the large one, not just on the labels, and you can reach 92%.

The key insight, due to Hinton, is that a teacher’s “wrong” predictions are not noise — they are information. When the teacher classifies a cat image and assigns 0.14 to “tiger”, 0.07 to “dog”, and 0.008 to “plane”, it is telling you that cats look a lot like tigers, somewhat like dogs, and nothing like aeroplanes. That structure — dark knowledge — is invisible in a one-hot label, and learning it is what lets the student punch above its weight.

What You Will Learn#

- Why soft labels carry more information per example than hard labels.

- Temperature scaling: a single knob that controls how much dark knowledge the teacher reveals.

- Three families of distillation: response, feature, relation.

- Self-distillation and mutual learning, both of which work without a pretrained teacher.

- Stacking distillation with quantisation and pruning to push compression past 10x.

- A clean PyTorch implementation that supports all five modes.

Prerequisites: Parts 1-2 of this series, basic PyTorch.

Why distillation works#

The deployment squeeze#

Large models excel on benchmarks but struggle on phones, cars, and cloud bills. Four constraints drive us toward smaller models:

- Memory: mobile and IoT devices simply cannot hold billions of parameters.

- Latency: an autonomous car needs a decision in milliseconds, not seconds.

- Cost: a model served a billion times a day costs real money per FLOP.

- Energy: an edge device runs on a battery, not a power plant.

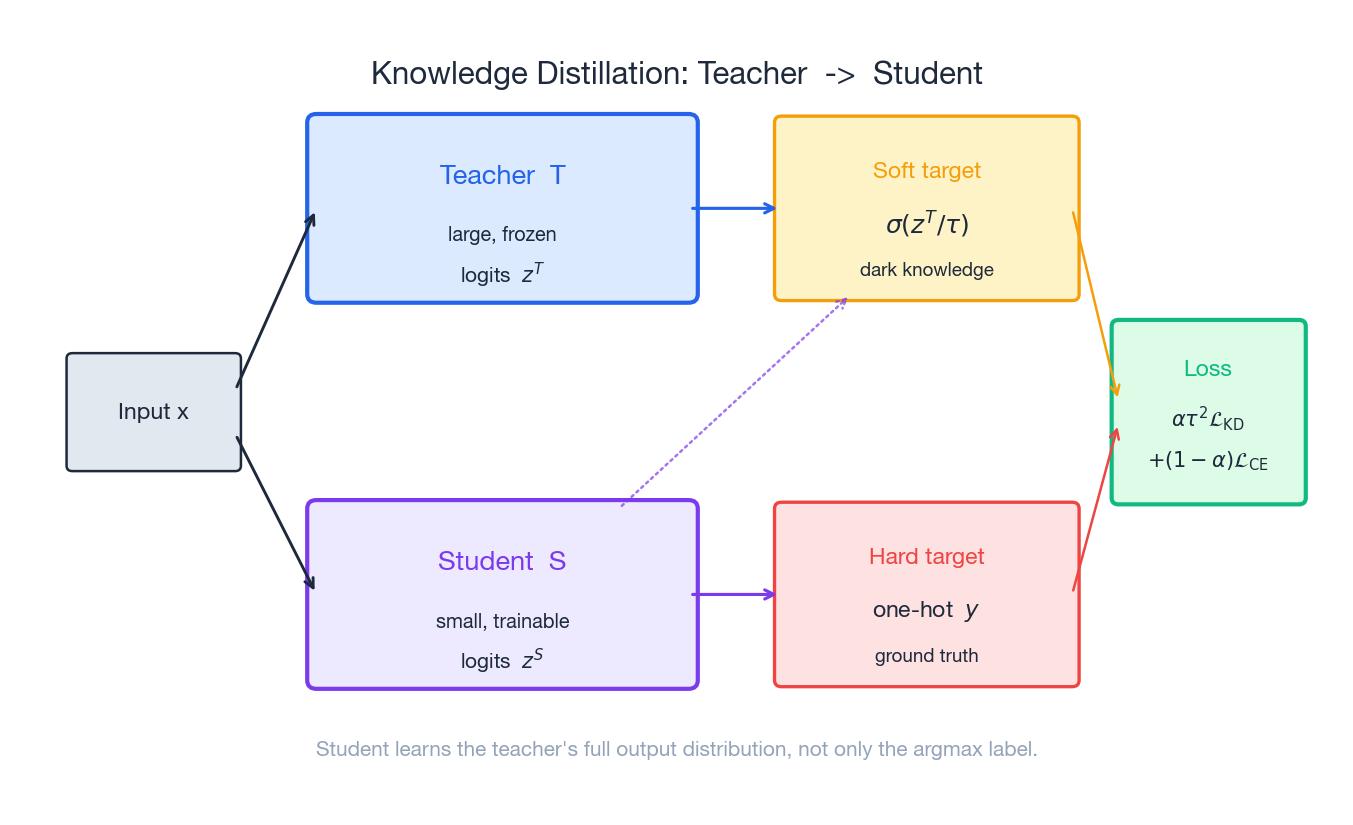

Pruning and quantisation directly reduce the model size but can decrease accuracy. Distillation takes a different approach: train a small student to imitate a large teacher’s output distribution, not just its argmax. The student absorbs the teacher’s learned behaviour, including its sense of which classes resemble each other, without inheriting its parameters.

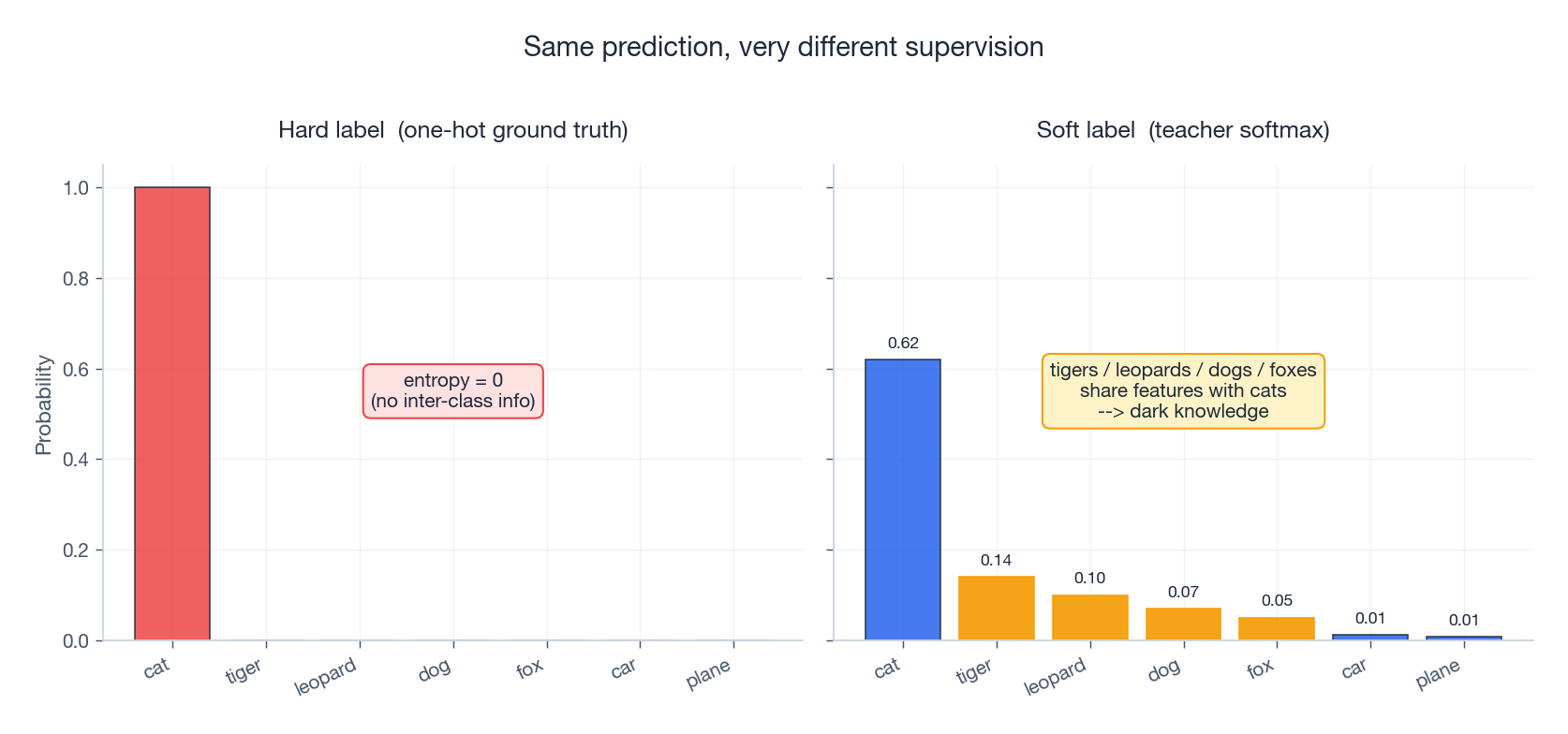

Dark knowledge: what soft labels actually teach#

Take a teacher classifying a cat image:

| Class | Hard label | Teacher output |
|---|---|---|
| Cat | 1.0 | 0.62 |
| Tiger | 0.0 | 0.14 |
| Leopard | 0.0 | 0.10 |
| Dog | 0.0 | 0.07 |
| Car | 0.0 | 0.012 |

The hard label says “cat” and nothing more (entropy zero). The soft label says “cat, but also tiger-ish, leopard-ish, faintly dog-ish, definitely not a car” (entropy positive). That ranking is a free lesson on inter-class similarity, drawn from millions of teacher updates.
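Here is a plain-Python check of the entropy claim, using only the standard library. The table’s teacher column sums to 0.942 because the remaining mass sits on classes the table omits. This sketch therefore renormalises over the five listed classes, an assumption made only for illustration.

```python
import math

classes = ["cat", "tiger", "leopard", "dog", "car"]
hard = [1.0, 0.0, 0.0, 0.0, 0.0]
# Teacher column from the table, renormalised over the listed classes
# (assumption: the 0.058 of mass on omitted classes is simply dropped).
teacher = [0.62, 0.14, 0.10, 0.07, 0.012]
soft = [p / sum(teacher) for p in teacher]


def entropy(p):
    """Shannon entropy in nats, treating 0 * log 0 as 0."""
    return sum(-pi * math.log(pi) for pi in p if pi > 0)


# Non-target classes ranked by how cat-like the teacher considers them
ranking = sorted(classes[1:], key=lambda c: soft[classes.index(c)], reverse=True)

print(entropy(hard))            # 0.0: the hard label says "cat" and nothing else
print(round(entropy(soft), 2))  # 1.05 nats (the 5-class maximum is ln 5, about 1.61)
print(ranking)                  # ['tiger', 'leopard', 'dog', 'car']
```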

Distribution matching, not label matching#

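Standard training minimises the cross-entropy between the one-hot label $y$ and the student’s softmax output:

$$\mathcal{L}_{\text{hard}} \;=\; -\sum_c y_c \log \sigma(z_c^S).$$

Distillation replaces the one-hot target with the teacher’s softened distribution at temperature $\tau$:

$$\mathcal{L}_{\text{KD}} \;=\; -\sum_c \sigma(z_c^T / \tau) \, \log \sigma(z_c^S / \tau).$$

Because the teacher is fixed, this is equivalent to minimising $\mathrm{KL}\!\left(\sigma(z^T/\tau) \,\|\, \sigma(z^S/\tau)\right)$. The two objectives differ only by the entropy of the teacher’s distribution, which does not depend on the student. The student is no longer learning a label; it is learning a probability distribution.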
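The equivalence is easy to confirm numerically. This standard-library sketch uses made-up logits, for illustration only. It checks that cross-entropy minus KL equals the teacher’s entropy for two different students, so the difference never depends on what the student predicts.

```python
import math


def softmax(logits, tau=1.0):
    """Temperature-scaled softmax, max-subtracted for numerical stability."""
    m = max(logits)
    exps = [math.exp((z - m) / tau) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(p, q):
    return sum(-pi * math.log(qi) for pi, qi in zip(p, q) if pi > 0)


def kl_divergence(p, q):
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def entropy(p):
    return sum(-pi * math.log(pi) for pi in p if pi > 0)


tau = 4.0
p_t = softmax([5.0, 3.0, 1.0], tau)  # fixed teacher
for student_logits in ([2.0, 1.5, 0.5], [0.0, 4.0, -1.0]):
    p_s = softmax(student_logits, tau)
    gap = cross_entropy(p_t, p_s) - kl_divergence(p_t, p_s)
    # The gap equals the teacher's entropy, identical for both students
    print(round(gap, 6), round(entropy(p_t), 6))
```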

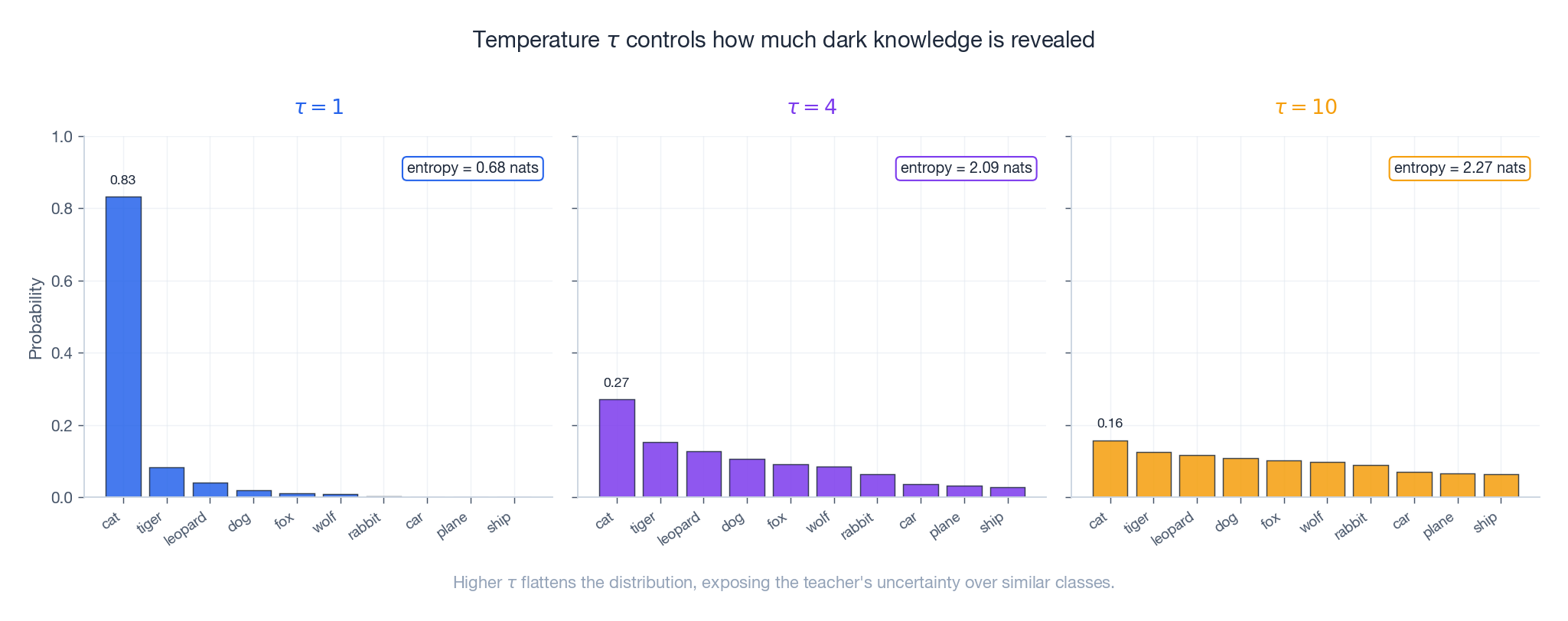

Temperature: a knob for dark knowledge#

The temperature $\tau$ divides the logits before the softmax:

$$\sigma(z_i; \tau) \;=\; \frac{\exp(z_i / \tau)}{\sum_j \exp(z_j / \tau)}.$$

| Temperature | Effect |
|---|---|
| $\tau \to 0$ | One-hot (argmax) |
| $\tau = 1$ | Standard softmax |
| $\tau \in [4, 10]$ | Reveals inter-class similarity |
| $\tau \to \infty$ | Uniform over all classes |

For logits $z = [5, 3, 1]$:

- $\tau = 1$: $[0.87, 0.12, 0.02]$. Class 3 is essentially gone.

- $\tau = 3$: $[0.56, 0.29, 0.15]$. Class 3 is back in play.

A higher temperature shrinks the gaps between probabilities. The student can then learn the relative magnitudes of the teacher’s logits without the exponential’s distortion.
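A few lines of standard-library Python compute the temperature-scaled softmax for these logits directly, rounded to two decimals. `softmax_t` is a hypothetical helper name. The extra temperatures 0.1 and 100 are added only to show the two limits in the table.

```python
import math


def softmax_t(logits, tau):
    """sigma(z_i; tau) = exp(z_i / tau) / sum_j exp(z_j / tau)."""
    m = max(logits)  # subtracting the max leaves the result unchanged but avoids overflow
    exps = [math.exp((z - m) / tau) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


z = [5.0, 3.0, 1.0]
for tau in (0.1, 1.0, 3.0, 100.0):
    print(tau, [round(p, 2) for p in softmax_t(z, tau)])
# 0.1 [1.0, 0.0, 0.0]       near one-hot
# 1.0 [0.87, 0.12, 0.02]    standard softmax
# 3.0 [0.56, 0.29, 0.15]    class 3 back in play
# 100.0 [0.34, 0.33, 0.33]  close to uniform
```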

The combined loss#

The training objective blends the two losses:

$$\mathcal{L} \;=\; \alpha \cdot \tau^2 \cdot \mathcal{L}_{\text{KD}} \;+\; (1 - \alpha) \cdot \mathcal{L}_{\text{hard}}.$$

- $\alpha \in [0.5, 0.9]$: how much you trust the teacher.

- $\tau^2$: compensates for the gradient shrinkage at high temperature. The soft-target gradient scales as $1/\tau^2$, so we multiply the loss by $\tau^2$ to keep the two terms comparable.

- $\mathcal{L}_{\text{hard}}$: standard cross-entropy with the true label.

Sensible defaults: $\tau = 4$, with $\alpha = 0.9$ when the teacher is much stronger than the student and $\alpha = 0.5$ when they are close in capacity.
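Below is a minimal single-example sketch of the combined loss, in plain Python rather than PyTorch. It assumes the KL form of the soft term, which differs from $\mathcal{L}_{\text{KD}}$ only by the teacher’s entropy, so the gradients are identical. `soft_grad` uses the closed-form gradient of the soft cross-entropy with respect to the student logits, $(q_S - p_T)/\tau$, to check the $1/\tau^2$ claim. The logits and helper names are made up.

```python
import math


def softmax(logits, tau=1.0):
    """Temperature-scaled softmax, max-subtracted for numerical stability."""
    m = max(logits)
    exps = [math.exp((z - m) / tau) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kd_loss(student_logits, teacher_logits, label, tau=4.0, alpha=0.9):
    """alpha * tau^2 * KL(p_T || p_S) + (1 - alpha) * CE(label, student at tau = 1)."""
    p_t = softmax(teacher_logits, tau)
    p_s = softmax(student_logits, tau)
    kl = sum(pt * math.log(pt / ps) for pt, ps in zip(p_t, p_s) if pt > 0)
    ce = -math.log(softmax(student_logits)[label])
    return alpha * tau ** 2 * kl + (1 - alpha) * ce


def soft_grad(student_logits, teacher_logits, tau):
    """d/dz_S of the soft cross-entropy at temperature tau: (q_S - p_T) / tau."""
    p_t = softmax(teacher_logits, tau)
    q_s = softmax(student_logits, tau)
    return [(q - p) / tau for q, p in zip(q_s, p_t)]


student, teacher = [1.0, 0.0, 0.0], [3.0, 1.0, 0.0]
print(round(kd_loss(student, teacher, label=0), 4))
for tau in (2.0, 20.0):
    g = max(abs(v) for v in soft_grad(student, teacher, tau))
    print(f"tau={tau}: raw {g:.4f}, tau^2-scaled {tau ** 2 * g:.2f}")
```

With these logits, the raw gradient is roughly 100x smaller at $\tau = 20$ than at $\tau = 2$. The $\tau^2$-scaled values stay close (about 0.35 and 0.34), which is the point of the $\tau^2$ factor.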

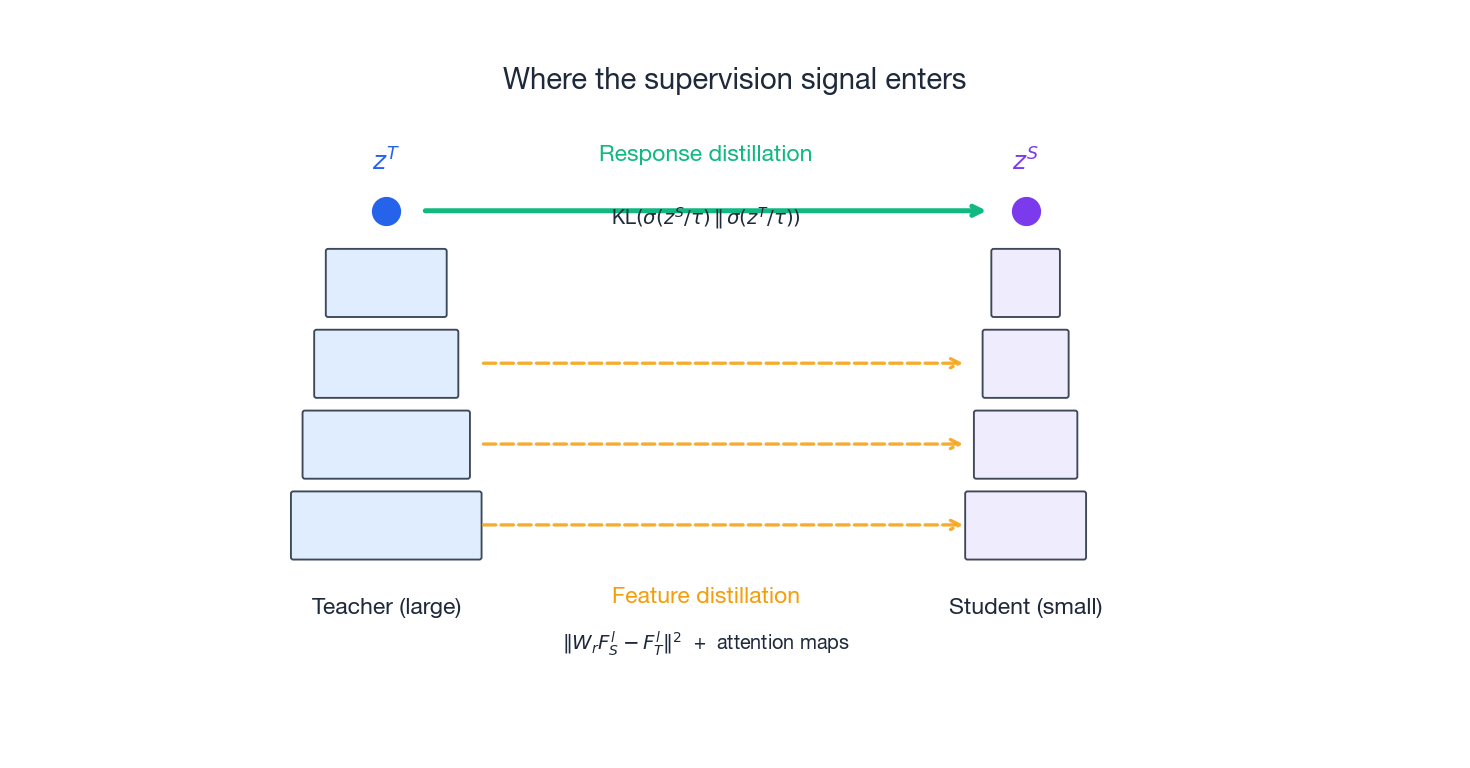

Response-based distillation#

The classic recipe — match the teacher’s output layer and nothing else.

Hinton’s algorithm#

- Train the teacher $T$ on the full dataset.

- Compute soft labels: for each input $x$, store $\sigma(z^T(x) / \tau)$.

- Train the student $S$ on the combined loss above.

- Deploy the student with $\tau = 1$.

Representative ImageNet numbers:

| Setup | Top-1 |
|---|---|
| ResNet-34 teacher | 73.3% |
| ResNet-18 from scratch | 69.8% |
| ResNet-18 distilled | 71.4% (+1.6) |

A free 1.6 points for changing the loss function.

Distillation versus label smoothing#

Label smoothing also softens the target, mixing the one-hot label with a uniform distribution over the $C$ classes:

$$y_c' \;=\; (1 - \epsilon) \, y_c + \epsilon / C,$$

where $\epsilon$ sets the smoothing strength. The difference is where the softness comes from. Label smoothing applies the same uniform smoothing to every example. Distillation applies a per-example soft distribution drawn from the teacher. A photo of a Persian cat gets weight on “tiger” and “leopard”; a photo of a sedan gets weight on “truck” and “wagon”. That per-example structure is why distillation typically outperforms label smoothing.
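This small sketch puts the label-smoothing formula next to the teacher’s output from the dark-knowledge table. It is plain Python. `label_smooth` is a hypothetical helper, and $\epsilon = 0.1$ is an arbitrary choice.

```python
def label_smooth(label, num_classes, eps=0.1):
    """y'_c = (1 - eps) * y_c + eps / C, with y the one-hot vector for `label`."""
    return [(1 - eps) * (1.0 if c == label else 0.0) + eps / num_classes
            for c in range(num_classes)]


classes = ["cat", "tiger", "leopard", "dog", "car"]
smoothed = label_smooth(0, len(classes), eps=0.1)
# Teacher output for the same cat image, from the dark-knowledge table
teacher = [0.62, 0.14, 0.10, 0.07, 0.012]

for name, s, t in zip(classes[1:], smoothed[1:], teacher[1:]):
    print(f"{name:8s} smoothed={s:.3f} teacher={t:.3f}")
```

Every non-target class gets the same 0.020 under smoothing. The teacher, by contrast, ranks tiger (0.140) well above car (0.012).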

Feature-based distillation#

Response-based KD only matches the top layer. Feature-based KD also matches the intermediate representations — richer signal, more places for the student to absorb the teacher’s geometry.

FitNets: hint learning#

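FitNets adds a hint loss that matches an intermediate student layer to a chosen intermediate teacher layer $l$:

$$\mathcal{L}_{\text{hint}} \;=\; \| W_r \, F_S^l - F_T^l \|_F^2,$$

where $W_r$ is a learnable 1x1 projection that aligns the student’s channel dimension to the teacher’s. Romero et al. trained this in two stages: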

- Train the student’s lower layers (up to the guided layer) together with the projection to match the teacher’s chosen “hint” layer.

- Use those weights to initialise the student, then train the whole student with standard distillation.
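Here is a dependency-free sketch of the hint loss. It assumes feature maps stored as nested lists of shape channels × height × width, and $W_r$ implemented as a bias-free 1x1 convolution (a per-position linear map over channels). The shapes and values are hypothetical. In practice $W_r$ is a trainable conv layer, and the FeatureDistillLoss module listed later averages the squared error (MSE) instead of summing it.

```python
def conv1x1(weight, features):
    """1x1 convolution without bias: a per-position linear map over channels.

    weight:   C_out x C_in nested list (the learnable W_r)
    features: C_in channels, each an H x W nested list
    returns:  C_out channels, each H x W
    """
    c_in = len(features)
    h, w = len(features[0]), len(features[0][0])
    return [[[sum(weight[o][i] * features[i][y][x] for i in range(c_in))
              for x in range(w)]
             for y in range(h)]
            for o in range(len(weight))]


def hint_loss(w_r, f_student, f_teacher):
    """Squared Frobenius norm ||W_r F_S - F_T||_F^2 over all channels and positions."""
    projected = conv1x1(w_r, f_student)
    return sum((p - t) ** 2
               for p_ch, t_ch in zip(projected, f_teacher)
               for p_row, t_row in zip(p_ch, t_ch)
               for p, t in zip(p_row, t_row))


# Hypothetical shapes: 2-channel student hint layer, 3-channel teacher layer, 2x2 spatial
f_s = [[[1.0, 2.0], [3.0, 4.0]], [[0.0, 1.0], [1.0, 0.0]]]
w_true = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
f_t = conv1x1(w_true, f_s)                    # a teacher layer the projection can match exactly
print(hint_loss(w_true, f_s, f_t))            # 0.0
print(hint_loss([[0.0, 0.0]] * 3, f_s, f_t))  # 74.0 for an untrained (zero) projection
```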

Attention transfer#

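Attention transfer collapses a feature map $F$ with channels $F_c$ into a single spatial attention map,

$$A(F) \;=\; \sum_c |F_c|^p, \quad p = 2,$$

and matches the normalised maps at a set of layers $l$:

$$\mathcal{L}_{\text{AT}} \;=\; \sum_l \left\| \frac{A_S^l}{\|A_S^l\|_2} - \frac{A_T^l}{\|A_T^l\|_2} \right\|_2^2.$$

Because the channel dimension is summed out, student and teacher only need matching spatial sizes, not matching channel counts. On CIFAR-10, distilling ResNet-110 into ResNet-20: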

| Method | Acc |
|---|---|
| ResNet-20 baseline | 91.3% |
| Response only | 91.8% |
| Attention transfer | 92.4% |
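The sketch below implements attention transfer with $p = 2$ in plain Python. For readability it assumes the same channels × height × width nested-list layout. Summing over channels is what lets a 1-channel student match a 2-channel teacher without any projection. The layers and values are made up.

```python
import math


def attention_map(features, p=2):
    """A(F) = sum_c |F_c|^p, flattened over positions and L2-normalised.

    features: list of C channels, each an H x W nested list.
    """
    h, w = len(features[0]), len(features[0][0])
    a = [sum(abs(ch[y][x]) ** p for ch in features)
         for y in range(h) for x in range(w)]
    norm = math.sqrt(sum(v * v for v in a)) or 1.0  # guard against all-zero maps
    return [v / norm for v in a]


def at_loss(student_layers, teacher_layers, p=2):
    """Sum over matched layers of the squared L2 distance between normalised maps."""
    total = 0.0
    for f_s, f_t in zip(student_layers, teacher_layers):
        a_s, a_t = attention_map(f_s, p), attention_map(f_t, p)
        total += sum((s - t) ** 2 for s, t in zip(a_s, a_t))
    return total


# A 2-channel teacher layer and a 1-channel student layer with the same spatial focus
teacher = [[[1.0, 0.0], [0.0, 2.0]], [[1.0, 0.0], [0.0, 2.0]]]
student = [[[3.0, 0.0], [0.0, 6.0]]]
print(at_loss([student], [teacher]))  # ~0: same normalised attention, different channel counts
```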

Gram matrix distillation (NST)#

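Borrowing from neural style transfer, reshape a feature map into $F \in \mathbb{R}^{HW \times C}$ (one row per spatial position, one column per channel) and form the Gram matrix

$$G \;=\; F^\top F,$$

so $G_{ij}$ captures the correlation between channels $i$ and $j$, summed over all positions. This is a second-order statistic (“texture” rather than “content”) that pointwise matching cannot capture.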
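This is a sketch of the Gram matrix and a Gram-matching loss, in plain Python with hypothetical names. Swapping spatial rows leaves $G$ unchanged, which is the sense in which it captures texture rather than layout. Comparing $G_S$ with $G_T$ assumes equal channel counts. Otherwise a projection such as FitNets’ $W_r$ would be needed first.

```python
def gram(features):
    """G = F^T F with F reshaped to (H*W, C): G[i][j] sums F_i * F_j over positions."""
    flat = [[v for row in ch for v in row] for ch in features]  # C vectors of length H*W
    return [[sum(a * b for a, b in zip(f_i, f_j)) for f_j in flat] for f_i in flat]


def gram_loss(f_student, f_teacher):
    """Squared Frobenius distance between Gram matrices (needs equal channel counts)."""
    g_s, g_t = gram(f_student), gram(f_teacher)
    return sum((a - b) ** 2 for r_s, r_t in zip(g_s, g_t) for a, b in zip(r_s, r_t))


f = [[[1.0, 2.0], [3.0, 4.0]], [[0.0, 1.0], [1.0, 0.0]]]
f_shuffled = [[[3.0, 4.0], [1.0, 2.0]], [[1.0, 0.0], [0.0, 1.0]]]  # spatial rows swapped
print(gram(f))                   # [[30.0, 5.0], [5.0, 2.0]]
print(gram_loss(f, f_shuffled))  # 0.0: rearranging positions leaves G unchanged
```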

Relation-based distillation#

Match the relationships between samples, not the samples themselves.

RKD: relational knowledge distillation#

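There are two flavours, and both compare student and teacher relations with the Huber loss $\ell_\delta$. The distance-wise loss matches pairwise distances between embeddings, each normalised by the mean pairwise distance in the batch:

$$\mathcal{L}_{\text{dist}} \;=\; \sum_{(i,j)} \ell_\delta\!\left(d_S(i,j),\, d_T(i,j)\right).$$

The angle-wise loss matches the angle formed at sample $j$ by samples $i$ and $k$ (in practice, its cosine):

$$\mathcal{L}_{\text{angle}} \;=\; \sum_{(i,j,k)} \ell_\delta\!\left(\angle_S(i,j,k),\, \angle_T(i,j,k)\right).$$

Empirically, angles matter more than distances ($\lambda_{\text{angle}} = 2$, $\lambda_{\text{dist}} = 1$). An angle is a higher-order relation among three samples and captures relative geometry that pairwise distances miss.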
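Here is a small sketch of both RKD terms. It assumes the Huber loss with $\delta = 1$, distances normalised by their batch mean, angles compared through their cosines, and the 2:1 angle-to-distance weighting quoted above. Embeddings are plain lists, and the example values are made up.

```python
import math


def huber(a, b, delta=1.0):
    """Huber loss l_delta between two scalars."""
    d = abs(a - b)
    return 0.5 * d * d if d <= delta else delta * (d - 0.5 * delta)


def pairwise_dists(emb):
    """Distances for i < j, normalised by their mean."""
    n = len(emb)
    d = {(i, j): math.dist(emb[i], emb[j]) for i in range(n) for j in range(i + 1, n)}
    mu = sum(d.values()) / len(d)
    return {k: v / mu for k, v in d.items()}


def cos_angle(emb, i, j, k):
    """Cosine of the angle at sample j formed by samples i and k."""
    e_ij = [a - b for a, b in zip(emb[i], emb[j])]
    e_kj = [a - b for a, b in zip(emb[k], emb[j])]
    return sum(a * b for a, b in zip(e_ij, e_kj)) / (math.hypot(*e_ij) * math.hypot(*e_kj))


def rkd_loss(student, teacher, w_dist=1.0, w_angle=2.0):
    ds, dt = pairwise_dists(student), pairwise_dists(teacher)
    l_dist = sum(huber(ds[key], dt[key]) for key in dt)
    n = len(teacher)
    triplets = [(i, j, k) for i in range(n) for j in range(n) for k in range(n)
                if len({i, j, k}) == 3]
    l_angle = sum(huber(cos_angle(student, *t), cos_angle(teacher, *t)) for t in triplets)
    return w_dist * l_dist + w_angle * l_angle


teacher = [[0.0, 0.0], [1.0, 0.0], [0.0, 2.0], [3.0, 1.0]]
# The student lives in 3-D and is 3x larger, but has the same relative geometry
student = [[0.0, 0.0, 0.0], [3.0, 0.0, 0.0], [0.0, 6.0, 0.0], [9.0, 3.0, 0.0]]
print(rkd_loss(student, teacher))  # ~0: RKD ignores scale and embedding dimension
```

Both relations are normalised. A student whose embeddings are a scaled copy of the teacher’s, even in a different dimension, therefore incurs numerically zero loss.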

CRD: contrastive representation distillation#

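CRD frames distillation as contrastive learning. The student’s feature for input $x$ should score higher against the teacher’s feature for the same $x$ than against the teacher’s features for other inputs $x'$:

$$\mathcal{L}_{\text{CRD}} \;=\; -\log \frac{\exp\!\left(f_S(x)^\top f_T(x) / \tau\right)}{\sum_{x'} \exp\!\left(f_S(x)^\top f_T(x') / \tau\right)},$$

where the sum over $x'$ includes $x$ itself. Minimising this loss maximises a lower bound on the mutual information between student and teacher features. For very small students (e.g. ResNet-8 distilled from ResNet-32), CRD beats response-only KD by 2% or more on CIFAR-100.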

Self-distillation: no separate teacher#

What if you have no big teacher? You can still distil.

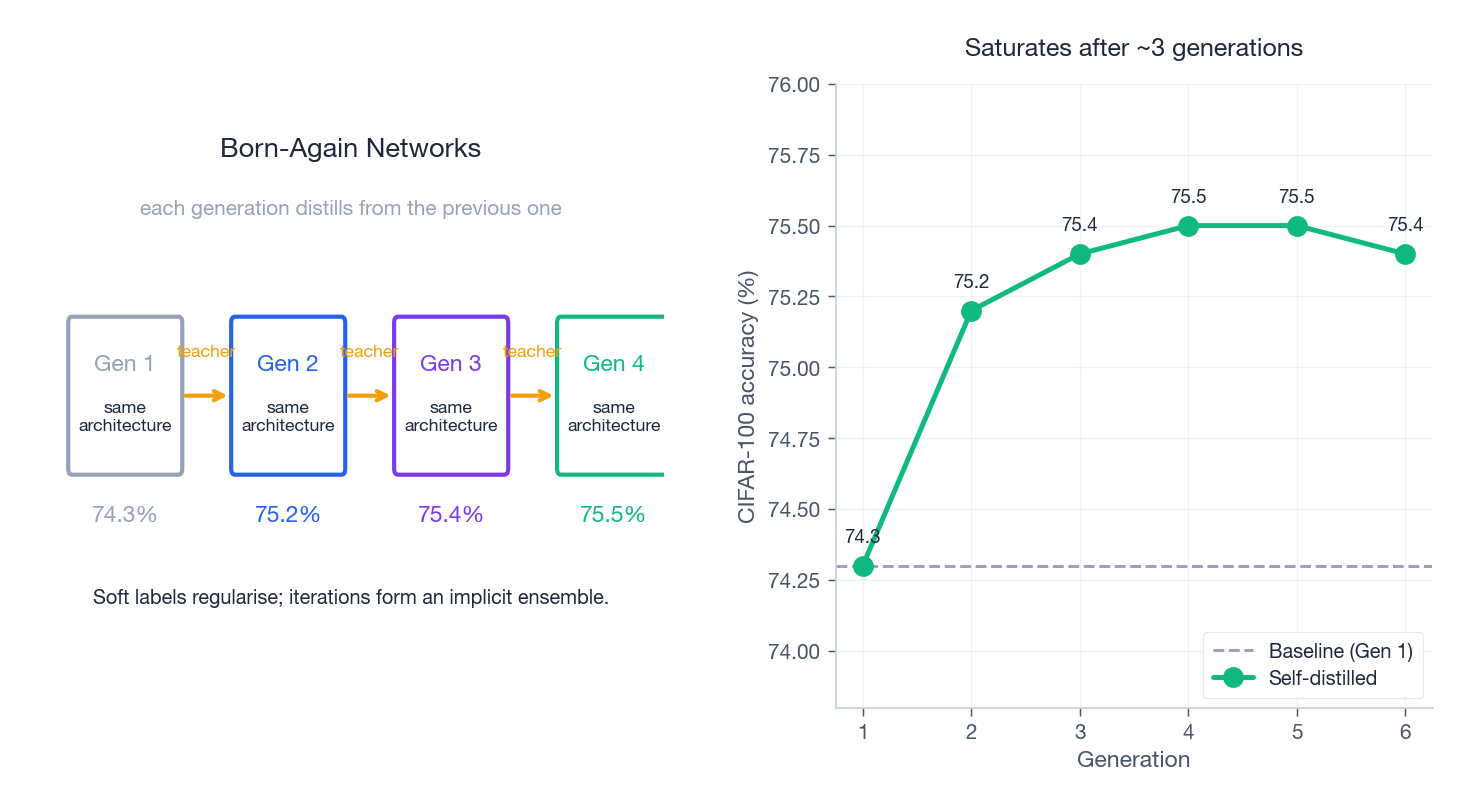

Born-Again Networks#

A surprising finding: distilling a model into an identical-architecture copy improves accuracy.

- Train $M_1$ normally.

- Use $M_1$ as teacher for $M_2$ (same architecture).

- Use $M_2$ as teacher for $M_3$.

- Stop when accuracy saturates.

CIFAR-100 numbers:

| Generation | Acc |
|---|---|
| 1 (baseline) | 74.3% |
| 2 (BAN) | 75.2% |
| 3 | 75.4% |
| 4 | 75.5% |

Two complementary explanations: soft labels supply smoother gradients (reducing overfitting), and each generation explores a different region of the loss landscape, giving you an implicit ensemble at no inference cost.

Deep mutual learning#

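Deep mutual learning trains a cohort of $M$ peer networks together. Each peer $i$ minimises its own cross-entropy plus the average KL divergence between each other peer’s predictions $P_j$ and its own predictions $P_i$:

$$\mathcal{L}_i \;=\; \mathcal{L}_{\text{CE}}^i + \frac{1}{M-1} \sum_{j \neq i} \mathrm{KL}\!\left(P_j \,\|\, P_i\right).$$

No pretraining is required. Different random seeds make different errors; mutual supervision lets each model absorb the others’ strengths. On CIFAR-100, two ResNet-32s trained jointly each reach 72.1% versus 70.2% trained alone.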
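Here is a per-example sketch of the mutual-learning objective for a cohort of peers, in plain Python with hypothetical names and made-up predictions. Each peer’s loss adds its own cross-entropy to the average $\mathrm{KL}(P_j \,\|\, P_i)$ over the other peers.

```python
import math


def kl(p, q):
    """KL(p || q) for discrete distributions."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def dml_losses(peer_probs, label):
    """Loss for each peer i: CE with the label + mean over j != i of KL(P_j || P_i).

    During training, P_j is treated as a fixed target when updating peer i.
    """
    m = len(peer_probs)
    losses = []
    for i, p_i in enumerate(peer_probs):
        ce = -math.log(p_i[label])
        mimicry = sum(kl(p_j, p_i) for j, p_j in enumerate(peer_probs) if j != i) / (m - 1)
        losses.append(ce + mimicry)
    return losses


peers = [[0.7, 0.2, 0.1], [0.4, 0.4, 0.2]]  # two peers' predictions for one example
print(dml_losses(peers, label=0))
```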

Distillation + compression#

Distillation composes well with the other compression tools.

Quantisation-aware distillation#

Quantising weights from FP32 to INT8 cuts their memory by 4x and can run 2-4x faster on supporting hardware, but it costs accuracy. Distillation recovers most of the loss:

| ResNet-18 on ImageNet | Top-1 |
|---|---|
| FP32 baseline | 69.8% |
| INT8, no KD | 68.5% (-1.3) |
| INT8, with KD | 69.2% (-0.6) |

Distillation cuts the quantisation cost in half.
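To see where the INT8 accuracy loss comes from, here is a sketch of symmetric per-tensor quantisation. This is one common scheme, used here as an assumption; real toolkits also offer per-channel scales and calibration. Each weight is stored as one byte instead of four, and the round-trip error is at most half a quantisation step.

```python
def quantise_int8(weights):
    """Symmetric per-tensor INT8: w ~ scale * q with integer q in [-127, 127]."""
    scale = max(abs(w) for w in weights) / 127 or 1.0  # fall back to 1.0 for all-zero input
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantise(q, scale):
    return [scale * v for v in q]


weights = [0.51, -1.27, 0.003, 0.88, -0.42]  # made-up FP32 weights
q, scale = quantise_int8(weights)
errors = [abs(w - r) for w, r in zip(weights, dequantise(q, scale))]
print(q)                         # [51, -127, 0, 88, -42]
print(max(errors) <= scale / 2)  # True: rounding error is at most half a step
```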

Pruning-aware distillation#

After pruning the lowest-importance channels, fine-tune with the teacher’s soft labels:

| VGG-16 on CIFAR-10 | Acc | Params |
|---|---|---|
| Original | 93.5% | 14.7M |
| 70% pruned, no KD | 92.1% | 4.4M |
| 70% pruned, with KD | 93.0% | 4.4M |

A practical pipeline: train the teacher, prune to define the student structure, distil to recover accuracy, then quantise. Applied carefully, this pipeline can reach 10-20x compression with under 1% accuracy loss.
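The compression arithmetic behind that pipeline can be worked through with the VGG-16 row from the table. The table does not quantise the pruned model, so the INT8 step here is an assumption layered on top.

```python
FP32_BYTES, INT8_BYTES = 4, 1

original_bytes = 14.7e6 * FP32_BYTES  # VGG-16 from the table, stored in FP32
pruned_bytes = 4.4e6 * FP32_BYTES     # 70% of the parameters pruned, still FP32
final_bytes = 4.4e6 * INT8_BYTES      # assumption: the pruned model is then quantised to INT8

print(round(original_bytes / pruned_bytes, 1))  # 3.3x from pruning alone
print(round(original_bytes / final_bytes, 1))   # 13.4x after pruning + INT8
```

Pruning alone gives about 3.3x. Adding INT8 on top lands at roughly 13.4x, inside the 10-20x range quoted above.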

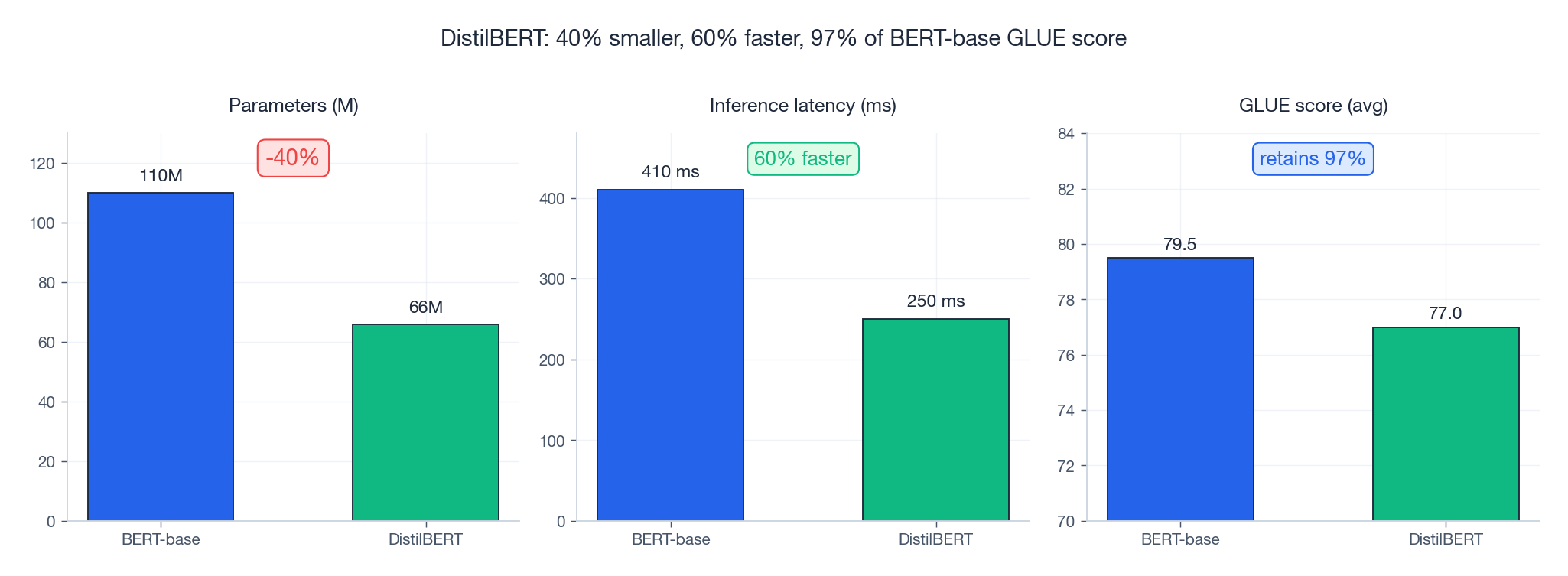

Case study: DistilBERT#

Sanh et al. (2019) distilled BERT-base into DistilBERT using a triple loss (cosine + MLM + KD), giving the canonical headline result:

| | BERT-base | DistilBERT | Delta |
|---|---|---|---|
| Parameters | 110M | 66M | -40% |
| Inference time (full STS-B pass, CPU, batch size 1) | 668 s | 410 s | -39% |
| GLUE (avg) | 79.5 | 77.0 | -3% |

Three numbers that, more than anything else, made distillation production-grade in NLP.

Complete implementation#


What each piece does#

| Module | Role |
|---|---|
| KDLoss | Soft KL with temperature, blended with hard CE. |
| FeatureDistillLoss | FitNets: 1x1 projection + MSE on feature maps. |
| AttentionTransferLoss | Channel-aggregated, normalised spatial attention. |
| RelationalLoss | RKD distance + angle relations between samples. |
| DistillationTrainer | One trainer for response / feature / attention / relation / combined. |
| self_distill | Born-Again Networks: iterative same-architecture distillation. |

Temperature Scheduling Strategies#

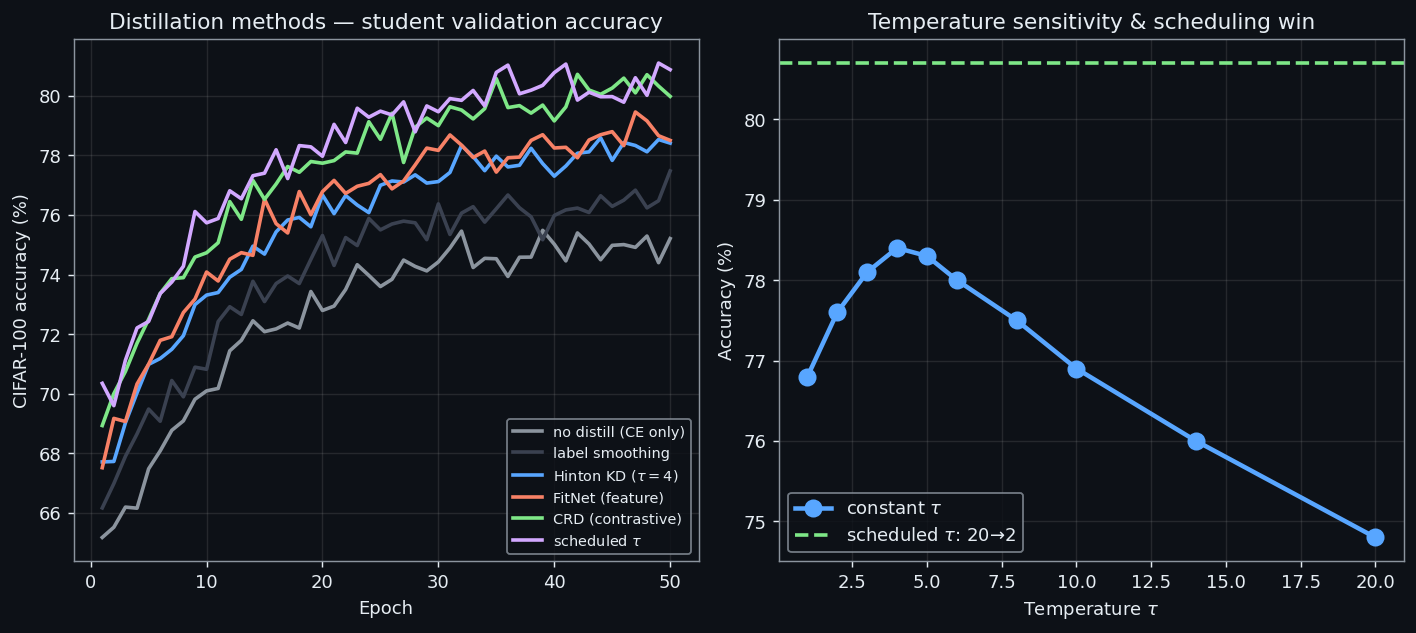

A constant $\tau = 4$ is the textbook default, and it works. But the temperature controls what the student is being asked to learn, and that target shifts as training progresses. Early in training the student knows nothing — it benefits most from seeing the teacher’s full ranking over classes. Late in training the student has internalised the geometry and needs to commit to confident predictions. One scalar cannot serve both regimes.

A linear schedule over epochs $t = 0, \dots, T$,

$$\tau(t) \;=\; \tau_{\text{start}} - (\tau_{\text{start}} - \tau_{\text{end}}) \cdot \frac{t}{T},$$

sweeps from a high value (flat distribution, lots of dark knowledge) to a low value (peaked distribution, decisive supervision). With $\tau_{\text{start}} = 20$ and $\tau_{\text{end}} = 2$ over $T$ epochs, the student spends its first epochs learning relative class similarities and its last epochs sharpening into a classifier.

Why this helps, mechanically. At high $\tau$ the softmax is approximately linear in the logits, so the KL gradient on the student’s logits is dominated by the differences between teacher logits — the relational structure. At low $\tau$ the softmax is approximately one-hot, so the KL collapses toward standard cross-entropy on the teacher’s argmax — a hard but noise-free target.
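Here is a sketch of the linear schedule as a helper. `linear_tau` is a hypothetical name, and its defaults mirror the 20 to 2 example. Clamping keeps the value at $\tau_{\text{end}}$ if training runs past $T$.

```python
def linear_tau(epoch, total_epochs, tau_start=20.0, tau_end=2.0):
    """tau(t) = tau_start - (tau_start - tau_end) * t / T, clamped to [tau_end, tau_start]."""
    frac = min(max(epoch / total_epochs, 0.0), 1.0)
    return tau_start - (tau_start - tau_end) * frac


T = 100
print([linear_tau(t, T) for t in (0, 25, 50, 75, 100)])  # [20.0, 15.5, 11.0, 6.5, 2.0]
```

In a training loop you would call it once per epoch. Use the result both as the softmax temperature and in the $\tau^2$ factor of the combined loss.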


CIFAR-10 numbers, ResNet-18 teacher distilled into a ResNet-8 student over 100 epochs:

| Schedule | Test accuracy |
|---|---|
| Constant $\tau = 4$ | 88.1% |
| Linear $20 \to 2$ | 89.4% |
| Linear $50 \to 4$ | 89.7% |

A free 1.3-1.6 points for replacing a scalar with a function. The schedule does most of its work in the first half of training, where the gap to the constant baseline opens; the last 20 epochs at low $\tau$ mainly stabilise.

One caveat. Push $\tau_{\text{start}}$ much past 50 and the soft target becomes nearly uniform. The class probabilities then carry almost no contrast, and the KD term degenerates toward plain logit matching, the high-temperature limit analysed by Hinton et al. In practice, early epochs can stall or oscillate. If you want a hot start, cap it around $\tau = 30$ and stretch the schedule.

Bridge: Temperature shapes how the student listens to the teacher’s logits. The next move is to change what it listens to — features, and the geometry between them.

CRD: Contrastive Representation Distillation#

Feature-based KD as we have seen it — FitNets, attention transfer — matches the student’s features to the teacher’s pointwise. That is a strong constraint, and a wasteful one: it asks the student to reproduce the teacher’s exact activations, even when only the relational structure matters for downstream classification.

Contrastive Representation Distillation (CRD) loosens the constraint. Instead of matching $f_S(x)$ to $f_T(x)$ directly, it pulls the student and teacher representations of the same input closer together than student-teacher pairs built from different inputs. The teacher’s representation of input $x$ becomes the positive, and the teacher’s representations of other inputs become negatives. In-batch negatives are the simple version; Tian et al. draw many more from a memory bank.

CRD minimises a contrastive (InfoNCE-style) loss on projected features:

$$\mathcal{L}_{\text{CRD}} \;=\; -\log \frac{\exp\!\left(s(z_s, z_t) / \tau\right)}{\exp\!\left(s(z_s, z_t) / \tau\right) + \sum_{j} \exp\!\left(s(z_s, z_j^-) / \tau\right)},$$

where $s(\cdot, \cdot)$ is cosine similarity, $z_s = g_S(f_S(x))$, $z_t = g_T(f_T(x))$, and each $z_j^-$ is the teacher embedding of a different input. $g_S$ and $g_T$ are small projection heads (one nonlinear layer is enough). Minimising this loss maximises a lower bound on the mutual information between student and teacher representations.
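Below is a minimal plain-Python sketch of the contrastive loss with in-batch negatives. It assumes the projection heads $g_S, g_T$ have already been applied, so the inputs are the embeddings themselves. It uses the standard InfoNCE form, with the positive included in the denominator. Tian et al.’s implementation uses an NCE objective with a memory bank, so treat this as a simplification. `info_nce`, `batch_crd_loss` and $\tau = 0.1$ are assumptions.

```python
import math


def cosine(u, v):
    """Cosine similarity s(u, v)."""
    return sum(a * b for a, b in zip(u, v)) / (math.hypot(*u) * math.hypot(*v))


def info_nce(z_s, z_t, negatives, tau=0.1):
    """-log( e^{s(z_s,z_t)/tau} / (e^{s(z_s,z_t)/tau} + sum_j e^{s(z_s,z_j^-)/tau}) )."""
    logits = [cosine(z_s, z_t) / tau] + [cosine(z_s, z_n) / tau for z_n in negatives]
    m = max(logits)  # log-sum-exp with the max subtracted, for stability
    log_denominator = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_denominator - logits[0]


def batch_crd_loss(student_batch, teacher_batch, tau=0.1):
    """In-batch version: teacher embedding i is the positive for student i, the rest negatives."""
    total = 0.0
    for i, z_s in enumerate(student_batch):
        negatives = [z_t for j, z_t in enumerate(teacher_batch) if j != i]
        total += info_nce(z_s, teacher_batch[i], negatives, tau)
    return total / len(student_batch)


teacher = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
aligned = [row[:] for row in teacher]                           # student agrees with teacher
shuffled = [[0.0, 1.0, 0.0], [0.0, 0.0, 1.0], [1.0, 0.0, 0.0]]  # positives misaligned
print(batch_crd_loss(aligned, teacher))   # close to 0
print(batch_crd_loss(shuffled, teacher))  # about 10, i.e. 1 / tau
```

When each student embedding points at its own teacher embedding, the loss is close to zero. Permuting the student batch so the positives no longer line up pushes it to about $1/\tau$, because the best-scoring teacher embedding is now a negative.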


CIFAR-100, ResNet-50 teacher distilled into ResNet-18:

| Method | Test accuracy |
|---|---|
| Hard-label baseline | 73.3% |
| Vanilla response KD | 75.5% |
| + CRD | 76.7% (+1.2) |

The gains are largest when teacher and student have very different capacities — exactly the case where pointwise matching breaks down because the student cannot represent the teacher’s features even if it wanted to. CRD asks for a weaker thing (relative similarity) and gets more of it.

Bridge: But not all distillation runs converge — sometimes the loss flat-lines, or even diverges.

When Distillation Fails#

Distillation is a robust technique, not a magic one. Three failure modes show up often enough to be worth diagnosing explicitly.

Capacity gap. The student is too small to represent what the teacher knows. Symptom: the KL divergence between teacher and student predictions,

$$D_{\text{KL}}(p_t \,\|\, p_s) \;=\; \sum_c p_t(c) \log \frac{p_t(c)}{p_s(c)},$$

plateaus at a high value within the first 5-10 epochs and refuses to budge. Cross-entropy may continue to fall because the student is learning the argmax, but it is not absorbing the dark knowledge. A 100k-parameter student trying to imitate a 100M-parameter teacher will hit this almost immediately.

Modality mismatch. Teacher and student see different inputs: an RGB teacher distilling into a grayscale student, a high-resolution teacher into a low-resolution one, a cross-lingual setup with mismatched tokenisers. The teacher’s soft targets encode features the student has no access to, so $p_t$ becomes effectively noise from the student’s point of view. Symptom: KD loss is high and noisy, and the combined loss is worse than hard-label-only training.

Online distillation instability. In online or mutual-learning setups the teacher is updated concurrently with the student. The student is chasing a moving target, and if the teacher’s updates are large relative to the student’s, the KL trajectory oscillates instead of decaying. Symptom: epoch-to-epoch KL goes up and down by 20-30% with no downward trend.

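A simple diagnostic catches all three: log the KL divergence between teacher and student predictions on a fixed held-out batch once per epoch, and read its trajectory.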
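One way to implement that check is a plain-Python sketch over a list of per-epoch KL values. The thresholds (halving within 50 epochs, 20% swings) come from the text. The specific trend test (second-half mean at least 10% below the first-half mean) and the verdict names are assumptions.

```python
from statistics import mean


def diagnose_kl(kl_history, halve_within=50, swing=0.2):
    """Classify a per-epoch teacher-student KL trajectory.

    Returns "healthy", "oscillating" (suggests online-distillation instability),
    or "plateau" (suggests a capacity gap or a modality mismatch).
    """
    if len(kl_history) < 2:
        raise ValueError("need at least two epochs of KL values")
    first = kl_history[0]
    window = kl_history[: halve_within + 1]
    # Healthy: KL halves within the window and is still at or below half at the end
    if min(window) <= 0.5 * first and kl_history[-1] <= 0.5 * first:
        return "healthy"
    # Relative epoch-to-epoch changes, to spot large swings in both directions
    changes = [(b - a) / a for a, b in zip(kl_history, kl_history[1:]) if a > 0]
    swings_up = any(c >= swing for c in changes)
    swings_down = any(c <= -swing for c in changes)
    # Crude trend test (assumption): second half at least 10% below the first half
    half = len(kl_history) // 2
    trending_down = mean(kl_history[half:]) < 0.9 * mean(kl_history[:half])
    if swings_up and swings_down and not trending_down:
        return "oscillating"
    return "plateau"


print(diagnose_kl([2.0, 1.5, 1.2, 1.0, 0.9, 0.8]))            # healthy
print(diagnose_kl([2.0, 2.6, 1.9, 2.5, 1.8, 2.4, 1.9, 2.5]))  # oscillating
print(diagnose_kl([2.0, 1.8, 1.7, 1.65, 1.64, 1.64, 1.63]))   # plateau
```

A `"plateau"` verdict covers both the capacity gap and modality mismatch. To tell them apart, compare against a hard-label-only run: per the symptoms above, a modality mismatch makes the combined loss worse than hard labels alone.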

A healthy run halves its initial KL within roughly 50 epochs and continues drifting downward. If yours does not, the verdict points at the fix:

- Capacity: bump the student up one width or depth tier, or add intermediate-feature distillation so the student gets richer signal per parameter. If the student size is fixed, shrink the gap from the teacher’s side instead. Distil the teacher into an intermediate-size teacher assistant first, then distil that assistant into your target student (TA-KD).

- Modality mismatch: align inputs before distilling. Convert the teacher’s input space to match the student’s, or train a small adapter that maps student features into the teacher’s space and distil there.

- Instability: freeze the teacher periodically (update every $k$ student steps, not every step), drop the student’s learning rate, or warm up the KD weight $\alpha$ from 0 over the first few epochs.

The pattern across all three: the KL trajectory is a more sensitive instrument than test accuracy. It tells you whether the student is listening, before you find out whether it learned anything useful.

Bridge: With these diagnostics in hand, distillation moves from a hopeful loss term to an instrumented training procedure. The remaining questions are practical — how to pick hyperparameters, how far you can push compression, and how distillation interacts with the rest of the model-shrinking toolkit.

FAQ#

How do I pick the temperature?#

Start at $\tau = 4$. The more classes you have and the more similar they are to each other (e.g. fine-grained species classification), the higher you should go, up to $\tau = 20$ for ImageNet-scale problems. Grid-search $\{2, 4, 8, 12, 20\}$ on a held-out set.

How small can the student get?#

You can compress 4-10x with very little loss. Past 50x even distillation cannot save you — expect 5-10% drops. The general rule: distillation buys you the most when the student has just enough capacity to represent what the teacher knows but not enough to learn it from labels alone.

Why does self-distillation work at all?#

The student has the same capacity as the teacher, so where does the gain come from? Two reasons. (1) The soft targets are a stronger regulariser than one-hot labels, especially on small datasets. (2) Each generation lands in a slightly different basin of the loss landscape, so iterating is an implicit ensemble at zero inference cost. Expect 1-2% gains on CIFAR-100, with diminishing returns after 3 generations.

Can I use multiple teachers?#

Yes — average their soft outputs (uniformly or weighted by validation accuracy). This usually buys robustness more than headline accuracy, at the cost of training each teacher.
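Averaging teacher outputs takes one weighted sum per class. This sketch, with a hypothetical helper and made-up teacher predictions, supports both uniform weights and weights proportional to validation accuracy. Each input distribution should be taken at the same temperature.

```python
def ensemble_soft_targets(teacher_probs, weights=None):
    """Weighted average of teacher distributions (uniform if no weights are given)."""
    weights = weights or [1.0] * len(teacher_probs)
    total = sum(weights)
    num_classes = len(teacher_probs[0])
    return [sum(w * p[c] for w, p in zip(weights, teacher_probs)) / total
            for c in range(num_classes)]


teachers = [[0.7, 0.2, 0.1], [0.5, 0.3, 0.2]]  # made-up soft outputs for one example
print(ensemble_soft_targets(teachers))                      # uniform average
print(ensemble_soft_targets(teachers, weights=[0.9, 0.8]))  # weighted by validation accuracy
```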

Distil first or prune first?#

Both, in this order: train teacher -> prune to define the student architecture -> distil to recover accuracy -> quantise. Each step preserves more knowledge than doing them independently because every later step still has the teacher to lean on.

Summary#

Knowledge distillation is the art of teaching a small model to think like a big one:

- Soft labels carry inter-class structure that one-hot labels destroy. That structure is the dark knowledge.

- Temperature is a single scalar that controls how much of it the student sees.

- Feature and relation distillation push beyond logits, matching intermediate representations and pairwise geometry.

- Self-distillation works without a separate teacher and gives you a free ensemble.

- Stacked with pruning and quantisation, distillation enables 10-20x compression at around 1% accuracy cost.

Next: Part 6, Multi-Task Learning, where multiple tasks share parameters to improve generalisation and efficiency.

References#

- Hinton, Vinyals, Dean (2015). Distilling the Knowledge in a Neural Network. arXiv:1503.02531

- Romero et al. (2015). FitNets: Hints for Thin Deep Nets. ICLR. arXiv:1412.6550

- Zagoruyko & Komodakis (2017). Paying More Attention to Attention. ICLR. arXiv:1612.03928

- Park et al. (2019). Relational Knowledge Distillation. CVPR. arXiv:1904.05068

- Tian et al. (2020). Contrastive Representation Distillation. ICLR. arXiv:1910.10699

- Furlanello et al. (2018). Born-Again Neural Networks. ICML. arXiv:1805.04770

- Sanh et al. (2019). DistilBERT, a distilled version of BERT: smaller, faster, cheaper and lighter. arXiv:1910.01108

- Zhang et al. (2018). Deep Mutual Learning. CVPR. arXiv:1706.00384
