Transfer Learning (12): Industrial Applications and Best Practices

Series finale. A field guide to shipping transfer learning to production: when to use it, the end-to-end pipeline, compute and dollar economics, four landmark case studies, A/B testing, distribution-shift monitoring, and 12-month ROI.

A three-person team at a fintech startup shipped a fraud-detection model in two weeks that outperformed the previous system built by 12 engineers over 6 months. The secret? They fine-tuned a pretrained transformer on 5,000 labeled transactions instead of architecting a rule-based ensemble from scratch. The model caught 23% more fraud in the first month while cutting false positives in half. When their VP of Engineering asked why the old team took so long, the answer was simple: they didn’t have transfer learning.

This is the final part of the series. The previous eleven parts gave you the mechanics — pretraining, fine-tuning, domain adaptation, few-shot and zero-shot learning, distillation, multi-task learning, multimodality, parameter-efficient methods, continual learning, and cross-lingual transfer. This part is about the work that happens once the notebook closes: deciding whether to use transfer learning, how to thread it into a production pipeline, and how to know it is still working six months later.

Everything below is written from the perspective of a team that has to keep a model running, not one that has to publish a paper. The trade-offs are different. You will see more spreadsheets than equations, more monitoring dashboards than architecture diagrams, and more conversations with product managers than with conference reviewers. If your job is to ship and maintain models that create business value, this chapter is for you.

When Transfer Learning Is the Right Tool#

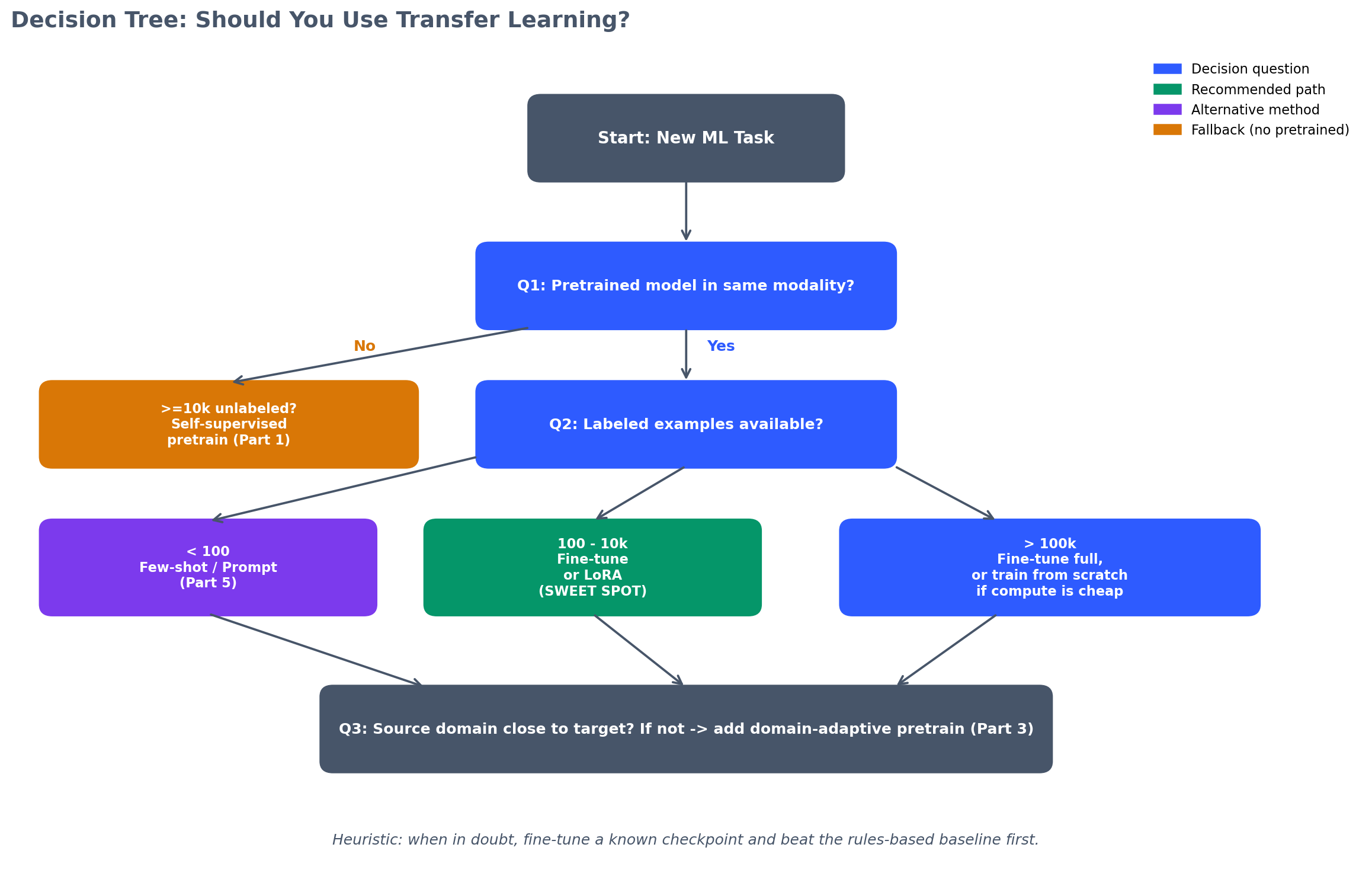

Transfer learning is not always the answer. Three questions determine whether it belongs in your stack:

1.1 Do you have enough labeled data to train from scratch?

If you have 100,000+ labeled examples evenly distributed across your output space, you can probably train from scratch and get competitive results. Transfer learning’s largest gains appear when labeled data is scarce (100–10,000 examples) or imbalanced.

A telecom company had 80,000 labeled customer-support tickets but wanted to add a new category (“5G troubleshooting”) with only 200 examples. Training from scratch on the full 80,200 examples produced 91% accuracy on the existing categories and 34% on the new one. Fine-tuning a BERT model pretrained on customer-service corpora gave 92% on existing categories and 78% on the new category within three epochs.

1.2 Is there a pretrained model whose source domain overlaps with yours?

A model pretrained on ImageNet (natural images) transfers well to medical X-rays (grayscale, structured anatomy) but poorly to satellite imagery (top-down perspective, different feature scales). Check Hugging Face, TensorFlow Hub, PyTorch Hub, and domain-specific repositories (e.g., BioBERT for biomedical text, Climate Change AI model zoo for environmental data).

If no pretrained model exists, you can still use self-supervised pretraining on your unlabeled data (Part 1 of this series), but that adds a training stage and requires more compute.

A logistics company wanted to predict package-delivery delays. No pretrained model existed for “logistics time-series,” but they had 10 million unlabeled shipment records. They pretrained a Transformer with masked time-series modeling (predicting missing time steps), then fine-tuned on 50,000 labeled delay events. The approach cut prediction error (MAE) by 18% compared to training an LSTM from scratch on the labeled set alone.

1.3 Do you have the engineering capacity to manage the complexity?

Transfer learning adds:

- Model selection overhead: comparing 5–10 pretrained checkpoints.

- Hyperparameter tuning: learning rate, layer freezing, warmup schedules.

- Version control: tracking both the pretrained weights and your fine-tuned deltas.

- Monitoring drift in the pretrained feature space (Section 8 ).

If your team is two people and you need a model deployed in a week, fine-tuning a well-known pretrained model is usually faster than training from scratch. If you are a single researcher with no MLOps support, training a smaller model from scratch may be simpler to debug.

A healthcare startup with one ML engineer chose to fine-tune BioBERT for clinical-note classification instead of training a custom LSTM. The pretrained model required only 2 hours of tuning on a single GPU and shipped to production in 4 days. Six months later, the same engineer added a second use case (medication extraction) by fine-tuning the same checkpoint on a different dataset in 3 hours.

Decision heuristic:

| Condition | Recommendation |

|---|---|

| < 1,000 labeled examples | Use transfer learning (few-shot or fine-tuning) |

| 1,000–10,000 labeled examples | Try both; transfer learning usually wins |

| 10,000–100,000 labeled examples | Transfer learning often still wins on speed and sample efficiency |

| > 100,000 labeled examples | Train from scratch if compute is cheap and you need full control; transfer learning if you want faster iteration |

| No relevant pretrained model | Self-supervised pretraining on your unlabeled data, or train from scratch |

| Team < 3 people | Use off-the-shelf pretrained models to save time |

| Deployment latency critical | Use distillation (Part 5) or parameter-efficient methods (Part 9) |

The End-to-End Transfer Learning Pipeline#

A production transfer learning system has six stages. Most tutorials cover stage 3 (fine-tuning) and ignore the other five.

Pretrained Model Selection#

Goal: Pick the checkpoint that minimizes downstream fine-tuning cost.

Process:

- Identify candidate models from public hubs (Hugging Face, TensorFlow Hub, PyTorch Hub, or domain-specific repositories).

- Filter by:

- Input modality (text, image, audio, multimodal).

- Architecture family (Transformer, ResNet, EfficientNet, etc.).

- Parameter count (latency budget).

- Pretraining data (closer to your domain = better transfer).

- Run a zero-shot or few-shot evaluation on a 100-example validation split.

- Pick the top 2–3 models for full fine-tuning.

A content-moderation team evaluated 8 pretrained vision models (ResNet-50, EfficientNet-B3, ViT-B/16, ConvNeXt-T, Swin-T, CLIP ViT-B/32, DINOv2, and a custom model pretrained on user-generated content). Zero-shot CLIP scored 68% accuracy; fine-tuned DINOv2 reached 94% after 1,000 labeled examples, beating the next-best model (Swin-T at 91%) while using 30% fewer parameters.

Common mistake: Picking the largest model without testing. A 1.5B-parameter model is not always better than a 300M-parameter model if the smaller one was pretrained on in-domain data.

Data Preparation#

Goal: Format your labeled data for the pretrained model’s input pipeline.

Checklist:

- Tokenization (text): use the exact tokenizer from the pretrained model. Mismatched vocabularies destroy performance.

- Image preprocessing (vision): match the normalization statistics (mean, std) used during pretraining. For ImageNet models, this is

mean=[0.485, 0.456, 0.406],std=[0.229, 0.224, 0.225]. - Sampling strategy: if your classes are imbalanced, oversample the minority class or use weighted loss (Part 2 ).

- Train/val/test split: hold out a test set that the model never sees during selection or tuning.

An e-commerce team fine-tuned a BERT model for product categorization. They initially used bert-base-uncased but tokenized text with a different library (spaCy instead of Hugging Face’s BertTokenizer). Accuracy was 67%. After switching to the correct tokenizer, accuracy jumped to 89% on the same data with the same hyperparameters. The issue: spaCy’s tokenization created out-of-vocabulary tokens that BERT mapped to [UNK], losing semantic information.

Fine-Tuning#

Goal: Adapt the pretrained model to your task with minimal overfitting.

Strategies (covered in Parts 2–4, 8):

- Full fine-tuning: update all parameters.

- Layer freezing: freeze early layers, train later layers.

- Parameter-efficient methods: LoRA, prefix tuning, adapters (Part 9 ).

Hyperparameters that matter most:

- Learning rate: 10x to 100x smaller than training from scratch. Typical range: $10^{-5}$ to $10^{-4}$ for Transformers, $10^{-4}$ to $10^{-3}$ for vision models.

- Warmup steps: 5–10% of total training steps.

- Epochs: 3–10 for full fine-tuning, 10–50 for parameter-efficient methods.

- Batch size: as large as GPU memory allows (use gradient accumulation if needed).

Validation strategy: Track validation loss and a task-specific metric (F1, AUC, BLEU, etc.). Stop when the metric plateaus for 2–3 epochs.

A sentiment-analysis pipeline fine-tuned RoBERTa on 5,000 product reviews. Initial experiments used lr=1e-3 (standard for training from scratch) and diverged after 50 steps. Reducing to lr=2e-5 with 300 warmup steps achieved 92% validation accuracy in 4 epochs. The team also experimented with LoRA (rank 16), which reached 91% accuracy with 100x fewer trainable parameters and allowed them to store 20 task-specific adapters instead of 20 full model copies.

Evaluation#

Goal: Measure performance on held-out data and edge cases.

Metrics:

- Aggregate metrics (accuracy, F1, AUC): compare to baseline and business requirements.

- Per-class metrics: identify which categories underperform.

- Slice-based evaluation (Part 4 ): test on subpopulations (e.g., different age groups, languages, image lighting conditions).

Checklist:

- Does the model beat the baseline (rules-based system, previous model, or training from scratch)?

- Does it meet the minimum acceptable performance threshold for production? (This is often higher than “beats baseline.”)

- Are there failure modes that create unacceptable business risk? (e.g., high false-positive rate on a rare but expensive class.)

A hiring platform built a resume-screening model by fine-tuning BERT. Aggregate F1 was 87%, beating the rule-based filter (F1 = 71%). But slice-based evaluation revealed the model had 61% recall on resumes from candidates who changed careers (non-linear work history) versus 94% recall on traditional linear resumes. The business required >= 80% recall on all slices. The team retrained with augmented examples of career-change resumes (synthesized by masking and replacing job titles), which lifted career-change recall to 83% while maintaining 88% aggregate F1.

Deployment#

Goal: Serve predictions in production with acceptable latency and cost.

Options:

- Real-time API (REST, gRPC): for user-facing applications (< 200ms latency).

- Batch inference: for offline scoring (daily recommendation updates, periodic re-ranking).

- Edge deployment: for on-device inference (mobile, IoT).

Optimization techniques:

- Quantization (Part 9 ): int8 or fp16 inference. Reduces memory by 2–4x and speeds up inference by 1.5–3x on most hardware.

- Model distillation (Part 5 ): train a smaller student model to mimic the fine-tuned teacher.

- ONNX or TensorRT export: compile the model for optimized inference.

- Batching: group requests to maximize GPU utilization.

A news app fine-tuned a 355M-parameter model for article recommendations. Latency on a single V100 GPU was 45ms per request, but the app required < 20ms to avoid user-perceived lag. The team:

- Quantized to int8 (latency dropped to 28ms, accuracy drop: 0.3%).

- Exported to ONNX and deployed on TensorRT (latency dropped to 16ms).

- Enabled request batching (max batch size 8), achieving 11ms average latency under production load.

Monitoring and Retraining#

Goal: Detect when the model degrades and decide when to retrain.

What to monitor (Section 8 has details):

- Prediction distribution: are output probabilities shifting?

- Input distribution: are feature statistics drifting?

- Business metrics: are click-through rates, conversion rates, or user satisfaction changing?

- Edge-case performance: is accuracy on rare slices dropping?

When to retrain:

- Scheduled (e.g., monthly, quarterly).

- Triggered by drift detection.

- Triggered by business-metric degradation.

A loan-approval model was retrained quarterly. Six months after launch, approval rates dropped from 68% to 52% even though the model’s validation accuracy remained at 91%. Investigation revealed that applicants’ income distributions had shifted (median income increased by 12% due to macroeconomic changes), but the model’s decision boundary was calibrated to the original distribution. Retraining on the last 3 months of data restored approval rates to 66% while maintaining risk-adjusted returns.

Compute and Cost Economics#

Transfer learning’s value proposition is speed and sample efficiency, but it still costs money. Below are benchmarks from real projects.

Compute Costs#

Fine-tuning a pretrained model (NLP, 110M parameters, 10,000 examples, 5 epochs):

- Hardware: 1x V100 (16GB).

- Time: 2 hours.

- Cloud cost (AWS p3.2xlarge): $6.12.

Training from scratch (same architecture, same data, 50 epochs to converge):

- Hardware: 1x V100.

- Time: 18 hours.

- Cloud cost: $55.08.

Fine-tuning with LoRA (same setup, rank 16):

- Time: 1.5 hours.

- Cloud cost: $4.59.

- Storage overhead: 2MB per task (versus 440MB for full model).

Takeaway: Fine-tuning costs 10–20x less than training from scratch in compute time. Parameter-efficient methods cut that by another 20–30% and make multi-task storage practical.

A marketing-tech company maintained 40 different text-classification models (one per customer vertical). Full fine-tuning required 40 x 440MB = 17.6GB of storage and $6 x 40 = $ 240 to retrain all models. Switching to LoRA reduced storage to 40 x 2MB = 80MB and retraining cost to $4.59 x 40 = $ 183.60, a 99.5% storage reduction and 23% cost reduction.

Labeling Costs#

Transfer learning reduces the number of labeled examples needed, which cuts annotation costs.

Example (image classification, 20 classes):

- Training from scratch: Requires ~1,000 examples/class = 20,000 total.

- Annotation cost (Mechanical Turk, $0.05/label): $ 1,000.

- Fine-tuning a pretrained model: Requires ~100 examples/class = 2,000 total.

- Annotation cost: $100.

Example (NER, medical documents, domain experts at $50/hour):

- Training from scratch: 10,000 documents, 15 minutes/document = 2,500 hours.

- Annotation cost: $125,000.

- Fine-tuning BioBERT with active learning: 1,200 documents selected by uncertainty sampling.

- Annotation cost: $15,000.

- Savings: $110,000.

A pharmaceutical company used this approach to build a drug-interaction extraction system. The active-learning loop queried annotators for the 1,200 most-uncertain examples over 6 iterations. The final model (fine-tuned BioBERT) achieved 89% F1, matching the performance of a from-scratch model trained on 8,000 examples in a prior project that cost $100,000 to label.

Engineering Time#

Transfer learning’s largest cost is often human time for experimentation.

Typical breakdown (4-week project, 2 engineers):

- Week 1: Model selection and zero-shot evaluation (20 hours).

- Week 2: Data preparation and first fine-tuning experiments (30 hours).

- Week 3: Hyperparameter tuning and slice-based evaluation (25 hours).

- Week 4: Deployment, monitoring setup, documentation (25 hours).

- Total: 100 hours = $20,000 (at $ 200/hour blended rate).

From-scratch baseline (12-week project, same team):

- Weeks 1–4: Architecture design, baseline experiments (80 hours).

- Weeks 5–8: Training at scale, debugging (100 hours).

- Weeks 9–12: Evaluation, deployment (60 hours).

- Total: 240 hours = $48,000.

Savings: $28,000 and 8 weeks of calendar time.

These numbers are conservative. In practice, from-scratch projects often take longer because architecture choices are less certain. One autonomous-vehicle startup spent 6 months building a custom object-detection architecture before realizing that fine-tuning YOLOv8 on their labeled driving data outperformed it in 3 weeks.

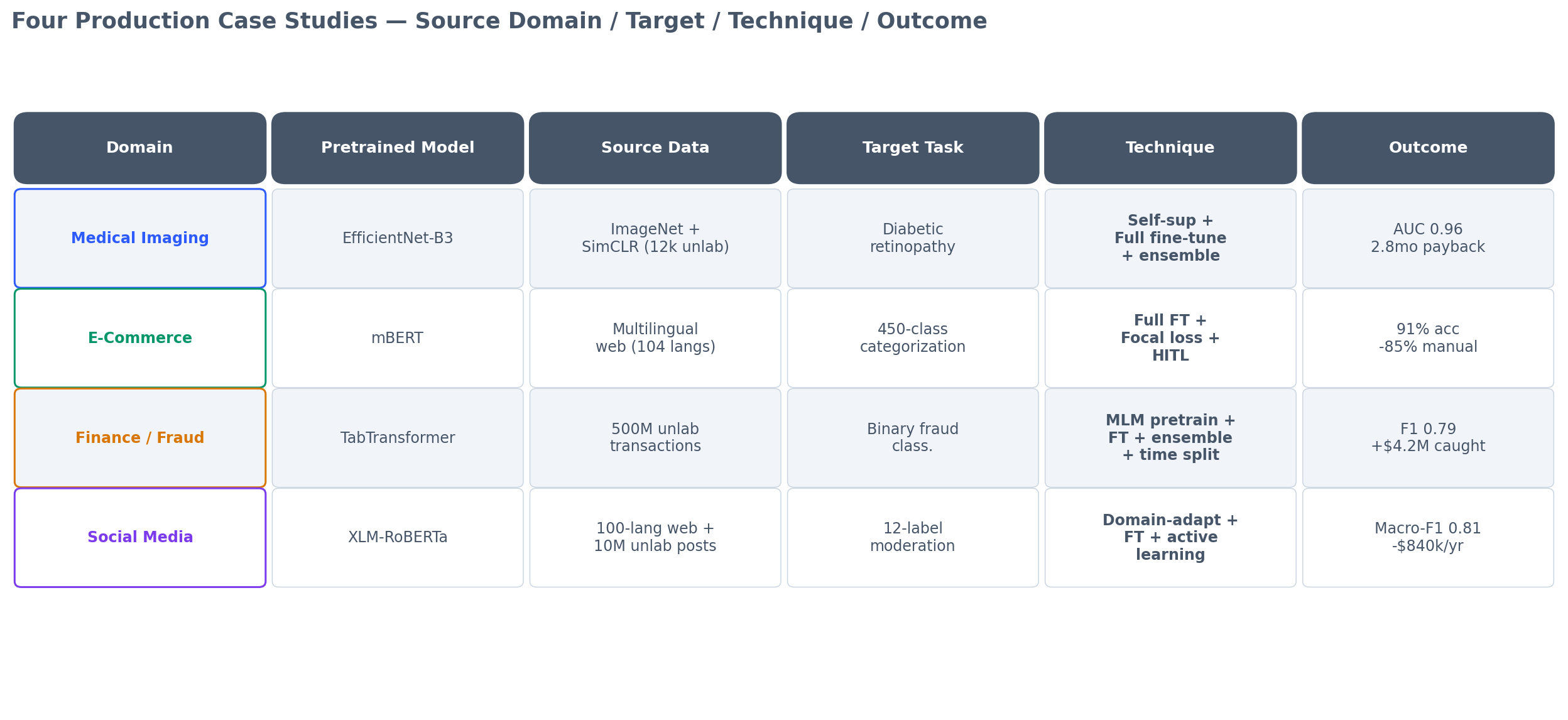

Case Studies#

Below are four real-world deployments (anonymized). Each shows a different facet of transfer learning in production.

Medical Imaging: Diabetic Retinopathy Detection#

Organization: Regional hospital network (Southeast Asia). Task: Binary classification (referable diabetic retinopathy: yes/no) from fundus photographs. Data: 3,500 labeled images (1,800 positive, 1,700 negative); 12,000 unlabeled images. Baseline: Ophthalmologist screening (sensitivity 91%, specificity 88%, cost $45/screening).

Approach:

- Pretrained model: EfficientNet-B3 (ImageNet weights).

- Self-supervised pretraining: SimCLR on the 12,000 unlabeled fundus images for 200 epochs (Part 1 ).

- Fine-tuning: Full fine-tuning on 3,500 labeled images for 30 epochs with heavy augmentation (random crops, color jitter, horizontal flips).

- Ensembling: Average predictions from 5 models trained with different random seeds.

Technical details that made it work:

- Class balancing: Oversampled positive cases 1.5x to account for slight imbalance.

- Augmentation tuning: Standard ImageNet augmentation hurt performance (fundus images have specific anatomical structure). Custom augmentation preserved the optic disc location while varying brightness and contrast, which simulated real acquisition variance.

- Calibration: Raw model outputs were poorly calibrated (predicted probabilities did not match true frequencies). Applied temperature scaling on a 500-image calibration set, which improved ECE from 0.14 to 0.03.

- Domain-specific thresholding: Instead of using 0.5 as the decision threshold, optimized for sensitivity >= 95% at the cost of specificity (healthcare context prioritized catching all positive cases). Final threshold: 0.32.

Results:

- Sensitivity: 96%, specificity: 84%, AUC: 0.96.

- Cost: $2/screening (cloud inference).

- Deployment: Processed 18,000 screenings in the first 6 months, flagging 2,400 for ophthalmologist review (versus 18,000 manual reviews previously).

- ROI: Saved $774,000 in ophthalmologist time ($ 45 x 15,600 screenings no longer requiring manual review) against $120,000 in ML development, cloud costs, and integration. Payback period: 2.8 months.

Key lesson: Self-supervised pretraining on unlabeled in-domain data (fundus images) was critical. A model fine-tuned directly from ImageNet weights achieved 93% sensitivity; adding SimCLR pretraining lifted it to 96%, crossing the clinical acceptability threshold.

E-Commerce: Product Categorization#

Organization: Mid-size online marketplace (Latin America). Task: Multi-class classification (450 product categories). Data: 80,000 labeled product titles + descriptions; 2 million unlabeled listings. Baseline: Keyword-based rules (72% accuracy, maintained by 3 ops analysts).

Approach:

- Pretrained model: mBERT (multilingual BERT, pretrained on 104 languages).

- Fine-tuning: Full fine-tuning on 80,000 labeled examples for 4 epochs.

- Active learning: Retrained monthly, prioritizing labeling for products the model was uncertain about (entropy > 1.5).

Technical details:

- Multilingual challenge: 60% of listings were in Spanish, 30% Portuguese, 10% English. mBERT handled code-switching (mixed-language titles) better than monolingual models.

- Long-tail categories: 180 of the 450 categories had < 50 examples. Standard cross-entropy loss gave 34% accuracy on these. Switching to focal loss (focusing on hard examples) improved long-tail accuracy to 61% while maintaining 94% on frequent categories.

- Inference optimization: Deployed as a real-time API (< 100ms latency required). Used ONNX Runtime with int8 quantization, achieving 68ms p95 latency on CPU (AWS c5.2xlarge).

- Human-in-the-loop: For predictions with max softmax probability < 0.7, the system routed to human review (15% of traffic). This maintained 98% end-to-end accuracy while requiring 85% fewer manual reviews than the baseline.

Results:

- Accuracy: 91% (versus 72% baseline).

- Reduced manual categorization workload by 85%.

- Saved 2.5 FTE ops analysts ($90,000/year).

- Increased correctly categorized listings by 26%, improving search relevance and conversion rates by an estimated 4% (A/B tested, Section 7 ).

Key lesson: Focal loss and human-in-the-loop routing were essential for handling long-tail categories. A naive fine-tuned model optimized for aggregate accuracy would have failed on rare categories, creating poor user experience.

Finance: Transaction Fraud Detection#

Organization: Payment processor (North America). Task: Binary classification (fraudulent transaction: yes/no). Data: 5 million labeled transactions (0.8% fraud rate); 500 million unlabeled transactions. Baseline: Gradient-boosted trees (XGBoost) with 120 hand-engineered features (F1 = 0.68, precision = 0.54, recall = 0.89).

Approach:

- Pretrained model: TabTransformer (Transformer for tabular data, pretrained with self-supervised masking on 500M unlabeled transactions).

- Fine-tuning: Full fine-tuning on 5M labeled examples with class weights (fraud class weighted 100x).

- Ensemble: Stacked ensemble of fine-tuned TabTransformer + XGBoost (meta-learner: logistic regression).

Technical details:

- Feature engineering elimination: The TabTransformer learned representations directly from raw categorical and numerical features (merchant ID, transaction amount, time, location, etc.), eliminating 80% of the feature-engineering pipeline.

- Imbalanced data: With 0.8% fraud rate, standard training collapsed to predicting “not fraud” for everything. Used a combination of oversampling (SMOTE on fraud cases) and focal loss (gamma=2).

- Temporal validation: Standard random train/test split was too optimistic (data leakage from temporally correlated fraud patterns). Switched to time-based split (train on months 1–10, validate on month 11, test on month 12), which better reflected production performance.

- Concept drift handling: Fraud patterns evolved weekly. Implemented a retraining schedule (every 2 weeks) with model versioning and A/B testing before rollout.

Results:

- F1: 0.79 (versus 0.68 baseline, +16%).

- Precision: 0.71 (versus 0.54, +31%, reducing false-positive customer friction).

- Recall: 0.89 (maintained).

- Caught an additional $4.2M in fraud over 6 months.

- Reduced false declines by 28%, improving customer satisfaction (measured by post-decline survey NPS).

Key lesson: Temporal validation prevented overfitting to time-specific patterns. Initial experiments with random splits showed F1 = 0.84 on the test set, but production F1 was 0.61 (catastrophic). Switching to time-based validation gave honest performance estimates and saved the project from a failed launch.

Social Media: Content Moderation#

Organization: Regional social network (Middle East, 40M users). Task: Multi-label classification (hate speech, violence, spam, sexual content, etc.; 12 labels). Data: 50,000 labeled posts (Arabic + English code-switching); 10 million unlabeled posts. Baseline: Outsourced human moderation ($1.2M/year).

Approach:

- Pretrained model: XLM-RoBERTa (cross-lingual RoBERTa, pretrained on 100 languages).

- Self-supervised pretraining: Continued MLM pretraining on 10M unlabeled in-domain posts for 50,000 steps (Part 1 ).

- Fine-tuning: Multi-label classification head, trained for 10 epochs with binary cross-entropy loss.

- Active learning: Weekly retraining, querying human moderators for high-uncertainty cases.

Technical details:

- Code-switching: 35% of posts mixed Arabic and English in a single sentence. XLM-RoBERTa’s cross-lingual embeddings handled this naturally, while monolingual models failed (Arabic BERT: 67% F1, English RoBERTa: 61% F1, XLM-RoBERTa: 83% F1).

- Multi-label threshold tuning: Each label had a different optimal decision threshold (spam: 0.3, hate speech: 0.6, etc.). Used a validation set to tune per-label thresholds via grid search.

- Adversarial robustness: Malicious users deliberately misspelled words to evade detection (e.g., “h@te” instead of “hate”). Augmented training data with character-level perturbations (random insertions, deletions, substitutions) to improve robustness.

- Latency: Required < 50ms inference for real-time moderation. Used ONNX + TensorRT on T4 GPUs, achieving 32ms p95 latency.

Results:

- Macro-F1: 0.81 (weighted average across 12 labels).

- Automated 70% of moderation decisions, reducing human workload by $840,000/year.

- Reduced average moderation time from 18 hours (queue backlog) to 2 minutes (real-time flagging + human review).

- Improved user-reported content-quality scores by 22% (internal survey).

Key lesson: Continued pretraining on in-domain unlabeled data (code-switched social media posts) was more valuable than using the off-the-shelf XLM-RoBERTa checkpoint directly. The domain adaptation step (Part 3 ) improved F1 from 0.74 to 0.81, making the difference between a marginal and a transformative deployment.

When Transfer Learning Fails (and What to Do)#

Not all transfer learning projects succeed. Common failure modes:

5.1 Negative Transfer

The pretrained model hurts performance compared to training from scratch.

Cause: Source and target domains are too dissimilar. The pretrained features mislead the fine-tuning process.

Example: Fine-tuning an ImageNet-pretrained ResNet for galaxy morphology classification (astronomy images). ImageNet features encode object boundaries and textures; galaxies are diffuse, low-contrast structures. Performance was worse than a randomly initialized ResNet.

Fix: Use self-supervised pretraining on in-domain unlabeled data (Part 1 ), or train from scratch.

5.2 Catastrophic Forgetting

Fine-tuning destroys the pretrained model’s general knowledge, making it overfit to the small target dataset.

Cause: Learning rate too high, or too many fine-tuning epochs.

Example: Fine-tuning GPT-2 on 500 customer-support conversations for 50 epochs with lr=1e-3. The model memorized the 500 examples and lost its language-modeling ability (perplexity on a held-out general corpus increased from 22 to 890).

Fix: Lower learning rate (1e-5 to 1e-4), fewer epochs (3–10), or use parameter-efficient methods (LoRA, adapters) to preserve the base model.

5.3 Data Leakage

The pretrained model was trained on data that overlaps with your test set, inflating performance estimates.

Cause: Large web-scraped pretraining datasets (e.g., LAION-5B, Common Crawl) may contain your evaluation benchmarks.

Example: A team fine-tuned CLIP for a proprietary image-retrieval task and reported 96% accuracy. Later discovered that 15% of their test images were in LAION-5B (CLIP’s pretraining set). True performance on non-leaked data was 81%.

Fix: Check for data overlap using embedding-based near-duplicate detection. Create held-out test sets from data collected after the pretrained model’s release date.

5.4 Distribution Shift After Deployment

The model performs well at launch but degrades over time as the input distribution drifts.

Example: A job-recommendation model fine-tuned on 2019–2020 resumes performed well in 2020 (F1 = 0.88) but dropped to F1 = 0.72 by mid-2021 as remote-work trends changed resume language and job descriptions.

Fix: Monitor input and output distributions (Section 8 ). Retrain periodically or use continual learning (Part 10) to adapt without forgetting.

Common Mistakes That Kill Transfer Learning Projects#

Beyond the technical failure modes above, organizational and process mistakes often doom projects before they launch. Here are the top five:

Mistake 1: Skipping the Baseline

Team spends 6 weeks fine-tuning a state-of-the-art model, achieves 87% accuracy, ships to production, then discovers a simple rule-based system would have given 91% accuracy.

Why it happens: Transfer learning is exciting; writing if statements is boring. But the boring solution often wins.

Fix: Always implement the simplest possible baseline first (rules, logistic regression, small decision tree). If transfer learning beats it by < 5%, question whether the added complexity is worth it.

Mistake 2: Ignoring Inference Cost

Model achieves great offline metrics, but production inference costs $0.15 per prediction. The product can’t afford it and the model is never deployed.

Fix: Calculate cost per prediction x predicted request volume during the design phase. If a fine-tuned BERT model costs $0.02/prediction and you expect 10M predictions/month, that's $ 200,000/month. Could a distilled or quantized version serve 90% of requests at $0.001/prediction?

Mistake 3: Tuning on the Test Set

Team iterates on hyperparameters while checking test-set performance. Test accuracy reaches 94%. Production accuracy is 79%.

Fix: Treat the test set as write-only. Evaluate on it exactly once, after all decisions are finalized. Use a separate validation set for tuning.

Mistake 4: Shipping Without Monitoring

Model is deployed, works great for 2 months, then silently degrades. Six months later, a major incident reveals it’s been underperforming for months.

Fix: Monitoring is not optional. At minimum, track: (1) prediction distribution over time, (2) input feature distributions, (3) business metric (CTR, conversion rate, etc.). Set up alerts for anomalies.

Mistake 5: Overcomplicating the Architecture

Team fine-tunes a pretrained model, but “enhances” it with custom attention layers, extra auxiliary tasks, multi-stage training, and ensemble tricks. The final system requires 14 steps to train and 3 people to maintain.

Fix: Resist the urge to add complexity unless it delivers a large, measurable gain. A video-classification team started with a fine-tuned TimeSformer (F1 = 0.83). They added optical flow (+0.01), audio embeddings (+0.02), temporal ensembling (+0.01), and TTA (+0.01). Final F1: 0.88 — but training time went from 4 hours to 19, and deployment required 3 separate inference pipelines. They shipped the base model and invested saved engineering time in labeling more data instead.

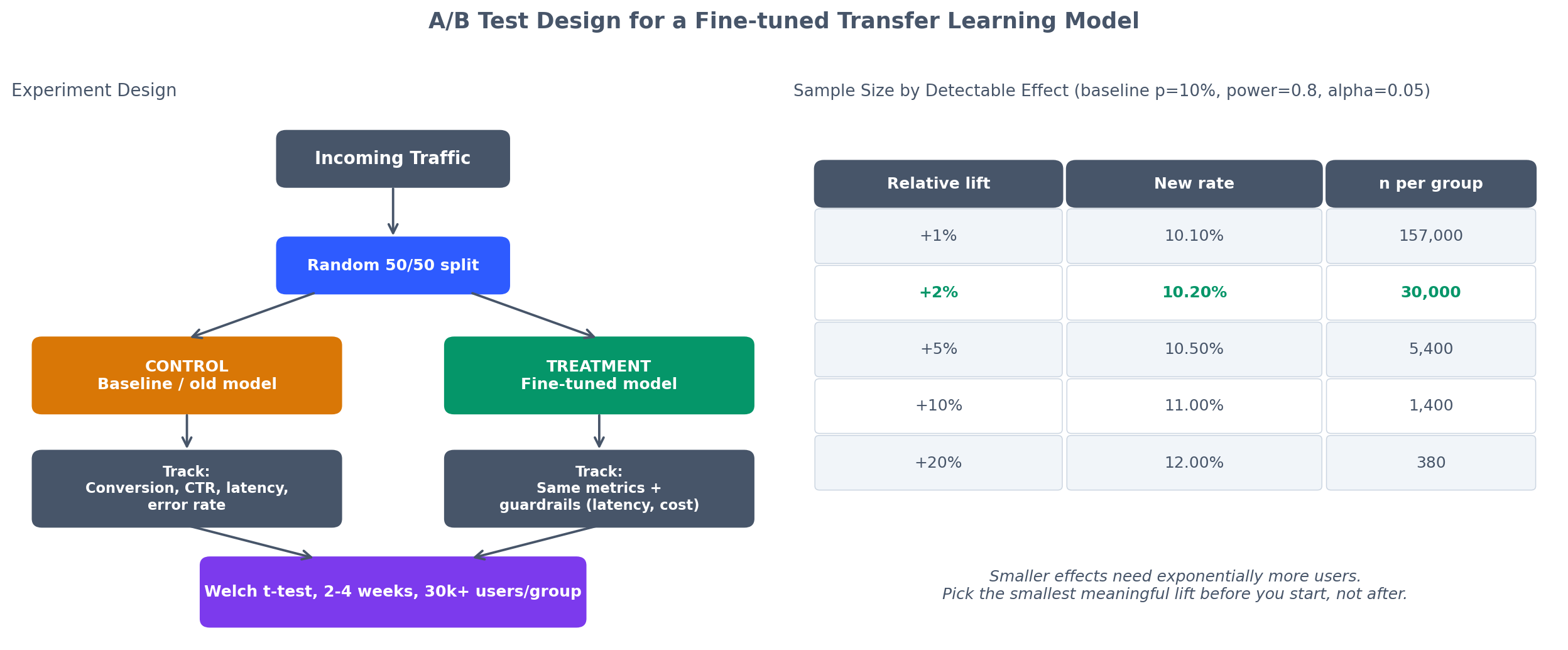

A/B Testing and Evaluation in Production#

Offline metrics (accuracy, F1, AUC) are necessary but not sufficient. The model must improve business outcomes.

Designing the A/B Test#

Goal: Compare the new model (fine-tuned) against the baseline (existing system or no-model control).

Setup:

- Randomly assign users (or requests) to treatment (new model) or control (baseline).

- Run for 2–4 weeks to collect statistically significant data.

- Track business metrics (revenue, engagement, conversion rate, etc.) and guardrail metrics (latency, error rate, user complaints).

Sample size calculation:

$$n = \frac{2 (Z_{\alpha/2} + Z_\beta)^2 \bar{p} (1 - \bar{p})}{(\Delta p)^2}$$where $\bar{p} = 0.10$ , $\Delta p = 0.002$ , $Z_{\alpha/2} = 1.96$ , $Z_\beta = 0.84$ . This gives $n \approx 30{,}000$ users per group.

Iterating Based on User Feedback#

A/B tests measure what happened, but user feedback explains why.

Methods:

- Surveys: Ask users in the treatment group if they noticed a difference.

- Session replay: Watch how users interact with the model’s outputs.

- Support tickets: Track if the new model generates more complaints.

Monitoring and Maintaining Production Models#

A deployed model is not a static artifact. Inputs drift, user behavior changes, and upstream systems evolve. Monitoring detects problems before they become incidents.

What to Monitor#

1. Prediction distribution: Track the distribution of predicted classes or values. Alert if it shifts significantly from the baseline distribution.

2. Input distribution: Monitor feature statistics (mean, variance, quantiles) for drift. Use KL divergence or Population Stability Index (PSI) for categorical features.

3. Model confidence: Track the distribution of predicted probabilities. If high-confidence predictions drop from 60% to 30% of traffic, the model is encountering unfamiliar inputs.

4. Business metrics: Track the downstream impact (revenue, conversions, user satisfaction). A stable model accuracy with declining business metrics means the model is optimizing for the wrong thing.

When to Retrain#

Hybrid approach (recommended):

- Retrain on a schedule (e.g., quarterly).

- Add drift detection to trigger early retraining if needed.

- Use the PSI threshold of 0.25 as a “retrain now” signal.

Return on Investment (ROI)#

Leadership cares about business impact, not validation loss.

Cost Categories#

| Category | One-time | Recurring |

|---|---|---|

| Data labeling | $X | - |

| Model development (engineering hours) | $Y | - |

| Compute (training) | $Z | - |

| Compute (inference) | - | $A/month |

| Monitoring and maintenance | - | $B/month |

Example Calculation (Customer Support Chatbot)#

Costs:

- Data labeling (10,000 Q&A pairs): $15,000.

- Engineering (6 weeks, 2 engineers): $48,000.

- Compute (fine-tuning): $500.

- Inference (100,000 requests/month): $200/month.

- Maintenance (10 hours/month): $2,000/month.

Total first-year cost: $15,000 + $ 48,000 + $500 + ($ 200 + $2,000) x 12 = $ 89,900.

Benefits:

- Automated 40% of support tickets (20,000 tickets/month).

- Each ticket costs $8 in agent time.

- Savings: 20,000 x 0.4 x $8 = $ 64,000/month = $768,000/year.

ROI: ($768,000 - $ 89,900) / $89,900 = 754%. Payback period: 1.4 months.

Practical Q&A#

Q: Should I fine-tune all layers or freeze the early ones?

Depends on dataset size and domain similarity:

- < 1,000 examples, similar domain: Freeze all but the last 2–3 layers.

- 1,000–10,000 examples, similar domain: Freeze the first 50% of layers.

10,000 examples, or different domain: Fine-tune all layers with a small learning rate.

Q: How do I pick the learning rate?

Use the learning rate finder (Smith, 2017): train for 100–200 steps with exponentially increasing LR from $10^{-7}$ to $10^{-1}$ . Plot loss vs. LR. Pick the steepest descent point (usually 10x smaller than the minimum-loss point). For most Transformer fine-tuning, $10^{-5}$ to $10^{-4}$ works well.

Q: Can I fine-tune a model on multiple tasks simultaneously?

Yes (multi-task learning, Part 6 ). Use a shared backbone with task-specific heads, or train a single head with mixed task data. Trade-off: multi-task learning can improve data efficiency but may hurt individual task performance if tasks conflict.

Q: My fine-tuned model is too large for production. What do I do?

Three options:

- Quantization: int8 or fp16 (2–4x smaller, < 1% accuracy loss).

- Distillation: Train a smaller student (10x smaller, ~5% accuracy loss).

- Parameter-efficient methods: Store only LoRA adapters (1–2% of model size).

Q: How often should I retrain?

Depends on domain velocity:

- Static domain (medical imaging): Retrain yearly.

- Slowly evolving (e-commerce): Retrain quarterly.

- Fast-changing (social media, fraud): Retrain monthly or weekly.

Set up drift monitoring to detect when retraining is needed.

Q: What if no pretrained model exists for my domain?

Two options:

- Self-supervised pretraining on your unlabeled data (worth it if > 10,000 unlabeled examples).

- Train from scratch (if > 100,000 labeled examples and sufficient compute).

Q: How do I handle imbalanced classes?

Three strategies in order:

- Class weights in the loss function (simplest).

- Focal loss (auto-focuses on hard examples).

- Resampling (oversample minority or undersample majority).

Q: Can I use transfer learning incrementally as new data arrives?

Yes (continual learning, Part 10 ). Key challenges: catastrophic forgetting and data imbalance. Solutions: experience replay, EWC, or parameter-efficient methods that add new knowledge without overwriting old weights.

Summary#

Transfer learning is not a research technique waiting for production. It is already the default in most applied ML. The question is not “should we use transfer learning?” but “which pretrained model, how much fine-tuning, and how do we keep it running?”

The economics are clear: transfer learning cuts development time by 50–80%, reduces labeled-data requirements by 10x, and often improves performance. But it is not automatic. You still need to understand your data, choose the right architecture, tune hyperparameters, test rigorously, monitor continuously, and retrain when distributions shift.

The 12-part series ends here. You now have the full toolkit: pretraining strategies (Part 1 ), fine-tuning techniques (Parts 2–4 ), advanced methods (Parts 5–11), and this final part on production deployment. The rest is execution.

If you take one idea from this series, let it be this: transfer learning is not about using someone else’s model. It is about using someone else’s model as a starting point and making it yours. The pretrained weights are raw material. Your data, your task, your evaluation, your deployment, and your monitoring turn them into a product.

Good luck shipping.

Transfer Learning 12 parts

- 01 Transfer Learning (1): Fundamentals and Core Concepts

- 02 Transfer Learning (2): Pre-training and Fine-tuning

- 03 Transfer Learning (3): Domain Adaptation

- 04 Transfer Learning (4): Few-Shot Learning

- 05 Transfer Learning (5): Knowledge Distillation

- 06 Transfer Learning (6): Multi-Task Learning

- 07 Transfer Learning (7): Zero-Shot Learning

- 08 Transfer Learning (8): Multimodal Transfer

- 09 Transfer Learning (9): Parameter-Efficient Fine-Tuning

- 10 Transfer Learning (10): Continual Learning

- 11 Transfer Learning (11): Cross-Lingual Transfer

- 12 Transfer Learning (12): Industrial Applications and Best Practices you are here